modelId (string, lengths 4 to 81) | tags (list) | pipeline_tag (string, 17 classes) | config (dict) | downloads (int64, 0 to 59.7M) | first_commit (timestamp[ns, tz=UTC]) | card (string, lengths 51 to 438k) |

|---|---|---|---|---|---|---|

AnonymousSub/SR_bert-base-uncased | [

"pytorch",

"bert",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"BertModel"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 3 | null | ---

language: en

thumbnail: https://github.com/borisdayma/huggingtweets/blob/master/img/logo.png?raw=true

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1421008625095647234/Vfg52xtV_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Buddha</div>

<div style="text-align: center; font-size: 14px;">@thebuddha_3</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Buddha.

| Data | Buddha |

| --- | --- |

| Tweets downloaded | 3200 |

| Retweets | 138 |

| Short tweets | 695 |

| Tweets kept | 2367 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/14lqj1g8/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.
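The counts in the table are consistent with a simple two-step filter: dropping retweets and short tweets from the downloaded set leaves exactly the number of tweets kept. The sketch below only checks that arithmetic; reading the numbers this way is an inference from the table, not a documented description of the huggingtweets preprocessing.

```python
# Counts copied from the training data table above.
downloaded, retweets, short_tweets, kept = 3200, 138, 695, 2367

# Assumption: "Tweets kept" is what remains once retweets and
# short tweets are removed from the downloaded set.
remaining = downloaded - retweets - short_tweets
print(remaining, remaining == kept)
```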

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @thebuddha_3's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/3rpocant) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/3rpocant/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',
                     model='huggingtweets/thebuddha_3')

generator("My dream is", num_return_sequences=5)

```
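The text-generation pipeline returns a list of dictionaries, each with a `generated_text` entry. A minimal sketch of collecting just the strings is shown below; `extract_texts` is a hypothetical helper (not part of `transformers`), and the sample list holds placeholder strings rather than real model output.

```python
from typing import Dict, List


def extract_texts(outputs: List[Dict[str, str]]) -> List[str]:
    """Return the generated strings with surrounding whitespace removed."""
    return [item["generated_text"].strip() for item in outputs]


# Placeholder data shaped like the pipeline's return value;
# these strings are NOT real generations from the model.
sample_outputs = [
    {"generated_text": "My dream is <placeholder 1>"},
    {"generated_text": "My dream is <placeholder 2>\n"},
]

for text in extract_texts(sample_outputs):
    print(text)
```

In practice the list returned by `generator(...)` would be passed in place of `sample_outputs`.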

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the [project repository](https://github.com/borisdayma/huggingtweets).

|

AnonymousSub/SR_cline | [

"pytorch",

"roberta",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 6 | null | ---

language: en

thumbnail: https://github.com/borisdayma/huggingtweets/blob/master/img/logo.png?raw=true

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1396839225249734657/GG6ve7Qv_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1542608466077855744/a0q2rR-P_400x400.png')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1529675700772302848/uXtYNx_v_400x400.jpg')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI CYBORG 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">h b & very tall bart & ppigg</div>

<div style="text-align: center; font-size: 14px;">@h3xenbrenner2-s4m31p4n-tallbart</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from h b & very tall bart & ppigg.

| Data | h b | very tall bart | ppigg |

| --- | --- | --- | --- |

| Tweets downloaded | 1230 | 3194 | 3008 |

| Retweets | 75 | 381 | 957 |

| Short tweets | 155 | 569 | 643 |

| Tweets kept | 1000 | 2244 | 1408 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/34qe4a18/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @h3xenbrenner2-s4m31p4n-tallbart's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/kg3j88xz) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/kg3j88xz/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',
                     model='huggingtweets/h3xenbrenner2-s4m31p4n-tallbart')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the [project repository](https://github.com/borisdayma/huggingtweets).

|

AnonymousSub/SR_declutr | [

"pytorch",

"roberta",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 6 | null | ---

language: en

thumbnail: http://www.huggingtweets.com/finessafudges-h3xenbrenner2-tallbart/1667781477683/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1396839225249734657/GG6ve7Qv_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1542608466077855744/a0q2rR-P_400x400.png')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1577220932648816642/T4NDjEbG_400x400.jpg')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI CYBORG 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">h b & very tall bart & Finessa Fudges</div>

<div style="text-align: center; font-size: 14px;">@finessafudges-h3xenbrenner2-tallbart</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from h b & very tall bart & Finessa Fudges.

| Data | h b | very tall bart | Finessa Fudges |

| --- | --- | --- | --- |

| Tweets downloaded | 1230 | 3194 | 3079 |

| Retweets | 75 | 381 | 308 |

| Short tweets | 155 | 569 | 814 |

| Tweets kept | 1000 | 2244 | 1957 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/5vdgcc4y/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @finessafudges-h3xenbrenner2-tallbart's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/3cqh8hdr) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/3cqh8hdr/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',
                     model='huggingtweets/finessafudges-h3xenbrenner2-tallbart')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the [project repository](https://github.com/borisdayma/huggingtweets).

|

AnonymousSub/SR_rule_based_hier_quadruplet_epochs_1_shard_1 | [

"pytorch",

"bert",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"BertModel"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 1 | null | ---

license: mit

---

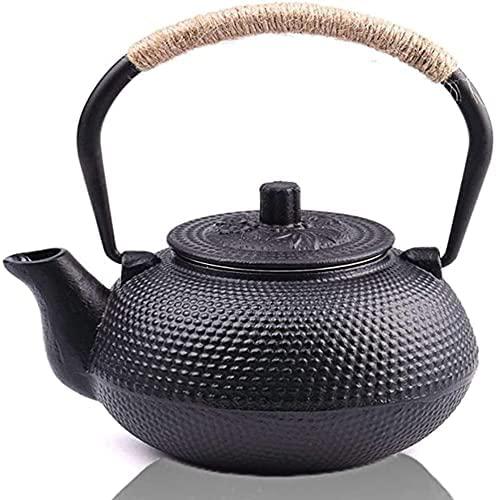

### EttBlackTeapot on Stable Diffusion

This is the `<my-teapot>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

AnonymousSub/SR_rule_based_only_classfn_twostage_epochs_1_shard_1 | [

"pytorch",

"bert",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"BertModel"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 2 | null | ---

license: cc-by-nc-sa-4.0

tags:

- generated_from_trainer

datasets:

- wild_receipt

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: OCR-LayoutLMv3-Invoice

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: wild_receipt

type: wild_receipt

config: WildReceipt

split: train

args: WildReceipt

metrics:

- name: Precision

type: precision

value: 0.8765398302764851

- name: Recall

type: recall

value: 0.8812439796339617

- name: F1

type: f1

value: 0.8788856103753516

- name: Accuracy

type: accuracy

value: 0.92678512668641

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# OCR-LayoutLMv3-Invoice

This model is a fine-tuned version of [microsoft/layoutlmv3-base](https://huggingface.co/microsoft/layoutlmv3-base) on the wild_receipt dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3159

- Precision: 0.8765

- Recall: 0.8812

- F1: 0.8789

- Accuracy: 0.9268
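As a quick consistency check, the reported F1 can be recomputed as the harmonic mean of precision and recall. The sketch below uses the unrounded values from the card metadata and assumes the standard F1 definition; it says nothing about how the evaluation script aggregates entities.

```python
# Unrounded values from the model-index metadata of this card.
precision = 0.8765398302764851
recall = 0.8812439796339617
reported_f1 = 0.8788856103753516

# Standard F1: harmonic mean of precision and recall.
f1 = 2 * precision * recall / (precision + recall)
print(round(f1, 4), abs(f1 - reported_f1) < 1e-6)
```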

## Model description

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 6000

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 0.16 | 100 | 1.5032 | 0.4934 | 0.1444 | 0.2234 | 0.6064 |

| No log | 0.32 | 200 | 1.0282 | 0.5884 | 0.4420 | 0.5048 | 0.7385 |

| No log | 0.47 | 300 | 0.7856 | 0.7448 | 0.6205 | 0.6770 | 0.8133 |

| No log | 0.63 | 400 | 0.6464 | 0.7736 | 0.6689 | 0.7174 | 0.8399 |

| 1.1733 | 0.79 | 500 | 0.5672 | 0.7609 | 0.7303 | 0.7453 | 0.8557 |

| 1.1733 | 0.95 | 600 | 0.5055 | 0.7658 | 0.7652 | 0.7655 | 0.8677 |

| 1.1733 | 1.1 | 700 | 0.4735 | 0.7946 | 0.7848 | 0.7897 | 0.8784 |

| 1.1733 | 1.26 | 800 | 0.4414 | 0.7962 | 0.7946 | 0.7954 | 0.8818 |

| 1.1733 | 1.42 | 900 | 0.4094 | 0.8176 | 0.8064 | 0.8120 | 0.8894 |

| 0.5047 | 1.58 | 1000 | 0.3971 | 0.8219 | 0.8248 | 0.8234 | 0.8961 |

| 0.5047 | 1.74 | 1100 | 0.4082 | 0.7993 | 0.8362 | 0.8174 | 0.8927 |

| 0.5047 | 1.89 | 1200 | 0.3797 | 0.8240 | 0.8317 | 0.8278 | 0.8962 |

| 0.5047 | 2.05 | 1300 | 0.3597 | 0.8326 | 0.8331 | 0.8329 | 0.9020 |

| 0.5047 | 2.21 | 1400 | 0.3544 | 0.8462 | 0.8283 | 0.8371 | 0.9020 |

| 0.368 | 2.37 | 1500 | 0.3374 | 0.8428 | 0.8435 | 0.8432 | 0.9056 |

| 0.368 | 2.52 | 1600 | 0.3364 | 0.8406 | 0.8522 | 0.8464 | 0.9089 |

| 0.368 | 2.68 | 1700 | 0.3404 | 0.8467 | 0.8536 | 0.8501 | 0.9107 |

| 0.368 | 2.84 | 1800 | 0.3319 | 0.8405 | 0.8501 | 0.8453 | 0.9090 |

| 0.368 | 3.0 | 1900 | 0.3324 | 0.8584 | 0.8492 | 0.8538 | 0.9117 |

| 0.2949 | 3.15 | 2000 | 0.3204 | 0.8691 | 0.8404 | 0.8545 | 0.9119 |

| 0.2949 | 3.31 | 2100 | 0.3107 | 0.8599 | 0.8547 | 0.8573 | 0.9162 |

| 0.2949 | 3.47 | 2200 | 0.3169 | 0.8680 | 0.8489 | 0.8584 | 0.9146 |

| 0.2949 | 3.63 | 2300 | 0.3190 | 0.8683 | 0.8519 | 0.8600 | 0.9152 |

| 0.2949 | 3.79 | 2400 | 0.2975 | 0.8631 | 0.8617 | 0.8624 | 0.9182 |

| 0.2438 | 3.94 | 2500 | 0.3040 | 0.8566 | 0.8640 | 0.8603 | 0.9171 |

| 0.2438 | 4.1 | 2600 | 0.3045 | 0.8585 | 0.8642 | 0.8613 | 0.9181 |

| 0.2438 | 4.26 | 2700 | 0.3139 | 0.8498 | 0.8748 | 0.8621 | 0.9160 |

| 0.2438 | 4.42 | 2800 | 0.2985 | 0.8642 | 0.8672 | 0.8657 | 0.9214 |

| 0.2438 | 4.57 | 2900 | 0.3047 | 0.8688 | 0.8694 | 0.8691 | 0.9214 |

| 0.2028 | 4.73 | 3000 | 0.2986 | 0.8686 | 0.8695 | 0.8691 | 0.9207 |

| 0.2028 | 4.89 | 3100 | 0.3135 | 0.8628 | 0.8755 | 0.8691 | 0.9197 |

| 0.2028 | 5.05 | 3200 | 0.2927 | 0.8656 | 0.8755 | 0.8705 | 0.9217 |

| 0.2028 | 5.21 | 3300 | 0.2992 | 0.8724 | 0.8697 | 0.8711 | 0.9228 |

| 0.2028 | 5.36 | 3400 | 0.2975 | 0.8831 | 0.8639 | 0.8734 | 0.9244 |

| 0.1814 | 5.52 | 3500 | 0.2897 | 0.8736 | 0.8788 | 0.8762 | 0.9250 |

| 0.1814 | 5.68 | 3600 | 0.3118 | 0.8674 | 0.8751 | 0.8712 | 0.9216 |

| 0.1814 | 5.84 | 3700 | 0.2974 | 0.8735 | 0.8779 | 0.8757 | 0.9237 |

| 0.1814 | 5.99 | 3800 | 0.2957 | 0.8696 | 0.8815 | 0.8755 | 0.9240 |

| 0.1814 | 6.15 | 3900 | 0.3120 | 0.8698 | 0.8817 | 0.8757 | 0.9250 |

| 0.1602 | 6.31 | 4000 | 0.3080 | 0.8715 | 0.8800 | 0.8757 | 0.9238 |

| 0.1602 | 6.47 | 4100 | 0.3031 | 0.8767 | 0.8788 | 0.8777 | 0.9261 |

| 0.1602 | 6.62 | 4200 | 0.3146 | 0.8699 | 0.8784 | 0.8741 | 0.9227 |

| 0.1602 | 6.78 | 4300 | 0.3085 | 0.8717 | 0.8788 | 0.8752 | 0.9248 |

| 0.1602 | 6.94 | 4400 | 0.3023 | 0.8749 | 0.8756 | 0.8752 | 0.9250 |

| 0.1383 | 7.1 | 4500 | 0.3025 | 0.8860 | 0.8735 | 0.8797 | 0.9252 |

| 0.1383 | 7.26 | 4600 | 0.3026 | 0.8775 | 0.8810 | 0.8792 | 0.9272 |

| 0.1383 | 7.41 | 4700 | 0.3146 | 0.8715 | 0.8832 | 0.8773 | 0.9251 |

| 0.1383 | 7.57 | 4800 | 0.3113 | 0.8769 | 0.8803 | 0.8786 | 0.9275 |

| 0.1383 | 7.73 | 4900 | 0.3073 | 0.8797 | 0.8786 | 0.8792 | 0.9261 |

| 0.1306 | 7.89 | 5000 | 0.3163 | 0.8714 | 0.8828 | 0.8770 | 0.9248 |

| 0.1306 | 8.04 | 5100 | 0.3163 | 0.8753 | 0.8810 | 0.8781 | 0.9250 |

| 0.1306 | 8.2 | 5200 | 0.3132 | 0.8743 | 0.8804 | 0.8773 | 0.9257 |

| 0.1306 | 8.36 | 5300 | 0.3119 | 0.8735 | 0.8837 | 0.8786 | 0.9264 |

| 0.1306 | 8.52 | 5400 | 0.3145 | 0.8826 | 0.8779 | 0.8802 | 0.9272 |

| 0.1174 | 8.68 | 5500 | 0.3166 | 0.8776 | 0.8811 | 0.8794 | 0.9261 |

| 0.1174 | 8.83 | 5600 | 0.3146 | 0.8776 | 0.8814 | 0.8795 | 0.9260 |

| 0.1174 | 8.99 | 5700 | 0.3135 | 0.8763 | 0.8826 | 0.8795 | 0.9271 |

| 0.1174 | 9.15 | 5800 | 0.3154 | 0.8794 | 0.8818 | 0.8806 | 0.9275 |

| 0.1174 | 9.31 | 5900 | 0.3152 | 0.8788 | 0.8817 | 0.8802 | 0.9274 |

| 0.11 | 9.46 | 6000 | 0.3159 | 0.8765 | 0.8812 | 0.8789 | 0.9268 |
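The Epoch and Step columns together give a rough idea of the training set size. The estimate below assumes a single device and no gradient accumulation (neither is listed in the hyperparameters), and the epoch value is rounded to two decimals in the table, so the result is approximate.

```python
# Last row of the training results table and the listed batch size.
final_step, final_epoch = 6000, 9.46
train_batch_size = 2

# Optimizer steps in one pass over the training data (approximate,
# because the epoch column is rounded).
steps_per_epoch = round(final_step / final_epoch)

# Assumption: one device, no gradient accumulation.
approx_train_examples = steps_per_epoch * train_batch_size
print(steps_per_epoch, approx_train_examples)
```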

### Framework versions

- Transformers 4.25.0.dev0

- Pytorch 1.12.1

- Datasets 2.6.1

- Tokenizers 0.13.1

|

AnonymousSub/SR_rule_based_roberta_bert_triplet_epochs_1_shard_1 | [

"pytorch",

"roberta",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 2 | null | ---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- bleu

model-index:

- name: t5-small-finetuned-en-to-regex

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-small-finetuned-en-to-regex

This model is a fine-tuned version of [rymaju/t5-small-finetuned-en-to-regex](https://huggingface.co/rymaju/t5-small-finetuned-en-to-regex) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0032

- Bleu: 12.1984

- Gen Len: 16.7502

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len |

|:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:|

| 0.0092 | 1.0 | 6188 | 0.0043 | 12.1984 | 16.7522 |

| 0.0069 | 2.0 | 12376 | 0.0040 | 12.2039 | 16.7502 |

| 0.0056 | 3.0 | 18564 | 0.0034 | 12.2091 | 16.7483 |

| 0.0048 | 4.0 | 24752 | 0.0035 | 12.2103 | 16.7502 |

| 0.0049 | 5.0 | 30940 | 0.0035 | 12.1984 | 16.7502 |

| 0.0046 | 6.0 | 37128 | 0.0033 | 12.1984 | 16.7502 |

| 0.0046 | 7.0 | 43316 | 0.0035 | 12.1984 | 16.7502 |

| 0.0046 | 8.0 | 49504 | 0.0032 | 12.1984 | 16.7502 |

| 0.0042 | 9.0 | 55692 | 0.0032 | 12.1984 | 16.7502 |

| 0.0043 | 10.0 | 61880 | 0.0032 | 12.1984 | 16.7502 |

### Framework versions

- Transformers 4.24.0

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

|

AnonymousSub/SR_rule_based_roberta_hier_triplet_epochs_1_shard_10 | [

"pytorch",

"roberta",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 6 | null | ---

license: mit

tags:

- generated_from_trainer

metrics:

- f1

model-index:

- name: xlm-roberta-base-finetuned-panx-de-fr

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# xlm-roberta-base-finetuned-panx-de-fr

This model is a fine-tuned version of [tkubotake/xlm-roberta-base-finetuned-panx-de](https://huggingface.co/tkubotake/xlm-roberta-base-finetuned-panx-de) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1829

- F1: 0.8671

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 24

- eval_batch_size: 24

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | F1 |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 0.158 | 1.0 | 715 | 0.1689 | 0.8471 |

| 0.099 | 2.0 | 1430 | 0.1781 | 0.8576 |

| 0.0599 | 3.0 | 2145 | 0.1829 | 0.8671 |

### Framework versions

- Transformers 4.24.0

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.2

|

AnonymousSub/SR_rule_based_roberta_only_classfn_twostage_epochs_1_shard_1 | [

"pytorch",

"roberta",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 8 | null | Access to model sreddy1/t5-end2end-questions-generation is restricted and you are not in the authorized list. Visit https://huggingface.co/sreddy1/t5-end2end-questions-generation to ask for access. |

AnonymousSub/SR_rule_based_roberta_twostagetriplet_epochs_1_shard_1 | [

"pytorch",

"roberta",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 8 | null | ---

language: ja

license: cc-by-nc-sa-4.0

tags:

- roberta

- medical

inference: false

---

# alabnii/jmedroberta-base-manbyo-wordpiece

## Model description

This is a Japanese RoBERTa base model pre-trained on academic articles in medical sciences collected by the Japan Science and Technology Agency (JST).

This model is released under the [Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License](https://creativecommons.org/licenses/by-nc-sa/4.0/deed) (CC BY-NC-SA 4.0).

#### Reference

Ja:

```

@InProceedings{sugimoto_nlp2023_jmedroberta,

author = "杉本海人 and 壹岐太一 and 知田悠生 and 金沢輝一 and 相澤彰子",

title = "J{M}ed{R}o{BERT}a: 日本語の医学論文にもとづいた事前学習済み言語モデルの構築と評価",

booktitle = "言語処理学会第29回年次大会",

year = "2023",

url = "https://www.anlp.jp/proceedings/annual_meeting/2023/pdf_dir/P3-1.pdf"

}

```

En:

```

@InProceedings{sugimoto_nlp2023_jmedroberta,

author = "Sugimoto, Kaito and Iki, Taichi and Chida, Yuki and Kanazawa, Teruhito and Aizawa, Akiko",

title = "J{M}ed{R}o{BERT}a: a Japanese Pre-trained Language Model on Academic Articles in Medical Sciences (in Japanese)",

booktitle = "Proceedings of the 29th Annual Meeting of the Association for Natural Language Processing",

year = "2023",

url = "https://www.anlp.jp/proceedings/annual_meeting/2023/pdf_dir/P3-1.pdf"

}

```

## Datasets used for pre-training

- abstracts (train: 1.6GB (10M sentences), validation: 0.2GB (1.3M sentences))

- abstracts & body texts (train: 0.2GB (1.4M sentences))

## How to use

**Before using the model, make sure that the [Manbyo Dictionary](https://sociocom.naist.jp/manbyou-dic/) has been downloaded to `/usr/local/lib/mecab/dic/userdic`.**

```bash

# download Manbyo-Dictionary

mkdir -p /usr/local/lib/mecab/dic/userdic

wget https://sociocom.jp/~data/2018-manbyo/data/MANBYO_201907_Dic-utf8.dic

mv MANBYO_201907_Dic-utf8.dic /usr/local/lib/mecab/dic/userdic

```

---

**Note: If you don't have root privileges and find it difficult to download the Manbyo Dictionary to `/usr/local/lib/mecab/dic/userdic`, you can still load our model by overriding tokenizer settings as follows:**

```bash

# download Manbyo-Dictionary wherever you like

wget https://sociocom.jp/~data/2018-manbyo/data/MANBYO_201907_Dic-utf8.dic

mv MANBYO_201907_Dic-utf8.dic /anywhere/you/like

```

```python

from transformers import AutoModelForMaskedLM, AutoTokenizer

model = AutoModelForMaskedLM.from_pretrained("alabnii/jmedroberta-base-manbyo-wordpiece")

# Point MeCab at the user dictionary stored in the custom location
tokenizer = AutoTokenizer.from_pretrained(
    "alabnii/jmedroberta-base-manbyo-wordpiece",
    mecab_kwargs={"mecab_option": "-u /anywhere/you/like/MANBYO_201907_Dic-utf8.dic"},
)

```

---

**Input text must be converted to full-width characters(全角)in advance.**
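One possible way to do this conversion with only the standard library is sketched below. `to_full_width` is a hypothetical helper, not part of the model's API; it maps printable ASCII to the corresponding full-width forms and leaves the `[MASK]` token untouched. Mapping the ASCII space to the ideographic space is an assumption, and half-width katakana are not handled.

```python
# Printable ASCII (0x21-0x7E) maps to full-width forms by adding 0xFEE0.
HALF_TO_FULL = {code: code + 0xFEE0 for code in range(0x21, 0x7F)}
HALF_TO_FULL[0x20] = 0x3000  # assumption: ASCII space -> ideographic space


def to_full_width(text: str, keep: str = "[MASK]") -> str:
    """Convert ASCII characters to full-width, leaving `keep` unchanged."""
    parts = text.split(keep)
    return keep.join(part.translate(HALF_TO_FULL) for part in parts)


print(to_full_width("COVID-19 は[MASK]である。"))
```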

You can use this model for masked language modeling as follows:

```python

from transformers import AutoModelForMaskedLM, AutoTokenizer

model = AutoModelForMaskedLM.from_pretrained("alabnii/jmedroberta-base-manbyo-wordpiece")

model.eval()

tokenizer = AutoTokenizer.from_pretrained("alabnii/jmedroberta-base-manbyo-wordpiece")

texts = ['この患者は[MASK]と診断された。']

inputs = tokenizer.batch_encode_plus(texts, return_tensors='pt')

outputs = model(**inputs)

tokenizer.convert_ids_to_tokens(outputs.logits[0][1:-1].argmax(axis=-1))

# ['この', '患者', 'は', 'ALS', 'と', '診断', 'さ', 'れ', 'た', '。']

```

Alternatively, you can use the [fill-mask pipeline](https://huggingface.co/tasks/fill-mask).

```python

from transformers import pipeline

fill = pipeline("fill-mask", model="alabnii/jmedroberta-base-manbyo-wordpiece", top_k=10)

fill("この患者は[MASK]と診断された。")

#[{'score': 0.020739275962114334,

# 'token': 11474,

# 'token_str': 'ALS',

# 'sequence': 'この 患者 は ALS と 診断 さ れ た 。'},

# {'score': 0.0193060003221035,

# 'token': 10777,

# 'token_str': '統合失調症',

# 'sequence': 'この 患者 は 統合失調症 と 診断 さ れ た 。'},

# {'score': 0.014001614414155483,

# 'token': 27318,

# 'token_str': 'Fabry病',

# 'sequence': 'この 患者 は Fabry病 と 診断 さ れ た 。'},

# ...

```

You can fine-tune this model on downstream tasks.

**See also sample Colab notebooks:** https://colab.research.google.com/drive/1yqUaqLf0Lf_imRT9TXPXEt1dowfK_2CS?usp=sharing

## Tokenization

MeCab (with IPAdic and the [Manbyo Dictionary](https://sociocom.naist.jp/manbyou-dic/)) was used for word segmentation during pre-training. Each word is then split into subword tokens by [WordPiece](https://huggingface.co/course/chapter6/6).

## Vocabulary

The vocabulary consists of 30000 tokens including words (IPAdic & [Manbyo Dictionary](https://sociocom.naist.jp/manbyou-dic/)) and subwords induced by [WordPiece](https://huggingface.co/course/chapter6/6).

## Training procedure

The following hyperparameters were used during pre-training:

- learning_rate: 0.0001

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- distributed_type: multi-GPU

- num_devices: 8

- total_train_batch_size: 256

- total_eval_batch_size: 256

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 20000

- training_steps: 2000000

- mixed_precision_training: Native AMP
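To make the scheduler settings above concrete, the following sketch computes the learning rate at a given step. It assumes the common convention for a linear schedule with warmup (a linear ramp from 0 to the peak rate, then a linear decay to 0 at the final step); the function name `linear_schedule_lr` is hypothetical and the card does not confirm the exact implementation.

```python
BASE_LR = 1e-4            # learning_rate from the list above
WARMUP_STEPS = 20_000     # lr_scheduler_warmup_steps
TRAINING_STEPS = 2_000_000  # training_steps


def linear_schedule_lr(step: int) -> float:
    """Learning rate at `step` under linear warmup followed by linear decay."""
    if step < WARMUP_STEPS:
        # Warmup: ramp linearly from 0 up to BASE_LR.
        return BASE_LR * step / WARMUP_STEPS
    # Decay: fall linearly from BASE_LR to 0 at TRAINING_STEPS.
    remaining = max(0, TRAINING_STEPS - step)
    return BASE_LR * remaining / (TRAINING_STEPS - WARMUP_STEPS)


for step in (0, 10_000, 20_000, 1_010_000, 2_000_000):
    print(step, linear_schedule_lr(step))
```

Under these assumptions the peak rate is reached after 1% of the total steps, and the rate is half the peak at step 1,010,000.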

## Note: Why do we call our model RoBERTa, not BERT?

As the config file suggests, our model is based on HuggingFace's `BertForMaskedLM` class. However, we consider our model as **RoBERTa** for the following reasons:

- We trained only on sequences of the maximum length (512 tokens).

- We removed the next sentence prediction (NSP) training objective.

- We introduced dynamic masking (changing the masking pattern in each training iteration).
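Dynamic masking, the last point above, can be illustrated with a simplified sketch: the masked positions are re-sampled every time a sequence is seen, instead of being fixed once during preprocessing. The masking rate of 15% and the helper name `dynamic_mask` are assumptions for illustration, and the sketch omits the usual random-replacement and keep-as-is variants.

```python
import random

MASK_TOKEN = "[MASK]"


def dynamic_mask(tokens, mask_prob=0.15, rng=None):
    """Return a copy of `tokens` with freshly sampled positions masked.

    Simplified illustration: every selected position becomes [MASK].
    """
    if not tokens:
        return []
    rng = rng or random.Random()
    num_to_mask = max(1, round(len(tokens) * mask_prob))
    positions = set(rng.sample(range(len(tokens)), num_to_mask))
    return [MASK_TOKEN if i in positions else tok for i, tok in enumerate(tokens)]


tokens = ["この", "患者", "は", "ALS", "と", "診断", "さ", "れ", "た", "。"]
rng = random.Random(0)
for epoch in range(3):
    # A new masking pattern is drawn on every pass over the same sentence.
    print(epoch, dynamic_mask(tokens, rng=rng))
```

With static masking, by contrast, the pattern would be sampled once and reused in every epoch.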

## Acknowledgements

This work was supported by the Japan Science and Technology Agency (JST) AIP Trilateral AI Research (Grant Number: JPMJCR20G9) and by the Joint Usage/Research Center for Interdisciplinary Large-scale Information Infrastructures (JHPCN) (Project ID: jh221004) in Japan.

In this research work, we used the "[mdx: a platform for the data-driven future](https://mdx.jp/)". |

AnonymousSub/SR_rule_based_roberta_twostagetriplet_hier_epochs_1_shard_1 | [

"pytorch",

"roberta",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 4 | null | ---

license: apache-2.0

# inference: false

# inference:

# parameters:

tags:

- classification

- zero-shot

---

# Erlangshen-UniMC-DeBERTa-v2-1.4B-Chinese

- Main Page: [Fengshenbang](https://fengshenbang-lm.com/)

- Github: [Fengshenbang-LM](https://github.com/IDEA-CCNL/Fengshenbang-LM/tree/main/fengshen/examples/unimc/)

- Docs: [Fengshenbang-Docs](https://fengshenbang-doc.readthedocs.io/)

- API: [Fengshen-OpenAPI](https://fengshenbang-lm.com/open-api)

## 简介 Brief Introduction

UniMC 核心思想是将自然语言理解任务转化为 multiple choice 任务,并且使用多个 NLU 任务来进行预训练。我们在英文数据集实验结果表明仅含有 2.35 亿参数的 [ALBERT模型](https://huggingface.co/IDEA-CCNL/Erlangshen-UniMC-Albert-235M-English)的zero-shot性能可以超越众多千亿的模型。并在中文测评基准 FewCLUE 和 ZeroCLUE 两个榜单中,13亿的[二郎神](https://huggingface.co/IDEA-CCNL/Erlangshen-UniMC-MegatronBERT-1.3B-Chinese)获得了第一的成绩。

The core idea of UniMC is to convert natural language understanding tasks into multiple-choice tasks and to use multiple NLU tasks for pre-training. Our experiments on English datasets show that the zero-shot performance of an [ALBERT](https://huggingface.co/IDEA-CCNL/Erlangshen-UniMC-Albert-235M-English) model with only 235 million parameters can surpass that of many models with hundreds of billions of parameters. On the Chinese evaluation benchmarks FewCLUE and ZeroCLUE, the 1.3-billion-parameter [Erlangshen](https://huggingface.co/IDEA-CCNL/Erlangshen-UniMC-MegatronBERT-1.3B-Chinese) ranked first on both leaderboards.

## 模型分类 Model Taxonomy

| 需求 Demand | 任务 Task | 系列 Series | 模型 Model | 参数 Parameter | 额外 Extra |

| :----: | :----: | :----: | :----: | :----: | :----: |

| 通用 General | 自然语言理解 NLU | 二郎神 Erlangshen | DeBERTa-v2 | 1.4B | Chinese |

## 模型信息 Model Information

我们为零样本学习者提出了一种与输入无关的新范式,从某种意义上说,它与任何格式兼容并适用于一系列语言任务,例如文本分类、常识推理、共指解析、情感分析。我们的方法将零样本学习转化为多项选择任务,避免常用的大型生成模型(如 FLAN)中的问题。它不仅增加了模型的泛化能力,而且显着减少了对参数的需求。我们证明了这种方法可以在通用语言基准上取得最先进的性能,并在自然语言推理和文本分类等任务上产生令人满意的结果。更多详细信息可以参考我们的[论文](https://arxiv.org/abs/2210.08590)或者[GitHub](https://github.com/IDEA-CCNL/Fengshenbang-LM/tree/main/fengshen/examples/unimc/)

We propose a new paradigm for zero-shot learners that is input-agnostic, in the sense that it is compatible with any format and applicable to a range of language tasks, such as text classification, commonsense reasoning, coreference resolution, and sentiment analysis. Our approach converts zero-shot learning into multiple-choice tasks, avoiding problems in commonly used large generative models such as FLAN. It not only improves the generalization ability of the models, but also significantly reduces the number of parameters needed. We demonstrate that this approach leads to state-of-the-art performance on common language benchmarks, and produces satisfactory results on tasks such as natural language inference and text classification. For more details, please refer to our [paper](https://arxiv.org/abs/2210.08590) or [GitHub](https://github.com/IDEA-CCNL/Fengshenbang-LM/tree/main/fengshen/examples/unimc/).

### 下游效果 Performance

我们使用全量数据测评我们的模型性能,并与 RoBERTa 进行对比

We evaluate our model on the full datasets and compare it with RoBERTa.

| Model | afqmc | tnews | iflytek | ocnli |

|------------|------------|----------|-----------|----------|

| RoBERTa-110M | 74.06 | 57.5 | 60.36 | 74.3 |

| RoBERTa-330M | 74.88 | 58.79 | 61.52 | 77.77 |

| [Erlangshen-UniMC-DeBERTa-v2-110M-Chinese](https://huggingface.co/IDEA-CCNL/Erlangshen-UniMC-DeBERTa-v2-110M-Chinese/) | 74.49 | 57.28 | 61.42 | 76.98 |

| [Erlangshen-UniMC-DeBERTa-v2-330M-Chinese](https://huggingface.co/IDEA-CCNL/Erlangshen-UniMC-DeBERTa-v2-330M-Chinese/) | 76.16 | 58.61 | - |81.86 |

| [Erlangshen-UniMC-DeBERTa-v2-1.4B-Chinese](https://huggingface.co/IDEA-CCNL/Erlangshen-UniMC-DeBERTa-v2-1.4B-Chinese/) | 76.96 | 60.67 | 63.24 | 83.86 |

## 使用 Usage

```shell

git clone https://github.com/IDEA-CCNL/Fengshenbang-LM.git

cd Fengshenbang-LM

pip install --editable .

```

```python3

import argparse

from fengshen.pipelines.multiplechoice import UniMCPipelines

total_parser = argparse.ArgumentParser("TASK NAME")

total_parser = UniMCPipelines.piplines_args(total_parser)

args = total_parser.parse_args()

pretrained_model_path = 'IDEA-CCNL/Erlangshen-UniMC-DeBERTa-v2-1.4B-Chinese'

args.learning_rate=2e-5

args.max_length=512

args.max_epochs=3

args.batchsize=8

args.default_root_dir='./'

model = UniMCPipelines(args, pretrained_model_path)

train_data = []

dev_data = []

test_data = [

{"texta": "放弃了途观L和荣威RX5,果断入手这部车,外观霸气又好开",

"textb": "",

"question": "下面新闻属于哪一个类别?",

"choice": [

"房产",

"汽车",

"教育",

"科技"

],

"answer": "汽车",

"label": 1,

"id": 7759}

]

if args.train:

    model.train(train_data, dev_data)

result = model.predict(test_data)

for line in result[:20]:

    print(line)

```
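The `test_data` entry above follows a fixed dictionary layout, so ordinary classification samples can be converted into it mechanically. The helper below is a hypothetical sketch (`build_unimc_example` is not part of the `fengshen` package); it only assumes the field names shown in the example above and that `label` is the index of `answer` within `choice`.

```python
def build_unimc_example(text, choices, answer, question, example_id=0, textb=""):
    """Wrap one classification sample in the dictionary layout used above.

    Hypothetical helper for illustration; field names are copied from the
    usage example, and `label` is derived as the index of `answer`.
    """
    choices = list(choices)
    return {
        "texta": text,
        "textb": textb,
        "question": question,
        "choice": choices,
        "answer": answer,
        "label": choices.index(answer),  # raises ValueError if answer is missing
        "id": example_id,
    }


example = build_unimc_example(
    text="放弃了途观L和荣威RX5,果断入手这部车,外观霸气又好开",
    choices=["房产", "汽车", "教育", "科技"],
    answer="汽车",
    question="下面新闻属于哪一个类别?",
    example_id=7759,
)
print(example["label"])
```

A list of such dictionaries could then be passed as `train_data`, `dev_data`, or `test_data` in the usage code.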

## 引用 Citation

如果您在您的工作中使用了我们的模型,可以引用我们的[论文](https://arxiv.org/abs/2210.08590):

If you use this resource in your work, please cite our [paper](https://arxiv.org/abs/2210.08590):

```text

@article{unimc,

author = {Ping Yang and

Junjie Wang and

Ruyi Gan and

Xinyu Zhu and

Lin Zhang and

Ziwei Wu and

Xinyu Gao and

Jiaxing Zhang and

Tetsuya Sakai},

title = {Zero-Shot Learners for Natural Language Understanding via a Unified Multiple Choice Perspective},

journal = {CoRR},

volume = {abs/2210.08590},

year = {2022}

}

```

也可以引用我们的[网站](https://github.com/IDEA-CCNL/Fengshenbang-LM/):

You can also cite our [website](https://github.com/IDEA-CCNL/Fengshenbang-LM/):

```text

@misc{Fengshenbang-LM,

title={Fengshenbang-LM},

author={IDEA-CCNL},

year={2021},

howpublished={\url{https://github.com/IDEA-CCNL/Fengshenbang-LM}},

}

``` |

AnonymousSub/SR_rule_based_roberta_twostagetriplet_hier_epochs_1_shard_10 | [

"pytorch",

"roberta",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"RobertaModel"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 7 | null | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- emotion

metrics:

- accuracy

- f1

model-index:

- name: distilbert-base-uncased-finetuned-emotion

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: emotion

type: emotion

config: default

split: train

args: default

metrics:

- name: Accuracy

type: accuracy

value: 0.9285

- name: F1

type: f1

value: 0.928851862350588

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-emotion

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2178

- Accuracy: 0.9285

- F1: 0.9289

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.8227 | 1.0 | 250 | 0.3212 | 0.8985 | 0.8932 |

| 0.2463 | 2.0 | 500 | 0.2178 | 0.9285 | 0.9289 |

### Framework versions

- Transformers 4.23.1

- Pytorch 1.10.0

- Datasets 2.6.1

- Tokenizers 0.13.1

|

AnonymousSub/bert_triplet_epochs_1_shard_1 | [

"pytorch",

"bert",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"BertModel"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 2 | null | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

---

# {MODEL_NAME}

This is a [sentence-transformers](https://www.SBERT.net) model: it maps sentences and paragraphs to a 768-dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('{MODEL_NAME}')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: first, pass your input through the transformer model, then apply the right pooling operation on top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel

import torch

#Mean Pooling - Take attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):

    token_embeddings = model_output[0] #First element of model_output contains all token embeddings

    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()

    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Sentences we want sentence embeddings for

sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('{MODEL_NAME}')

model = AutoModel.from_pretrained('{MODEL_NAME}')

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():

    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")

print(sentence_embeddings)

```
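For semantic search or clustering, sentence embeddings like the ones printed above are typically compared with cosine similarity. The following is a minimal, dependency-free sketch; the function name `cosine_similarity` and the toy 3-dimensional vectors are illustrative assumptions (real embeddings from this model would have 768 dimensions and could be passed in via `embedding.tolist()`).

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        # Convention chosen here: a zero vector is similar to nothing.
        return 0.0
    return dot / (norm_a * norm_b)


# Toy vectors standing in for sentence embeddings.
query = [0.2, 0.7, 0.1]
candidates = {"doc_a": [0.1, 0.8, 0.1], "doc_b": [0.9, 0.0, 0.4]}
ranked = sorted(candidates, key=lambda k: cosine_similarity(query, candidates[k]), reverse=True)
print(ranked)
```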

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name={MODEL_NAME})

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 40 with parameters:

```

{'batch_size': 16, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`

Parameters of the fit()-Method:

```

{

"epochs": 1,

"evaluation_steps": 0,

"evaluator": "NoneType",

"max_grad_norm": 1,

"optimizer_class": "<class 'torch.optim.adamw.AdamW'>",

"optimizer_params": {

"lr": 2e-05

},

"scheduler": "WarmupLinear",

"steps_per_epoch": 40,

"warmup_steps": 4,

"weight_decay": 0.01

}

```

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 512, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Citing & Authors

<!--- Describe where people can find more information --> |

AnonymousSub/declutr-model_squad2.0 | [

"pytorch",

"roberta",

"question-answering",

"transformers",

"autotrain_compatible"

]

| question-answering | {

"architectures": [

"RobertaForQuestionAnswering"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 2 | null | ---

language: ja

license: cc-by-sa-4.0

---

# BERT Base Japanese for Irony

This is a BERT Base model for sentiment analysis in Japanese, additionally finetuned for automatic irony detection.

The model was based on [bert-base-japanese-sentiment](https://huggingface.co/daigo/bert-base-japanese-sentiment), and later finetuned on a dataset containing ironic and sarcastic tweets.

## Licenses

The finetuned model with all attached files is licensed under [CC BY-SA 4.0](http://creativecommons.org/licenses/by-sa/4.0/), or Creative Commons Attribution-ShareAlike 4.0 International License.

<a rel="license" href="http://creativecommons.org/licenses/by-sa/4.0/"><img alt="Creative Commons License" style="border-width:0" src="https://i.creativecommons.org/l/by-sa/4.0/88x31.png" /></a>

## Citations

Please cite this model using the following citation.

```

@inproceedings{dan2022bert-base-irony02,

title={北見工業大学 テキスト情報処理研究室 ELECTRA Base 皮肉検出モデル (daigo ver.)},

author={団 俊輔 and プタシンスキ ミハウ and ジェプカ ラファウ and 桝井 文人},

publisher={HuggingFace},

year={2022},

url = "https://huggingface.co/kit-nlp/bert-base-japanese-sentiment-irony"

}

```

|

AnonymousSub/declutr-model_wikiqa | [

"pytorch",

"roberta",

"text-classification",

"transformers"

]

| text-classification | {

"architectures": [

"RobertaForSequenceClassification"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 26 | 2022-11-07T06:37:51Z | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imagefolder

metrics:

- accuracy

- f1

- recall

- precision

model-index:

- name: Brain_Tumor_Detector_swin

results:

- task:

name: Image Classification

type: image-classification

dataset:

name: imagefolder

type: imagefolder

config: default

split: train

args: default

metrics:

- name: Accuracy

type: accuracy

value: 0.9981308411214953

- name: F1

type: f1

value: 0.9985111662531018

- name: Recall

type: recall

value: 0.9990069513406157

- name: Precision

type: precision

value: 0.998015873015873

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Brain_Tumor_Detector_swin

This model is a fine-tuned version of [microsoft/swin-base-patch4-window7-224-in22k](https://huggingface.co/microsoft/swin-base-patch4-window7-224-in22k) on the imagefolder dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0054

- Accuracy: 0.9981

- F1: 0.9985

- Recall: 0.9990

- Precision: 0.9980
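The reported F1 is consistent with the harmonic mean of the reported precision and recall. The short check below uses the unrounded values from the card's metadata; the function name is hypothetical, and whether the Trainer computed F1 in exactly this way is an assumption.

```python
def f1_from_precision_recall(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Unrounded precision and recall from the model-index metadata.
f1 = f1_from_precision_recall(0.998015873015873, 0.9990069513406157)
print(round(f1, 4))
```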

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 3
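The `total_train_batch_size` above follows from the per-device batch size and the gradient accumulation steps. A small sketch of that arithmetic, assuming a single device (the card does not list a device count) and using a hypothetical helper name:

```python
def effective_batch_size(per_device: int, num_devices: int = 1, grad_accum_steps: int = 1) -> int:
    """Number of samples contributing to each optimizer update."""
    return per_device * num_devices * grad_accum_steps


# train_batch_size=32 with gradient_accumulation_steps=4 on one device (assumed).
print(effective_batch_size(32, grad_accum_steps=4))
```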

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Recall | Precision |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|:------:|:---------:|

| 0.079 | 1.0 | 113 | 0.0283 | 0.9882 | 0.9906 | 0.9930 | 0.9881 |

| 0.0575 | 2.0 | 226 | 0.0121 | 0.9956 | 0.9965 | 0.9950 | 0.9980 |

| 0.0312 | 3.0 | 339 | 0.0054 | 0.9981 | 0.9985 | 0.9990 | 0.9980 |

### Framework versions

- Transformers 4.23.1

- Pytorch 1.12.1

- Datasets 2.6.1

- Tokenizers 0.13.1

|

AnonymousSub/declutr-s10-SR | [

"pytorch",

"roberta",

"text-classification",

"transformers"

]

| text-classification | {

"architectures": [

"RobertaForSequenceClassification"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 36 | null | ---

license: cc-by-4.0

tags:

- generated_from_trainer

model-index:

- name: roberta-base-squad-finetuned-squad

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# roberta-base-squad-finetuned-squad

This model is a fine-tuned version of [deepset/roberta-base-squad2](https://huggingface.co/deepset/roberta-base-squad2) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 6.7060

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 4

- eval_batch_size: 4

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| No log | 1.0 | 17 | 5.9055 |

| No log | 2.0 | 34 | 6.2285 |

| No log | 3.0 | 51 | 6.8639 |

| No log | 4.0 | 68 | 6.3238 |

| No log | 5.0 | 85 | 7.0916 |

| No log | 6.0 | 102 | 6.7791 |

| No log | 7.0 | 119 | 6.8093 |

| No log | 8.0 | 136 | 6.7029 |

| No log | 9.0 | 153 | 6.7142 |

| No log | 10.0 | 170 | 6.7060 |

### Framework versions

- Transformers 4.21.0

- Pytorch 1.11.0+cu102

- Datasets 2.6.1

- Tokenizers 0.12.1

|

AnonymousSub/roberta-base_squad2.0 | [

"pytorch",

"roberta",

"question-answering",

"transformers",

"autotrain_compatible"

]

| question-answering | {

"architectures": [

"RobertaForQuestionAnswering"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 6 | null | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- sentiment140

metrics:

- accuracy

model-index:

- name: Sentiment140_DistilBERT_5E

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: sentiment140

type: sentiment140

config: sentiment140

split: train

args: sentiment140

metrics:

- name: Accuracy

type: accuracy

value: 0.8333333333333334

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Sentiment140_DistilBERT_5E

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the sentiment140 dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4897

- Accuracy: 0.8333

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.6784 | 0.08 | 50 | 0.6516 | 0.6933 |

| 0.6301 | 0.16 | 100 | 0.5384 | 0.7533 |

| 0.5438 | 0.24 | 150 | 0.4559 | 0.8 |

| 0.4625 | 0.32 | 200 | 0.4287 | 0.8133 |

| 0.4528 | 0.4 | 250 | 0.4056 | 0.8267 |

| 0.4609 | 0.48 | 300 | 0.3883 | 0.8333 |

| 0.4705 | 0.56 | 350 | 0.3886 | 0.8067 |

| 0.4539 | 0.64 | 400 | 0.3967 | 0.82 |

| 0.4483 | 0.72 | 450 | 0.3758 | 0.82 |

| 0.4699 | 0.8 | 500 | 0.4003 | 0.8133 |

| 0.467 | 0.88 | 550 | 0.4021 | 0.8267 |

| 0.454 | 0.96 | 600 | 0.3735 | 0.8333 |

| 0.4227 | 1.04 | 650 | 0.3840 | 0.8267 |

| 0.3584 | 1.12 | 700 | 0.3775 | 0.8333 |

| 0.3618 | 1.2 | 750 | 0.4026 | 0.8267 |

| 0.3634 | 1.28 | 800 | 0.3891 | 0.8133 |

| 0.3751 | 1.36 | 850 | 0.3895 | 0.8267 |

| 0.3484 | 1.44 | 900 | 0.3919 | 0.8267 |

| 0.3764 | 1.52 | 950 | 0.3770 | 0.84 |

| 0.3488 | 1.6 | 1000 | 0.4028 | 0.82 |

| 0.3665 | 1.68 | 1050 | 0.3779 | 0.8333 |

| 0.3925 | 1.76 | 1100 | 0.3726 | 0.84 |

| 0.3624 | 1.84 | 1150 | 0.3655 | 0.84 |

| 0.3876 | 1.92 | 1200 | 0.3648 | 0.8133 |

| 0.3935 | 2.0 | 1250 | 0.3633 | 0.8467 |

| 0.2944 | 2.08 | 1300 | 0.3808 | 0.8333 |

| 0.2957 | 2.16 | 1350 | 0.3836 | 0.8333 |

| 0.266 | 2.24 | 1400 | 0.3940 | 0.8267 |

| 0.2747 | 2.32 | 1450 | 0.3952 | 0.84 |

| 0.314 | 2.4 | 1500 | 0.4060 | 0.8133 |

| 0.3419 | 2.48 | 1550 | 0.4025 | 0.8133 |

| 0.2782 | 2.56 | 1600 | 0.4218 | 0.82 |

| 0.3218 | 2.64 | 1650 | 0.4039 | 0.8333 |

| 0.2863 | 2.72 | 1700 | 0.4130 | 0.8267 |

| 0.3336 | 2.8 | 1750 | 0.4026 | 0.8133 |

| 0.3224 | 2.88 | 1800 | 0.3910 | 0.8267 |

| 0.2709 | 2.96 | 1850 | 0.3979 | 0.84 |

| 0.2701 | 3.04 | 1900 | 0.4127 | 0.8333 |

| 0.2782 | 3.12 | 1950 | 0.4335 | 0.82 |

| 0.2425 | 3.2 | 2000 | 0.4229 | 0.8333 |

| 0.2457 | 3.28 | 2050 | 0.4168 | 0.8333 |

| 0.217 | 3.36 | 2100 | 0.4264 | 0.8267 |

| 0.2522 | 3.44 | 2150 | 0.4250 | 0.8333 |

| 0.2402 | 3.52 | 2200 | 0.4371 | 0.8333 |

| 0.2465 | 3.6 | 2250 | 0.4429 | 0.8333 |

| 0.2427 | 3.68 | 2300 | 0.4435 | 0.8333 |

| 0.2408 | 3.76 | 2350 | 0.4500 | 0.84 |

| 0.1976 | 3.84 | 2400 | 0.4536 | 0.8333 |

| 0.23 | 3.92 | 2450 | 0.4645 | 0.8333 |

| 0.2449 | 4.0 | 2500 | 0.4557 | 0.8467 |

| 0.1933 | 4.08 | 2550 | 0.4672 | 0.84 |

| 0.213 | 4.16 | 2600 | 0.4717 | 0.84 |

| 0.1772 | 4.24 | 2650 | 0.4843 | 0.8267 |

| 0.1917 | 4.32 | 2700 | 0.4690 | 0.8467 |

| 0.2094 | 4.4 | 2750 | 0.4728 | 0.8467 |

| 0.1903 | 4.48 | 2800 | 0.4755 | 0.8467 |

| 0.2541 | 4.56 | 2850 | 0.4791 | 0.84 |

| 0.1805 | 4.64 | 2900 | 0.4877 | 0.84 |

| 0.2183 | 4.72 | 2950 | 0.4940 | 0.8267 |

| 0.2257 | 4.8 | 3000 | 0.4905 | 0.8333 |

| 0.2496 | 4.88 | 3050 | 0.4883 | 0.84 |

| 0.1846 | 4.96 | 3100 | 0.4897 | 0.8333 |

### Framework versions

- Transformers 4.24.0

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

|

AnonymousSub/rule_based_bert_hier_diff_equal_wts_epochs_1_shard_10 | [

"pytorch",

"bert",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"BertModel"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 6 | null | Access to model ardent-figment/gated-model is restricted and you are not in the authorized list. Visit https://huggingface.co/ardent-figment/gated-model to ask for access. |

AnonymousSub/rule_based_bert_mean_diff_epochs_1_shard_1 | [

"pytorch",

"bert",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"BertModel"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 3 | null | ---

language: ja

license: cc-by-sa-4.0

---

# bert-base-irony

This is a BERT Base model for the Japanese language finetuned for automatic irony detection.

The model was based on [BERT base Japanese](https://huggingface.co/hiroshi-matsuda-rit/bert-base-japanese-basic-char-v2), and later finetuned on a dataset containing ironic and sarcastic tweets.

## Licenses

The finetuned model with all attached files is licensed under [CC BY-SA 4.0](http://creativecommons.org/licenses/by-sa/4.0/), or Creative Commons Attribution-ShareAlike 4.0 International License.

<a rel="license" href="http://creativecommons.org/licenses/by-sa/4.0/"><img alt="Creative Commons License" style="border-width:0" src="https://i.creativecommons.org/l/by-sa/4.0/88x31.png" /></a>

## Citations

Please cite this model using the following citation.

```

@inproceedings{dan2022bert-base-irony,

title={北見工業大学 テキスト情報処理研究室 BERT Base 皮肉検出モデル (RIT ver.)},

author={団 俊輔 and プタシンスキ ミハウ and ジェプカ ラファウ and 桝井 文人},

publisher={HuggingFace},

year={2022},

url = "https://huggingface.co/kit-nlp/bert-base-japanese-basic-char-v2-irony"

}

```

|

AnonymousSub/rule_based_hier_triplet_epochs_1_shard_1_wikiqa_copy | [

"pytorch",

"bert",

"feature-extraction",

"transformers"

]

| feature-extraction | {

"architectures": [

"BertModel"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 1 | null | Access to model AustinZuo/zeo-bert is restricted and you are not in the authorized list. Visit https://huggingface.co/AustinZuo/zeo-bert to ask for access. |

AnonymousSub/rule_based_only_classfn_twostage_epochs_1_shard_1_wikiqa | [

"pytorch",

"bert",

"text-classification",

"transformers"

]

| text-classification | {

"architectures": [

"BertForSequenceClassification"

],

"model_type": "bert",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 27 | null | ---

license: mit

tags:

- audio

- music

- generation

- tensorflow

---

# Musika Model: musika_hyperpop

## Model provided by: freepina

Pretrained musika_hyperpop model for the [Musika system](https://github.com/marcoppasini/musika) for fast infinite waveform music generation.

Introduced in [this paper](https://arxiv.org/abs/2208.08706).

## How to use

You can generate music from this pretrained musika_hyperpop model using the notebook available [here](https://colab.research.google.com/drive/1HJWliBXPi-Xlx3gY8cjFI5-xaZgrTD7r).

### Model description

This pretrained GAN system consists of a ResNet-style generator and discriminator. During training, stability is controlled by adapting the strength of gradient penalty regularization on-the-fly. The gradient penalty weighting term is contained in *switch.npy*. The generator is conditioned on a latent coordinate system to produce samples of arbitrary length. The latent representations produced by the generator are then passed to a decoder which converts them into waveform audio.

The generator has a context window of about 12 seconds of audio.
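The gradient penalty weighting term mentioned above can be inspected with NumPy. This is only a sketch: it assumes *switch.npy* has been downloaded into the working directory and holds a plain numeric array (the card does not state its shape or dtype), and `describe_gp_weight` is a hypothetical helper name.

```python
import numpy as np


def describe_gp_weight(path: str = "switch.npy") -> dict:
    """Load the stored weighting term and report its basic properties.

    Assumption: the file is a standard .npy file containing a numeric array.
    """
    value = np.load(path)
    return {
        "shape": value.shape,          # () for a scalar, (n,) for a vector, ...
        "dtype": str(value.dtype),
        "values": value.tolist(),      # plain Python numbers for easy printing
    }
```

Calling `describe_gp_weight()` next to the downloaded checkpoint prints nothing by itself; pass the result to `print` to see what the file contains before relying on any particular layout.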

|

AnonymousSub/rule_based_roberta_bert_quadruplet_epochs_1_shard_1_squad2.0 | [

"pytorch",

"roberta",

"question-answering",

"transformers",

"autotrain_compatible"

]

| question-answering | {

"architectures": [

"RobertaForQuestionAnswering"

],

"model_type": "roberta",

"task_specific_params": {

"conversational": {

"max_length": null

},

"summarization": {

"early_stopping": null,

"length_penalty": null,

"max_length": null,

"min_length": null,

"no_repeat_ngram_size": null,

"num_beams": null,

"prefix": null

},

"text-generation": {

"do_sample": null,

"max_length": null

},

"translation_en_to_de": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_fr": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

},

"translation_en_to_ro": {

"early_stopping": null,

"max_length": null,

"num_beams": null,

"prefix": null

}

}

} | 2 | null | ---

license: mit

tags:

- flair

- token-classification

- sequence-tagger-model

language: de

widget: