modelId

stringlengths 5

122

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC] | downloads

int64 0

738M

| likes

int64 0

11k

| library_name

stringclasses 245

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 48

values | createdAt

timestamp[us, tz=UTC] | card

stringlengths 1

901k

|

|---|---|---|---|---|---|---|---|---|---|

nm-testing/tinyllama-oneshot-w8a8-channel-dynamic-token-v2 | nm-testing | 2024-06-13T20:05:56Z | 1,010 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"conversational",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-06-13T20:05:24Z | Entry not found |

pierreguillou/bert-large-cased-squad-v1.1-portuguese | pierreguillou | 2022-01-04T09:57:00Z | 1,009 | 39 | transformers | [

"transformers",

"pytorch",

"tf",

"bert",

"question-answering",

"bert-large",

"pt",

"dataset:brWaC",

"dataset:squad",

"dataset:squad_v1_pt",

"license:mit",

"endpoints_compatible",

"region:us"

]

| question-answering | 2022-03-02T23:29:05Z | ---

language: pt

license: mit

tags:

- question-answering

- bert

- bert-large

- pytorch

datasets:

- brWaC

- squad

- squad_v1_pt

metrics:

- squad

widget:

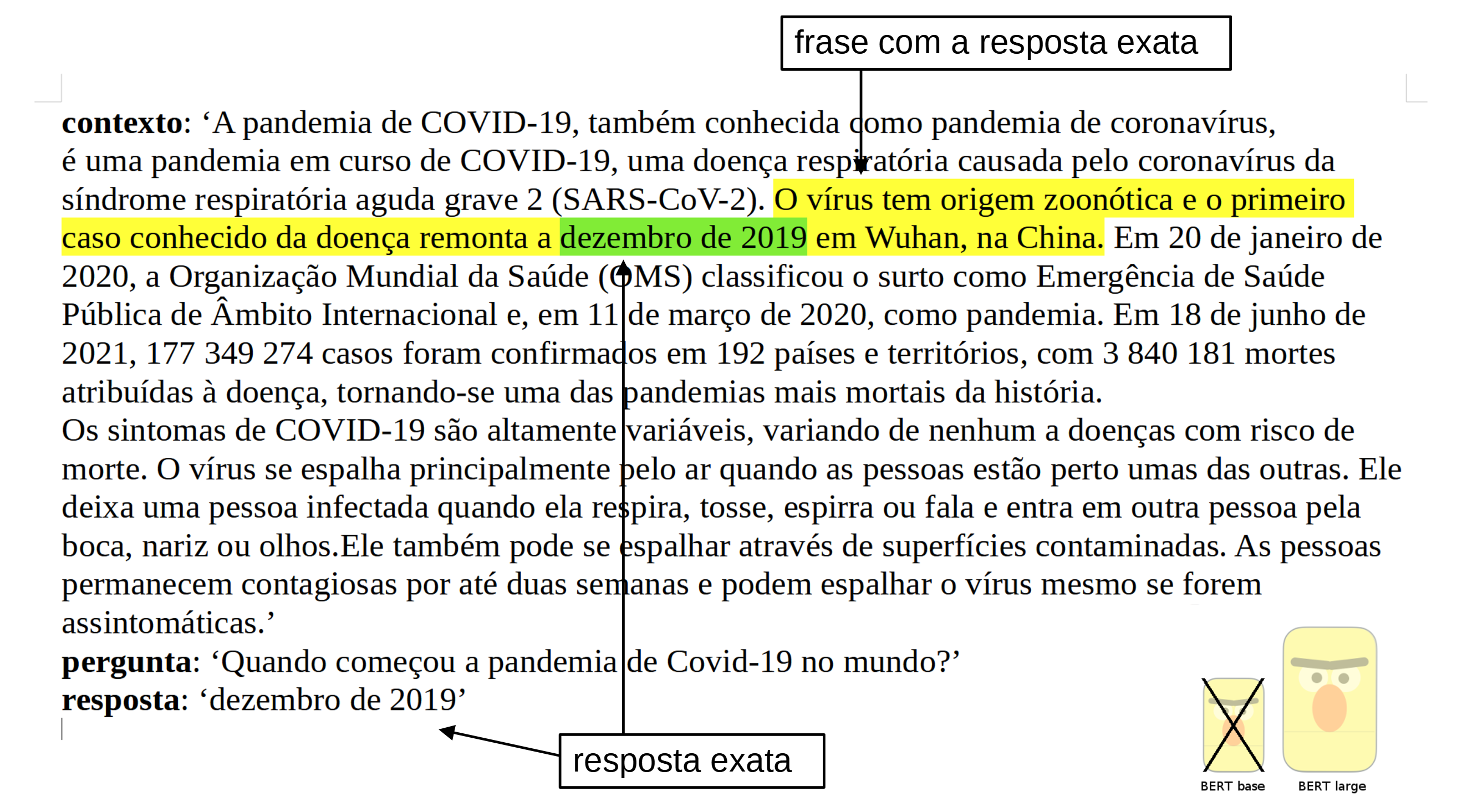

- text: "Quando começou a pandemia de Covid-19 no mundo?"

context: "A pandemia de COVID-19, também conhecida como pandemia de coronavírus, é uma pandemia em curso de COVID-19, uma doença respiratória causada pelo coronavírus da síndrome respiratória aguda grave 2 (SARS-CoV-2). O vírus tem origem zoonótica e o primeiro caso conhecido da doença remonta a dezembro de 2019 em Wuhan, na China."

- text: "Onde foi descoberta a Covid-19?"

context: "A pandemia de COVID-19, também conhecida como pandemia de coronavírus, é uma pandemia em curso de COVID-19, uma doença respiratória causada pelo coronavírus da síndrome respiratória aguda grave 2 (SARS-CoV-2). O vírus tem origem zoonótica e o primeiro caso conhecido da doença remonta a dezembro de 2019 em Wuhan, na China."

---

# Portuguese BERT large cased QA (Question Answering), finetuned on SQUAD v1.1

## Introduction

The model was trained on the dataset SQUAD v1.1 in portuguese from the [Deep Learning Brasil group](http://www.deeplearningbrasil.com.br/).

The language model used is the [BERTimbau Large](https://huggingface.co/neuralmind/bert-large-portuguese-cased) (aka "bert-large-portuguese-cased") from [Neuralmind.ai](https://neuralmind.ai/): BERTimbau is a pretrained BERT model for Brazilian Portuguese that achieves state-of-the-art performances on three downstream NLP tasks: Named Entity Recognition, Sentence Textual Similarity and Recognizing Textual Entailment. It is available in two sizes: Base and Large.

## Informations on the method used

All the informations are in the blog post : [NLP | Como treinar um modelo de Question Answering em qualquer linguagem baseado no BERT large, melhorando o desempenho do modelo utilizando o BERT base? (estudo de caso em português)](https://medium.com/@pierre_guillou/nlp-como-treinar-um-modelo-de-question-answering-em-qualquer-linguagem-baseado-no-bert-large-1c899262dd96)

## Notebook in GitHub

[question_answering_BERT_large_cased_squad_v11_pt.ipynb](https://github.com/piegu/language-models/blob/master/question_answering_BERT_large_cased_squad_v11_pt.ipynb) ([nbviewer version](https://nbviewer.jupyter.org/github/piegu/language-models/blob/master/question_answering_BERT_large_cased_squad_v11_pt.ipynb))

## Performance

The results obtained are the following:

```

f1 = 84.43 (against 82.50 for the base model)

exact match = 72.68 (against 70.49 for the base model)

```

## How to use the model... with Pipeline

```python

import transformers

from transformers import pipeline

# source: https://pt.wikipedia.org/wiki/Pandemia_de_COVID-19

context = r"""

A pandemia de COVID-19, também conhecida como pandemia de coronavírus, é uma pandemia em curso de COVID-19,

uma doença respiratória causada pelo coronavírus da síndrome respiratória aguda grave 2 (SARS-CoV-2).

O vírus tem origem zoonótica e o primeiro caso conhecido da doença remonta a dezembro de 2019 em Wuhan, na China.

Em 20 de janeiro de 2020, a Organização Mundial da Saúde (OMS) classificou o surto

como Emergência de Saúde Pública de Âmbito Internacional e, em 11 de março de 2020, como pandemia.

Em 18 de junho de 2021, 177 349 274 casos foram confirmados em 192 países e territórios,

com 3 840 181 mortes atribuídas à doença, tornando-se uma das pandemias mais mortais da história.

Os sintomas de COVID-19 são altamente variáveis, variando de nenhum a doenças com risco de morte.

O vírus se espalha principalmente pelo ar quando as pessoas estão perto umas das outras.

Ele deixa uma pessoa infectada quando ela respira, tosse, espirra ou fala e entra em outra pessoa pela boca, nariz ou olhos.

Ele também pode se espalhar através de superfícies contaminadas.

As pessoas permanecem contagiosas por até duas semanas e podem espalhar o vírus mesmo se forem assintomáticas.

"""

model_name = 'pierreguillou/bert-large-cased-squad-v1.1-portuguese'

nlp = pipeline("question-answering", model=model_name)

question = "Quando começou a pandemia de Covid-19 no mundo?"

result = nlp(question=question, context=context)

print(f"Answer: '{result['answer']}', score: {round(result['score'], 4)}, start: {result['start']}, end: {result['end']}")

# Answer: 'dezembro de 2019', score: 0.5087, start: 290, end: 306

```

## How to use the model... with the Auto classes

```python

from transformers import AutoTokenizer, AutoModelForQuestionAnswering

tokenizer = AutoTokenizer.from_pretrained("pierreguillou/bert-large-cased-squad-v1.1-portuguese")

model = AutoModelForQuestionAnswering.from_pretrained("pierreguillou/bert-large-cased-squad-v1.1-portuguese")

```

Or just clone the model repo:

```python

git lfs install

git clone https://huggingface.co/pierreguillou/bert-large-cased-squad-v1.1-portuguese

# if you want to clone without large files – just their pointers

# prepend your git clone with the following env var:

GIT_LFS_SKIP_SMUDGE=1

```

## Limitations and bias

The training data used for this model come from Portuguese SQUAD. It could contain a lot of unfiltered content, which is far from neutral, and biases.

## Author

Portuguese BERT large cased QA (Question Answering), finetuned on SQUAD v1.1 was trained and evaluated by [Pierre GUILLOU](https://www.linkedin.com/in/pierreguillou/) thanks to the Open Source code, platforms and advices of many organizations ([link to the list](https://medium.com/@pierre_guillou/nlp-como-treinar-um-modelo-de-question-answering-em-qualquer-linguagem-baseado-no-bert-large-1c899262dd96#c2f5)). In particular: [Hugging Face](https://huggingface.co/), [Neuralmind.ai](https://neuralmind.ai/), [Deep Learning Brasil group](http://www.deeplearningbrasil.com.br/) and [AI Lab](https://ailab.unb.br/).

## Citation

If you use our work, please cite:

```bibtex

@inproceedings{pierreguillou2021bertlargecasedsquadv11portuguese,

title={Portuguese BERT large cased QA (Question Answering), finetuned on SQUAD v1.1},

author={Pierre Guillou},

year={2021}

}

``` |

NeelNanda/Attn_Only_1L512W_C4_Code | NeelNanda | 2022-10-26T14:33:12Z | 1,009 | 1 | transformers | [

"transformers",

"endpoints_compatible",

"region:us"

]

| null | 2022-10-26T14:26:30Z | Entry not found |

liminerity/Blur-7b-v1.22 | liminerity | 2024-03-11T18:32:49Z | 1,009 | 1 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"merge",

"mergekit",

"lazymergekit",

"s3nh/Sonya-Panda-7B-slerp",

"argilla/distilabeled-Marcoro14-7B-slerp",

"Weyaxi/MetaMath-OpenHermes-2.5-neural-chat-v3-3-Slerp",

"base_model:s3nh/Sonya-Panda-7B-slerp",

"base_model:argilla/distilabeled-Marcoro14-7B-slerp",

"base_model:Weyaxi/MetaMath-OpenHermes-2.5-neural-chat-v3-3-Slerp",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-01-18T04:40:21Z | ---

license: apache-2.0

tags:

- merge

- mergekit

- lazymergekit

- s3nh/Sonya-Panda-7B-slerp

- argilla/distilabeled-Marcoro14-7B-slerp

- Weyaxi/MetaMath-OpenHermes-2.5-neural-chat-v3-3-Slerp

base_model:

- s3nh/Sonya-Panda-7B-slerp

- argilla/distilabeled-Marcoro14-7B-slerp

- Weyaxi/MetaMath-OpenHermes-2.5-neural-chat-v3-3-Slerp

model-index:

- name: Blur-7b-v1.22

results:

- task:

type: text-generation

name: Text Generation

dataset:

name: AI2 Reasoning Challenge (25-Shot)

type: ai2_arc

config: ARC-Challenge

split: test

args:

num_few_shot: 25

metrics:

- type: acc_norm

value: 62.29

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=liminerity/Blur-7b-v1.22

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: HellaSwag (10-Shot)

type: hellaswag

split: validation

args:

num_few_shot: 10

metrics:

- type: acc_norm

value: 82.0

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=liminerity/Blur-7b-v1.22

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MMLU (5-Shot)

type: cais/mmlu

config: all

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 58.03

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=liminerity/Blur-7b-v1.22

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: TruthfulQA (0-shot)

type: truthful_qa

config: multiple_choice

split: validation

args:

num_few_shot: 0

metrics:

- type: mc2

value: 68.01

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=liminerity/Blur-7b-v1.22

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: Winogrande (5-shot)

type: winogrande

config: winogrande_xl

split: validation

args:

num_few_shot: 5

metrics:

- type: acc

value: 78.61

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=liminerity/Blur-7b-v1.22

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: GSM8k (5-shot)

type: gsm8k

config: main

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 31.16

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=liminerity/Blur-7b-v1.22

name: Open LLM Leaderboard

---

# Blur-7b-v1.22

Blur-7b-v1.22 is a merge of the following models using [LazyMergekit](https://colab.research.google.com/drive/1obulZ1ROXHjYLn6PPZJwRR6GzgQogxxb?usp=sharing):

* [s3nh/Sonya-Panda-7B-slerp](https://huggingface.co/s3nh/Sonya-Panda-7B-slerp)

* [argilla/distilabeled-Marcoro14-7B-slerp](https://huggingface.co/argilla/distilabeled-Marcoro14-7B-slerp)

* [Weyaxi/MetaMath-OpenHermes-2.5-neural-chat-v3-3-Slerp](https://huggingface.co/Weyaxi/MetaMath-OpenHermes-2.5-neural-chat-v3-3-Slerp)

## 🧩 Configuration

```yaml

models:

- model: s3nh/Sonya-Panda-7B-slerp

parameters:

density: [1, 0.7, 0.1] # density gradient

weight: 1.0

- model: argilla/distilabeled-Marcoro14-7B-slerp

parameters:

density: 0.5

weight: [0, 0.3, 0.7, 1] # weight gradient

- model: Weyaxi/MetaMath-OpenHermes-2.5-neural-chat-v3-3-Slerp

parameters:

density: 0.33

weight:

- filter: mlp

value: 0.5

- value: 0

merge_method: ties

base_model: liminerity/Blur-7b-v1.21

parameters:

normalize: true

int8_mask: true

dtype: bfloat16

```

## 💻 Usage

```python

!pip install -qU transformers accelerate

from transformers import AutoTokenizer

import transformers

import torch

model = "liminerity/Blur-7b-v1.22"

messages = [{"role": "user", "content": "What is a large language model?"}]

tokenizer = AutoTokenizer.from_pretrained(model)

prompt = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

pipeline = transformers.pipeline(

"text-generation",

model=model,

torch_dtype=torch.float16,

device_map="auto",

)

outputs = pipeline(prompt, max_new_tokens=256, do_sample=True, temperature=0.7, top_k=50, top_p=0.95)

print(outputs[0]["generated_text"])

```

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_liminerity__Blur-7b-v1.22)

| Metric |Value|

|---------------------------------|----:|

|Avg. |63.35|

|AI2 Reasoning Challenge (25-Shot)|62.29|

|HellaSwag (10-Shot) |82.00|

|MMLU (5-Shot) |58.03|

|TruthfulQA (0-shot) |68.01|

|Winogrande (5-shot) |78.61|

|GSM8k (5-shot) |31.16|

|

Azazelle/Mocha-SR-7b-ex | Azazelle | 2024-03-23T16:11:31Z | 1,009 | 0 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"mergekit",

"merge",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:WizardLM/WizardMath-7B-V1.1",

"base_model:akjindal53244/Mistral-7B-v0.1-Open-Platypus",

"base_model:Open-Orca/Mistral-7B-OpenOrca",

"license:cc-by-4.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-03-23T15:29:24Z | ---

pipeline_tag: text-generation

base_model:

- mistralai/Mistral-7B-v0.1

- WizardLM/WizardMath-7B-V1.1

- akjindal53244/Mistral-7B-v0.1-Open-Platypus

- Open-Orca/Mistral-7B-OpenOrca

library_name: transformers

tags:

- mergekit

- merge

license: cc-by-4.0

---

# Moko-SR-7b-ex

This is a merge of pre-trained language models created using [mergekit](https://github.com/cg123/mergekit).

## Merge Details

### Merge Method

This model was merged using the rescaled_sample merge method using [mistralai/Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1) as a base.

### Models Merged

The following models were included in the merge:

* [Open-Orca/Mistral-7B-OpenOrca](https://huggingface.co/Open-Orca/Mistral-7B-OpenOrca)

* [WizardLM/WizardMath-7B-V1.1](https://huggingface.co/WizardLM/WizardMath-7B-V1.1)

* [akjindal53244/Mistral-7B-v0.1-Open-Platypus](https://huggingface.co/akjindal53244/Mistral-7B-v0.1-Open-Platypus)

### Configuration

The following YAML configuration was used to produce this model:

```yaml

models:

- model: Open-Orca/Mistral-7B-OpenOrca

parameters:

density: [1, 0.7, 0.1] # density gradient

weight: 1.0

- model: akjindal53244/Mistral-7B-v0.1-Open-Platypus

parameters:

density: 0.5

weight: [0, 0.3, 0.7, 1] # weight gradient

- model: WizardLM/WizardMath-7B-V1.1

parameters:

density: 0.33

weight:

- filter: mlp

value: 0.5

- value: 0

merge_method: rescaled_sample

base_model: mistralai/Mistral-7B-v0.1

parameters:

int8_mask: true

dtype: float16

```

|

statking/zephyr-7b-sft-full-orpo | statking | 2024-05-21T09:00:33Z | 1,009 | 0 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"alignment-handbook",

"trl",

"orpo",

"generated_from_trainer",

"conversational",

"dataset:HuggingFaceH4/ultrafeedback_binarized",

"base_model:mistralai/Mistral-7B-v0.1",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-05-18T16:35:37Z | ---

license: apache-2.0

base_model: mistralai/Mistral-7B-v0.1

tags:

- alignment-handbook

- trl

- orpo

- generated_from_trainer

- trl

- orpo

- alignment-handbook

- generated_from_trainer

datasets:

- HuggingFaceH4/ultrafeedback_binarized

model-index:

- name: zephyr-7b-sft-full-orpo

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

[<img src="https://raw.githubusercontent.com/wandb/assets/main/wandb-github-badge-28.svg" alt="Visualize in Weights & Biases" width="200" height="32"/>](https://wandb.ai/statking/huggingface/runs/90a8kp39)

# zephyr-7b-sft-full-orpo

This model is a fine-tuned version of [mistralai/Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1) on the HuggingFaceH4/ultrafeedback_binarized dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4701

- Rewards/chosen: -0.0364

- Rewards/rejected: -0.0499

- Rewards/accuracies: 0.6587

- Rewards/margins: 0.0135

- Logps/rejected: -0.9978

- Logps/chosen: -0.7282

- Logits/rejected: -2.9263

- Logits/chosen: -2.9434

- Nll Loss: 0.4357

- Log Odds Ratio: -0.6093

- Log Odds Chosen: 0.4456

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 7e-06

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- distributed_type: multi-GPU

- num_devices: 4

- gradient_accumulation_steps: 2

- total_train_batch_size: 64

- total_eval_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: inverse_sqrt

- lr_scheduler_warmup_steps: 100

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rewards/chosen | Rewards/rejected | Rewards/accuracies | Rewards/margins | Logps/rejected | Logps/chosen | Logits/rejected | Logits/chosen | Nll Loss | Log Odds Ratio | Log Odds Chosen |

|:-------------:|:------:|:----:|:---------------:|:--------------:|:----------------:|:------------------:|:---------------:|:--------------:|:------------:|:---------------:|:-------------:|:--------:|:--------------:|:---------------:|

| 0.5226 | 0.1049 | 100 | 0.5280 | -0.0386 | -0.0472 | 0.6329 | 0.0086 | -0.9448 | -0.7728 | -2.7583 | -2.7860 | 0.4953 | -0.6326 | 0.2873 |

| 0.5074 | 0.2098 | 200 | 0.5134 | -0.0381 | -0.0478 | 0.6409 | 0.0098 | -0.9566 | -0.7612 | -2.6736 | -2.7002 | 0.4774 | -0.6357 | 0.3190 |

| 0.5265 | 0.3146 | 300 | 0.5012 | -0.0379 | -0.0479 | 0.6329 | 0.0099 | -0.9572 | -0.7588 | -2.7317 | -2.7594 | 0.4653 | -0.6374 | 0.3278 |

| 0.5194 | 0.4195 | 400 | 0.4912 | -0.0371 | -0.0478 | 0.6429 | 0.0107 | -0.9559 | -0.7417 | -2.6640 | -2.6974 | 0.4560 | -0.6284 | 0.3607 |

| 0.5008 | 0.5244 | 500 | 0.4847 | -0.0373 | -0.0489 | 0.6508 | 0.0117 | -0.9786 | -0.7455 | -2.5957 | -2.6294 | 0.4499 | -0.6209 | 0.3873 |

| 0.4725 | 0.6293 | 600 | 0.4794 | -0.0362 | -0.0470 | 0.6349 | 0.0107 | -0.9394 | -0.7248 | -2.6147 | -2.6477 | 0.4435 | -0.6320 | 0.3567 |

| 0.4875 | 0.7341 | 700 | 0.4767 | -0.0368 | -0.0498 | 0.6409 | 0.0129 | -0.9955 | -0.7365 | -2.6910 | -2.7213 | 0.4416 | -0.6158 | 0.4180 |

| 0.4796 | 0.8390 | 800 | 0.4740 | -0.0371 | -0.0508 | 0.6508 | 0.0137 | -1.0162 | -0.7416 | -2.7913 | -2.8114 | 0.4396 | -0.6169 | 0.4363 |

| 0.4851 | 0.9439 | 900 | 0.4714 | -0.0357 | -0.0466 | 0.6528 | 0.0109 | -0.9324 | -0.7143 | -2.9543 | -2.9692 | 0.4361 | -0.6245 | 0.3669 |

### Framework versions

- Transformers 4.41.0.dev0

- Pytorch 2.3.0+cu121

- Datasets 2.19.1

- Tokenizers 0.19.1

|

John6666/better-than-hentai-xxxl-unreal-pony-mk2-sdxl | John6666 | 2024-06-04T11:23:41Z | 1,009 | 0 | diffusers | [

"diffusers",

"safetensors",

"text-to-image",

"stable-diffusion",

"stable-diffusion-xl",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionXLPipeline",

"region:us"

]

| text-to-image | 2024-06-04T11:18:43Z | ---

license: other

tags:

- text-to-image

- stable-diffusion

- stable-diffusion-xl

---

Original model is [here](https://civitai.com/models/258840/better-than-hentai-xxxl?modelVersionId=544529).

|

Raunak1/EI-Mistral-Q4_0-GGUF | Raunak1 | 2024-06-25T13:07:27Z | 1,009 | 1 | transformers | [

"transformers",

"gguf",

"llama-cpp",

"gguf-my-repo",

"base_model:Raunak1/EI-Mistral",

"endpoints_compatible",

"region:us"

]

| null | 2024-06-25T13:07:05Z | ---

base_model: Raunak1/EI-Mistral

library_name: transformers

tags:

- llama-cpp

- gguf-my-repo

---

# Raunak1/EI-Mistral-Q4_0-GGUF

This model was converted to GGUF format from [`Raunak1/EI-Mistral`](https://huggingface.co/Raunak1/EI-Mistral) using llama.cpp via the ggml.ai's [GGUF-my-repo](https://huggingface.co/spaces/ggml-org/gguf-my-repo) space.

Refer to the [original model card](https://huggingface.co/Raunak1/EI-Mistral) for more details on the model.

## Use with llama.cpp

Install llama.cpp through brew (works on Mac and Linux)

```bash

brew install llama.cpp

```

Invoke the llama.cpp server or the CLI.

### CLI:

```bash

llama-cli --hf-repo Raunak1/EI-Mistral-Q4_0-GGUF --hf-file ei-mistral-q4_0.gguf -p "The meaning to life and the universe is"

```

### Server:

```bash

llama-server --hf-repo Raunak1/EI-Mistral-Q4_0-GGUF --hf-file ei-mistral-q4_0.gguf -c 2048

```

Note: You can also use this checkpoint directly through the [usage steps](https://github.com/ggerganov/llama.cpp?tab=readme-ov-file#usage) listed in the Llama.cpp repo as well.

Step 1: Clone llama.cpp from GitHub.

```

git clone https://github.com/ggerganov/llama.cpp

```

Step 2: Move into the llama.cpp folder and build it with `LLAMA_CURL=1` flag along with other hardware-specific flags (for ex: LLAMA_CUDA=1 for Nvidia GPUs on Linux).

```

cd llama.cpp && LLAMA_CURL=1 make

```

Step 3: Run inference through the main binary.

```

./llama-cli --hf-repo Raunak1/EI-Mistral-Q4_0-GGUF --hf-file ei-mistral-q4_0.gguf -p "The meaning to life and the universe is"

```

or

```

./llama-server --hf-repo Raunak1/EI-Mistral-Q4_0-GGUF --hf-file ei-mistral-q4_0.gguf -c 2048

```

|

monsoon-nlp/hindi-bert | monsoon-nlp | 2023-09-20T22:22:29Z | 1,008 | 16 | transformers | [

"transformers",

"pytorch",

"tf",

"safetensors",

"electra",

"feature-extraction",

"hi",

"doi:10.57967/hf/1305",

"endpoints_compatible",

"region:us"

]

| feature-extraction | 2022-03-02T23:29:05Z | ---

language: hi

---

# Releasing Hindi ELECTRA model

This is a first attempt at a Hindi language model trained with Google Research's [ELECTRA](https://github.com/google-research/electra).

**As of 2022 I recommend Google's MuRIL model trained on English, Hindi, and other major Indian languages, both in their script and latinized script**: https://huggingface.co/google/muril-base-cased and https://huggingface.co/google/muril-large-cased

**For causal language models, I would suggest https://huggingface.co/sberbank-ai/mGPT, though this is a large model**

<a href="https://colab.research.google.com/drive/1R8TciRSM7BONJRBc9CBZbzOmz39FTLl_">Tokenization and training CoLab</a>

I originally used <a href="https://github.com/monsoonNLP/transformers">a modified ELECTRA</a> for finetuning, but now use SimpleTransformers.

<a href="https://medium.com/@mapmeld/teaching-hindi-to-electra-b11084baab81">Blog post</a> - I was greatly influenced by: https://huggingface.co/blog/how-to-train

## Example Notebooks

This small model has comparable results to Multilingual BERT on <a href="https://colab.research.google.com/drive/18FQxp9QGOORhMENafQilEmeAo88pqVtP">BBC Hindi news classification</a>

and on <a href="https://colab.research.google.com/drive/1UYn5Th8u7xISnPUBf72at1IZIm3LEDWN">Hindi movie reviews / sentiment analysis</a> (using SimpleTransformers)

You can get higher accuracy using ktrain by adjusting learning rate (also: changing model_type in config.json - this is an open issue with ktrain): https://colab.research.google.com/drive/1mSeeSfVSOT7e-dVhPlmSsQRvpn6xC05w?usp=sharing

Question-answering on MLQA dataset: https://colab.research.google.com/drive/1i6fidh2tItf_-IDkljMuaIGmEU6HT2Ar#scrollTo=IcFoAHgKCUiQ

A larger model (<a href="https://huggingface.co/monsoon-nlp/hindi-tpu-electra">Hindi-TPU-Electra</a>) using ELECTRA base size outperforms both models on Hindi movie reviews / sentiment analysis, but

does not perform as well on the BBC news classification task.

## Corpus

Download: https://drive.google.com/drive/folders/1SXzisKq33wuqrwbfp428xeu_hDxXVUUu?usp=sharing

The corpus is two files:

- Hindi CommonCrawl deduped by OSCAR https://traces1.inria.fr/oscar/

- latest Hindi Wikipedia ( https://dumps.wikimedia.org/hiwiki/ ) + WikiExtractor to txt

Bonus notes:

- Adding English wiki text or parallel corpus could help with cross-lingual tasks and training

## Vocabulary

https://drive.google.com/file/d/1-6tXrii3tVxjkbrpSJE9MOG_HhbvP66V/view?usp=sharing

Bonus notes:

- Created with HuggingFace Tokenizers; you can increase vocabulary size and re-train; remember to change ELECTRA vocab_size

## Training

Structure your files, with data-dir named "trainer" here

```

trainer

- vocab.txt

- pretrain_tfrecords

-- (all .tfrecord... files)

- models

-- modelname

--- checkpoint

--- graph.pbtxt

--- model.*

```

CoLab notebook gives examples of GPU vs. TPU setup

[configure_pretraining.py](https://github.com/google-research/electra/blob/master/configure_pretraining.py)

## Conversion

Use this process to convert an in-progress or completed ELECTRA checkpoint to a Transformers-ready model:

```

git clone https://github.com/huggingface/transformers

python ./transformers/src/transformers/convert_electra_original_tf_checkpoint_to_pytorch.py

--tf_checkpoint_path=./models/checkpointdir

--config_file=config.json

--pytorch_dump_path=pytorch_model.bin

--discriminator_or_generator=discriminator

python

```

```

from transformers import TFElectraForPreTraining

model = TFElectraForPreTraining.from_pretrained("./dir_with_pytorch", from_pt=True)

model.save_pretrained("tf")

```

Once you have formed one directory with config.json, pytorch_model.bin, tf_model.h5, special_tokens_map.json, tokenizer_config.json, and vocab.txt on the same level, run:

```

transformers-cli upload directory

```

|

Kukedlc/NeuTrixOmniBe-7B-model-remix | Kukedlc | 2024-03-04T13:08:11Z | 1,008 | 0 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"merge",

"mergekit",

"lazymergekit",

"CultriX/NeuralTrix-7B-dpo",

"paulml/OmniBeagleSquaredMBX-v3-7B-v2",

"base_model:CultriX/NeuralTrix-7B-dpo",

"base_model:paulml/OmniBeagleSquaredMBX-v3-7B-v2",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-02-10T23:20:07Z | ---

license: apache-2.0

tags:

- merge

- mergekit

- lazymergekit

- CultriX/NeuralTrix-7B-dpo

- paulml/OmniBeagleSquaredMBX-v3-7B-v2

base_model:

- CultriX/NeuralTrix-7B-dpo

- paulml/OmniBeagleSquaredMBX-v3-7B-v2

model-index:

- name: NeuTrixOmniBe-7B-model-remix

results:

- task:

type: text-generation

name: Text Generation

dataset:

name: AI2 Reasoning Challenge (25-Shot)

type: ai2_arc

config: ARC-Challenge

split: test

args:

num_few_shot: 25

metrics:

- type: acc_norm

value: 72.7

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=Kukedlc/NeuTrixOmniBe-7B-model-remix

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: HellaSwag (10-Shot)

type: hellaswag

split: validation

args:

num_few_shot: 10

metrics:

- type: acc_norm

value: 89.03

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=Kukedlc/NeuTrixOmniBe-7B-model-remix

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MMLU (5-Shot)

type: cais/mmlu

config: all

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 64.57

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=Kukedlc/NeuTrixOmniBe-7B-model-remix

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: TruthfulQA (0-shot)

type: truthful_qa

config: multiple_choice

split: validation

args:

num_few_shot: 0

metrics:

- type: mc2

value: 76.9

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=Kukedlc/NeuTrixOmniBe-7B-model-remix

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: Winogrande (5-shot)

type: winogrande

config: winogrande_xl

split: validation

args:

num_few_shot: 5

metrics:

- type: acc

value: 85.08

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=Kukedlc/NeuTrixOmniBe-7B-model-remix

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: GSM8k (5-shot)

type: gsm8k

config: main

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 69.52

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=Kukedlc/NeuTrixOmniBe-7B-model-remix

name: Open LLM Leaderboard

---

# NOT FOR USE -- BUG IN RESPONSE

# NeuTrixOmniBe-7B-model-remix

NeuTrixOmniBe-7B-model-remix is a merge of the following models using [LazyMergekit](https://colab.research.google.com/drive/1obulZ1ROXHjYLn6PPZJwRR6GzgQogxxb?usp=sharing):

* [CultriX/NeuralTrix-7B-dpo](https://huggingface.co/CultriX/NeuralTrix-7B-dpo)

* [paulml/OmniBeagleSquaredMBX-v3-7B-v2](https://huggingface.co/paulml/OmniBeagleSquaredMBX-v3-7B-v2)

## 🧩 Configuration

```yaml

slices:

- sources:

- model: CultriX/NeuralTrix-7B-dpo

layer_range: [0, 32]

- model: paulml/OmniBeagleSquaredMBX-v3-7B-v2

layer_range: [0, 32]

merge_method: slerp

base_model: CultriX/NeuralTrix-7B-dpo

parameters:

t:

- filter: self_attn

value: [0, 0.5, 0.3, 0.7, 1]

- filter: mlp

value: [1, 0.5, 0.7, 0.3, 0]

- value: 0.5

dtype: bfloat16

```

## 💻 Usage

```python

!pip install -qU transformers accelerate

from transformers import AutoTokenizer

import transformers

import torch

model = "Kukedlc/NeuTrixOmniBe-7B-model-remix"

messages = [{"role": "user", "content": "What is a large language model?"}]

tokenizer = AutoTokenizer.from_pretrained(model)

prompt = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

pipeline = transformers.pipeline(

"text-generation",

model=model,

torch_dtype=torch.float16,

device_map="auto",

)

outputs = pipeline(prompt, max_new_tokens=256, do_sample=True, temperature=0.7, top_k=50, top_p=0.95)

print(outputs[0]["generated_text"])

```

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_Kukedlc__NeuTrixOmniBe-7B-model-remix)

| Metric |Value|

|---------------------------------|----:|

|Avg. |76.30|

|AI2 Reasoning Challenge (25-Shot)|72.70|

|HellaSwag (10-Shot) |89.03|

|MMLU (5-Shot) |64.57|

|TruthfulQA (0-shot) |76.90|

|Winogrande (5-shot) |85.08|

|GSM8k (5-shot) |69.52|

|

ChaoticNeutrals/Kool-Aid_7B | ChaoticNeutrals | 2024-03-15T20:32:50Z | 1,008 | 4 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"mergekit",

"merge",

"en",

"license:other",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-03-15T05:14:01Z | ---

language:

- en

license: other

library_name: transformers

tags:

- mergekit

- merge

base_model: []

model-index:

- name: Kool-Aid_7B

results:

- task:

type: text-generation

name: Text Generation

dataset:

name: AI2 Reasoning Challenge (25-Shot)

type: ai2_arc

config: ARC-Challenge

split: test

args:

num_few_shot: 25

metrics:

- type: acc_norm

value: 67.49

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=ChaoticNeutrals/Kool-Aid_7B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: HellaSwag (10-Shot)

type: hellaswag

split: validation

args:

num_few_shot: 10

metrics:

- type: acc_norm

value: 86.13

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=ChaoticNeutrals/Kool-Aid_7B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MMLU (5-Shot)

type: cais/mmlu

config: all

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 63.82

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=ChaoticNeutrals/Kool-Aid_7B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: TruthfulQA (0-shot)

type: truthful_qa

config: multiple_choice

split: validation

args:

num_few_shot: 0

metrics:

- type: mc2

value: 65.12

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=ChaoticNeutrals/Kool-Aid_7B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: Winogrande (5-shot)

type: winogrande

config: winogrande_xl

split: validation

args:

num_few_shot: 5

metrics:

- type: acc

value: 81.37

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=ChaoticNeutrals/Kool-Aid_7B

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: GSM8k (5-shot)

type: gsm8k

config: main

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 54.21

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=ChaoticNeutrals/Kool-Aid_7B

name: Open LLM Leaderboard

---

# Kool-Aid

This is a merge of pre-trained language models created using [mergekit](https://github.com/cg123/mergekit).

## Merge Details

### Merge Method

This model was merged using the SLERP merge method.

### Models Merged

The following models were included in the merge:

* ErosEris

* CookieNexus

### Configuration

The following YAML configuration was used to produce this model:

```yaml

slices:

- sources:

- model: CookieNexus

layer_range: [0, 32]

- model: ErosEris

layer_range: [0, 32]

merge_method: slerp

base_model: CookieNexus

parameters:

t:

- filter: self_attn

value: [0, 0.5, 0.3, 0.7, 1]

- filter: mlp

value: [1, 0.5, 0.7, 0.3, 0]

- value: 0.5

dtype: bfloat16

```

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_ChaoticNeutrals__Kool-Aid_7B)

| Metric |Value|

|---------------------------------|----:|

|Avg. |69.69|

|AI2 Reasoning Challenge (25-Shot)|67.49|

|HellaSwag (10-Shot) |86.13|

|MMLU (5-Shot) |63.82|

|TruthfulQA (0-shot) |65.12|

|Winogrande (5-shot) |81.37|

|GSM8k (5-shot) |54.21|

|

Vikhrmodels/it-5.2-fp16-cp | Vikhrmodels | 2024-05-29T08:21:08Z | 1,008 | 6 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"conversational",

"ru",

"en",

"arxiv:2405.13929",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-05-14T19:10:54Z | ---

library_name: transformers

language:

- ru

- en

license: apache-2.0

---

# Релиз вихря 0.5

Долили сильно больше данных в sft, теперь стабильнее работает json и multiturn, слегка подточили параметры претрена модели

Added a lot more data to sft, now json and multiturn work more stable on long context and hard prompts

- [Google Colab](https://colab.research.google.com/drive/1-_BWsJycBm3rEyjpBx2_ejshpemQYHbe?usp=sharing)

- [GGUF](https://huggingface.co/Vikhrmodels/it-5.2-fp16-cp-GGUF)

```python

from transformers import AutoTokenizer, AutoModelForCausalLM

import torch

model = AutoModelForCausalLM.from_pretrained("Vikhrmodels/it-5.2-fp16-cp",

device_map="auto",

attn_implementation="sdpa",

torch_dtype=torch.bfloat16)

tokenizer = AutoTokenizer.from_pretrained("Vikhrmodels/it-5.2-fp16-cp")

from transformers import AutoTokenizer, pipeline

pipe = pipeline("text-generation", model=model, tokenizer=tokenizer)

prompts = [

"В чем разница между фруктом и овощем?",

"Годы жизни колмагорова?"]

def test_inference(prompt):

prompt = pipe.tokenizer.apply_chat_template([{"role": "user", "content": prompt}], tokenize=False, add_generation_prompt=True)

print(prompt)

outputs = pipe(prompt, max_new_tokens=512, do_sample=True, num_beams=1, temperature=0.25, top_k=50, top_p=0.98, eos_token_id=79097)

return outputs[0]['generated_text'][len(prompt):].strip()

for prompt in prompts:

print(f" prompt:\n{prompt}")

print(f" response:\n{test_inference(prompt)}")

print("-"*50)

```

```

@article{nikolich2024vikhr,

title={Vikhr: The Family of Open-Source Instruction-Tuned Large Language Models for Russian},

author={Aleksandr Nikolich and Konstantin Korolev and Artem Shelmanov},

journal={arXiv preprint arXiv:2405.13929},

year={2024},

url={https://arxiv.org/pdf/2405.13929}

}

``` |

OpenBuddy/openbuddy-yi1.5-34b-v21.3-32k | OpenBuddy | 2024-06-06T00:59:41Z | 1,008 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"mixtral",

"conversational",

"zh",

"en",

"fr",

"de",

"ja",

"ko",

"it",

"ru",

"fi",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-06-05T12:14:55Z | ---

license: apache-2.0

language:

- zh

- en

- fr

- de

- ja

- ko

- it

- ru

- fi

pipeline_tag: text-generation

inference: false

library_name: transformers

tags:

- mixtral

---

# OpenBuddy - Open Multilingual Chatbot

GitHub and Usage Guide: [https://github.com/OpenBuddy/OpenBuddy](https://github.com/OpenBuddy/OpenBuddy)

Website and Demo: [https://openbuddy.ai](https://openbuddy.ai)

Evaluation result of this model: [Evaluation.txt](Evaluation.txt)

# Copyright Notice

Base model: https://huggingface.co/01-ai/Yi-1.5-34B-32K

License: Apache 2.0

# Prompt Format

We recommend using the fast tokenizer from `transformers`, which should be enabled by default in the `transformers` and `vllm` libraries. Other implementations including `sentencepiece` may not work as expected, especially for special tokens like `<|role|>`, `<|says|>` and `<|end|>`.

```

<|role|>system<|says|>You(assistant) are a helpful, respectful and honest INTP-T AI Assistant named Buddy. You are talking to a human(user).

Always answer as helpfully and logically as possible, while being safe. Your answers should not include any harmful, political, religious, unethical, racist, sexist, toxic, dangerous, or illegal content. Please ensure that your responses are socially unbiased and positive in nature.

You cannot access the internet, but you have vast knowledge, cutoff: 2023-04.

You are trained by OpenBuddy team, (https://openbuddy.ai, https://github.com/OpenBuddy/OpenBuddy), not related to GPT or OpenAI.<|end|>

<|role|>user<|says|>History input 1<|end|>

<|role|>assistant<|says|>History output 1<|end|>

<|role|>user<|says|>History input 2<|end|>

<|role|>assistant<|says|>History output 2<|end|>

<|role|>user<|says|>Current input<|end|>

<|role|>assistant<|says|>

```

This format is also defined in `tokenizer_config.json`, which means you can directly use `vllm` to deploy an OpenAI-like API service. For more information, please refer to the [vllm documentation](https://docs.vllm.ai/en/latest/serving/openai_compatible_server.html).

## Disclaimer

All OpenBuddy models have inherent limitations and may potentially produce outputs that are erroneous, harmful, offensive, or otherwise undesirable. Users should not use these models in critical or high-stakes situations that may lead to personal injury, property damage, or significant losses. Examples of such scenarios include, but are not limited to, the medical field, controlling software and hardware systems that may cause harm, and making important financial or legal decisions.

OpenBuddy is provided "as-is" without any warranty of any kind, either express or implied, including, but not limited to, the implied warranties of merchantability, fitness for a particular purpose, and non-infringement. In no event shall the authors, contributors, or copyright holders be liable for any claim, damages, or other liabilities, whether in an action of contract, tort, or otherwise, arising from, out of, or in connection with the software or the use or other dealings in the software.

By using OpenBuddy, you agree to these terms and conditions, and acknowledge that you understand the potential risks associated with its use. You also agree to indemnify and hold harmless the authors, contributors, and copyright holders from any claims, damages, or liabilities arising from your use of OpenBuddy.

## 免责声明

所有OpenBuddy模型均存在固有的局限性,可能产生错误的、有害的、冒犯性的或其他不良的输出。用户在关键或高风险场景中应谨慎行事,不要使用这些模型,以免导致人身伤害、财产损失或重大损失。此类场景的例子包括但不限于医疗领域、可能导致伤害的软硬件系统的控制以及进行重要的财务或法律决策。

OpenBuddy按“原样”提供,不附带任何种类的明示或暗示的保证,包括但不限于适销性、特定目的的适用性和非侵权的暗示保证。在任何情况下,作者、贡献者或版权所有者均不对因软件或使用或其他软件交易而产生的任何索赔、损害赔偿或其他责任(无论是合同、侵权还是其他原因)承担责任。

使用OpenBuddy即表示您同意这些条款和条件,并承认您了解其使用可能带来的潜在风险。您还同意赔偿并使作者、贡献者和版权所有者免受因您使用OpenBuddy而产生的任何索赔、损害赔偿或责任的影响。 |

njaana/phi3-mini-demo-fine-tune-model | njaana | 2024-06-12T09:37:28Z | 1,008 | 0 | transformers | [

"transformers",

"gguf",

"mistral",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| null | 2024-06-12T04:41:57Z | Entry not found |

timm/convnextv2_large.fcmae_ft_in22k_in1k_384 | timm | 2024-02-10T23:29:26Z | 1,007 | 0 | timm | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2301.00808",

"license:cc-by-nc-4.0",

"region:us"

]

| image-classification | 2023-01-05T01:54:26Z | ---

license: cc-by-nc-4.0

library_name: timm

tags:

- image-classification

- timm

datasets:

- imagenet-1k

- imagenet-1k

---

# Model card for convnextv2_large.fcmae_ft_in22k_in1k_384

A ConvNeXt-V2 image classification model. Pretrained with a fully convolutional masked autoencoder framework (FCMAE) and fine-tuned on ImageNet-22k and then ImageNet-1k.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 198.0

- GMACs: 101.1

- Activations (M): 126.7

- Image size: 384 x 384

- **Papers:**

- ConvNeXt V2: Co-designing and Scaling ConvNets with Masked Autoencoders: https://arxiv.org/abs/2301.00808

- **Original:** https://github.com/facebookresearch/ConvNeXt-V2

- **Dataset:** ImageNet-1k

- **Pretrain Dataset:** ImageNet-1k

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('convnextv2_large.fcmae_ft_in22k_in1k_384', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'convnextv2_large.fcmae_ft_in22k_in1k_384',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

# print shape of each feature map in output

# e.g.:

# torch.Size([1, 192, 96, 96])

# torch.Size([1, 384, 48, 48])

# torch.Size([1, 768, 24, 24])

# torch.Size([1, 1536, 12, 12])

print(o.shape)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'convnextv2_large.fcmae_ft_in22k_in1k_384',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 1536, 12, 12) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

All timing numbers from eager model PyTorch 1.13 on RTX 3090 w/ AMP.

| model |top1 |top5 |img_size|param_count|gmacs |macts |samples_per_sec|batch_size|

|------------------------------------------------------------------------------------------------------------------------------|------|------|--------|-----------|------|------|---------------|----------|

| [convnextv2_huge.fcmae_ft_in22k_in1k_512](https://huggingface.co/timm/convnextv2_huge.fcmae_ft_in22k_in1k_512) |88.848|98.742|512 |660.29 |600.81|413.07|28.58 |48 |

| [convnextv2_huge.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_huge.fcmae_ft_in22k_in1k_384) |88.668|98.738|384 |660.29 |337.96|232.35|50.56 |64 |

| [convnext_xxlarge.clip_laion2b_soup_ft_in1k](https://huggingface.co/timm/convnext_xxlarge.clip_laion2b_soup_ft_in1k) |88.612|98.704|256 |846.47 |198.09|124.45|122.45 |256 |

| [convnext_large_mlp.clip_laion2b_soup_ft_in12k_in1k_384](https://huggingface.co/timm/convnext_large_mlp.clip_laion2b_soup_ft_in12k_in1k_384) |88.312|98.578|384 |200.13 |101.11|126.74|196.84 |256 |

| [convnextv2_large.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_large.fcmae_ft_in22k_in1k_384) |88.196|98.532|384 |197.96 |101.1 |126.74|128.94 |128 |

| [convnext_large_mlp.clip_laion2b_soup_ft_in12k_in1k_320](https://huggingface.co/timm/convnext_large_mlp.clip_laion2b_soup_ft_in12k_in1k_320) |87.968|98.47 |320 |200.13 |70.21 |88.02 |283.42 |256 |

| [convnext_xlarge.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_xlarge.fb_in22k_ft_in1k_384) |87.75 |98.556|384 |350.2 |179.2 |168.99|124.85 |192 |

| [convnextv2_base.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_base.fcmae_ft_in22k_in1k_384) |87.646|98.422|384 |88.72 |45.21 |84.49 |209.51 |256 |

| [convnext_large.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_large.fb_in22k_ft_in1k_384) |87.476|98.382|384 |197.77 |101.1 |126.74|194.66 |256 |

| [convnext_large_mlp.clip_laion2b_augreg_ft_in1k](https://huggingface.co/timm/convnext_large_mlp.clip_laion2b_augreg_ft_in1k) |87.344|98.218|256 |200.13 |44.94 |56.33 |438.08 |256 |

| [convnextv2_large.fcmae_ft_in22k_in1k](https://huggingface.co/timm/convnextv2_large.fcmae_ft_in22k_in1k) |87.26 |98.248|224 |197.96 |34.4 |43.13 |376.84 |256 |

| [convnext_base.clip_laion2b_augreg_ft_in12k_in1k_384](https://huggingface.co/timm/convnext_base.clip_laion2b_augreg_ft_in12k_in1k_384) |87.138|98.212|384 |88.59 |45.21 |84.49 |365.47 |256 |

| [convnext_xlarge.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_xlarge.fb_in22k_ft_in1k) |87.002|98.208|224 |350.2 |60.98 |57.5 |368.01 |256 |

| [convnext_base.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_base.fb_in22k_ft_in1k_384) |86.796|98.264|384 |88.59 |45.21 |84.49 |366.54 |256 |

| [convnextv2_base.fcmae_ft_in22k_in1k](https://huggingface.co/timm/convnextv2_base.fcmae_ft_in22k_in1k) |86.74 |98.022|224 |88.72 |15.38 |28.75 |624.23 |256 |

| [convnext_large.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_large.fb_in22k_ft_in1k) |86.636|98.028|224 |197.77 |34.4 |43.13 |581.43 |256 |

| [convnext_base.clip_laiona_augreg_ft_in1k_384](https://huggingface.co/timm/convnext_base.clip_laiona_augreg_ft_in1k_384) |86.504|97.97 |384 |88.59 |45.21 |84.49 |368.14 |256 |

| [convnext_base.clip_laion2b_augreg_ft_in12k_in1k](https://huggingface.co/timm/convnext_base.clip_laion2b_augreg_ft_in12k_in1k) |86.344|97.97 |256 |88.59 |20.09 |37.55 |816.14 |256 |

| [convnextv2_huge.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_huge.fcmae_ft_in1k) |86.256|97.75 |224 |660.29 |115.0 |79.07 |154.72 |256 |

| [convnext_small.in12k_ft_in1k_384](https://huggingface.co/timm/convnext_small.in12k_ft_in1k_384) |86.182|97.92 |384 |50.22 |25.58 |63.37 |516.19 |256 |

| [convnext_base.clip_laion2b_augreg_ft_in1k](https://huggingface.co/timm/convnext_base.clip_laion2b_augreg_ft_in1k) |86.154|97.68 |256 |88.59 |20.09 |37.55 |819.86 |256 |

| [convnext_base.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_base.fb_in22k_ft_in1k) |85.822|97.866|224 |88.59 |15.38 |28.75 |1037.66 |256 |

| [convnext_small.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_small.fb_in22k_ft_in1k_384) |85.778|97.886|384 |50.22 |25.58 |63.37 |518.95 |256 |

| [convnextv2_large.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_large.fcmae_ft_in1k) |85.742|97.584|224 |197.96 |34.4 |43.13 |375.23 |256 |

| [convnext_small.in12k_ft_in1k](https://huggingface.co/timm/convnext_small.in12k_ft_in1k) |85.174|97.506|224 |50.22 |8.71 |21.56 |1474.31 |256 |

| [convnext_tiny.in12k_ft_in1k_384](https://huggingface.co/timm/convnext_tiny.in12k_ft_in1k_384) |85.118|97.608|384 |28.59 |13.14 |39.48 |856.76 |256 |

| [convnextv2_tiny.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_tiny.fcmae_ft_in22k_in1k_384) |85.112|97.63 |384 |28.64 |13.14 |39.48 |491.32 |256 |

| [convnextv2_base.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_base.fcmae_ft_in1k) |84.874|97.09 |224 |88.72 |15.38 |28.75 |625.33 |256 |

| [convnext_small.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_small.fb_in22k_ft_in1k) |84.562|97.394|224 |50.22 |8.71 |21.56 |1478.29 |256 |

| [convnext_large.fb_in1k](https://huggingface.co/timm/convnext_large.fb_in1k) |84.282|96.892|224 |197.77 |34.4 |43.13 |584.28 |256 |

| [convnext_tiny.in12k_ft_in1k](https://huggingface.co/timm/convnext_tiny.in12k_ft_in1k) |84.186|97.124|224 |28.59 |4.47 |13.44 |2433.7 |256 |

| [convnext_tiny.fb_in22k_ft_in1k_384](https://huggingface.co/timm/convnext_tiny.fb_in22k_ft_in1k_384) |84.084|97.14 |384 |28.59 |13.14 |39.48 |862.95 |256 |

| [convnextv2_tiny.fcmae_ft_in22k_in1k](https://huggingface.co/timm/convnextv2_tiny.fcmae_ft_in22k_in1k) |83.894|96.964|224 |28.64 |4.47 |13.44 |1452.72 |256 |

| [convnext_base.fb_in1k](https://huggingface.co/timm/convnext_base.fb_in1k) |83.82 |96.746|224 |88.59 |15.38 |28.75 |1054.0 |256 |

| [convnextv2_nano.fcmae_ft_in22k_in1k_384](https://huggingface.co/timm/convnextv2_nano.fcmae_ft_in22k_in1k_384) |83.37 |96.742|384 |15.62 |7.22 |24.61 |801.72 |256 |

| [convnext_small.fb_in1k](https://huggingface.co/timm/convnext_small.fb_in1k) |83.142|96.434|224 |50.22 |8.71 |21.56 |1464.0 |256 |

| [convnextv2_tiny.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_tiny.fcmae_ft_in1k) |82.92 |96.284|224 |28.64 |4.47 |13.44 |1425.62 |256 |

| [convnext_tiny.fb_in22k_ft_in1k](https://huggingface.co/timm/convnext_tiny.fb_in22k_ft_in1k) |82.898|96.616|224 |28.59 |4.47 |13.44 |2480.88 |256 |

| [convnext_nano.in12k_ft_in1k](https://huggingface.co/timm/convnext_nano.in12k_ft_in1k) |82.282|96.344|224 |15.59 |2.46 |8.37 |3926.52 |256 |

| [convnext_tiny_hnf.a2h_in1k](https://huggingface.co/timm/convnext_tiny_hnf.a2h_in1k) |82.216|95.852|224 |28.59 |4.47 |13.44 |2529.75 |256 |

| [convnext_tiny.fb_in1k](https://huggingface.co/timm/convnext_tiny.fb_in1k) |82.066|95.854|224 |28.59 |4.47 |13.44 |2346.26 |256 |

| [convnextv2_nano.fcmae_ft_in22k_in1k](https://huggingface.co/timm/convnextv2_nano.fcmae_ft_in22k_in1k) |82.03 |96.166|224 |15.62 |2.46 |8.37 |2300.18 |256 |

| [convnextv2_nano.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_nano.fcmae_ft_in1k) |81.83 |95.738|224 |15.62 |2.46 |8.37 |2321.48 |256 |

| [convnext_nano_ols.d1h_in1k](https://huggingface.co/timm/convnext_nano_ols.d1h_in1k) |80.866|95.246|224 |15.65 |2.65 |9.38 |3523.85 |256 |

| [convnext_nano.d1h_in1k](https://huggingface.co/timm/convnext_nano.d1h_in1k) |80.768|95.334|224 |15.59 |2.46 |8.37 |3915.58 |256 |

| [convnextv2_pico.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_pico.fcmae_ft_in1k) |80.304|95.072|224 |9.07 |1.37 |6.1 |3274.57 |256 |

| [convnext_pico.d1_in1k](https://huggingface.co/timm/convnext_pico.d1_in1k) |79.526|94.558|224 |9.05 |1.37 |6.1 |5686.88 |256 |

| [convnext_pico_ols.d1_in1k](https://huggingface.co/timm/convnext_pico_ols.d1_in1k) |79.522|94.692|224 |9.06 |1.43 |6.5 |5422.46 |256 |

| [convnextv2_femto.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_femto.fcmae_ft_in1k) |78.488|93.98 |224 |5.23 |0.79 |4.57 |4264.2 |256 |

| [convnext_femto_ols.d1_in1k](https://huggingface.co/timm/convnext_femto_ols.d1_in1k) |77.86 |93.83 |224 |5.23 |0.82 |4.87 |6910.6 |256 |

| [convnext_femto.d1_in1k](https://huggingface.co/timm/convnext_femto.d1_in1k) |77.454|93.68 |224 |5.22 |0.79 |4.57 |7189.92 |256 |

| [convnextv2_atto.fcmae_ft_in1k](https://huggingface.co/timm/convnextv2_atto.fcmae_ft_in1k) |76.664|93.044|224 |3.71 |0.55 |3.81 |4728.91 |256 |

| [convnext_atto_ols.a2_in1k](https://huggingface.co/timm/convnext_atto_ols.a2_in1k) |75.88 |92.846|224 |3.7 |0.58 |4.11 |7963.16 |256 |

| [convnext_atto.d2_in1k](https://huggingface.co/timm/convnext_atto.d2_in1k) |75.664|92.9 |224 |3.7 |0.55 |3.81 |8439.22 |256 |

## Citation

```bibtex

@article{Woo2023ConvNeXtV2,

title={ConvNeXt V2: Co-designing and Scaling ConvNets with Masked Autoencoders},

author={Sanghyun Woo, Shoubhik Debnath, Ronghang Hu, Xinlei Chen, Zhuang Liu, In So Kweon and Saining Xie},

year={2023},

journal={arXiv preprint arXiv:2301.00808},

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

|

timm/swinv2_small_window16_256.ms_in1k | timm | 2024-02-10T23:31:11Z | 1,007 | 0 | timm | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2111.09883",

"license:mit",

"region:us"

]

| image-classification | 2023-03-18T03:36:57Z | ---

license: mit

library_name: timm

tags:

- image-classification

- timm

datasets:

- imagenet-1k

---

# Model card for swinv2_small_window16_256.ms_in1k

A Swin Transformer V2 image classification model. Pretrained on ImageNet-1k by paper authors.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 49.7

- GMACs: 12.8

- Activations (M): 66.3

- Image size: 256 x 256

- **Papers:**

- Swin Transformer V2: Scaling Up Capacity and Resolution: https://arxiv.org/abs/2111.09883

- **Original:** https://github.com/microsoft/Swin-Transformer

- **Dataset:** ImageNet-1k

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('swinv2_small_window16_256.ms_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'swinv2_small_window16_256.ms_in1k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

# print shape of each feature map in output

# e.g. for swin_base_patch4_window7_224 (NHWC output)

# torch.Size([1, 56, 56, 128])

# torch.Size([1, 28, 28, 256])

# torch.Size([1, 14, 14, 512])

# torch.Size([1, 7, 7, 1024])

# e.g. for swinv2_cr_small_ns_224 (NCHW output)

# torch.Size([1, 96, 56, 56])

# torch.Size([1, 192, 28, 28])

# torch.Size([1, 384, 14, 14])

# torch.Size([1, 768, 7, 7])

print(o.shape)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'swinv2_small_window16_256.ms_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled (ie.e a (batch_size, H, W, num_features) tensor for swin / swinv2

# or (batch_size, num_features, H, W) for swinv2_cr

output = model.forward_head(output, pre_logits=True)

# output is (batch_size, num_features) tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@inproceedings{liu2021swinv2,

title={Swin Transformer V2: Scaling Up Capacity and Resolution},

author={Ze Liu and Han Hu and Yutong Lin and Zhuliang Yao and Zhenda Xie and Yixuan Wei and Jia Ning and Yue Cao and Zheng Zhang and Li Dong and Furu Wei and Baining Guo},

booktitle={International Conference on Computer Vision and Pattern Recognition (CVPR)},

year={2022}

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

|

Quake24/easyTermsSummerizer | Quake24 | 2023-04-22T11:15:07Z | 1,007 | 1 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"bart",

"text2text-generation",

"summarization",

"generated_from_trainer",

"en",

"dataset:Quake24/paraphrasedPayPal",

"dataset:Quake24/paraphrasedTwitter",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| summarization | 2023-04-22T11:05:20Z | ---

license: apache-2.0

tags:

- summarization

- generated_from_trainer

metrics:

- rouge

model-index:

- name: easyTermsSummerizer

results: []

datasets:

- Quake24/paraphrasedPayPal

- Quake24/paraphrasedTwitter

language:

- en

library_name: transformers

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# easyTermsSummerizer

This model is a fine-tuned version of [sshleifer/distilbart-cnn-12-6](https://huggingface.co/sshleifer/distilbart-cnn-12-6) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.8124

- Rouge1: 0.7533

- Rouge2: 0.6964

- Rougel: 0.6806

- Rougelsum: 0.6793

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum |

|:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|:---------:|

| No log | 1.0 | 2 | 2.2083 | 0.7332 | 0.6595 | 0.6374 | 0.6376 |

| No log | 2.0 | 4 | 1.9331 | 0.7776 | 0.7268 | 0.6991 | 0.7005 |

| No log | 3.0 | 6 | 1.8124 | 0.7533 | 0.6964 | 0.6806 | 0.6793 |

### Framework versions

- Transformers 4.27.3

- Pytorch 1.13.0

- Datasets 2.1.0

- Tokenizers 0.13.2 |

digiplay/fantexi_v0.9 | digiplay | 2023-10-01T00:48:08Z | 1,007 | 1 | diffusers | [

"diffusers",

"safetensors",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

]

| text-to-image | 2023-08-20T16:51:06Z | ---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

Model info :

https://civitai.com/models/131601?modelVersionId=144665

|

Labbeti/conette | Labbeti | 2023-11-09T16:50:29Z | 1,007 | 0 | transformers | [

"transformers",

"pytorch",

"audio",

"captioning",

"text",

"audio-captioning",

"automated-audio-captioning",

"en",

"arxiv:2309.00454",

"license:mit",

"endpoints_compatible",

"region:us"

]

| null | 2023-09-26T16:51:40Z | ---

language: en

license: mit

tags:

- audio

- captioning

- text

- audio-captioning

- automated-audio-captioning

model_name: CoNeTTE

task_categories:

- audio-captioning

---

<div align="center">

# CoNeTTE model for Audio Captioning

</div>

CoNeTTE is an audio captioning system, which generate a short textual description of the sound events in any audio file. The architecture and training are explained in the corresponding [paper](https://arxiv.org/pdf/2309.00454.pdf). The model has been developped by me ([Étienne Labbé](https://labbeti.github.io/)) during my PhD.

## Installation

```bash

python -m pip install conette

```

## Usage with python

```py

from conette import CoNeTTEConfig, CoNeTTEModel

config = CoNeTTEConfig.from_pretrained("Labbeti/conette")

model = CoNeTTEModel.from_pretrained("Labbeti/conette", config=config)

path = "/your/path/to/audio.wav"

outputs = model(path)

candidate = outputs["cands"][0]

print(candidate)

```

The model can also accept several audio files at the same time (list[str]), or a list of pre-loaded audio files (list[Tensor]). In this second case you also need to provide the sampling rate of this files:

```py

import torchaudio

path_1 = "/your/path/to/audio_1.wav"

path_2 = "/your/path/to/audio_2.wav"

audio_1, sr_1 = torchaudio.load(path_1)

audio_2, sr_2 = torchaudio.load(path_2)

outputs = model([audio_1, audio_2], sr=[sr_1, sr_2])

candidates = outputs["cands"]

print(candidates)

```

The model can also produces different captions using a Task Embedding input which indicates the dataset caption style. The default task is "clotho".

```py

outputs = model(path, task="clotho")

candidate = outputs["cands"][0]

print(candidate)

outputs = model(path, task="audiocaps")

candidate = outputs["cands"][0]

print(candidate)

```

## Usage with command line

Simply use the command `conette-predict` with `--audio PATH1 PATH2 ...` option. You can also export results to a CSV file using `--csv_export PATH`.

```bash

conette-predict --audio "/your/path/to/audio.wav"

```

## Performance

| Test data | SPIDEr (%) | SPIDEr-FL (%) | FENSE (%) | Vocab | Outputs | Scores |

| ------------- | ------------- | ------------- | ------------- | ------------- | ------------- | ------------- |

| AC-test | 44.14 | 43.98 | 60.81 | 309 | [Link](https://github.com/Labbeti/conette-audio-captioning/blob/main/results/conette/outputs_audiocaps_test.csv) | [Link](https://github.com/Labbeti/conette-audio-captioning/blob/main/results/conette/scores_audiocaps_test.yaml) |

| CL-eval | 30.97 | 30.87 | 51.72 | 636 | [Link](https://github.com/Labbeti/conette-audio-captioning/blob/main/results/conette/outputs_clotho_eval.csv) | [Link](https://github.com/Labbeti/conette-audio-captioning/blob/main/results/conette/scores_clotho_eval.yaml) |

This model checkpoint has been trained for the Clotho dataset, but it can also reach a good performance on AudioCaps with the "audiocaps" task.

## Limitations

- The model expected audio sampled at 32 kHz. The model automatically resample up or down the input audio files. However, it might give worse results, especially when using audio with lower sampling rates.

- The model has been trained on audio lasting from 1 to 30 seconds. It can handle longer audio files, but it might require more memory and give worse results.

## Citation

The preprint version of the paper describing CoNeTTE is available on arxiv: https://arxiv.org/pdf/2309.00454.pdf

```bibtex

@misc{labbé2023conette,

title = {CoNeTTE: An efficient Audio Captioning system leveraging multiple datasets with Task Embedding},

author = {Étienne Labbé and Thomas Pellegrini and Julien Pinquier},

year = 2023,

journal = {arXiv preprint arXiv:2309.00454},

url = {https://arxiv.org/pdf/2309.00454.pdf},

eprint = {2309.00454},

archiveprefix = {arXiv},

primaryclass = {cs.SD}

}

```

## Additional information

- CoNeTTE stands for **Co**nv**Ne**Xt-**T**ransformer with **T**ask **E**mbedding.

- Model weights are available on HuggingFace: https://huggingface.co/Labbeti/conette

- The encoder part of the architecture is based on a ConvNeXt model for audio classification, available here: https://huggingface.co/topel/ConvNeXt-Tiny-AT. More precisely, the encoder weights used are named "convnext_tiny_465mAP_BL_AC_70kit.pth", available on Zenodo: https://zenodo.org/record/8020843.

## Contact

Maintainer:

- Etienne Labbé "Labbeti": [email protected] |

AIGym/deepseek-coder-1.3b-chat | AIGym | 2024-03-04T17:17:29Z | 1,007 | 1 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"finetuned",

"conversational",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-01-04T21:37:03Z | ---

license: apache-2.0

tags:

- finetuned

pipeline_tag: text-generation

model-index:

- name: deepseek-coder-1.3b-chat

results:

- task:

type: text-generation

name: Text Generation

dataset:

name: AI2 Reasoning Challenge (25-Shot)

type: ai2_arc

config: ARC-Challenge

split: test

args:

num_few_shot: 25

metrics:

- type: acc_norm

value: 25.85

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=AIGym/deepseek-coder-1.3b-chat

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: HellaSwag (10-Shot)

type: hellaswag

split: validation

args:

num_few_shot: 10

metrics:

- type: acc_norm

value: 39.59

name: normalized accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=AIGym/deepseek-coder-1.3b-chat

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: MMLU (5-Shot)

type: cais/mmlu

config: all

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 26.36

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=AIGym/deepseek-coder-1.3b-chat

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: TruthfulQA (0-shot)

type: truthful_qa

config: multiple_choice

split: validation

args:

num_few_shot: 0

metrics:

- type: mc2

value: 43.92

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=AIGym/deepseek-coder-1.3b-chat

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: Winogrande (5-shot)

type: winogrande

config: winogrande_xl

split: validation

args:

num_few_shot: 5

metrics:

- type: acc

value: 51.7

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=AIGym/deepseek-coder-1.3b-chat

name: Open LLM Leaderboard

- task:

type: text-generation

name: Text Generation

dataset:

name: GSM8k (5-shot)

type: gsm8k

config: main

split: test

args:

num_few_shot: 5

metrics:

- type: acc

value: 3.03

name: accuracy

source:

url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=AIGym/deepseek-coder-1.3b-chat

name: Open LLM Leaderboard

---

# deepseek-coder-1.3b-chat

It was created by starting with the deepseek-coder-1.3b and training it on the open assistant dataset. We have attached the wandb report in pdf form to view the training run at a glance.

# Reson

This model was fine tned to allow it to follow direction and is a steeping stone to further training, but still would be good for asking qestions about code.

# How to use

You will need the transformers>=4.31

```python

from transformers import AutoTokenizer

import transformers

import torch

model = "AIGym/deepseek-coder-1.3b-chat"

tokenizer = AutoTokenizer.from_pretrained(model)

pipeline = transformers.pipeline(

"text-generation",

model=model,

torch_dtype=torch.float16,

device_map="auto",

)