modelId

stringlengths 5

122

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC] | downloads

int64 0

738M

| likes

int64 0

11k

| library_name

stringclasses 245

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 48

values | createdAt

timestamp[us, tz=UTC] | card

stringlengths 1

901k

|

|---|---|---|---|---|---|---|---|---|---|

google/bert2bert_L-24_wmt_de_en | google | 2023-01-24T16:35:54Z | 936 | 8 | transformers | [

"transformers",

"pytorch",

"encoder-decoder",

"text2text-generation",

"translation",

"en",

"de",

"dataset:wmt14",

"arxiv:1907.12461",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| translation | 2022-03-02T23:29:05Z | ---

language:

- en

- de

license: apache-2.0

datasets:

- wmt14

tags:

- translation

---

# bert2bert_L-24_wmt_de_en EncoderDecoder model

The model was introduced in

[this paper](https://arxiv.org/abs/1907.12461) by Sascha Rothe, Shashi Narayan, Aliaksei Severyn and first released in [this repository](https://tfhub.dev/google/bertseq2seq/bert24_de_en/1).

The model is an encoder-decoder model that was initialized on the `bert-large` checkpoints for both the encoder

and decoder and fine-tuned on German to English translation on the WMT dataset, which is linked above.

Disclaimer: The model card has been written by the Hugging Face team.

## How to use

You can use this model for translation, *e.g.*

```python

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM

tokenizer = AutoTokenizer.from_pretrained("google/bert2bert_L-24_wmt_de_en", pad_token="<pad>", eos_token="</s>", bos_token="<s>")

model = AutoModelForSeq2SeqLM.from_pretrained("google/bert2bert_L-24_wmt_de_en")

sentence = "Willst du einen Kaffee trinken gehen mit mir?"

input_ids = tokenizer(sentence, return_tensors="pt", add_special_tokens=False).input_ids

output_ids = model.generate(input_ids)[0]

print(tokenizer.decode(output_ids, skip_special_tokens=True))

# should output

# Want to drink a kaffee go with me? .

```

|

nghuyong/ernie-3.0-xbase-zh | nghuyong | 2022-10-22T11:01:58Z | 936 | 18 | transformers | [

"transformers",

"pytorch",

"ernie",

"fill-mask",

"zh",

"arxiv:2107.02137",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| fill-mask | 2022-10-22T10:57:03Z | ---

language: zh

---

# ERNIE-3.0-xbase-zh

## Introduction

ERNIE 3.0: Large-scale Knowledge Enhanced Pre-training for Language Understanding and Generation

More detail: https://arxiv.org/abs/2107.02137

## Released Model Info

This released PyTorch model is converted from the officially released PaddlePaddle ERNIE model, and

a series of experiments have been conducted to check the accuracy of the conversion.

- Official PaddlePaddle ERNIE repo: https://paddlenlp.readthedocs.io/zh/latest/model_zoo/transformers/ERNIE/contents.html

- PyTorch conversion repo: https://github.com/nghuyong/ERNIE-Pytorch

## How to use

```Python

from transformers import BertTokenizer, ErnieModel

tokenizer = BertTokenizer.from_pretrained("nghuyong/ernie-3.0-xbase-zh")

model = ErnieModel.from_pretrained("nghuyong/ernie-3.0-xbase-zh")

```

## Citation

```bibtex

@article{sun2021ernie,

title={Ernie 3.0: Large-scale knowledge enhanced pre-training for language understanding and generation},

author={Sun, Yu and Wang, Shuohuan and Feng, Shikun and Ding, Siyu and Pang, Chao and Shang, Junyuan and Liu, Jiaxiang and Chen, Xuyi and Zhao, Yanbin and Lu, Yuxiang and others},

journal={arXiv preprint arXiv:2107.02137},

year={2021}

}

```

|

to-be/donut-base-finetuned-invoices | to-be | 2023-03-03T19:18:45Z | 936 | 10 | transformers | [

"transformers",

"pytorch",

"vision-encoder-decoder",

"donut",

"image-to-text",

"vision",

"invoices",

"arxiv:2111.15664",

"license:cc-by-nc-sa-4.0",

"endpoints_compatible",

"region:us"

]

| image-to-text | 2023-03-03T19:04:52Z | ---

license: cc-by-nc-sa-4.0

tags:

- donut

- image-to-text

- vision

- invoices

---

# Donut finetuned on invoices

Based on the Donut base model (introduced in the paper [OCR-free Document Understanding Transformer](https://arxiv.org/abs/2111.15664) by Geewook Kim et al. and first released in [this repository](https://github.com/clovaai/donut)).

The model was trained on a few thousand annotated invoices and non-invoices (for the latter, the doctype is 'Other'). They span different countries and languages, and each is a single page. Unfortunately, the dataset is proprietary. The model's input resolution is set to 1280x1920 pixels, so samples scanned at more than 150 dpi add no value.

It was trained for about 4 hours on an NVIDIA RTX A4000 for 20k steps, ending with a val_metric of 0.03413819904382196.
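
The remark about 150 dpi can be checked with a little arithmetic. The sketch below is an illustration, not part of the model's pipeline: it assumes an A4 page, reads "1280x1920" as width x height, and assumes the preprocessing shrinks a larger scan to fit while keeping its aspect ratio. `page_pixels` and `effective_dpi` are hypothetical helper names.

```python
A4_INCHES = (8.27, 11.69)   # width, height of an A4 page in inches (assumption)
MODEL_INPUT = (1280, 1920)  # assumed to mean width x height in pixels


def page_pixels(dpi, size_inches=A4_INCHES):
    """Pixel dimensions of a page scanned at the given dpi."""
    return tuple(round(side * dpi) for side in size_inches)


def effective_dpi(dpi, size_inches=A4_INCHES, model_input=MODEL_INPUT):
    """Resolution left after the scan is shrunk to fit the model input."""
    width, height = page_pixels(dpi, size_inches)
    # Never upscale: a scan that already fits keeps its own resolution.
    scale = min(model_input[0] / width, model_input[1] / height, 1.0)
    return dpi * scale


for dpi in (100, 150, 300):
    print(dpi, page_pixels(dpi), round(effective_dpi(dpi)))
```

Under these assumptions a 150 dpi A4 scan already fits inside the input size, while a 300 dpi scan is shrunk back to roughly the same effective resolution.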

The following fields (indexes) were included in the training set:

- DocType
- Currency
- DocumentDate
- GrossAmount
- InvoiceNumber
- NetAmount
- TaxAmount
- OrderNumber
- CreditorCountry

[Demo space can be found here](https://huggingface.co/spaces/to-be/invoice_document_headers_extraction_with_donut)

## Model description

Donut consists of a vision encoder (Swin Transformer) and a text decoder (BART). Given an image, the encoder first encodes the image into a tensor of embeddings (of shape batch_size, seq_len, hidden_size), after which the decoder autoregressively generates text, conditioned on the encoding of the encoder.

## Intended uses & limitations

This model is a research experiment to see how well Donut handles multilingual invoices.

See my observations in the [demo space](https://huggingface.co/spaces/to-be/invoice_document_headers_extraction_with_donut).

### How to use

Look at the [documentation](https://huggingface.co/docs/transformers/main/en/model_doc/donut) which includes code examples.

|

digiplay/Colorful_v1.3 | digiplay | 2024-05-18T20:18:44Z | 936 | 2 | diffusers | [

"diffusers",

"safetensors",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

]

| text-to-image | 2023-07-18T00:46:41Z | ---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

https://civitai.com/models/7279?modelVersionId=10282

|

vonjack/Phi-3-mini-4k-instruct-LLaMAfied | vonjack | 2024-04-25T02:33:12Z | 936 | 11 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"nlp",

"code",

"conversational",

"en",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-04-24T02:29:00Z | ---

license: mit

license_link: https://huggingface.co/microsoft/Phi-3-mini-4k-instruct/resolve/main/LICENSE

language:

- en

pipeline_tag: text-generation

tags:

- nlp

- code

---

## Model Summary

Phi-3-Mini-4K-Instruct is a lightweight, state-of-the-art open model with 3.8B parameters, trained on the Phi-3 datasets, which include both synthetic data and filtered publicly available website data, with a focus on high-quality and reasoning-dense properties.

The model belongs to the Phi-3 family. The Mini version comes in two variants, [4K](https://huggingface.co/microsoft/Phi-3-mini-4k-instruct) and [128K](https://huggingface.co/microsoft/Phi-3-mini-128k-instruct), named for the context length (in tokens) that each can support.

The model has undergone a post-training process that incorporates both supervised fine-tuning and direct preference optimization for instruction following and safety measures.

When assessed against benchmarks testing common sense, language understanding, math, code, long context and logical reasoning, Phi-3 Mini-4K-Instruct showed robust, state-of-the-art performance among models with fewer than 13 billion parameters.

Resources and Technical Documentation:

+ [Phi-3 Microsoft Blog](https://aka.ms/phi3blog-april)

+ [Phi-3 Technical Report](https://aka.ms/phi3-tech-report)

+ [Phi-3 on Azure AI Studio](https://aka.ms/phi3-azure-ai)

+ Phi-3 GGUF: [4K](https://aka.ms/Phi3-mini-4k-instruct-gguf)

+ Phi-3 ONNX: [4K](https://aka.ms/Phi3-mini-4k-instruct-onnx)

## Intended Uses

**Primary use cases**

The model is intended for commercial and research use in English. It is suited to applications that require:

1) Memory/compute constrained environments

2) Latency bound scenarios

3) Strong reasoning (especially code, math and logic)

Our model is designed to accelerate research on language and multimodal models, for use as a building block for generative AI powered features.

**Use case considerations**

Our models are not specifically designed or evaluated for all downstream purposes. Developers should consider common limitations of language models as they select use cases, and evaluate and mitigate for accuracy, safety, and fairness before using within a specific downstream use case, particularly for high-risk scenarios. Developers should be aware of and adhere to applicable laws or regulations (including privacy, trade compliance laws, etc.) that are relevant to their use case.

Nothing contained in this Model Card should be interpreted as or deemed a restriction or modification to the license the model is released under.

## How to Use

Phi-3 Mini-4K-Instruct has been integrated in the development version (4.40.0) of `transformers`. Until the official version is released through `pip`, ensure that you are doing one of the following:

* When loading the model, ensure that `trust_remote_code=True` is passed as an argument of the `from_pretrained()` function.

* Update your local `transformers` to the development version: `pip uninstall -y transformers && pip install git+https://github.com/huggingface/transformers`. The previous command is an alternative to cloning and installing from the source.

The current `transformers` version can be verified with: `pip list | grep transformers`.

Phi-3 Mini-4K-Instruct is also available in [HuggingChat](https://aka.ms/try-phi3-hf-chat).

### Chat Format

Given the nature of the training data, the Phi-3 Mini-4K-Instruct model is best suited for prompts using the chat format as follows.

You can provide the prompt as a question with a generic template as follows:

```markdown

<|user|>\nQuestion <|end|>\n<|assistant|>

```

For example:

```markdown

<|system|>

You are a helpful AI assistant.<|end|>

<|user|>

How to explain Internet for a medieval knight?<|end|>

<|assistant|>

```

where the model generates the text after `<|assistant|>`. A few-shot prompt can be formatted as follows:

```markdown

<|system|>

You are a helpful AI assistant.<|end|>

<|user|>

I am going to Paris, what should I see?<|end|>

<|assistant|>

Paris, the capital of France, is known for its stunning architecture, art museums, historical landmarks, and romantic atmosphere. Here are some of the top attractions to see in Paris:\n\n1. The Eiffel Tower: The iconic Eiffel Tower is one of the most recognizable landmarks in the world and offers breathtaking views of the city.\n2. The Louvre Museum: The Louvre is one of the world's largest and most famous museums, housing an impressive collection of art and artifacts, including the Mona Lisa.\n3. Notre-Dame Cathedral: This beautiful cathedral is one of the most famous landmarks in Paris and is known for its Gothic architecture and stunning stained glass windows.\n\nThese are just a few of the many attractions that Paris has to offer. With so much to see and do, it's no wonder that Paris is one of the most popular tourist destinations in the world."<|end|>

<|user|>

What is so great about #1?<|end|>

<|assistant|>

```
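
The chat format above can be assembled from a list of messages with a few lines of Python. This is a minimal sketch: `build_phi3_prompt` is a hypothetical helper, and the exact placement of newlines is an assumption read off the examples above. In practice the tokenizer's `apply_chat_template` method applies the template shipped with the model and should be preferred.

```python
def build_phi3_prompt(messages):
    """Render a list of {"role", "content"} dicts in the Phi-3 chat format.

    Hypothetical helper for illustration; not part of transformers.
    """
    parts = []
    for message in messages:
        # Each turn is a role tag on its own line, then the text, closed by <|end|>.
        parts.append(f"<|{message['role']}|>\n{message['content']}<|end|>\n")
    # The trailing assistant tag asks the model to generate the next reply.
    parts.append("<|assistant|>\n")
    return "".join(parts)


messages = [
    {"role": "system", "content": "You are a helpful AI assistant."},
    {"role": "user", "content": "How to explain Internet for a medieval knight?"},
]
print(build_phi3_prompt(messages))
```

For a few-shot prompt, earlier assistant replies are passed as messages with the role `assistant`, in the same order as in the conversation.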

### Sample inference code

This code snippet shows how to quickly get started with running the model on a GPU:

```python

import torch
from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

torch.random.manual_seed(0)

model = AutoModelForCausalLM.from_pretrained(
    "microsoft/Phi-3-mini-4k-instruct",
    device_map="cuda",
    torch_dtype="auto",
    trust_remote_code=True,
)
tokenizer = AutoTokenizer.from_pretrained("microsoft/Phi-3-mini-4k-instruct")

messages = [
    {"role": "system", "content": "You are a helpful digital assistant. Please provide safe, ethical and accurate information to the user."},
    {"role": "user", "content": "Can you provide ways to eat combinations of bananas and dragonfruits?"},
    {"role": "assistant", "content": "Sure! Here are some ways to eat bananas and dragonfruits together: 1. Banana and dragonfruit smoothie: Blend bananas and dragonfruits together with some milk and honey. 2. Banana and dragonfruit salad: Mix sliced bananas and dragonfruits together with some lemon juice and honey."},
    {"role": "user", "content": "What about solving an 2x + 3 = 7 equation?"},
]

pipe = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
)

generation_args = {
    "max_new_tokens": 500,
    "return_full_text": False,
    "temperature": 0.0,
    "do_sample": False,
}

output = pipe(messages, **generation_args)
print(output[0]['generated_text'])

```

## Responsible AI Considerations

Like other language models, the Phi series models can potentially behave in ways that are unfair, unreliable, or offensive. Some of the limiting behaviors to be aware of include:

+ Quality of Service: the Phi models are trained primarily on English text. Languages other than English will experience worse performance. English language varieties with less representation in the training data might experience worse performance than standard American English.

+ Representation of Harms & Perpetuation of Stereotypes: These models can over- or under-represent groups of people, erase representation of some groups, or reinforce demeaning or negative stereotypes. Despite safety post-training, these limitations may still be present due to differing levels of representation of different groups or prevalence of examples of negative stereotypes in training data that reflect real-world patterns and societal biases.

+ Inappropriate or Offensive Content: these models may produce other types of inappropriate or offensive content, which may make it inappropriate to deploy for sensitive contexts without additional mitigations that are specific to the use case.

+ Information Reliability: Language models can generate nonsensical content or fabricate content that might sound reasonable but is inaccurate or outdated.

+ Limited Scope for Code: The majority of Phi-3 training data is based on Python and uses common packages such as "typing, math, random, collections, datetime, itertools". If the model generates Python scripts that use other packages, or scripts in other languages, we strongly recommend users manually verify all API uses.

Developers should apply responsible AI best practices and are responsible for ensuring that a specific use case complies with relevant laws and regulations (e.g. privacy, trade, etc.). Important areas for consideration include:

+ Allocation: Models may not be suitable for scenarios that could have consequential impact on legal status or the allocation of resources or life opportunities (ex: housing, employment, credit, etc.) without further assessments and additional debiasing techniques.

+ High-Risk Scenarios: Developers should assess suitability of using models in high-risk scenarios where unfair, unreliable or offensive outputs might be extremely costly or lead to harm. This includes providing advice in sensitive or expert domains where accuracy and reliability are critical (ex: legal or health advice). Additional safeguards should be implemented at the application level according to the deployment context.

+ Misinformation: Models may produce inaccurate information. Developers should follow transparency best practices and inform end-users they are interacting with an AI system. At the application level, developers can build feedback mechanisms and pipelines to ground responses in use-case specific, contextual information, a technique known as Retrieval Augmented Generation (RAG).

+ Generation of Harmful Content: Developers should assess outputs for their context and use available safety classifiers or custom solutions appropriate for their use case.

+ Misuse: Other forms of misuse such as fraud, spam, or malware production may be possible, and developers should ensure that their applications do not violate applicable laws and regulations.

## Training

### Model

* Architecture: Phi-3 Mini-4K-Instruct has 3.8B parameters and is a dense decoder-only Transformer model. The model is fine-tuned with supervised fine-tuning (SFT) and Direct Preference Optimization (DPO) to ensure alignment with human preferences and safety guidelines.

* Inputs: Text. It is best suited for prompts using chat format.

* Context length: 4K tokens

* GPUs: 512 H100-80G

* Training time: 7 days

* Training data: 3.3T tokens

* Outputs: Generated text in response to the input

* Dates: Our models were trained between February and April 2024

* Status: This is a static model trained on an offline dataset with cutoff date October 2023. Future versions of the tuned models may be released as we improve models.
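
The GPU count, training time and token count listed above imply a rough training budget. The arithmetic below is a back-of-the-envelope sketch, assuming every GPU was busy for the full 7 days and the 3.3T tokens were processed exactly once. Neither assumption is stated in the card.

```python
# Figures quoted in the list above.
num_gpus = 512
training_days = 7
training_tokens = 3.3e12

# Assumption: every GPU is busy for the whole 7 days.
gpu_hours = num_gpus * training_days * 24
# Assumption: the 3.3T tokens are processed exactly once.
tokens_per_gpu_second = training_tokens / (gpu_hours * 3600)

print(f"{gpu_hours:,} GPU-hours")
print(f"{tokens_per_gpu_second:,.0f} tokens per GPU per second")
```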

### Datasets

Our training data includes a wide variety of sources, totaling 3.3 trillion tokens, and is a combination of

1) Publicly available documents filtered rigorously for quality, selected high-quality educational data, and code;

2) Newly created synthetic, “textbook-like” data for the purpose of teaching math, coding, common sense reasoning, general knowledge of the world (science, daily activities, theory of mind, etc.);

3) High quality chat format supervised data covering various topics to reflect human preferences on different aspects such as instruct-following, truthfulness, honesty and helpfulness.

### Fine-tuning

A basic example of multi-GPUs supervised fine-tuning (SFT) with TRL and Accelerate modules is provided [here](https://huggingface.co/microsoft/Phi-3-mini-4k-instruct/resolve/main/sample_finetune.py).

## Benchmarks

We report the results for Phi-3-Mini-4K-Instruct on standard open-source benchmarks measuring the model's reasoning ability (both common sense reasoning and logical reasoning). We compare to Phi-2, Mistral-7b-v0.1, Mixtral-8x7b, Gemma 7B, Llama-3-8B-Instruct, and GPT-3.5.

All the reported numbers are produced with the exact same pipeline to ensure that the numbers are comparable. These numbers might differ from other published numbers due to slightly different choices in the evaluation.

As is now standard, we use few-shot prompts to evaluate the models, at temperature 0.

The prompts and number of shots are part of a Microsoft internal tool to evaluate language models, and in particular we did no optimization to the pipeline for Phi-3.

More specifically, we do not change prompts, pick different few-shot examples, change prompt format, or do any other form of optimization for the model.

The number of k–shot examples is listed per-benchmark.

| | Phi-3-Mini-4K-In<br>3.8b | Phi-3-Small<br>7b (preview) | Phi-3-Medium<br>14b (preview) | Phi-2<br>2.7b | Mistral<br>7b | Gemma<br>7b | Llama-3-In<br>8b | Mixtral<br>8x7b | GPT-3.5<br>version 1106 |

|---|---|---|---|---|---|---|---|---|---|

| MMLU <br>5-Shot | 68.8 | 75.3 | 78.2 | 56.3 | 61.7 | 63.6 | 66.5 | 68.4 | 71.4 |

| HellaSwag <br> 5-Shot | 76.7 | 78.7 | 83.2 | 53.6 | 58.5 | 49.8 | 71.1 | 70.4 | 78.8 |

| ANLI <br> 7-Shot | 52.8 | 55.0 | 58.7 | 42.5 | 47.1 | 48.7 | 57.3 | 55.2 | 58.1 |

| GSM-8K <br> 0-Shot; CoT | 82.5 | 86.4 | 90.8 | 61.1 | 46.4 | 59.8 | 77.4 | 64.7 | 78.1 |

| MedQA <br> 2-Shot | 53.8 | 58.2 | 69.8 | 40.9 | 49.6 | 50.0 | 60.5 | 62.2 | 63.4 |

| AGIEval <br> 0-Shot | 37.5 | 45.0 | 49.7 | 29.8 | 35.1 | 42.1 | 42.0 | 45.2 | 48.4 |

| TriviaQA <br> 5-Shot | 64.0 | 59.1 | 73.3 | 45.2 | 72.3 | 75.2 | 67.7 | 82.2 | 85.8 |

| Arc-C <br> 10-Shot | 84.9 | 90.7 | 91.9 | 75.9 | 78.6 | 78.3 | 82.8 | 87.3 | 87.4 |

| Arc-E <br> 10-Shot | 94.6 | 97.1 | 98.0 | 88.5 | 90.6 | 91.4 | 93.4 | 95.6 | 96.3 |

| PIQA <br> 5-Shot | 84.2 | 87.8 | 88.2 | 60.2 | 77.7 | 78.1 | 75.7 | 86.0 | 86.6 |

| SociQA <br> 5-Shot | 76.6 | 79.0 | 79.4 | 68.3 | 74.6 | 65.5 | 73.9 | 75.9 | 68.3 |

| BigBench-Hard <br> 0-Shot | 71.7 | 75.0 | 82.5 | 59.4 | 57.3 | 59.6 | 51.5 | 69.7 | 68.32 |

| WinoGrande <br> 5-Shot | 70.8 | 82.5 | 81.2 | 54.7 | 54.2 | 55.6 | 65 | 62.0 | 68.8 |

| OpenBookQA <br> 10-Shot | 83.2 | 88.4 | 86.6 | 73.6 | 79.8 | 78.6 | 82.6 | 85.8 | 86.0 |

| BoolQ <br> 0-Shot | 77.6 | 82.9 | 86.5 | -- | 72.2 | 66.0 | 80.9 | 77.6 | 79.1 |

| CommonSenseQA <br> 10-Shot | 80.2 | 80.3 | 82.6 | 69.3 | 72.6 | 76.2 | 79 | 78.1 | 79.6 |

| TruthfulQA <br> 10-Shot | 65.0 | 68.1 | 74.8 | -- | 52.1 | 53.0 | 63.2 | 60.1 | 85.8 |

| HumanEval <br> 0-Shot | 59.1 | 59.1 | 54.7 | 59.0 | 28.0 | 34.1 | 60.4 | 37.8 | 62.2 |

| MBPP <br> 3-Shot | 53.8 | 71.4 | 73.7 | 60.6 | 50.8 | 51.5 | 67.7 | 60.2 | 77.8 |

## Software

* [PyTorch](https://github.com/pytorch/pytorch)

* [DeepSpeed](https://github.com/microsoft/DeepSpeed)

* [Transformers](https://github.com/huggingface/transformers)

* [Flash-Attention](https://github.com/HazyResearch/flash-attention)

## Hardware

Note that by default, the Phi-3-mini model uses flash attention, which requires certain types of GPU hardware to run. We have tested on the following GPU types:

* NVIDIA A100

* NVIDIA A6000

* NVIDIA H100

If you want to run the model on:

* NVIDIA V100 or earlier generation GPUs: call AutoModelForCausalLM.from_pretrained() with attn_implementation="eager"

* CPU: use the **GGUF** quantized models [4K](https://aka.ms/Phi3-mini-4k-instruct-gguf)

+ Optimized inference on GPU, CPU, and Mobile: use the **ONNX** models [4K](https://aka.ms/Phi3-mini-4k-instruct-onnx)
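
The GPU guidance above can be turned into a small selection rule. This is a sketch under stated assumptions: `pick_attn_implementation` is a hypothetical helper, and the cut-off at compute capability 8.0 (Ampere, which covers the A100, A6000 and H100 listed above but not the V100) is an assumption about which GPUs can run flash attention.

```python
def pick_attn_implementation(compute_capability):
    """Choose a value for the attn_implementation argument of from_pretrained().

    compute_capability is a (major, minor) tuple such as the one returned by
    torch.cuda.get_device_capability(). Hypothetical helper for illustration.
    """
    major, _minor = compute_capability
    # Assumption: flash attention needs an Ampere-class GPU or newer (major >= 8).
    return "flash_attention_2" if major >= 8 else "eager"


print(pick_attn_implementation((7, 0)))  # V100
print(pick_attn_implementation((8, 0)))  # A100
```

The returned string would then be passed as `attn_implementation=...` when calling `AutoModelForCausalLM.from_pretrained()`.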

## Cross Platform Support

ONNX runtime ecosystem now supports Phi-3 Mini models across platforms and hardware. You can find the optimized Phi-3 Mini-4K-Instruct ONNX model [here](https://aka.ms/phi3-mini-4k-instruct-onnx).

Optimized Phi-3 models are also published here in ONNX format, to run with ONNX Runtime on CPU and GPU across devices, including server platforms, Windows, Linux and Mac desktops, and mobile CPUs, with the precision best suited to each of these targets. DirectML support lets developers bring hardware acceleration to Windows devices at scale across AMD, Intel, and NVIDIA GPUs.

Along with DirectML, ONNX Runtime provides cross-platform support for Phi-3 across a range of devices: CPU, GPU, and mobile.

Here are some of the optimized configurations we have added:

1. ONNX models for int4 DML: Quantized to int4 via AWQ

2. ONNX model for fp16 CUDA

3. ONNX model for int4 CUDA: Quantized to int4 via RTN

4. ONNX model for int4 CPU and Mobile: Quantized to int4 via RTN
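
Several of the configurations above quantize weights to int4 with RTN (round-to-nearest). The sketch below shows the basic idea on a plain list of floats. It is an illustration only, assuming a symmetric scheme with a single scale per group; the group size, zero points and storage layout of the published ONNX models are not described here, and `quantize_int4_rtn` and `dequantize` are hypothetical names.

```python
def quantize_int4_rtn(weights):
    """Symmetric round-to-nearest quantization of a list of floats to int4."""
    # One scale for the whole group: map the largest magnitude to 7.
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round each weight to the nearest step and clamp to the int4 range [-8, 7].
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Map int4 codes back to approximate float weights."""
    return [c * scale for c in codes]


weights = [0.12, -0.50, 0.33, 0.07, -0.21]
codes, scale = quantize_int4_rtn(weights)
restored = dequantize(codes, scale)
print(codes)
print([round(w, 3) for w in restored])
```

Each restored weight differs from the original by at most half a quantization step, which is the error that RTN accepts in exchange for its simplicity.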

## License

The model is licensed under the [MIT license](https://huggingface.co/microsoft/Phi-3-mini-4k/resolve/main/LICENSE).

## Trademarks

This project may contain trademarks or logos for projects, products, or services. Authorized use of Microsoft trademarks or logos is subject to and must follow [Microsoft’s Trademark & Brand Guidelines](https://www.microsoft.com/en-us/legal/intellectualproperty/trademarks). Use of Microsoft trademarks or logos in modified versions of this project must not cause confusion or imply Microsoft sponsorship. Any use of third-party trademarks or logos is subject to those third parties' policies.

|

pruned-yi/pruned-yi-3b-prerelease-ckpt01 | pruned-yi | 2024-05-17T17:43:08Z | 936 | 1 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-05-17T17:13:22Z | ---

license: apache-2.0

---

this is a very early pre-release model. see the github page for more details https://github.com/fakerybakery/pruned-yi

do not expect high-quality generations. longer prompts work better. trained on a mix of cosmopedia + chinese wikipedia (mix in a 2:1 ratio to preserve bilingual abilities). licensed under apache 2.0.

please open a github issue for any questions |

kwoncho/gaincut_news_pre2017_2 | kwoncho | 2024-05-28T02:39:22Z | 936 | 0 | transformers | [

"transformers",

"pytorch",

"roberta",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| text-classification | 2024-05-28T02:29:15Z | Entry not found |

studio-ousia/luke-large | studio-ousia | 2022-04-13T09:06:10Z | 935 | 5 | transformers | [

"transformers",

"pytorch",

"luke",

"fill-mask",

"named entity recognition",

"entity typing",

"relation classification",

"question answering",

"en",

"arxiv:1906.08237",

"arxiv:1903.07785",

"arxiv:2002.01808",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| fill-mask | 2022-03-02T23:29:05Z | ---

language: en

thumbnail: https://github.com/studio-ousia/luke/raw/master/resources/luke_logo.png

tags:

- luke

- named entity recognition

- entity typing

- relation classification

- question answering

license: apache-2.0

---

## LUKE: Deep Contextualized Entity Representations with Entity-aware Self-attention

**LUKE** (**L**anguage **U**nderstanding with **K**nowledge-based

**E**mbeddings) is a new pre-trained contextualized representation of words and

entities based on the transformer. LUKE treats words and entities in a given text as

independent tokens, and outputs contextualized representations of them. LUKE

adopts an entity-aware self-attention mechanism that is an extension of the

self-attention mechanism of the transformer, and considers the types of tokens

(words or entities) when computing attention scores.

LUKE achieves state-of-the-art results on five popular NLP benchmarks including

**[SQuAD v1.1](https://rajpurkar.github.io/SQuAD-explorer/)** (extractive

question answering),

**[CoNLL-2003](https://www.clips.uantwerpen.be/conll2003/ner/)** (named entity

recognition), **[ReCoRD](https://sheng-z.github.io/ReCoRD-explorer/)**

(cloze-style question answering),

**[TACRED](https://nlp.stanford.edu/projects/tacred/)** (relation

classification), and

**[Open Entity](https://www.cs.utexas.edu/~eunsol/html_pages/open_entity.html)**

(entity typing).

Please check the [official repository](https://github.com/studio-ousia/luke) for

more details and updates.

This is the LUKE large model with 24 hidden layers and a hidden size of 1024. The total number

of parameters in this model is 483M. It was trained using the December 2018 version of

Wikipedia.

### Experimental results

The experimental results are provided as follows:

| Task | Dataset | Metric | LUKE-large | luke-base | Previous SOTA |

| ------------------------------ | ---------------------------------------------------------------------------- | ------ | ----------------- | --------- | ------------------------------------------------------------------------- |

| Extractive Question Answering | [SQuAD v1.1](https://rajpurkar.github.io/SQuAD-explorer/) | EM/F1 | **90.2**/**95.4** | 86.1/92.3 | 89.9/95.1 ([Yang et al., 2019](https://arxiv.org/abs/1906.08237)) |

| Named Entity Recognition | [CoNLL-2003](https://www.clips.uantwerpen.be/conll2003/ner/) | F1 | **94.3** | 93.3 | 93.5 ([Baevski et al., 2019](https://arxiv.org/abs/1903.07785)) |

| Cloze-style Question Answering | [ReCoRD](https://sheng-z.github.io/ReCoRD-explorer/) | EM/F1 | **90.6**/**91.2** | - | 83.1/83.7 ([Li et al., 2019](https://www.aclweb.org/anthology/D19-6011/)) |

| Relation Classification | [TACRED](https://nlp.stanford.edu/projects/tacred/) | F1 | **72.7** | - | 72.0 ([Wang et al. , 2020](https://arxiv.org/abs/2002.01808)) |

| Fine-grained Entity Typing | [Open Entity](https://www.cs.utexas.edu/~eunsol/html_pages/open_entity.html) | F1 | **78.2** | - | 77.6 ([Wang et al. , 2020](https://arxiv.org/abs/2002.01808)) |

### Citation

If you find LUKE useful for your work, please cite the following paper:

```latex

@inproceedings{yamada2020luke,

title={LUKE: Deep Contextualized Entity Representations with Entity-aware Self-attention},

author={Ikuya Yamada and Akari Asai and Hiroyuki Shindo and Hideaki Takeda and Yuji Matsumoto},

booktitle={EMNLP},

year={2020}

}

```

|

inkoziev/rugpt_chitchat | inkoziev | 2022-10-19T07:44:11Z | 935 | 17 | transformers | [

"transformers",

"pytorch",

"gpt2",

"text-generation",

"PyTorch",

"Transformers",

"ru",

"license:unlicense",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2022-09-15T07:20:18Z | ---

pipeline_tag: text-generation

tags:

- PyTorch

- Transformers

- gpt2

license: unlicense

language: ru

widget:

- text: "- У Джульетты было 7 пончиков, а потом она 3 съела. Сколько у нее осталось пончиков? -"

- text: "- Поглажено 4 манула. Осталось погладить 6. Сколько всего манулов надо погладить? -"

- text: "- Для начала скажи, чему равно пятью девять? -"

- text: "- ты чё такой борзый? -"

- text: "- Привет! Как ваше ничего? -"

---

## Russian Chit-chat, Deductive and Common Sense reasoning model

This model is the core of a prototype Russian-language [dialogue system](https://github.com/Koziev/chatbot) and has two main functions.

The first function is **generating chit-chat replies**. The dialogue history (the preceding 1 to 10 replies) is supplied as the prompt.

```

- Hi, how are you?

- Hi, so-so.

- <<< the reply expected from the model >>>

```

The second function is producing an answer to a given question, relying on additional facts or on "common sense". Relevant facts are assumed to be retrieved

from an external store (a knowledge base) by another model, for example [sbert_pq](https://huggingface.co/inkoziev/sbert_pq).

Using the supplied fact(s) and the question text, the model builds a grammatical and maximally concise answer, as a person would

in a similar communicative situation. Relevant facts should be placed before the question text,

as if the interlocutor had said them:

```

- Today is September 15. What month is it now?

- September

```

The model does not expect every fact found and added to the dialogue context to actually be relevant to the question. The model

that retrieves information from the knowledge base can therefore trade precision for recall and add something superfluous. In that case

the chit-chat model itself picks the facts it needs from those in the context and ignores the rest. The current version of the model

allows up to 5 facts before the question. For example:

```

- Stas is 16 years old. Stas lives in Podolsk. Stas does not have his own car. Where does Stas live?

- in Podolsk

```

In some cases the model can perform **syllogistic inference**, relying on two related premises. The conclusion that follows from the two premises does not appear explicitly; it is used *as if* implicitly to derive the answer:

```

- Is Aristophanes mortal, if he was a Greek philosopher and all philosophers are mortal?

- Yes

```

As the examples show, the format of the factual information fed to the model for inference is entirely natural and free-form.

Besides logical inference, the model can also solve simple arithmetic problems at the level of grades 1-2 of primary school, with two numeric arguments:

```

- What is 2+8?

- 10

```

### Model variants and metrics

The currently published model has 760 million parameters, i.e. it is on the scale of sberbank-ai/rugpt3large_based_on_gpt2. Below are

the measured accuracies on arithmetic problems for a held-out test set of samples:

| base model | arith. accuracy |

| --------------------------------------- | --------------- |

| sberbank-ai/rugpt3large_based_on_gpt2 | 0.91 |

| sberbank-ai/rugpt3medium_based_on_gpt2 | 0.70 |

| sberbank-ai/rugpt3small_based_on_gpt2 | 0.58 |

| tinkoff-ai/ruDialoGPT-small | 0.44 |

| tinkoff-ai/ruDialoGPT-medium | 0.69 |

The value 0.91 in the "arith. accuracy" column means that 91% of the test problems were solved completely correctly. Any deviation of the generated answer from the reference answer counts as an error. For example, producing the answer "120" instead of "119" is also recorded as an error.
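The scoring rule described here is exact-match accuracy. A minimal sketch follows; the function name is hypothetical, the sample answers are made up for illustration, and trimming surrounding whitespace before comparison is an assumption rather than something the card states:

```python
def exact_match_accuracy(predictions, references):
    """Share of answers that equal the reference exactly (after trimming whitespace)."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        raise ValueError("empty test set")
    hits = sum(p.strip() == r.strip() for p, r in zip(predictions, references))
    return hits / len(references)


# Made-up answers, for illustration only.
predicted = ["10", "120", "7", "35"]
expected = ["10", "119", "7", "35"]
print(exact_match_accuracy(predicted, expected))  # 0.75
```

The near miss "120" versus "119" earns no partial credit, which is why the metric is strict for arithmetic.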

### Usage example

```

import torch

from transformers import AutoTokenizer, AutoModelForCausalLM

device = "cuda" if torch.cuda.is_available() else "cpu"

model_name = "inkoziev/rugpt_chitchat"

tokenizer = AutoTokenizer.from_pretrained(model_name)

tokenizer.add_special_tokens({'bos_token': '<s>', 'eos_token': '</s>', 'pad_token': '<pad>'})

model = AutoModelForCausalLM.from_pretrained(model_name)

model.to(device)

model.eval()

# Feed the model the last 2-3 turns of the dialogue. Each turn is on its own line and starts with the "-" character

input_text = """<s>- Привет! Что делаешь?

- Привет :) В такси еду

-"""

encoded_prompt = tokenizer.encode(input_text, add_special_tokens=False, return_tensors="pt").to(device)

output_sequences = model.generate(input_ids=encoded_prompt, max_length=100, num_return_sequences=1, pad_token_id=tokenizer.pad_token_id)

text = tokenizer.decode(output_sequences[0].tolist(), clean_up_tokenization_spaces=True)[len(input_text)+1:]

text = text[: text.find('</s>')]

print(text)

```

### Contacts

If you have any questions about using this model, or suggestions for improving it, write to me at [email protected]

### Citation:

```

@MISC{rugpt_chitchat,

author = {Ilya Koziev},

title = {Russian Chit-chat with Common sence Reasoning},

url = {https://huggingface.co/inkoziev/rugpt_chitchat},

year = 2022

}

```

|

beomi/KcBERT-v2023 | beomi | 2023-05-07T11:08:50Z | 935 | 0 | transformers | [

"transformers",

"pytorch",

"jax",

"roberta",

"fill-mask",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| fill-mask | 2023-04-15T07:08:27Z | ---

license: mit

---

|

lorahub/flan_t5_large-race_high_Select_the_best_answer | lorahub | 2023-07-24T10:18:46Z | 935 | 0 | peft | [

"peft",

"region:us"

]

| null | 2023-07-24T10:18:37Z | ---

library_name: peft

---

|

TheBloke/Pygmalion-2-13B-AWQ | TheBloke | 2023-11-09T18:20:07Z | 935 | 4 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"text generation",

"instruct",

"en",

"dataset:PygmalionAI/PIPPA",

"dataset:Open-Orca/OpenOrca",

"dataset:Norquinal/claude_multiround_chat_30k",

"dataset:jondurbin/airoboros-gpt4-1.4.1",

"dataset:databricks/databricks-dolly-15k",

"base_model:PygmalionAI/pygmalion-2-13b",

"license:llama2",

"autotrain_compatible",

"text-generation-inference",

"4-bit",

"awq",

"region:us"

]

| text-generation | 2023-09-19T07:25:03Z | ---

language:

- en

license: llama2

tags:

- text generation

- instruct

datasets:

- PygmalionAI/PIPPA

- Open-Orca/OpenOrca

- Norquinal/claude_multiround_chat_30k

- jondurbin/airoboros-gpt4-1.4.1

- databricks/databricks-dolly-15k

model_name: Pygmalion 2 13B

base_model: PygmalionAI/pygmalion-2-13b

inference: false

model_creator: PygmalionAI

model_type: llama

pipeline_tag: text-generation

prompt_template: 'The model has been trained on prompts using three different roles,

which are denoted by the following tokens: `<|system|>`, `<|user|>` and `<|model|>`.

The `<|system|>` prompt can be used to inject out-of-channel information behind

the scenes, while the `<|user|>` prompt should be used to indicate user input.

The `<|model|>` token should then be used to indicate that the model should generate

a response. These tokens can happen multiple times and be chained up to form a conversation

history.

The system prompt has been designed to allow the model to "enter" various modes

and dictate the reply length. Here''s an example:

```

<|system|>Enter RP mode. Pretend to be {{char}} whose persona follows:

{{persona}}

You shall reply to the user while staying in character, and generate long responses.

```

'

quantized_by: TheBloke

---

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Pygmalion 2 13B - AWQ

- Model creator: [PygmalionAI](https://huggingface.co/PygmalionAI)

- Original model: [Pygmalion 2 13B](https://huggingface.co/PygmalionAI/pygmalion-2-13b)

<!-- description start -->

## Description

This repo contains AWQ model files for [PygmalionAI's Pygmalion 2 13B](https://huggingface.co/PygmalionAI/pygmalion-2-13b).

### About AWQ

AWQ is an efficient, accurate and blazing-fast low-bit weight quantization method, currently supporting 4-bit quantization. Compared to GPTQ, it offers faster Transformers-based inference.

It is also now supported by continuous batching server [vLLM](https://github.com/vllm-project/vllm), allowing use of AWQ models for high-throughput concurrent inference in multi-user server scenarios. Note that, at the time of writing, overall throughput is still lower than running vLLM with unquantised models, however using AWQ enables using much smaller GPUs which can lead to easier deployment and overall cost savings. For example, a 70B model can be run on 1 x 48GB GPU instead of 2 x 80GB.
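The GPU sizing example can be checked with back-of-the-envelope arithmetic. This sketch counts the weights only and assumes 16 bits per weight for the unquantised model and 4 bits for AWQ. It ignores quantisation scales, activations and the KV cache, so real memory use is higher:

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Approximate size of the weights alone, in GB (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


n_params = 70e9  # a 70B-parameter model
print(weight_memory_gb(n_params, 16))  # 140.0, more than a single 80 GB GPU holds
print(weight_memory_gb(n_params, 4))   # 35.0, fits within 48 GB with some headroom
```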

<!-- description end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/Pygmalion-2-13B-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/Pygmalion-2-13B-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/Pygmalion-2-13B-GGUF)

* [PygmalionAI's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/PygmalionAI/pygmalion-2-13b)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: Custom

The model has been trained on prompts using three different roles, which are denoted by the following tokens: `<|system|>`, `<|user|>` and `<|model|>`.

The `<|system|>` prompt can be used to inject out-of-channel information behind the scenes, while the `<|user|>` prompt should be used to indicate user input.

The `<|model|>` token should then be used to indicate that the model should generate a response. These tokens can happen multiple times and be chained up to form a conversation history.

The system prompt has been designed to allow the model to "enter" various modes and dictate the reply length. Here's an example:

```

<|system|>Enter RP mode. Pretend to be {{char}} whose persona follows:

{{persona}}

You shall reply to the user while staying in character, and generate long responses.

```
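A minimal sketch of chaining these role tokens into a conversation history. `build_prompt` and `ROLE_TOKENS` are hypothetical names, the character "Ada" and her persona are invented for illustration, and joining the segments without separators is an assumption, since the card does not specify the exact whitespace:

```python
ROLE_TOKENS = {"system": "<|system|>", "user": "<|user|>", "model": "<|model|>"}


def build_prompt(system_prompt, turns):
    """Chain role tokens and messages, then end with <|model|> to request a reply."""
    parts = [ROLE_TOKENS["system"] + system_prompt]
    for role, text in turns:
        if role not in ("user", "model"):
            raise ValueError(f"unknown role: {role!r}")
        parts.append(ROLE_TOKENS[role] + text)
    # A trailing <|model|> token signals that the model should generate next.
    parts.append(ROLE_TOKENS["model"])
    return "".join(parts)


prompt = build_prompt(
    "Enter RP mode. Pretend to be Ada whose persona follows:\n"
    "A patient librarian.\n"
    "You shall reply to the user while staying in character, and generate long responses.",
    [("user", "Hello!"), ("model", "Welcome to the library."), ("user", "Any book tips?")],
)
print(prompt)
```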

<!-- prompt-template end -->

<!-- README_AWQ.md-provided-files start -->

## Provided files and AWQ parameters

For my first release of AWQ models, I am releasing 128g models only. I will consider adding 32g as well if there is interest, and once I have done perplexity and evaluation comparisons, but at this time 32g models are still not fully tested with AutoAWQ and vLLM.

Models are released as sharded safetensors files.

| Branch | Bits | GS | AWQ Dataset | Seq Len | Size |
| ------ | ---- | -- | ----------- | ------- | ---- |
| [main](https://huggingface.co/TheBloke/Pygmalion-2-13B-AWQ/tree/main) | 4 | 128 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 7.25 GB |

<!-- README_AWQ.md-provided-files end -->

<!-- README_AWQ.md-use-from-vllm start -->

## Serving this model from vLLM

Documentation on installing and using vLLM [can be found here](https://vllm.readthedocs.io/en/latest/).

- When using vLLM as a server, pass the `--quantization awq` parameter, for example:

```shell

python3 -m vllm.entrypoints.api_server --model TheBloke/Pygmalion-2-13B-AWQ --quantization awq

```

When using vLLM from Python code, pass the `quantization="awq"` argument, for example:

```python

from vllm import LLM, SamplingParams

prompts = [

"Hello, my name is",

"The president of the United States is",

"The capital of France is",

"The future of AI is",

]

sampling_params = SamplingParams(temperature=0.8, top_p=0.95)

llm = LLM(model="TheBloke/Pygmalion-2-13B-AWQ", quantization="awq")

outputs = llm.generate(prompts, sampling_params)

# Print the outputs.

for output in outputs:
    prompt = output.prompt
    generated_text = output.outputs[0].text
    print(f"Prompt: {prompt!r}, Generated text: {generated_text!r}")

```

<!-- README_AWQ.md-use-from-vllm end -->

<!-- README_AWQ.md-use-from-python start -->

## How to use this AWQ model from Python code

### Install the necessary packages

Requires: [AutoAWQ](https://github.com/casper-hansen/AutoAWQ) 0.0.2 or later

```shell

pip3 install autoawq

```

If you have problems installing [AutoAWQ](https://github.com/casper-hansen/AutoAWQ) using the pre-built wheels, install it from source instead:

```shell

pip3 uninstall -y autoawq

git clone https://github.com/casper-hansen/AutoAWQ

cd AutoAWQ

pip3 install .

```

### You can then try the following example code

```python

from awq import AutoAWQForCausalLM

from transformers import AutoTokenizer

model_name_or_path = "TheBloke/Pygmalion-2-13B-AWQ"

# Load model

model = AutoAWQForCausalLM.from_quantized(model_name_or_path, fuse_layers=True,

trust_remote_code=False, safetensors=True)

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=False)

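prompt = "Tell me about AI"

# In an f-string the doubled braces render as literal {char} and {persona};
# substitute your character's name and persona before use.
# The user message follows <|user|> and the prompt ends with <|model|>, as in the
# role tokens described above (the exact spacing between segments is an assumption).
prompt_template=f'''<|system|>Enter RP mode. Pretend to be {{char}} whose persona follows:
{{persona}}
You shall reply to the user while staying in character, and generate long responses.
<|user|>{prompt}<|model|>'''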

print("\n\n*** Generate:")

tokens = tokenizer(

prompt_template,

return_tensors='pt'

).input_ids.cuda()

# Generate output

generation_output = model.generate(

tokens,

do_sample=True,

temperature=0.7,

top_p=0.95,

top_k=40,

max_new_tokens=512

)

print("Output: ", tokenizer.decode(generation_output[0]))

# Inference can also be done using transformers' pipeline

from transformers import pipeline

print("*** Pipeline:")

pipe = pipeline(

"text-generation",

model=model,

tokenizer=tokenizer,

max_new_tokens=512,

do_sample=True,

temperature=0.7,

top_p=0.95,

top_k=40,

repetition_penalty=1.1

)

print(pipe(prompt_template)[0]['generated_text'])

```

<!-- README_AWQ.md-use-from-python end -->

<!-- README_AWQ.md-compatibility start -->

## Compatibility

The files provided are tested to work with [AutoAWQ](https://github.com/casper-hansen/AutoAWQ), and [vLLM](https://github.com/vllm-project/vllm).

[Huggingface Text Generation Inference (TGI)](https://github.com/huggingface/text-generation-inference) is not yet compatible with AWQ, but a PR is open which should bring support soon: [TGI PR #781](https://github.com/huggingface/text-generation-inference/issues/781).

<!-- README_AWQ.md-compatibility end -->

<!-- footer start -->

<!-- 200823 -->

## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute

Thanks to the [chirper.ai](https://chirper.ai) team!

Thanks to Clay from [gpus.llm-utils.org](llm-utils)!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donors will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Aemon Algiz.

**Patreon special mentions**: Alicia Loh, Stephen Murray, K, Ajan Kanaga, RoA, Magnesian, Deo Leter, Olakabola, Eugene Pentland, zynix, Deep Realms, Raymond Fosdick, Elijah Stavena, Iucharbius, Erik Bjäreholt, Luis Javier Navarrete Lozano, Nicholas, theTransient, John Detwiler, alfie_i, knownsqashed, Mano Prime, Willem Michiel, Enrico Ros, LangChain4j, OG, Michael Dempsey, Pierre Kircher, Pedro Madruga, James Bentley, Thomas Belote, Luke @flexchar, Leonard Tan, Johann-Peter Hartmann, Illia Dulskyi, Fen Risland, Chadd, S_X, Jeff Scroggin, Ken Nordquist, Sean Connelly, Artur Olbinski, Swaroop Kallakuri, Jack West, Ai Maven, David Ziegler, Russ Johnson, transmissions 11, John Villwock, Alps Aficionado, Clay Pascal, Viktor Bowallius, Subspace Studios, Rainer Wilmers, Trenton Dambrowitz, vamX, Michael Levine, 준교 김, Brandon Frisco, Kalila, Trailburnt, Randy H, Talal Aujan, Nathan Dryer, Vadim, 阿明, ReadyPlayerEmma, Tiffany J. Kim, George Stoitzev, Spencer Kim, Jerry Meng, Gabriel Tamborski, Cory Kujawski, Jeffrey Morgan, Spiking Neurons AB, Edmond Seymore, Alexandros Triantafyllidis, Lone Striker, Cap'n Zoog, Nikolai Manek, danny, ya boyyy, Derek Yates, usrbinkat, Mandus, TL, Nathan LeClaire, subjectnull, Imad Khwaja, webtim, Raven Klaugh, Asp the Wyvern, Gabriel Puliatti, Caitlyn Gatomon, Joseph William Delisle, Jonathan Leane, Luke Pendergrass, SuperWojo, Sebastain Graf, Will Dee, Fred von Graf, Andrey, Dan Guido, Daniel P. Andersen, Nitin Borwankar, Elle, Vitor Caleffi, biorpg, jjj, NimbleBox.ai, Pieter, Matthew Berman, terasurfer, Michael Davis, Alex, Stanislav Ovsiannikov

Thank you to all my generous patrons and donors!

And thank you again to a16z for their generous grant.

<!-- footer end -->

# Original model card: PygmalionAI's Pygmalion 2 13B

<h1 style="text-align: center">Pygmalion-2 13B</h1>

<h2 style="text-align: center">An instruction-tuned Llama-2 biased towards fiction writing and conversation.</h2>

## Model Details

The long-awaited release of our new models based on Llama-2 is finally here. Pygmalion-2 13B (formerly known as Metharme) is based on

[Llama-2 13B](https://huggingface.co/meta-llama/llama-2-13b-hf) released by Meta AI.

The Metharme models were an experiment to try and get a model that is usable for conversation, roleplaying and storywriting,

but which can be guided using natural language like other instruct models. After much deliberation, we reached the conclusion

that the Metharme prompting format is superior (and easier to use) compared to the classic Pygmalion.

This model was trained by doing supervised fine-tuning over a mixture of regular instruction data alongside roleplay, fictional stories

and conversations with synthetically generated instructions attached.

This model is freely available for both commercial and non-commercial use, as per the Llama-2 license.

## Prompting

The model has been trained on prompts using three different roles, which are denoted by the following tokens: `<|system|>`, `<|user|>` and `<|model|>`.

The `<|system|>` prompt can be used to inject out-of-channel information behind the scenes, while the `<|user|>` prompt should be used to indicate user input.

The `<|model|>` token should then be used to indicate that the model should generate a response. These tokens can happen multiple times and be chained up to

form a conversation history.

### Prompting example

The system prompt has been designed to allow the model to "enter" various modes and dictate the reply length. Here's an example:

```

<|system|>Enter RP mode. Pretend to be {{char}} whose persona follows:

{{persona}}

You shall reply to the user while staying in character, and generate long responses.

```

## Dataset

The dataset used to fine-tune this model includes our own [PIPPA](https://huggingface.co/datasets/PygmalionAI/PIPPA), along with several other instruction

datasets, and datasets acquired from various RP forums.

## Limitations and biases

The intended use-case for this model is fictional writing for entertainment purposes. Any other sort of usage is out of scope.

As such, it was **not** fine-tuned to be safe and harmless: the base model _and_ this fine-tune have been trained on data known to contain profanity and texts that are lewd or otherwise offensive. It may produce socially unacceptable or undesirable text, even if the prompt itself does not include anything explicitly offensive. Outputs might often be factually wrong or misleading.

## Acknowledgements

We would like to thank [SpicyChat](https://spicychat.ai/) for sponsoring the training for this model.

[<img src="https://raw.githubusercontent.com/OpenAccess-AI-Collective/axolotl/main/image/axolotl-badge-web.png" alt="Built with Axolotl" width="200" height="32"/>](https://github.com/OpenAccess-AI-Collective/axolotl)

|

TownsWu/PEG | TownsWu | 2024-05-23T09:42:26Z | 935 | 30 | transformers | [

"transformers",

"pytorch",

"bert",

"feature-extraction",

"PEG",

"sentence-similarity",

"mteb",

"zh",

"arxiv:2311.11691",

"model-index",

"endpoints_compatible",

"text-embeddings-inference",

"region:us"

]

| sentence-similarity | 2023-11-15T07:59:13Z | ---

language:

- zh

pipeline_tag: sentence-similarity

tags:

- PEG

- feature-extraction

- sentence-similarity

- transformers

- mteb

model-index:

- name: PEG

results:

- task:

type: Reranking

dataset:

type: C-MTEB/CMedQAv1-reranking

name: MTEB CMedQAv1

config: default

split: test

revision: None

metrics:

- type: map

value: 84.09137463267582

- type: mrr

value: 86.6288888888889

- task:

type: Reranking

dataset:

type: C-MTEB/CMedQAv2-reranking

name: MTEB CMedQAv2

config: default

split: test

revision: None

metrics:

- type: map

value: 86.55765031914974

- type: mrr

value: 89.4325396825397

- task:

type: Retrieval

dataset:

type: C_MTEB/CmedqaRetrieval

name: MTEB CmedqaRetrieval

config: default

split: dev

revision: None

metrics:

- type: map_at_1

value: 26.101000000000003

- type: map_at_10

value: 38.239000000000004

- type: map_at_100

value: 40.083

- type: map_at_1000

value: 40.205

- type: map_at_3

value: 34.386

- type: map_at_5

value: 36.425999999999995

- type: mrr_at_1

value: 39.434999999999995

- type: mrr_at_10

value: 46.967999999999996

- type: mrr_at_100

value: 47.946

- type: mrr_at_1000

value: 47.997

- type: mrr_at_3

value: 44.803

- type: mrr_at_5

value: 45.911

- type: ndcg_at_1

value: 39.434999999999995

- type: ndcg_at_10

value: 44.416

- type: ndcg_at_100

value: 51.773

- type: ndcg_at_1000

value: 53.888000000000005

- type: ndcg_at_3

value: 39.816

- type: ndcg_at_5

value: 41.467999999999996

- type: precision_at_1

value: 39.434999999999995

- type: precision_at_10

value: 9.786999999999999

- type: precision_at_100

value: 1.5810000000000002

- type: precision_at_1000

value: 0.184

- type: precision_at_3

value: 22.414

- type: precision_at_5

value: 15.943999999999999

- type: recall_at_1

value: 26.101000000000003

- type: recall_at_10

value: 53.82900000000001

- type: recall_at_100

value: 84.63199999999999

- type: recall_at_1000

value: 98.782

- type: recall_at_3

value: 39.585

- type: recall_at_5

value: 45.141

- task:

type: Retrieval

dataset:

type: C_MTEB/CovidRetrieval

name: MTEB CovidRetrieval

config: default

split: dev

revision: None

metrics:

- type: map_at_1

value: 70.39

- type: map_at_10

value: 78.93599999999999

- type: map_at_100

value: 79.202

- type: map_at_1000

value: 79.205

- type: map_at_3

value: 77.538

- type: map_at_5

value: 78.312

- type: mrr_at_1

value: 70.706

- type: mrr_at_10

value: 79.018

- type: mrr_at_100

value: 79.28399999999999

- type: mrr_at_1000

value: 79.288

- type: mrr_at_3

value: 77.713

- type: mrr_at_5

value: 78.462

- type: ndcg_at_1

value: 70.601

- type: ndcg_at_10

value: 82.555

- type: ndcg_at_100

value: 83.718

- type: ndcg_at_1000

value: 83.855

- type: ndcg_at_3

value: 79.779

- type: ndcg_at_5

value: 81.149

- type: precision_at_1

value: 70.601

- type: precision_at_10

value: 9.463000000000001

- type: precision_at_100

value: 0.9979999999999999

- type: precision_at_1000

value: 0.101

- type: precision_at_3

value: 28.871999999999996

- type: precision_at_5

value: 18.019

- type: recall_at_1

value: 70.39

- type: recall_at_10

value: 93.572

- type: recall_at_100

value: 98.736

- type: recall_at_1000

value: 99.895

- type: recall_at_3

value: 86.091

- type: recall_at_5

value: 89.384

- task:

type: Retrieval

dataset:

type: C_MTEB/DuRetrieval

name: MTEB DuRetrieval

config: default

split: dev

revision: None

metrics:

- type: map_at_1

value: 26.147

- type: map_at_10

value: 80.205

- type: map_at_100

value: 82.96

- type: map_at_1000

value: 82.999

- type: map_at_3

value: 55.16799999999999

- type: map_at_5

value: 69.798

- type: mrr_at_1

value: 89.8

- type: mrr_at_10

value: 93.16799999999999

- type: mrr_at_100

value: 93.22500000000001

- type: mrr_at_1000

value: 93.228

- type: mrr_at_3

value: 92.85

- type: mrr_at_5

value: 93.067

- type: ndcg_at_1

value: 89.8

- type: ndcg_at_10

value: 87.668

- type: ndcg_at_100

value: 90.16

- type: ndcg_at_1000

value: 90.505

- type: ndcg_at_3

value: 85.842

- type: ndcg_at_5

value: 85.101

- type: precision_at_1

value: 89.8

- type: precision_at_10

value: 42.225

- type: precision_at_100

value: 4.8149999999999995

- type: precision_at_1000

value: 0.48900000000000005

- type: precision_at_3

value: 76.967

- type: precision_at_5

value: 65.32

- type: recall_at_1

value: 26.147

- type: recall_at_10

value: 89.30399999999999

- type: recall_at_100

value: 97.609

- type: recall_at_1000

value: 99.409

- type: recall_at_3

value: 57.56

- type: recall_at_5

value: 74.78200000000001

- task:

type: Retrieval

dataset:

type: C_MTEB/EcomRetrieval

name: MTEB EcomRetrieval

config: default

split: dev

revision: None

metrics:

- type: map_at_1

value: 53.300000000000004

- type: map_at_10

value: 62.507000000000005

- type: map_at_100

value: 63.068000000000005

- type: map_at_1000

value: 63.08200000000001

- type: map_at_3

value: 60.050000000000004

- type: map_at_5

value: 61.41

- type: mrr_at_1

value: 53.300000000000004

- type: mrr_at_10

value: 62.507000000000005

- type: mrr_at_100

value: 63.068000000000005

- type: mrr_at_1000

value: 63.08200000000001

- type: mrr_at_3

value: 60.050000000000004

- type: mrr_at_5

value: 61.41

- type: ndcg_at_1

value: 53.300000000000004

- type: ndcg_at_10

value: 67.31700000000001

- type: ndcg_at_100

value: 69.862

- type: ndcg_at_1000

value: 70.231

- type: ndcg_at_3

value: 62.222

- type: ndcg_at_5

value: 64.66300000000001

- type: precision_at_1

value: 53.300000000000004

- type: precision_at_10

value: 8.260000000000002

- type: precision_at_100

value: 0.941

- type: precision_at_1000

value: 0.097

- type: precision_at_3

value: 22.833000000000002

- type: precision_at_5

value: 14.879999999999999

- type: recall_at_1

value: 53.300000000000004

- type: recall_at_10

value: 82.6

- type: recall_at_100

value: 94.1

- type: recall_at_1000

value: 97.0

- type: recall_at_3

value: 68.5

- type: recall_at_5

value: 74.4

- task:

type: Retrieval

dataset:

type: C_MTEB/MMarcoRetrieval

name: MTEB MMarcoRetrieval

config: default

split: dev

revision: None

metrics:

- type: map_at_1

value: 70.68799999999999

- type: map_at_10

value: 79.28399999999999

- type: map_at_100

value: 79.537

- type: map_at_1000

value: 79.545

- type: map_at_3

value: 77.643

- type: map_at_5

value: 78.694

- type: mrr_at_1

value: 73.05199999999999

- type: mrr_at_10

value: 79.794

- type: mrr_at_100

value: 80.024

- type: mrr_at_1000

value: 80.03099999999999

- type: mrr_at_3

value: 78.441

- type: mrr_at_5

value: 79.29

- type: ndcg_at_1

value: 73.05199999999999

- type: ndcg_at_10

value: 82.627

- type: ndcg_at_100

value: 83.737

- type: ndcg_at_1000

value: 83.946

- type: ndcg_at_3

value: 79.585

- type: ndcg_at_5

value: 81.306

- type: precision_at_1

value: 73.05199999999999

- type: precision_at_10

value: 9.835

- type: precision_at_100

value: 1.038

- type: precision_at_1000

value: 0.106

- type: precision_at_3

value: 29.756

- type: precision_at_5

value: 18.788

- type: recall_at_1

value: 70.68799999999999

- type: recall_at_10

value: 92.38300000000001

- type: recall_at_100

value: 97.347

- type: recall_at_1000

value: 98.992

- type: recall_at_3

value: 84.37

- type: recall_at_5

value: 88.434

- task:

type: Retrieval

dataset:

type: C_MTEB/MedicalRetrieval

name: MTEB MedicalRetrieval

config: default

split: dev

revision: None

metrics:

- type: map_at_1

value: 53.1

- type: map_at_10

value: 58.36599999999999

- type: map_at_100

value: 58.939

- type: map_at_1000

value: 58.99100000000001

- type: map_at_3

value: 57.15

- type: map_at_5

value: 57.794999999999995

- type: mrr_at_1

value: 53.2

- type: mrr_at_10

value: 58.416000000000004

- type: mrr_at_100

value: 58.989999999999995

- type: mrr_at_1000

value: 59.041

- type: mrr_at_3

value: 57.199999999999996

- type: mrr_at_5

value: 57.845

- type: ndcg_at_1

value: 53.1

- type: ndcg_at_10

value: 60.989000000000004

- type: ndcg_at_100

value: 63.967

- type: ndcg_at_1000

value: 65.436

- type: ndcg_at_3

value: 58.425000000000004

- type: ndcg_at_5

value: 59.583

- type: precision_at_1

value: 53.1

- type: precision_at_10

value: 6.93

- type: precision_at_100

value: 0.8370000000000001

- type: precision_at_1000

value: 0.096

- type: precision_at_3

value: 20.7

- type: precision_at_5

value: 12.98

- type: recall_at_1

value: 53.1

- type: recall_at_10

value: 69.3

- type: recall_at_100

value: 83.7

- type: recall_at_1000

value: 95.5

- type: recall_at_3

value: 62.1

- type: recall_at_5

value: 64.9

- task:

type: Reranking

dataset:

type: C-MTEB/Mmarco-reranking

name: MTEB MMarcoReranking

config: default

split: dev

revision: None

metrics:

- type: map

value: 33.548800108363665

- type: mrr

value: 32.529761904761905

- task:

type: Reranking

dataset:

type: C-MTEB/T2Reranking

name: MTEB T2Reranking

config: default

split: dev

revision: None

metrics:

- type: map

value: 69.43381583724414

- type: mrr

value: 80.47879657392181

- task:

type: Retrieval

dataset:

type: C_MTEB/T2Retrieval

name: MTEB T2Retrieval

config: default

split: dev

revision: None

metrics:

- type: map_at_1

value: 28.116000000000003

- type: map_at_10

value: 80.026

- type: map_at_100

value: 83.541

- type: map_at_1000

value: 83.592

- type: map_at_3

value: 56.092

- type: map_at_5

value: 69.114

- type: mrr_at_1

value: 91.557

- type: mrr_at_10

value: 93.73700000000001

- type: mrr_at_100

value: 93.808

- type: mrr_at_1000

value: 93.811

- type: mrr_at_3

value: 93.384

- type: mrr_at_5

value: 93.614

- type: ndcg_at_1

value: 91.553

- type: ndcg_at_10

value: 87.003

- type: ndcg_at_100

value: 90.128

- type: ndcg_at_1000

value: 90.615

- type: ndcg_at_3

value: 88.205

- type: ndcg_at_5

value: 86.978

- type: precision_at_1

value: 91.553

- type: precision_at_10

value: 43.25

- type: precision_at_100

value: 5.067

- type: precision_at_1000

value: 0.518

- type: precision_at_3

value: 77.25

- type: precision_at_5

value: 64.902

- type: recall_at_1

value: 28.116000000000003

- type: recall_at_10

value: 85.994

- type: recall_at_100

value: 96.345

- type: recall_at_1000

value: 98.867

- type: recall_at_3

value: 57.67099999999999

- type: recall_at_5

value: 72.26

- task:

type: Retrieval

dataset:

type: C_MTEB/VideoRetrieval

name: MTEB VideoRetrieval

config: default

split: dev

revision: None

metrics:

- type: map_at_1

value: 64.9

- type: map_at_10

value: 73.763

- type: map_at_100

value: 74.116

- type: map_at_1000

value: 74.12100000000001

- type: map_at_3

value: 72.15

- type: map_at_5

value: 73.25

- type: mrr_at_1

value: 64.9

- type: mrr_at_10

value: 73.763

- type: mrr_at_100

value: 74.116

- type: mrr_at_1000

value: 74.12100000000001

- type: mrr_at_3

value: 72.15

- type: mrr_at_5

value: 73.25

- type: ndcg_at_1

value: 64.9

- type: ndcg_at_10

value: 77.639

- type: ndcg_at_100

value: 79.396

- type: ndcg_at_1000

value: 79.554

- type: ndcg_at_3

value: 74.406

- type: ndcg_at_5

value: 76.385

- type: precision_at_1

value: 64.9

- type: precision_at_10

value: 8.959999999999999

- type: precision_at_100

value: 0.979

- type: precision_at_1000

value: 0.099

- type: precision_at_3

value: 26.967000000000002

- type: precision_at_5

value: 17.14

- type: recall_at_1

value: 64.9

- type: recall_at_10

value: 89.60000000000001

- type: recall_at_100

value: 97.89999999999999

- type: recall_at_1000

value: 99.2

- type: recall_at_3

value: 80.9

- type: recall_at_5

value: 85.7

---

license: apache-2.0

library_name: transformers

---

<h1 align="center">PEG: Towards Robust Text Retrieval with Progressive Learning</h1>

## Model Details

We propose the PEG model (a Progressively Learned Textual Embedding), which progressively adjusts the weights of samples contributing to the loss within an extremely large batch, based on the difficulty levels of negative samples.

We have amassed an extensive collection of over 110 million data samples, spanning a wide range of fields such as general knowledge, finance, tourism and medicine.

Our technical report is available at [Paper](https://arxiv.org/pdf/2311.11691.pdf)

## Usage (HuggingFace Transformers)

Install transformers:

```

pip install transformers

```

Then load model and predict:

```python

from transformers import AutoModel, AutoTokenizer

import torch

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('TownsWu/PEG')

model = AutoModel.from_pretrained('TownsWu/PEG')

sentences = ['如何更换花呗绑定银行卡', '花呗更改绑定银行卡']

# Tokenize sentences

inputs = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():
    last_hidden_state = model(**inputs, return_dict=True).last_hidden_state
    # Use the first ([CLS]) token of each sentence as its embedding
    embeddings = last_hidden_state[:, 0]

print("embeddings:")

print(embeddings)

```
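The example above stops at printing the embeddings. A common next step for a sentence-similarity model, assumed here rather than taken from this card, is to compare the two vectors by cosine similarity. The sketch uses plain Python lists with made-up numbers so that it runs without downloading the model; with real output, `embeddings[0].tolist()` and `embeddings[1].tolist()` would be the inputs.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Made-up 4-dimensional vectors standing in for two sentence embeddings.
emb_a = [0.2, 0.1, -0.4, 0.3]
emb_b = [0.25, 0.05, -0.35, 0.3]
print(round(cosine_similarity(emb_a, emb_b), 4))
```

Values close to 1 indicate that the two sentences are near paraphrases. Whether the model expects its embeddings to be normalised before comparison is not stated on the card.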

## Contact

If you have any question or suggestion related to this project, feel free to open an issue or pull request.

You can also email Tong Wu ([email protected]).

## Citation

If you find our work helpful for your research, please consider citing the following BibTeX entry:

```

@article{wu2023towards,

title={Towards Robust Text Retrieval with Progressive Learning},

author={Wu, Tong and Qin, Yulei and Zhang, Enwei and Xu, Zihan and Gao, Yuting and Li, Ke and Sun, Xing},

journal={arXiv preprint arXiv:2311.11691},

year={2023}

}

``` |

MaziyarPanahi/Calme-7B-Instruct-v0.2 | MaziyarPanahi | 2024-04-03T16:54:37Z | 935 | 11 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"generated_from_trainer",

"7b",

"calme",

"conversational",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-03-14T20:18:50Z | ---

license: apache-2.0

tags:

- generated_from_trainer

- mistral

- 7b

- calme

model-index:

- name: Calme-7B-Instruct-v0.2

results: []

model_name: Calme-7B-Instruct-v0.2

inference: false

model_creator: MaziyarPanahi

pipeline_tag: text-generation

quantized_by: MaziyarPanahi

---

<img src="https://cdn-uploads.huggingface.co/production/uploads/5fd5e18a90b6dc4633f6d292/LzEf6vvq2qIiys-q7l9Hq.webp" width="550" />

# MaziyarPanahi/Calme-7B-Instruct-v0.2

## Model Description

Calme-7B is a state-of-the-art language model with 7 billion parameters, fine-tuned over high-quality datasets on top of Mistral-7B. The Calme-7B models excel in generating text that resonates with clarity, calmness, and coherence.

### How to Use

```python
# Option 1: use a pipeline as a high-level helper
from transformers import pipeline

pipe = pipeline("text-generation", model="MaziyarPanahi/Calme-7B-Instruct-v0.2")

# Option 2: load the tokenizer and model directly
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("MaziyarPanahi/Calme-7B-Instruct-v0.2")
model = AutoModelForCausalLM.from_pretrained("MaziyarPanahi/Calme-7B-Instruct-v0.2")
```

### Quantized Models

> I love how GGUF democratizes the use of Large Language Models (LLMs) on commodity hardware, more specifically, personal computers without any accelerated hardware. Because of this, I am committed to converting and quantizing any models I fine-tune to make them accessible to everyone!

- GGUF (2/3/4/5/6/8 bits): [MaziyarPanahi/Calme-7B-Instruct-v0.2-GGUF](https://huggingface.co/MaziyarPanahi/Calme-7B-Instruct-v0.2-GGUF)

## Examples

```

<s>[INST] You are a helpful, respectful and honest assistant. Always answer as helpfully as possible, while being safe. Your answers should not include any harmful, unethical, racist, sexist, toxic, dangerous, or illegal content. Please ensure that your responses are socially unbiased and positive in nature.

If a question does not make any sense, or is not factually coherent, explain why instead of answering something not correct. If you don't know the answer to a question, please don't share false information.

describe about pros and cons of docker system. [/INST]

```

<details>

<summary>Show me the response</summary>

```

```

</details>


```

<s> [INST] Mark is faster than Mary, Mary is faster than Joe. Is Joe faster than Mark? Let's think step by step [/INST]

```

<details>

<summary>Show me the response</summary>

```

```

</details>


```

<s> [INST] explain step by step 25-4*2+3=? [/INST]

```

<details>

<summary>Show me the response</summary>

```

```

</details>
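The English prompts above wrap the request in `[INST] ... [/INST]` after a `<s>` marker, optionally preceded by a system-style instruction. A minimal sketch of a helper that assembles such a string is shown here. The function name `build_inst_prompt` and its parameters are hypothetical, the exact spacing is an assumption (the card itself uses both `<s>[INST]` and `<s> [INST]`), and the card does not say whether the tokenizer adds `<s>` on its own, so the marker can be switched off.

```python
def build_inst_prompt(user_message, system_message=None, include_bos=True):
    """Assemble a prompt in the [INST] ... [/INST] layout used by the examples.

    Assumption: the system text and the user text are separated by a blank
    line, as in the first example of this card.
    """
    parts = []
    if system_message:
        parts.append(system_message.strip())
    parts.append(user_message.strip())
    body = "\n\n".join(parts)
    prefix = "<s>" if include_bos else ""
    return f"{prefix}[INST] {body} [/INST]"


prompt = build_inst_prompt("explain step by step 25-4*2+3=?")
print(prompt)
```

Passing `include_bos=False` may be the safer choice when the tokenizer is configured to prepend the beginning-of-sequence token itself.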

**Multilingual:**

```

<s> [INST] Vous êtes un assistant utile, respectueux et honnête. Répondez toujours de la manière la plus utile possible, tout en étant sûr. Vos réponses ne doivent inclure aucun contenu nuisible, contraire à l'éthique, raciste, sexiste, toxique, dangereux ou illégal. Assurez-vous que vos réponses sont socialement impartiales et de nature positive.

Si une question n'a pas de sens ou n'est pas cohérente d'un point de vue factuel, expliquez pourquoi au lieu de répondre quelque chose d'incorrect. Si vous ne connaissez pas la réponse à une question, veuillez ne pas partager de fausses informations.

Décrivez les avantages et les inconvénients du système Docker.[/INST]

```

<details>

<summary>Show me the response</summary>

```

```

</details>

```

<s>[INST] Ви - корисний, поважний та чесний помічник. Завжди відповідайте максимально корисно, будучи безпечним. Ваші відповіді не повинні містити шкідливого, неетичного, расистського, сексистського, токсичного, небезпечного або нелегального контенту. Будь ласка, переконайтеся, що ваші відповіді соціально неупереджені та мають позитивний характер.

Якщо питання не має сенсу або не є фактично послідовним, поясніть чому, замість того, щоб відповідати щось некоректне. Якщо ви не знаєте відповіді на питання, будь ласка, не діліться неправдивою інформацією.

Опис про переваги та недоліки системи Docker.[/INST]

```

<details>

<summary>Show me the response</summary>

```

```

</details> |

MoritzLaurer/deberta-v3-large-zeroshot-v2.0-c | MoritzLaurer | 2024-04-04T07:04:53Z | 935 | 15 | transformers | [

"transformers",

"safetensors",

"deberta-v2",

"text-classification",

"zero-shot-classification",

"en",

"dataset:nyu-mll/multi_nli",

"dataset:fever",

"arxiv:2312.17543",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| zero-shot-classification | 2024-03-20T21:23:01Z | ---

language:

- en

tags:

- text-classification

- zero-shot-classification

pipeline_tag: zero-shot-classification

library_name: transformers

license: mit

datasets:

- nyu-mll/multi_nli

- fever

---

# Model description: deberta-v3-large-zeroshot-v2.0-c

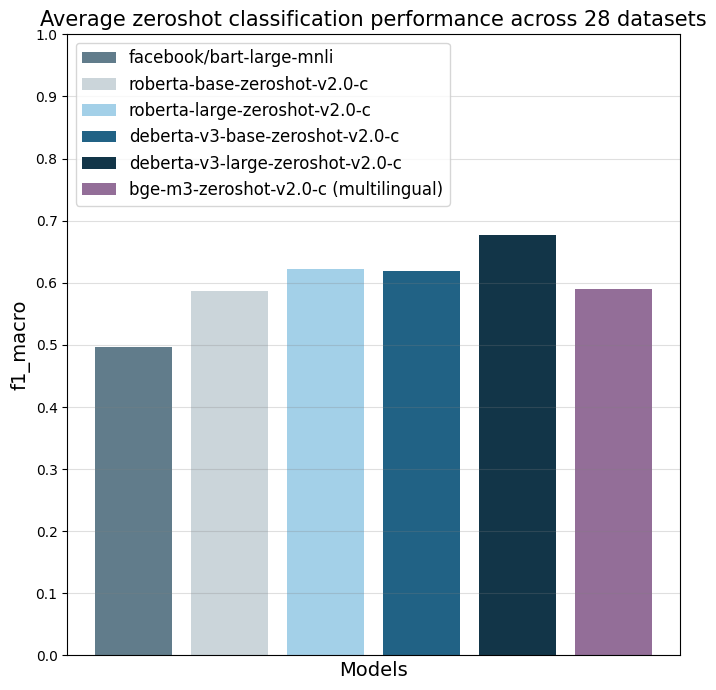

## zeroshot-v2.0 series of models

Models in this series are designed for efficient zeroshot classification with the Hugging Face pipeline.

These models can do classification without training data and run on both GPUs and CPUs.

An overview of the latest zeroshot classifiers is available in my [Zeroshot Classifier Collection](https://huggingface.co/collections/MoritzLaurer/zeroshot-classifiers-6548b4ff407bb19ff5c3ad6f).

The main update of this `zeroshot-v2.0` series of models is that several models are trained on fully commercially-friendly data for users with strict license requirements.

These models can do one universal classification task: determine whether a hypothesis is "true" or "not true" given a text (`entailment` vs. `not_entailment`).

This task format is based on the Natural Language Inference task (NLI).

The task is so universal that any classification task can be reformulated into this task by the Hugging Face pipeline.
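To make the reformulation concrete, the sketch below turns a set of candidate labels into (text, hypothesis) pairs and picks the label whose hypothesis scores highest for entailment. It is an illustration only, not the pipeline's implementation: the function names, the template string `"This text is about {}"`, and the word-overlap scorer are all assumptions, and the scorer merely stands in for the NLI model's entailment probability.

```python
def build_nli_pairs(text, candidate_labels, hypothesis_template="This text is about {}"):
    """Turn each candidate label into a (premise, hypothesis) pair."""
    return [(text, hypothesis_template.format(label)) for label in candidate_labels]


def classify(text, candidate_labels, entailment_score,
             hypothesis_template="This text is about {}"):
    """Return the label whose hypothesis gets the highest entailment score."""
    pairs = build_nli_pairs(text, candidate_labels, hypothesis_template)
    scores = [entailment_score(premise, hypothesis) for premise, hypothesis in pairs]
    best_index = max(range(len(candidate_labels)), key=scores.__getitem__)
    return candidate_labels[best_index], scores


def toy_entailment_score(premise, hypothesis):
    """Hypothetical stand-in for an NLI model: share of hypothesis words found in the premise."""
    premise_words = set(premise.lower().split())
    hypothesis_words = hypothesis.lower().split()
    return sum(word in premise_words for word in hypothesis_words) / len(hypothesis_words)


text = "The central bank raised interest rates again this quarter"
labels = ["politics", "interest rates", "sports"]

label, scores = classify(text, labels, toy_entailment_score)
print(label, scores)
```

In real use, the toy scorer would be replaced by the model's `entailment` probability for each pair, which is the step the Hugging Face zero-shot classification pipeline handles for you.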

## Training data

Models with a "`-c`" in the name are trained on two types of fully commercially-friendly data:

1. Synthetic data generated with [Mixtral-8x7B-Instruct-v0.1](https://huggingface.co/mistralai/Mixtral-8x7B-Instruct-v0.1).

I first created a list of 500+ diverse text classification tasks for 25 professions in conversations with Mistral-large. The data was manually curated.

I then used this as seed data to generate several hundred thousand texts for these tasks with Mixtral-8x7B-Instruct-v0.1.

The final dataset used is available in the [synthetic_zeroshot_mixtral_v0.1](https://huggingface.co/datasets/MoritzLaurer/synthetic_zeroshot_mixtral_v0.1) dataset in the subset `mixtral_written_text_for_tasks_v4`. Data curation was done in multiple iterations and will be improved in future iterations.

2. Two commercially-friendly NLI datasets: ([MNLI](https://huggingface.co/datasets/nyu-mll/multi_nli), [FEVER-NLI](https://huggingface.co/datasets/fever)).

These datasets were added to increase generalization.