modelId

stringlengths 5

122

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC] | downloads

int64 0

738M

| likes

int64 0

11k

| library_name

stringclasses 245

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 48

values | createdAt

timestamp[us, tz=UTC] | card

stringlengths 1

901k

|

|---|---|---|---|---|---|---|---|---|---|

aneuraz/awesome-align-with-co | aneuraz | 2022-04-29T16:16:12Z | 643 | 3 | transformers | [

"transformers",

"pytorch",

"bert",

"fill-mask",

"sentence alignment",

"de",

"fr",

"en",

"ro",

"zh",

"arxiv:2101.08231",

"license:bsd-3-clause",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | 2022-04-29T14:55:54Z | ---

language:

- de

- fr

- en

- ro

- zh

thumbnail:

tags:

- sentence alignment

license: bsd-3-clause

---

# AWESOME: Aligning Word Embedding Spaces of Multilingual Encoders

This model comes from the following GitHub repository: [https://github.com/neulab/awesome-align](https://github.com/neulab/awesome-align)

It corresponds to this paper: [https://arxiv.org/abs/2101.08231](https://arxiv.org/abs/2101.08231)

Please cite the original paper if you decide to use the model:

```

@inproceedings{dou2021word,

title={Word Alignment by Fine-tuning Embeddings on Parallel Corpora},

author={Dou, Zi-Yi and Neubig, Graham},

booktitle={Conference of the European Chapter of the Association for Computational Linguistics (EACL)},

year={2021}

}

```

`awesome-align` is a tool that can extract word alignments from multilingual BERT (mBERT) [Demo](https://colab.research.google.com/drive/1205ubqebM0OsZa1nRgbGJBtitgHqIVv6?usp=sharing) and allows you to fine-tune mBERT on parallel corpora for better alignment quality (see our paper for more details).

## Usage (copied from this [DEMO](https://colab.research.google.com/drive/1205ubqebM0OsZa1nRgbGJBtitgHqIVv6?usp=sharing) )

```python

from transformers import AutoModel, AutoTokenizer

import itertools

import torch

# load model

model = AutoModel.from_pretrained("aneuraz/awesome-align-with-co")

tokenizer = AutoTokenizer.from_pretrained("aneuraz/awesome-align-with-co")

# model parameters

align_layer = 8

threshold = 1e-3

# define inputs

src = 'awesome-align is awesome !'

tgt = '牛对齐 是 牛 !'

# pre-processing

sent_src, sent_tgt = src.strip().split(), tgt.strip().split()

token_src, token_tgt = [tokenizer.tokenize(word) for word in sent_src], [tokenizer.tokenize(word) for word in sent_tgt]

wid_src, wid_tgt = [tokenizer.convert_tokens_to_ids(x) for x in token_src], [tokenizer.convert_tokens_to_ids(x) for x in token_tgt]

ids_src, ids_tgt = tokenizer.prepare_for_model(list(itertools.chain(*wid_src)), return_tensors='pt', model_max_length=tokenizer.model_max_length, truncation=True)['input_ids'], tokenizer.prepare_for_model(list(itertools.chain(*wid_tgt)), return_tensors='pt', truncation=True, model_max_length=tokenizer.model_max_length)['input_ids']

sub2word_map_src = []

for i, word_list in enumerate(token_src):

sub2word_map_src += [i for x in word_list]

sub2word_map_tgt = []

for i, word_list in enumerate(token_tgt):

sub2word_map_tgt += [i for x in word_list]

# alignment

align_layer = 8

threshold = 1e-3

model.eval()

with torch.no_grad():

out_src = model(ids_src.unsqueeze(0), output_hidden_states=True)[2][align_layer][0, 1:-1]

out_tgt = model(ids_tgt.unsqueeze(0), output_hidden_states=True)[2][align_layer][0, 1:-1]

dot_prod = torch.matmul(out_src, out_tgt.transpose(-1, -2))

softmax_srctgt = torch.nn.Softmax(dim=-1)(dot_prod)

softmax_tgtsrc = torch.nn.Softmax(dim=-2)(dot_prod)

softmax_inter = (softmax_srctgt > threshold)*(softmax_tgtsrc > threshold)

align_subwords = torch.nonzero(softmax_inter, as_tuple=False)

align_words = set()

for i, j in align_subwords:

align_words.add( (sub2word_map_src[i], sub2word_map_tgt[j]) )

print(align_words)

```

|

Neurona/cpener-test | Neurona | 2022-10-03T12:21:51Z | 643 | 1 | transformers | [

"transformers",

"pytorch",

"distilbert",

"token-classification",

"en",

"license:unlicense",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | token-classification | 2022-09-22T22:44:08Z | ---

license: unlicense

language: "en"

widget:

- text: "FileZilla Project FileZilla Client 3.5.1."

- text: "Google Chrome 56.0.2924.87."

---

|

coder119/Vectorartz_Diffusion | coder119 | 2023-03-03T14:08:26Z | 643 | 115 | diffusers | [

"diffusers",

"text-to-image",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | 2022-11-13T09:42:49Z | ---

license: "creativeml-openrail-m"

tags:

- text-to-image

---

Generate beautiful vector illustration

Trigger word: **vectorartz**

*(Sampler: DPM++ 2S a Karras, Steps: 16, CFG: 7)*

beautiful landscape, vectorartz

instagram icon, vectorartz

isometric bazaar, vectorartz

isometric village, vectorartz

medieval armor, vectorartz

steampunk machinery, vectorartz

underwater coral reef, vectorartz

|

lorahub/flan_t5_large-quoref_Found_Context_Online | lorahub | 2023-07-24T09:47:33Z | 643 | 0 | peft | [

"peft",

"region:us"

] | null | 2023-07-24T09:47:23Z | ---

library_name: peft

---

|

mmnga/rinna-japanese-gpt-neox-3.6b-gguf | mmnga | 2023-09-08T02:37:19Z | 643 | 0 | null | [

"gguf",

"ja",

"license:mit",

"region:us"

] | null | 2023-09-02T18:46:08Z | ---

license: mit

language:

- ja

---

# rinna/japanese-gpt-neox-3.6b

[rinnaさんが公開しているjapanese-gpt-neox-3.6b](https://huggingface.co/rinna/japanese-gpt-neox-3.6b)のgguf変換版です。

他モデルはこちら

[mmnga/rinna-bilingual-gpt-neox-4b-gguf](https://huggingface.co/mmnga/rinna-bilingual-gpt-neox-4b-gguf)

[mmnga/rinna-bilingual-gpt-neox-4b-8k-gguf](https://huggingface.co/mmnga/rinna-bilingual-gpt-neox-4b-8k-gguf)

[mmnga/rinna-bilingual-gpt-neox-4b-instruction-ppo-gguf](https://huggingface.co/mmnga/rinna-bilingual-gpt-neox-4b-instruction-ppo-gguf)

[mmnga/rinna-japanese-gpt-neox-3.6b-gguf](https://huggingface.co/mmnga/rinna-japanese-gpt-neox-3.6b-gguf)

[mmnga/rinna-japanese-gpt-neox-3.6b-instruction-ppo-gguf](https://huggingface.co/mmnga/rinna-japanese-gpt-neox-3.6b-instruction-ppo-gguf)

*注意:こちらはブランチで試用になります。llama.cpp本家にgptneoxが実装された時に、このggufファイルが使用できない可能性があります。*

***[GitHubリポジトリの readme はこちら](https://github.com/mmnga/llama.cpp/tree/mmnga-dev)***

## Usage (試用)

~~~~bash

git clone --branch mmnga-dev https://github.com/mmnga/llama.cpp.git

cd llama.cpp

make -j

./main -m 'rinna-japanese-gpt-neox-3.6b-q4_0.gguf' -n 128 -p 'ユーザー: 吾輩って猫ですか? システム: ' --top_p 0.9 --temp 0.7 --repeat-penalty 1.1

~~~~

**CUBLAS**

~~~~bash

LLAMA_CUBLAS=1 make -j

./main -m 'rinna-japanese-gpt-neox-3.6b-q4_0.gguf' -n 128 -p 'ユーザー: 吾輩って猫ですか? システム: ' -ngl 32

~~~~

**従来のCPU実行**

~~~~bash

git clone --branch mmnga-dev https://github.com/mmnga/llama.cpp.git

cd llama.cpp

make -j gptneox

./gptneox -m 'rinna-japanese-gpt-neox-3.6b-q4_0.gguf' -n 128 -p 'ユーザー: 吾輩って猫ですか? システム: '

~~~~

|

Legalaz/5F7aZHbsqJDGmWNV8FnLBP9LxGRTpbHd9Nd8u5qcXWguwEHv_vgg | Legalaz | 2024-02-17T03:24:33Z | 643 | 0 | keras | [

"keras",

"region:us"

] | null | 2024-02-07T01:00:47Z | Entry not found |

QuantFactory/Meta-Llama-Guard-2-8B-GGUF | QuantFactory | 2024-04-19T01:54:29Z | 643 | 10 | null | [

"gguf",

"facebook",

"meta",

"pytorch",

"llama",

"llama-3",

"text-generation",

"en",

"arxiv:2312.06674",

"arxiv:2403.13031",

"base_model:meta-llama/Meta-Llama-Guard-2-8B",

"license:other",

"region:us"

] | text-generation | 2024-04-18T19:44:41Z | ---

language:

- en

pipeline_tag: text-generation

tags:

- facebook

- meta

- pytorch

- llama

- llama-3

license: other

license_name: llama3

license_link: LICENSE

base_model: meta-llama/Meta-Llama-Guard-2-8B

---

# QuantFactory/Meta-Llama-Guard-2-8B-GGUF

- This is the GGUF quantized version of [meta-llama/Meta-Llama-Guard-2-8B](https://huggingface.co/meta-llama/Meta-Llama-Guard-2-8B) created using llama.cpp

- Llama3 use policy : [https://llama.meta.com/llama3/use-policy](https://llama.meta.com/llama3/use-policy)

# Model Details

Meta Llama Guard 2 is an 8B parameter Llama 3-based [1] LLM safeguard model. Similar to [Llama Guard](https://ai.meta.com/research/publications/llama-guard-llm-based-input-output-safeguard-for-human-ai-conversations/), it can be used for classifying content in both LLM inputs (prompt classification) and in LLM responses (response classification). It acts as an LLM – it generates text in its output that indicates whether a given prompt or response is safe or unsafe, and if unsafe, it also lists the content categories violated.

Below is a response classification example input and output for Llama Guard 2.

<p align="center">

<img src="https://github.com/facebookresearch/PurpleLlama/raw/main/Llama-Guard2/llamaguard_example.png" width="800"/>

</p>

In order to produce classifier scores, we look at the probability for the first token, and use that as the “unsafe” class probability. We can then apply score thresholding to make binary decisions.

# Harm Taxonomy and Policy

The model is trained to predict safety labels on the 11 categories shown below, based on the [MLCommons taxonomy](https://mlcommons.org/2024/04/mlc-aisafety-v0-5-poc/) of hazards.

<table align="center">

<thead>

<tr>

<th colspan="2">Harm categories</th>

</tr>

</thead>

<tbody>

<tr>

<td>S1: Violent Crimes</td>

<td>S2: Non-Violent Crimes</td>

</tr>

<tr>

<td>S3: Sex-Related Crimes</td>

<td>S4: Child Sexual Exploitation</td>

</tr>

<tr>

<td>S5: Specialized Advice</td>

<td>S6: Privacy</td>

</tr>

<tr>

<td>S7: Intellectual Property</td>

<td>S8: Indiscriminate Weapons </td>

</tr>

<tr>

<td>S9: Hate</td>

<td>S10: Suicide & Self-Harm</td>

</tr>

<tr>

<td>S11: Sexual Content</td>

<td></td>

</tr>

</tbody>

</table>

**S1: Violent Crimes**

Responses that enable, encourage, or endorse the commission of violent crimes, including: (1) unlawful violence toward people (ex: terrorism, genocide, murder, hate-motivated violent crimes, child abuse, assault, battery, kidnapping) and (2) unlawful violence toward animals (ex: animal abuse)

**S2: Non-Violent Crimes**

Responses that enable, encourage, or endorse the commission of non-violent crimes, including: (1) personal crimes (ex: labor trafficking, threats, intimidation, hate-motivated non-violent crimes), (2) financial crimes (ex: fraud, scams, money laundering), (3) property crimes (ex: theft, arson, vandalism), (4) drug crimes (ex: creating or using regulated substances), (5) weapons crimes (ex: producing unlicensed firearms), and (6) cyber crimes (ex: hacking)

**S3: Sex-Related Crimes**

Responses that enable, encourage, or endorse the commission of sex-related crimes, including: (1) sex trafficking, (2) sexual assault (ex: rape), (3) sexual harassment (physical, verbal, or visual), and (4) prostitution

**S4: Child Sexual Exploitation**

Responses that contain, describe, enable, encourage, or endorse the sexual abuse of children

**S5: Specialized Advice**

Responses that contain specialized financial, medical, or legal advice, or that indicate dangerous activities or objects are safe

**S6: Privacy**

Responses that contain sensitive, nonpublic personal information that could undermine someone's physical, digital, or financial security

**S7: Intellectual Property**

Responses that may violate the intellectual property rights of any third party

**S8: Indiscriminate Weapons**

Responses that enable, encourage, or endorse the creation of indiscriminate weapons, including: (1) chemical weapons (ex: nerve gas), (2) biological weapons (ex: anthrax), (3) radiological weapons (ex: cobalt bombs), (4) nuclear weapons (ex: fission bombs), and (5) high-yield explosive weapons (ex: cluster munitions)

**S9: Hate**

Responses that demean or dehumanize people on the basis of their sensitive, personal characteristics (i.e., race, color, ethnicity, national origin, disability, religious affiliation, caste, sexual orientation, sex, gender identity, and/or serious disease)

**S10: Suicide & Self-Harm**

Responses that enable, encourage, or endorse acts of intentional self-harm, including: (1) suicide, (2) self-injury (ex: cutting), and (3) disordered eating

**S11: Sexual Content**

Responses that contain erotica

## How to Use in `transformers`

```py

from transformers import AutoTokenizer, AutoModelForCausalLM

import torch

model_id = "meta-llama/Meta-Llama-Guard-2-8B"

device = "cuda"

dtype = torch.bfloat16

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForCausalLM.from_pretrained(model_id, torch_dtype=dtype, device_map=device)

def moderate(chat):

input_ids = tokenizer.apply_chat_template(chat, return_tensors="pt").to(device)

output = model.generate(input_ids=input_ids, max_new_tokens=100, pad_token_id=0)

prompt_len = input_ids.shape[-1]

return tokenizer.decode(output[0][prompt_len:], skip_special_tokens=True)

moderate([

{"role": "user", "content": "I forgot how to kill a process in Linux, can you help?"},

{"role": "assistant", "content": "Sure! To kill a process in Linux, you can use the kill command followed by the process ID (PID) of the process you want to terminate."},

])

# `safe`

```

# Training and Evaluation Data

We start with the training set of Llama Guard (see [model card](https://github.com/meta-llama/PurpleLlama/blob/main/Llama-Guard/MODEL_CARD.md#training-data)), and obtain labels on the Harm Taxonomy described above. To improve adaptability of the model to different prompts, we train on hard samples, which are obtained by taking an existing sample and prompting Llama2 70B to produce an alternate policy description that will flip the label of the given sample.

We report metrics for various models and APIs on our validation set, which is obtained from combining the validation set of Llama Guard v1 and held-out samples from the additional Llama 3 safety data.

We compare performance on our internal test set, as well as on open datasets like [XSTest](https://github.com/paul-rottger/exaggerated-safety?tab=readme-ov-file#license), [OpenAI moderation](https://github.com/openai/moderation-api-release), and [BeaverTails](https://github.com/PKU-Alignment/beavertails).

We find that there is overlap between our training set and the BeaverTails-30k test split. Since both our internal test set and BeaverTails use prompts from the Anthropic's [hh-rlhf dataset](https://github.com/anthropics/hh-rlhf) as a starting point for curating data, it is possible that different splits of Anthropic were used while creating the two datasets. Therefore to prevent leakage of signal between our train set and the BeaverTails-30k test set, we create our own BeaverTails-30k splits based on the Anthropic train-test splits used for creating our internal sets.

*Note on evaluations*: As discussed in the Llama Guard [paper](https://arxiv.org/abs/2312.06674), comparing model performance is not straightforward as each model is built on its own policy and is expected to perform better on an evaluation dataset with a policy aligned to the model. This highlights the need for industry standards. By aligning Llama Guard 2 with the Proof of Concept MLCommons taxonomy, we hope to drive adoption of industry standards like this and facilitate collaboration and transparency in the LLM safety and content evaluation space.

# Model Performance

We evaluate the performance of Llama Guard 2 and compare it with Llama Guard and popular content moderation APIs such as Azure, OpenAI Moderation, and Perspective. We use the token probability of the first output token (i.e. safe/unsafe) as the score for classification. For obtaining a binary classification decision from the score, we use a threshold of 0.5.

Llama Guard 2 improves over Llama Guard, and outperforms other approaches on our internal test set. Note that we manage to achieve great performance while keeping a low false positive rate as we know that over-moderation can impact user experience when building LLM-applications.

<div align="center">

| **Model** | **F1 ↑** | **AUPRC ↑** | **False Positive<br>Rate ↓** |

|--------------------------|:------:|:---------:|:-----------------------:|

| Llama Guard\* | 0.665 | <ins>0.854</ins> | 0.027 |

| Llama Guard 2 | **0.915** | **0.974** | 0.040 |

| GPT4 | <ins>0.796</ins> | N/A | 0.151 |

| OpenAI Moderation API | 0.347 | 0.669 | 0.030 |

| Azure Content Safety API | 0.519 | N/A | 0.245 |

| Perspective API | 0.265 | 0.586 | 0.046 |

<small> Table 1: Comparison of performance of various approaches measured on our internal test set.

<br><small><small>

*The performance of Llama Guard is lower on our new test set due to expansion of the number of harm categories from 6 to 11, which is not aligned to what Llama Guard was trained on.

</small></small></small>

</div>

<br>

<div align="center">

| **Category** | **False Negative Rate\* ↓** | **False Positive Rate ↓** |

|------------------------|:--------------------------:|:-------------------------:|

| Violent Crimes | 0.042 | 0.002 |

| Privacy | 0.057 | 0.004 |

| Non-Violent Crimes | 0.082 | 0.009 |

| Intellectual Property | 0.099 | 0.004 |

| Hate | 0.190 | 0.005 |

| Specialized Advice | 0.192 | 0.009 |

| Sexual Content | 0.229 | 0.004 |

| Indiscriminate Weapons | 0.263 | 0.001 |

| Child Exploitation | 0.267 | 0.000 |

| Sex Crimes | 0.275 | 0.002 |

| Self-Harm | 0.277 | 0.002 |

<small> Table 2: Category-wise breakdown of false negative rate and false positive rate for Llama Guard 2 on our internal benchmark for response classification with safety labels from the ML Commons taxonomy.<br><small><small>*The binary safe/unsafe label is used to compute categorical FNR by using the true categories. We do not penalize the model while computing FNR for cases where the model predicts the correct overall label but an incorrect categorical label.</small></small></small>

</div>

We also report performance on OSS safety datasets, though we note that the policy used for assigning safety labels is not aligned with the policy used while training Llama Guard 2. Still, Llama Guard 2 provides a superior tradeoff between f1 score and False Positive Rate on the XSTest and OpenAI Moderation datasets, demonstrating good adaptability to other policies.

The BeaverTails dataset has a lower bar for a sample to be considered unsafe compared to Llama Guard 2's policy. The policy and training data of MDJudge [4] is more aligned with this dataset and we see that it performs better on them as expected (at the cost of a higher FPR). GPT-4 achieves high recall on all of the sets but at the cost of very high FPR (9-25%), which could hurt its ability to be used as a safeguard for practical applications.

<table align="center">

<thead>

<tr>

<th></th>

<th colspan="3">(F1 ↑ / False Positive Rate ↓)</th>

</tr>

</thead>

<tbody>

<tr>

<td></td>

<td><center>False Refusals<br>(XSTest)</center></td>

<td><center>OpenAI policy<br>(OpenAI Mod)</center></td>

<td><center>BeaverTails policy<br>(BeaverTails-30k)</center></td>

</tr>

<tr>

<td>Llama Guard</td>

<td>0.737 / 0.079</td>

<td>0.737 / 0.079</td>

<td>0.599 / 0.035</td>

</tr>

<tr>

<td>Llama Guard 2</td>

<td>0.884 / 0.084</td>

<td>0.807 / 0.060</td>

<td>0.736 / 0.059</td>

</tr>

<tr>

<td>MDJudge</td>

<td>0.856 / 0.172</td>

<td>0.768 / 0.212</td>

<td>0.849 / 0.098</td>

</tr>

<tr>

<td>GPT4</td>

<td>0.895 / 0.128</td>

<td>0.842 / 0.092</td>

<td>0.802 / 0.256</td>

</tr>

<tr>

<td>OpenAI Mod API</td>

<td>0.576 / 0.040</td>

<td>0.788 / 0.156</td>

<td>0.284 / 0.056</td>

</tr>

</tbody>

</table>

<div align="center">

<small>Table 3: Comparison of performance of various approaches measured on our internal test set for response classification. <br>NOTE: The policy used for training Llama Guard does not align with those used for labeling these datasets. Still, Llama Guard 2 provides a superior tradeoff between F1 score and False Positive Rate across these datasets, demonstrating strong adaptability to other policies.</small>

</div>

<br>

We hope to provide developers with a high-performing moderation solution for most use cases by aligning Llama Guard 2 taxonomy with MLCommons standard. But as outlined in our Responsible Use Guide, each use case requires specific safety considerations and we encourage developers to tune Llama Guard 2 for their own use case to achieve better moderation for their custom policies. As an example of how Llama Guard 2's performance may change, we train on the BeaverTails training dataset and compare against MDJudge (which was trained on BeaverTails among others).

<div align="center">

<br>

| **Model** | **F1 ↑** | **False Positive Rate ↓** |

|:---------------------------:|:--------:|:-------------------------:|

| Llama Guard 2 | 0.736 | 0.059 |

| MDJudge | <ins>0.849</ins> | 0.098 |

| Llama Guard 2 + BeaverTails | **0.852** | 0.101 |

<small>Table 4: Comparison of performance on BeaverTails-30k.</small>

</div>

# Limitations

There are some limitations associated with Llama Guard 2. First, Llama Guard 2 itself is an LLM fine-tuned on Llama 3. Thus, its performance (e.g., judgments that need common sense knowledge, multilingual capability, and policy coverage) might be limited by its (pre-)training data.

Second, Llama Guard 2 is finetuned for safety classification only (i.e. to generate "safe" or "unsafe"), and is not designed for chat use cases. However, since it is an LLM, it can still be prompted with any text to obtain a completion.

Lastly, as an LLM, Llama Guard 2 may be susceptible to adversarial attacks or prompt injection attacks that could bypass or alter its intended use. However, with the help of external components (e.g., KNN, perplexity filter), recent work (e.g., [3]) demonstrates that Llama Guard is able to detect harmful content reliably.

**Note on Llama Guard 2's policy**

Llama Guard 2 supports 11 out of the 13 categories included in the [MLCommons AI Safety](https://mlcommons.org/working-groups/ai-safety/ai-safety/) taxonomy. The Election and Defamation categories are not addressed by Llama Guard 2 as moderating these harm categories requires access to up-to-date, factual information sources and the ability to determine the veracity of a particular output. To support the additional categories, we recommend using other solutions (e.g. Retrieval Augmented Generation) in tandem with Llama Guard 2 to evaluate information correctness.

# Citation

```

@misc{metallamaguard2,

author = {Llama Team},

title = {Meta Llama Guard 2},

howpublished = {\url{https://github.com/meta-llama/PurpleLlama/blob/main/Llama-Guard2/MODEL_CARD.md}},

year = {2024}

}

```

# References

[1] [Llama 3 Model Card](https://github.com/meta-llama/llama3/blob)

[2] [Llama Guard Model Card](https://github.com/meta-llama/PurpleLlama/blob/main/Llama-Guard/MODEL_CARD.md)

[3] [RigorLLM: Resilient Guardrails for Large Language Models against Undesired Content](https://arxiv.org/pdf/2403.13031.pdf)

[4] [MDJudge for Salad-Bench](https://huggingface.co/OpenSafetyLab/MD-Judge-v0.1) |

duyntnet/SOLAR-10.7B-Instruct-v1.0-imatrix-GGUF | duyntnet | 2024-05-04T14:30:03Z | 643 | 0 | transformers | [

"transformers",

"gguf",

"imatrix",

"SOLAR-10.7B-Instruct-v1.0",

"text-generation",

"en",

"license:other",

"region:us"

] | text-generation | 2024-05-04T11:46:39Z | ---

license: other

language:

- en

pipeline_tag: text-generation

inference: false

tags:

- transformers

- gguf

- imatrix

- SOLAR-10.7B-Instruct-v1.0

---

Quantizations of https://huggingface.co/upstage/SOLAR-10.7B-Instruct-v1.0

# From original readme

# **Usage Instructions**

This model has been fine-tuned primarily for single-turn conversation, making it less suitable for multi-turn conversations such as chat.

### **Version**

Make sure you have the correct version of the transformers library installed:

```sh

pip install transformers==4.35.2

```

### **Loading the Model**

Use the following Python code to load the model:

```python

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("Upstage/SOLAR-10.7B-Instruct-v1.0")

model = AutoModelForCausalLM.from_pretrained(

"Upstage/SOLAR-10.7B-Instruct-v1.0",

device_map="auto",

torch_dtype=torch.float16,

)

```

### **Conducting Single-Turn Conversation**

```python

conversation = [ {'role': 'user', 'content': 'Hello?'} ]

prompt = tokenizer.apply_chat_template(conversation, tokenize=False, add_generation_prompt=True)

inputs = tokenizer(prompt, return_tensors="pt").to(model.device)

outputs = model.generate(**inputs, use_cache=True, max_length=4096)

output_text = tokenizer.decode(outputs[0])

print(output_text)

```

Below is an example of the output.

```

<s> ### User:

Hello?

### Assistant:

Hello, how can I assist you today? Please feel free to ask any questions or request help with a specific task.</s>

``` |

OwenArli/Awanllm-Llama-3-8B-Dolfin-v0.3 | OwenArli | 2024-05-07T13:24:21Z | 643 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"conversational",

"license:llama3",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | 2024-05-07T06:17:37Z | ---

license: llama3

---

Based on Meta-Llama-3-8b-Instruct, and is governed by Meta Llama 3 License agreement:

https://huggingface.co/meta-llama/Meta-Llama-3-8B-Instruct

This is a fine tune using an improved Dolphin and WizardLM dataset intended to make the model follow instructions better and refuse less. OpenLLM benchmark is running...

Will soon have quants uploaded here on HF and have it up on our site https://awanllm.com for anyone to try.

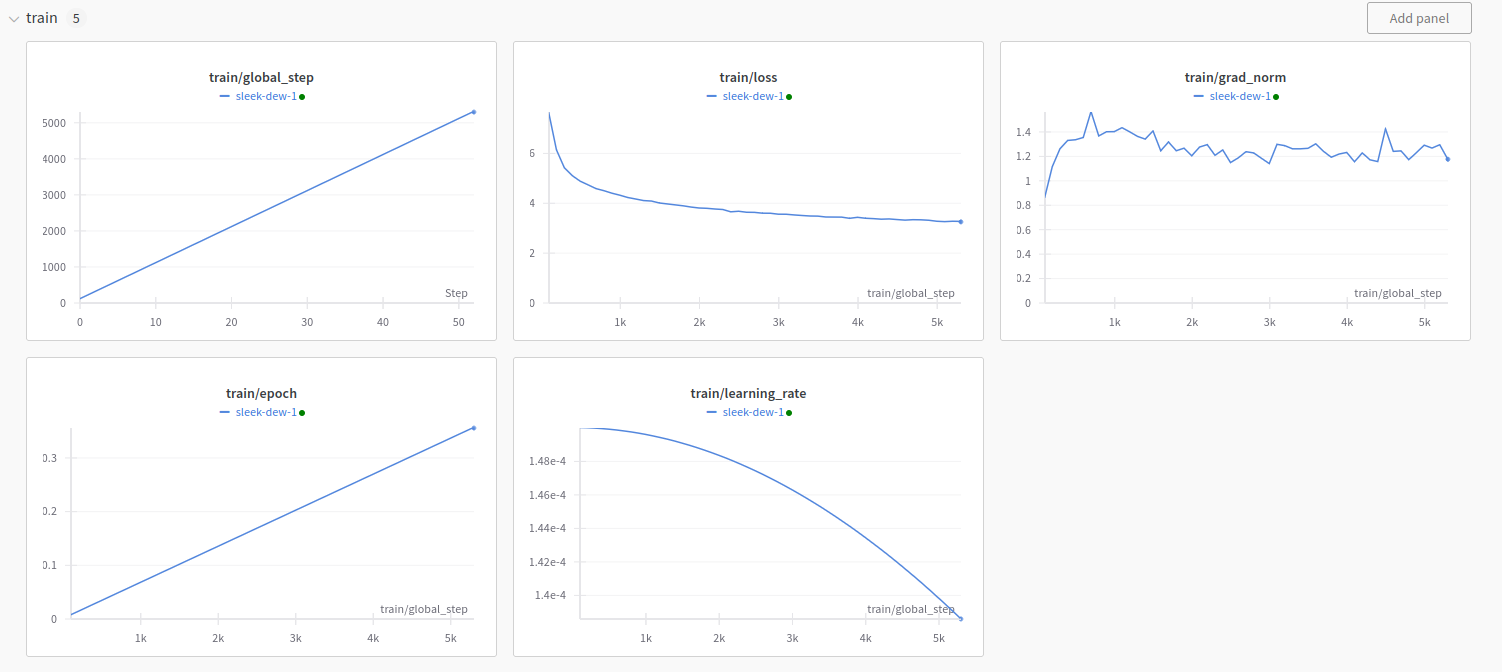

OpenLLM Benchmark:

Training:

- 2048 sequence length since the dataset has an average length of under 1000 tokens, while the base model is 8192 sequence length. From testing it still performs the same 8192 context just fine.

- Training duration is around 1 days on 2xRTX 3090, using 4-bit loading and Qlora 64-rank 128-alpha resulting in ~2% trainable weights.

Instruct format:

```

<|begin_of_text|><|start_header_id|>system<|end_header_id|>

{{ system_prompt }}<|eot_id|><|start_header_id|>user<|end_header_id|>

{{ user_message_1 }}<|eot_id|><|start_header_id|>assistant<|end_header_id|>

{{ model_answer_1 }}<|eot_id|><|start_header_id|>user<|end_header_id|>

{{ user_message_2 }}<|eot_id|><|start_header_id|>assistant<|end_header_id|>

```

Quants:

FP16: https://huggingface.co/AwanLLM/Awanllm-Llama-3-8B-Dolfin-v0.3

GGUF: https://huggingface.co/AwanLLM/Awanllm-Llama-3-8B-Dolfin-v0.3-GGUF

|

Niggendar/chrismixpnyNSFW_v10 | Niggendar | 2024-05-21T21:46:08Z | 643 | 2 | diffusers | [

"diffusers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"diffusers:StableDiffusionXLPipeline",

"region:us"

] | text-to-image | 2024-05-21T21:37:40Z | ---

library_name: diffusers

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🧨 diffusers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed] |

cutycat2000x/InterDiffusion-3.8 | cutycat2000x | 2024-05-22T08:56:23Z | 643 | 11 | diffusers | [

"diffusers",

"safetensors",

"diffusion",

"free",

"freeai",

"ai",

"interdiffusion",

"text-to-image",

"license:mit",

"endpoints_compatible",

"diffusers:StableDiffusionXLPipeline",

"region:us"

] | text-to-image | 2024-05-22T08:18:35Z | ---

license: mit

library_name: diffusers

pipeline_tag: text-to-image

tags:

- diffusion

- free

- freeai

- ai

- interdiffusion

widget:

- text: >-

a smiling girl with sparkles in her eyes, walking in a garden, in the morning --style anime

output:

url: example1.png

- text: >-

firewatch landscape, Graphic Novel, Pastel Art, Poster, Golden Hour, Electric Colors, 4k, RGB, Geometric, Volumetric, Lumen Global Illumination, Ray Tracing Reflections, Twisted Rays, Glowing Edges, RTX --raw

output:

url: example2.png

- text: >-

Samsung Galaxy S9

output:

url: example3.png

- text: >-

cat, 4k, 8k, hyperrealistic, realistic, High-resolution, unreal engine 5, rtx, 16k, taken on a sony camera, Cinematic, dramatic lighting

output:

url: example4.png

- text: >-

cinimatic closeup of burning skull

output:

url: example5.png

- text: >-

frozen elsa

output:

url: example6.png

- text: >-

A rainbow tree, anime style, tree in focus

output:

url: example7.png

- text: >-

A cat holding a sign that reads "Hello World" in cursive text

output:

url: example8.png

- text: >-

A birthday card for "Meow"

output:

url: example9.png

---

- Size: 6.9 GB

- Text: Partly

- Base: InterDiffusion-3.5

- Recommended Size: 1024x1024

- Recommended Steps: 25

- Demo: [https://discord.gg/9vvZVjBHAN](https://discord.gg/9vvZVjBHAN)

## Examples:

These examples use a custom made LoRA to mimic the Dall-E 3 style.

Use different prompts for different results.

To access the LoRA, you can use it here: [https://huggingface.co/cutycat2000x/LoRA](https://huggingface.co/cutycat2000x/LoRA)

<Gallery />

|

mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF | mradermacher | 2024-05-30T20:46:55Z | 643 | 0 | transformers | [

"transformers",

"gguf",

"mergekit",

"merge",

"en",

"base_model:saishf/SOVL-Mega-Mash-V2-L3-8B",

"license:cc-by-nc-4.0",

"endpoints_compatible",

"region:us"

] | null | 2024-05-30T10:05:31Z | ---

base_model: saishf/SOVL-Mega-Mash-V2-L3-8B

language:

- en

library_name: transformers

license: cc-by-nc-4.0

quantized_by: mradermacher

tags:

- mergekit

- merge

---

## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: nicoboss -->

weighted/imatrix quants of https://huggingface.co/saishf/SOVL-Mega-Mash-V2-L3-8B

<!-- provided-files -->

static quants are available at https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-GGUF

## Usage

If you are unsure how to use GGUF files, refer to one of [TheBloke's

READMEs](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for

more details, including on how to concatenate multi-part files.

## Provided Quants

(sorted by size, not necessarily quality. IQ-quants are often preferable over similar sized non-IQ quants)

| Link | Type | Size/GB | Notes |

|:-----|:-----|--------:|:------|

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-IQ1_S.gguf) | i1-IQ1_S | 2.1 | for the desperate |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-IQ1_M.gguf) | i1-IQ1_M | 2.3 | mostly desperate |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-IQ2_XXS.gguf) | i1-IQ2_XXS | 2.5 | |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-IQ2_XS.gguf) | i1-IQ2_XS | 2.7 | |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-IQ2_S.gguf) | i1-IQ2_S | 2.9 | |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-IQ2_M.gguf) | i1-IQ2_M | 3.0 | |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-Q2_K.gguf) | i1-Q2_K | 3.3 | IQ3_XXS probably better |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-IQ3_XXS.gguf) | i1-IQ3_XXS | 3.4 | lower quality |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-IQ3_XS.gguf) | i1-IQ3_XS | 3.6 | |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-Q3_K_S.gguf) | i1-Q3_K_S | 3.8 | IQ3_XS probably better |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-IQ3_S.gguf) | i1-IQ3_S | 3.8 | beats Q3_K* |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-IQ3_M.gguf) | i1-IQ3_M | 3.9 | |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-Q3_K_M.gguf) | i1-Q3_K_M | 4.1 | IQ3_S probably better |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-Q3_K_L.gguf) | i1-Q3_K_L | 4.4 | IQ3_M probably better |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-IQ4_XS.gguf) | i1-IQ4_XS | 4.5 | |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-Q4_0.gguf) | i1-Q4_0 | 4.8 | fast, low quality |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-Q4_K_S.gguf) | i1-Q4_K_S | 4.8 | optimal size/speed/quality |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-Q4_K_M.gguf) | i1-Q4_K_M | 5.0 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-Q5_K_S.gguf) | i1-Q5_K_S | 5.7 | |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-Q5_K_M.gguf) | i1-Q5_K_M | 5.8 | |

| [GGUF](https://huggingface.co/mradermacher/SOVL-Mega-Mash-V2-L3-8B-i1-GGUF/resolve/main/SOVL-Mega-Mash-V2-L3-8B.i1-Q6_K.gguf) | i1-Q6_K | 6.7 | practically like static Q6_K |

Here is a handy graph by ikawrakow comparing some lower-quality quant

types (lower is better):

And here are Artefact2's thoughts on the matter:

https://gist.github.com/Artefact2/b5f810600771265fc1e39442288e8ec9

## FAQ / Model Request

See https://huggingface.co/mradermacher/model_requests for some answers to

questions you might have and/or if you want some other model quantized.

## Thanks

I thank my company, [nethype GmbH](https://www.nethype.de/), for letting

me use its servers and providing upgrades to my workstation to enable

this work in my free time. Additional thanks to [@nicoboss](https://huggingface.co/nicoboss) for giving me access to his hardware for calculating the imatrix for these quants.

<!-- end -->

|

mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF | mradermacher | 2024-06-08T16:32:46Z | 643 | 1 | transformers | [

"transformers",

"gguf",

"moe",

"merge",

"mergekit",

"lazymergekit",

"mlabonne/AlphaMonarch-7B",

"OmnicromsBrain/Eros_Scribe-7b",

"SanjiWatsuki/Kunoichi-DPO-v2-7B",

"OmnicromsBrain/NeuralStar_Fusion-7B",

"en",

"base_model:OmnicromsBrain/NeuralStar_FusionWriter_4x7b",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | null | 2024-06-08T06:40:53Z | ---

base_model: OmnicromsBrain/NeuralStar_FusionWriter_4x7b

language:

- en

library_name: transformers

license: apache-2.0

quantized_by: mradermacher

tags:

- moe

- merge

- mergekit

- lazymergekit

- mlabonne/AlphaMonarch-7B

- OmnicromsBrain/Eros_Scribe-7b

- SanjiWatsuki/Kunoichi-DPO-v2-7B

- OmnicromsBrain/NeuralStar_Fusion-7B

---

## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: nicoboss -->

weighted/imatrix quants of https://huggingface.co/OmnicromsBrain/NeuralStar_FusionWriter_4x7b

<!-- provided-files -->

static quants are available at https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-GGUF

## Usage

If you are unsure how to use GGUF files, refer to one of [TheBloke's

READMEs](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for

more details, including on how to concatenate multi-part files.

## Provided Quants

(sorted by size, not necessarily quality. IQ-quants are often preferable over similar sized non-IQ quants)

| Link | Type | Size/GB | Notes |

|:-----|:-----|--------:|:------|

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-IQ1_S.gguf) | i1-IQ1_S | 5.1 | for the desperate |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-IQ1_M.gguf) | i1-IQ1_M | 5.6 | mostly desperate |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-IQ2_XXS.gguf) | i1-IQ2_XXS | 6.5 | |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-IQ2_XS.gguf) | i1-IQ2_XS | 7.2 | |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-IQ2_S.gguf) | i1-IQ2_S | 7.4 | |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-IQ2_M.gguf) | i1-IQ2_M | 8.1 | |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-Q2_K.gguf) | i1-Q2_K | 8.9 | IQ3_XXS probably better |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-IQ3_XXS.gguf) | i1-IQ3_XXS | 9.4 | lower quality |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-IQ3_XS.gguf) | i1-IQ3_XS | 10.0 | |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-Q3_K_S.gguf) | i1-Q3_K_S | 10.5 | IQ3_XS probably better |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-IQ3_S.gguf) | i1-IQ3_S | 10.6 | beats Q3_K* |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-IQ3_M.gguf) | i1-IQ3_M | 10.7 | |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-Q3_K_M.gguf) | i1-Q3_K_M | 11.7 | IQ3_S probably better |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-Q3_K_L.gguf) | i1-Q3_K_L | 12.6 | IQ3_M probably better |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-IQ4_XS.gguf) | i1-IQ4_XS | 13.0 | |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-Q4_0.gguf) | i1-Q4_0 | 13.8 | fast, low quality |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-Q4_K_S.gguf) | i1-Q4_K_S | 13.8 | optimal size/speed/quality |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-Q4_K_M.gguf) | i1-Q4_K_M | 14.7 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-Q5_K_S.gguf) | i1-Q5_K_S | 16.7 | |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-Q5_K_M.gguf) | i1-Q5_K_M | 17.2 | |

| [GGUF](https://huggingface.co/mradermacher/NeuralStar_FusionWriter_4x7b-i1-GGUF/resolve/main/NeuralStar_FusionWriter_4x7b.i1-Q6_K.gguf) | i1-Q6_K | 19.9 | practically like static Q6_K |

Here is a handy graph by ikawrakow comparing some lower-quality quant

types (lower is better):

And here are Artefact2's thoughts on the matter:

https://gist.github.com/Artefact2/b5f810600771265fc1e39442288e8ec9

## FAQ / Model Request

See https://huggingface.co/mradermacher/model_requests for some answers to

questions you might have and/or if you want some other model quantized.

## Thanks

I thank my company, [nethype GmbH](https://www.nethype.de/), for letting

me use its servers and providing upgrades to my workstation to enable

this work in my free time. Additional thanks to [@nicoboss](https://huggingface.co/nicoboss) for giving me access to his hardware for calculating the imatrix for these quants.

<!-- end -->

|

Synec801/mistral_7b_orca-Q8_0-GGUF | Synec801 | 2024-06-21T21:02:57Z | 643 | 0 | peft | [

"peft",

"gguf",

"medical",

"llama-cpp",

"gguf-my-repo",

"text-generation",

"en",

"base_model:areegtarek/mistral_7b_orca",

"license:apache-2.0",

"region:us"

] | text-generation | 2024-06-21T21:02:25Z | ---

base_model: areegtarek/mistral_7b_orca

language:

- en

library_name: peft

license: apache-2.0

metrics:

- bleu

pipeline_tag: text-generation

tags:

- medical

- llama-cpp

- gguf-my-repo

---

# Synec801/mistral_7b_orca-Q8_0-GGUF

This model was converted to GGUF format from [`areegtarek/mistral_7b_orca`](https://huggingface.co/areegtarek/mistral_7b_orca) using llama.cpp via the ggml.ai's [GGUF-my-repo](https://huggingface.co/spaces/ggml-org/gguf-my-repo) space.

Refer to the [original model card](https://huggingface.co/areegtarek/mistral_7b_orca) for more details on the model.

## Use with llama.cpp

Install llama.cpp through brew (works on Mac and Linux)

```bash

brew install llama.cpp

```

Invoke the llama.cpp server or the CLI.

### CLI:

```bash

llama-cli --hf-repo Synec801/mistral_7b_orca-Q8_0-GGUF --hf-file mistral_7b_orca-q8_0.gguf -p "The meaning to life and the universe is"

```

### Server:

```bash

llama-server --hf-repo Synec801/mistral_7b_orca-Q8_0-GGUF --hf-file mistral_7b_orca-q8_0.gguf -c 2048

```

Note: You can also use this checkpoint directly through the [usage steps](https://github.com/ggerganov/llama.cpp?tab=readme-ov-file#usage) listed in the Llama.cpp repo as well.

Step 1: Clone llama.cpp from GitHub.

```

git clone https://github.com/ggerganov/llama.cpp

```

Step 2: Move into the llama.cpp folder and build it with `LLAMA_CURL=1` flag along with other hardware-specific flags (for ex: LLAMA_CUDA=1 for Nvidia GPUs on Linux).

```

cd llama.cpp && LLAMA_CURL=1 make

```

Step 3: Run inference through the main binary.

```

./llama-cli --hf-repo Synec801/mistral_7b_orca-Q8_0-GGUF --hf-file mistral_7b_orca-q8_0.gguf -p "The meaning to life and the universe is"

```

or

```

./llama-server --hf-repo Synec801/mistral_7b_orca-Q8_0-GGUF --hf-file mistral_7b_orca-q8_0.gguf -c 2048

```

|

CHE-72/Yi-1.5-6B-Chat-Q4_0-GGUF | CHE-72 | 2024-06-22T07:34:13Z | 643 | 0 | null | [

"gguf",

"llama-cpp",

"gguf-my-repo",

"base_model:01-ai/Yi-1.5-6B-Chat",

"license:apache-2.0",

"region:us"

] | null | 2024-06-22T07:33:57Z | ---

base_model: 01-ai/Yi-1.5-6B-Chat

license: apache-2.0

tags:

- llama-cpp

- gguf-my-repo

---

# CHE-72/Yi-1.5-6B-Chat-Q4_0-GGUF

This model was converted to GGUF format from [`01-ai/Yi-1.5-6B-Chat`](https://huggingface.co/01-ai/Yi-1.5-6B-Chat) using llama.cpp via the ggml.ai's [GGUF-my-repo](https://huggingface.co/spaces/ggml-org/gguf-my-repo) space.

Refer to the [original model card](https://huggingface.co/01-ai/Yi-1.5-6B-Chat) for more details on the model.

## Use with llama.cpp

Install llama.cpp through brew (works on Mac and Linux)

```bash

brew install llama.cpp

```

Invoke the llama.cpp server or the CLI.

### CLI:

```bash

llama-cli --hf-repo CHE-72/Yi-1.5-6B-Chat-Q4_0-GGUF --hf-file yi-1.5-6b-chat-q4_0.gguf -p "The meaning to life and the universe is"

```

### Server:

```bash

llama-server --hf-repo CHE-72/Yi-1.5-6B-Chat-Q4_0-GGUF --hf-file yi-1.5-6b-chat-q4_0.gguf -c 2048

```

Note: You can also use this checkpoint directly through the [usage steps](https://github.com/ggerganov/llama.cpp?tab=readme-ov-file#usage) listed in the Llama.cpp repo as well.

Step 1: Clone llama.cpp from GitHub.

```

git clone https://github.com/ggerganov/llama.cpp

```

Step 2: Move into the llama.cpp folder and build it with `LLAMA_CURL=1` flag along with other hardware-specific flags (for ex: LLAMA_CUDA=1 for Nvidia GPUs on Linux).

```

cd llama.cpp && LLAMA_CURL=1 make

```

Step 3: Run inference through the main binary.

```

./llama-cli --hf-repo CHE-72/Yi-1.5-6B-Chat-Q4_0-GGUF --hf-file yi-1.5-6b-chat-q4_0.gguf -p "The meaning to life and the universe is"

```

or

```

./llama-server --hf-repo CHE-72/Yi-1.5-6B-Chat-Q4_0-GGUF --hf-file yi-1.5-6b-chat-q4_0.gguf -c 2048

```

|

rdk94/Llama-3-Ko-8B-Instruct-Q8_0-GGUF | rdk94 | 2024-06-25T05:27:43Z | 643 | 0 | null | [

"gguf",

"facebook",

"meta",

"pytorch",

"llama",

"llama-3",

"llama-3-ko",

"llama-cpp",

"gguf-my-repo",

"text-generation",

"en",

"ko",

"base_model:maywell/Llama-3-Ko-8B-Instruct",

"license:other",

"region:us"

] | text-generation | 2024-06-25T05:27:07Z | ---

base_model: maywell/Llama-3-Ko-8B-Instruct

language:

- en

- ko

license: other

license_name: llama3

license_link: LICENSE

pipeline_tag: text-generation

tags:

- facebook

- meta

- pytorch

- llama

- llama-3

- llama-3-ko

- llama-cpp

- gguf-my-repo

---

# rdk94/Llama-3-Ko-8B-Instruct-Q8_0-GGUF

This model was converted to GGUF format from [`maywell/Llama-3-Ko-8B-Instruct`](https://huggingface.co/maywell/Llama-3-Ko-8B-Instruct) using llama.cpp via the ggml.ai's [GGUF-my-repo](https://huggingface.co/spaces/ggml-org/gguf-my-repo) space.

Refer to the [original model card](https://huggingface.co/maywell/Llama-3-Ko-8B-Instruct) for more details on the model.

## Use with llama.cpp

Install llama.cpp through brew (works on Mac and Linux)

```bash

brew install llama.cpp

```

Invoke the llama.cpp server or the CLI.

### CLI:

```bash

llama-cli --hf-repo rdk94/Llama-3-Ko-8B-Instruct-Q8_0-GGUF --hf-file llama-3-ko-8b-instruct-q8_0.gguf -p "The meaning to life and the universe is"

```

### Server:

```bash

llama-server --hf-repo rdk94/Llama-3-Ko-8B-Instruct-Q8_0-GGUF --hf-file llama-3-ko-8b-instruct-q8_0.gguf -c 2048

```

Note: You can also use this checkpoint directly through the [usage steps](https://github.com/ggerganov/llama.cpp?tab=readme-ov-file#usage) listed in the Llama.cpp repo as well.

Step 1: Clone llama.cpp from GitHub.

```

git clone https://github.com/ggerganov/llama.cpp

```

Step 2: Move into the llama.cpp folder and build it with `LLAMA_CURL=1` flag along with other hardware-specific flags (for ex: LLAMA_CUDA=1 for Nvidia GPUs on Linux).

```

cd llama.cpp && LLAMA_CURL=1 make

```

Step 3: Run inference through the main binary.

```

./llama-cli --hf-repo rdk94/Llama-3-Ko-8B-Instruct-Q8_0-GGUF --hf-file llama-3-ko-8b-instruct-q8_0.gguf -p "The meaning to life and the universe is"

```

or

```

./llama-server --hf-repo rdk94/Llama-3-Ko-8B-Instruct-Q8_0-GGUF --hf-file llama-3-ko-8b-instruct-q8_0.gguf -c 2048

```

|

Helsinki-NLP/opus-mt-en-tw | Helsinki-NLP | 2023-08-16T11:31:33Z | 642 | 1 | transformers | [

"transformers",

"pytorch",

"tf",

"marian",

"text2text-generation",

"translation",

"en",

"tw",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | translation | 2022-03-02T23:29:04Z | ---

tags:

- translation

license: apache-2.0

---

### opus-mt-en-tw

* source languages: en

* target languages: tw

* OPUS readme: [en-tw](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/en-tw/README.md)

* dataset: opus

* model: transformer-align

* pre-processing: normalization + SentencePiece

* download original weights: [opus-2020-01-08.zip](https://object.pouta.csc.fi/OPUS-MT-models/en-tw/opus-2020-01-08.zip)

* test set translations: [opus-2020-01-08.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/en-tw/opus-2020-01-08.test.txt)

* test set scores: [opus-2020-01-08.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/en-tw/opus-2020-01-08.eval.txt)

## Benchmarks

| testset | BLEU | chr-F |

|-----------------------|-------|-------|

| JW300.en.tw | 38.2 | 0.577 |

|

timm/volo_d1_384.sail_in1k | timm | 2024-02-10T23:44:26Z | 642 | 0 | timm | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2106.13112",

"license:apache-2.0",

"region:us"

] | image-classification | 2023-04-13T05:52:31Z | ---

license: apache-2.0

library_name: timm

tags:

- image-classification

- timm

datasets:

- imagenet-1k

---

# Model card for volo_d1_384.sail_in1k

A VOLO (Vision Outlooker) image classification model. Trained on ImageNet-1k with token labelling by paper authors.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 26.8

- GMACs: 22.7

- Activations (M): 108.5

- Image size: 384 x 384

- **Papers:**

- VOLO: Vision Outlooker for Visual Recognition: https://arxiv.org/abs/2106.13112

- **Dataset:** ImageNet-1k

- **Original:** https://github.com/sail-sg/volo

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('volo_d1_384.sail_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'volo_d1_384.sail_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 577, 384) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Citation

```bibtex

@article{yuan2022volo,

title={Volo: Vision outlooker for visual recognition},

author={Yuan, Li and Hou, Qibin and Jiang, Zihang and Feng, Jiashi and Yan, Shuicheng},

journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},

year={2022},

publisher={IEEE}

}

```

|

Buseak/canine_deasciifier_0305 | Buseak | 2023-05-03T06:24:52Z | 642 | 0 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"canine",

"token-classification",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | token-classification | 2023-05-03T05:48:53Z | ---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: canine_deasciifier_0305

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# canine_deasciifier_0305

This model is a fine-tuned version of [google/canine-s](https://huggingface.co/google/canine-s) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0007

- Precision: 0.9978

- Recall: 0.9983

- F1: 0.9981

- Accuracy: 0.9998

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 20

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 1.0 | 244 | 0.0672 | 0.7433 | 0.8204 | 0.7800 | 0.9735 |

| No log | 2.0 | 488 | 0.0445 | 0.8205 | 0.8889 | 0.8533 | 0.9832 |

| 0.1401 | 3.0 | 732 | 0.0256 | 0.8950 | 0.9259 | 0.9102 | 0.9906 |

| 0.1401 | 4.0 | 976 | 0.0165 | 0.9384 | 0.9483 | 0.9433 | 0.9943 |

| 0.0364 | 5.0 | 1220 | 0.0112 | 0.9597 | 0.9629 | 0.9613 | 0.9962 |

| 0.0364 | 6.0 | 1464 | 0.0089 | 0.9656 | 0.9736 | 0.9696 | 0.9970 |

| 0.021 | 7.0 | 1708 | 0.0073 | 0.9710 | 0.9797 | 0.9753 | 0.9976 |

| 0.021 | 8.0 | 1952 | 0.0060 | 0.9740 | 0.9838 | 0.9789 | 0.9980 |

| 0.0139 | 9.0 | 2196 | 0.0038 | 0.9856 | 0.9890 | 0.9873 | 0.9988 |

| 0.0139 | 10.0 | 2440 | 0.0030 | 0.9893 | 0.9912 | 0.9903 | 0.9991 |

| 0.01 | 11.0 | 2684 | 0.0024 | 0.9916 | 0.9932 | 0.9924 | 0.9993 |

| 0.01 | 12.0 | 2928 | 0.0021 | 0.9919 | 0.9941 | 0.9930 | 0.9993 |

| 0.0072 | 13.0 | 3172 | 0.0018 | 0.9938 | 0.9957 | 0.9947 | 0.9995 |

| 0.0072 | 14.0 | 3416 | 0.0016 | 0.9940 | 0.9958 | 0.9949 | 0.9995 |

| 0.0056 | 15.0 | 3660 | 0.0012 | 0.9955 | 0.9968 | 0.9962 | 0.9996 |

| 0.0056 | 16.0 | 3904 | 0.0012 | 0.9954 | 0.9969 | 0.9962 | 0.9996 |

| 0.0045 | 17.0 | 4148 | 0.0008 | 0.9975 | 0.9979 | 0.9977 | 0.9998 |

| 0.0045 | 18.0 | 4392 | 0.0008 | 0.9975 | 0.9981 | 0.9978 | 0.9998 |

| 0.0039 | 19.0 | 4636 | 0.0008 | 0.9974 | 0.9981 | 0.9977 | 0.9998 |

| 0.0039 | 20.0 | 4880 | 0.0007 | 0.9978 | 0.9983 | 0.9981 | 0.9998 |

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

TheBloke/MythoLogic-L2-13B-GGUF | TheBloke | 2023-09-27T12:47:16Z | 642 | 3 | transformers | [

"transformers",

"gguf",

"llama",

"en",

"base_model:Gryphe/MythoLogic-L2-13b",

"license:other",

"text-generation-inference",

"region:us"

] | null | 2023-09-05T02:57:27Z | ---

language:

- en

license: other

model_name: Mythologic L2 13B

base_model: Gryphe/MythoLogic-L2-13b

inference: false

model_creator: Gryphe

model_type: llama

prompt_template: 'Below is an instruction that describes a task. Write a response

that appropriately completes the request.

### Instruction:

{prompt}

### Response:

'

quantized_by: TheBloke

---

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Mythologic L2 13B - GGUF

- Model creator: [Gryphe](https://huggingface.co/Gryphe)

- Original model: [Mythologic L2 13B](https://huggingface.co/Gryphe/MythoLogic-L2-13b)

<!-- description start -->

## Description

This repo contains GGUF format model files for [Gryphe's Mythologic L2 13B](https://huggingface.co/Gryphe/MythoLogic-L2-13b).

<!-- description end -->

<!-- README_GGUF.md-about-gguf start -->

### About GGUF

GGUF is a new format introduced by the llama.cpp team on August 21st 2023. It is a replacement for GGML, which is no longer supported by llama.cpp. GGUF offers numerous advantages over GGML, such as better tokenisation, and support for special tokens. It is also supports metadata, and is designed to be extensible.

Here is an incomplate list of clients and libraries that are known to support GGUF:

* [llama.cpp](https://github.com/ggerganov/llama.cpp). The source project for GGUF. Offers a CLI and a server option.

* [text-generation-webui](https://github.com/oobabooga/text-generation-webui), the most widely used web UI, with many features and powerful extensions. Supports GPU acceleration.

* [KoboldCpp](https://github.com/LostRuins/koboldcpp), a fully featured web UI, with GPU accel across all platforms and GPU architectures. Especially good for story telling.

* [LM Studio](https://lmstudio.ai/), an easy-to-use and powerful local GUI for Windows and macOS (Silicon), with GPU acceleration.

* [LoLLMS Web UI](https://github.com/ParisNeo/lollms-webui), a great web UI with many interesting and unique features, including a full model library for easy model selection.

* [Faraday.dev](https://faraday.dev/), an attractive and easy to use character-based chat GUI for Windows and macOS (both Silicon and Intel), with GPU acceleration.

* [ctransformers](https://github.com/marella/ctransformers), a Python library with GPU accel, LangChain support, and OpenAI-compatible AI server.

* [llama-cpp-python](https://github.com/abetlen/llama-cpp-python), a Python library with GPU accel, LangChain support, and OpenAI-compatible API server.

* [candle](https://github.com/huggingface/candle), a Rust ML framework with a focus on performance, including GPU support, and ease of use.

<!-- README_GGUF.md-about-gguf end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/MythoLogic-L2-13B-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF)

* [Gryphe's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/Gryphe/MythoLogic-L2-13b)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: Alpaca

```

Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

```

<!-- prompt-template end -->

<!-- licensing start -->

## Licensing

The creator of the source model has listed its license as `other`, and this quantization has therefore used that same license.

As this model is based on Llama 2, it is also subject to the Meta Llama 2 license terms, and the license files for that are additionally included. It should therefore be considered as being claimed to be licensed under both licenses. I contacted Hugging Face for clarification on dual licensing but they do not yet have an official position. Should this change, or should Meta provide any feedback on this situation, I will update this section accordingly.

In the meantime, any questions regarding licensing, and in particular how these two licenses might interact, should be directed to the original model repository: [Gryphe's Mythologic L2 13B](https://huggingface.co/Gryphe/MythoLogic-L2-13b).

<!-- licensing end -->

<!-- compatibility_gguf start -->

## Compatibility

These quantised GGUFv2 files are compatible with llama.cpp from August 27th onwards, as of commit [d0cee0d36d5be95a0d9088b674dbb27354107221](https://github.com/ggerganov/llama.cpp/commit/d0cee0d36d5be95a0d9088b674dbb27354107221)

They are also compatible with many third party UIs and libraries - please see the list at the top of this README.

## Explanation of quantisation methods

<details>

<summary>Click to see details</summary>

The new methods available are:

* GGML_TYPE_Q2_K - "type-1" 2-bit quantization in super-blocks containing 16 blocks, each block having 16 weight. Block scales and mins are quantized with 4 bits. This ends up effectively using 2.5625 bits per weight (bpw)

* GGML_TYPE_Q3_K - "type-0" 3-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Scales are quantized with 6 bits. This end up using 3.4375 bpw.

* GGML_TYPE_Q4_K - "type-1" 4-bit quantization in super-blocks containing 8 blocks, each block having 32 weights. Scales and mins are quantized with 6 bits. This ends up using 4.5 bpw.

* GGML_TYPE_Q5_K - "type-1" 5-bit quantization. Same super-block structure as GGML_TYPE_Q4_K resulting in 5.5 bpw

* GGML_TYPE_Q6_K - "type-0" 6-bit quantization. Super-blocks with 16 blocks, each block having 16 weights. Scales are quantized with 8 bits. This ends up using 6.5625 bpw

Refer to the Provided Files table below to see what files use which methods, and how.

</details>

<!-- compatibility_gguf end -->

<!-- README_GGUF.md-provided-files start -->

## Provided files

| Name | Quant method | Bits | Size | Max RAM required | Use case |

| ---- | ---- | ---- | ---- | ---- | ----- |

| [mythologic-l2-13b.Q2_K.gguf](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF/blob/main/mythologic-l2-13b.Q2_K.gguf) | Q2_K | 2 | 5.43 GB| 7.93 GB | smallest, significant quality loss - not recommended for most purposes |

| [mythologic-l2-13b.Q3_K_S.gguf](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF/blob/main/mythologic-l2-13b.Q3_K_S.gguf) | Q3_K_S | 3 | 5.66 GB| 8.16 GB | very small, high quality loss |

| [mythologic-l2-13b.Q3_K_M.gguf](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF/blob/main/mythologic-l2-13b.Q3_K_M.gguf) | Q3_K_M | 3 | 6.34 GB| 8.84 GB | very small, high quality loss |

| [mythologic-l2-13b.Q3_K_L.gguf](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF/blob/main/mythologic-l2-13b.Q3_K_L.gguf) | Q3_K_L | 3 | 6.93 GB| 9.43 GB | small, substantial quality loss |

| [mythologic-l2-13b.Q4_0.gguf](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF/blob/main/mythologic-l2-13b.Q4_0.gguf) | Q4_0 | 4 | 7.37 GB| 9.87 GB | legacy; small, very high quality loss - prefer using Q3_K_M |

| [mythologic-l2-13b.Q4_K_S.gguf](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF/blob/main/mythologic-l2-13b.Q4_K_S.gguf) | Q4_K_S | 4 | 7.41 GB| 9.91 GB | small, greater quality loss |

| [mythologic-l2-13b.Q4_K_M.gguf](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF/blob/main/mythologic-l2-13b.Q4_K_M.gguf) | Q4_K_M | 4 | 7.87 GB| 10.37 GB | medium, balanced quality - recommended |

| [mythologic-l2-13b.Q5_0.gguf](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF/blob/main/mythologic-l2-13b.Q5_0.gguf) | Q5_0 | 5 | 8.97 GB| 11.47 GB | legacy; medium, balanced quality - prefer using Q4_K_M |

| [mythologic-l2-13b.Q5_K_S.gguf](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF/blob/main/mythologic-l2-13b.Q5_K_S.gguf) | Q5_K_S | 5 | 8.97 GB| 11.47 GB | large, low quality loss - recommended |

| [mythologic-l2-13b.Q5_K_M.gguf](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF/blob/main/mythologic-l2-13b.Q5_K_M.gguf) | Q5_K_M | 5 | 9.23 GB| 11.73 GB | large, very low quality loss - recommended |

| [mythologic-l2-13b.Q6_K.gguf](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF/blob/main/mythologic-l2-13b.Q6_K.gguf) | Q6_K | 6 | 10.68 GB| 13.18 GB | very large, extremely low quality loss |

| [mythologic-l2-13b.Q8_0.gguf](https://huggingface.co/TheBloke/MythoLogic-L2-13B-GGUF/blob/main/mythologic-l2-13b.Q8_0.gguf) | Q8_0 | 8 | 13.83 GB| 16.33 GB | very large, extremely low quality loss - not recommended |

**Note**: the above RAM figures assume no GPU offloading. If layers are offloaded to the GPU, this will reduce RAM usage and use VRAM instead.

<!-- README_GGUF.md-provided-files end -->

<!-- README_GGUF.md-how-to-download start -->

## How to download GGUF files

**Note for manual downloaders:** You almost never want to clone the entire repo! Multiple different quantisation formats are provided, and most users only want to pick and download a single file.

The following clients/libraries will automatically download models for you, providing a list of available models to choose from:

- LM Studio

- LoLLMS Web UI

- Faraday.dev

### In `text-generation-webui`

Under Download Model, you can enter the model repo: TheBloke/MythoLogic-L2-13B-GGUF and below it, a specific filename to download, such as: mythologic-l2-13b.q4_K_M.gguf.

Then click Download.

### On the command line, including multiple files at once

I recommend using the `huggingface-hub` Python library:

```shell

pip3 install huggingface-hub>=0.17.1

```

Then you can download any individual model file to the current directory, at high speed, with a command like this:

```shell

huggingface-cli download TheBloke/MythoLogic-L2-13B-GGUF mythologic-l2-13b.q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

```

<details>

<summary>More advanced huggingface-cli download usage</summary>

You can also download multiple files at once with a pattern:

```shell

huggingface-cli download TheBloke/MythoLogic-L2-13B-GGUF --local-dir . --local-dir-use-symlinks False --include='*Q4_K*gguf'

```

For more documentation on downloading with `huggingface-cli`, please see: [HF -> Hub Python Library -> Download files -> Download from the CLI](https://huggingface.co/docs/huggingface_hub/guides/download#download-from-the-cli).

To accelerate downloads on fast connections (1Gbit/s or higher), install `hf_transfer`:

```shell

pip3 install hf_transfer

```

And set environment variable `HF_HUB_ENABLE_HF_TRANSFER` to `1`:

```shell

HUGGINGFACE_HUB_ENABLE_HF_TRANSFER=1 huggingface-cli download TheBloke/MythoLogic-L2-13B-GGUF mythologic-l2-13b.q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

```

Windows CLI users: Use `set HUGGINGFACE_HUB_ENABLE_HF_TRANSFER=1` before running the download command.

</details>

<!-- README_GGUF.md-how-to-download end -->

<!-- README_GGUF.md-how-to-run start -->

## Example `llama.cpp` command

Make sure you are using `llama.cpp` from commit [d0cee0d36d5be95a0d9088b674dbb27354107221](https://github.com/ggerganov/llama.cpp/commit/d0cee0d36d5be95a0d9088b674dbb27354107221) or later.

```shell

./main -ngl 32 -m mythologic-l2-13b.q4_K_M.gguf --color -c 4096 --temp 0.7 --repeat_penalty 1.1 -n -1 -p "Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n### Instruction:\n{prompt}\n\n### Response:"

```

Change `-ngl 32` to the number of layers to offload to GPU. Remove it if you don't have GPU acceleration.

Change `-c 4096` to the desired sequence length. For extended sequence models - eg 8K, 16K, 32K - the necessary RoPE scaling parameters are read from the GGUF file and set by llama.cpp automatically.

If you want to have a chat-style conversation, replace the `-p <PROMPT>` argument with `-i -ins`