modelId

stringlengths 5

122

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC] | downloads

int64 0

738M

| likes

int64 0

11k

| library_name

stringclasses 245

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 48

values | createdAt

timestamp[us, tz=UTC] | card

stringlengths 1

901k

|

|---|---|---|---|---|---|---|---|---|---|

microsoft/BiomedVLP-BioViL-T | microsoft | 2023-03-20T17:04:32Z | 2,141 | 17 | transformers | [

"transformers",

"pytorch",

"bert",

"feature-extraction",

"exbert",

"custom_code",

"en",

"arxiv:2301.04558",

"arxiv:2204.09817",

"license:mit",

"region:us"

]

| feature-extraction | 2023-02-17T10:19:58Z | ---

language: en

tags:

- exbert

license: mit

widget:

- text: "Left pleural effusion with adjacent [MASK]."

example_title: "Radiology 1"

- text: "Heart size normal and lungs are [MASK]."

example_title: "Radiology 2"

- text: "[MASK] is a tumor suppressor gene."

example_title: "Biomedical"

- text: "The patient was on [MASK] for chronic atrial fibrillation"

example_title: "Medication"

---

# BioViL-T

[BioViL-T](https://arxiv.org/abs/2301.04558) is a domain-specific vision-language model designed to analyze chest X-rays (CXRs) and radiology reports. It was trained using a temporal multi-modal pre-training procedure, which distinguishes it from its predecessor model ([BioViL](https://www.ecva.net/papers/eccv_2022/papers_ECCV/papers/136960001.pdf)). In detail, BioViL-T takes advantage of the temporal structure between data points, resulting in improved downstream performance on multiple benchmarks, while using the same training dataset as its predecessor. In particular, the resultant model displays significant improvement in embedding temporal information present in the image and text modalities (see [results](#performance)), as well as in the joint space. The canonical model can be adapted to both single- and multi-image downstream applications including: natural language inference, phrase-grounding, image/text classification, and language decoding.

The corresponding BERT language model is trained in two stages. First, we pretrain [CXR-BERT-general](https://huggingface.co/microsoft/BiomedVLP-CXR-BERT-general) from a randomly initialized BERT model via Masked Language Modeling (MLM) on [PubMed](https://pubmed.ncbi.nlm.nih.gov/) abstracts and clinical notes from the publicly-available [MIMIC-III](https://physionet.org/content/mimiciii/1.4/) and [MIMIC-CXR](https://physionet.org/content/mimic-cxr/). The general model can be fine-tuned for research in other clinical domains by adjusting the parameters specific to the target domain. In the second stage, BioViL-T is continually pretrained from CXR-BERT-general using a multi-modal pre-training procedure that utilises radiology reports and sequences of chest X-rays. We utilise the latent representation of the [CLS] token to align text and image embeddings.

## Language model variations

| Model | Model identifier on HuggingFace | Vocabulary | Note |

| ------------------------------------------------- | ----------------------------------------------------------------------------------------------------------- | -------------- | --------------------------------------------------------- |

| CXR-BERT-general | [microsoft/BiomedVLP-CXR-BERT-general](https://huggingface.co/microsoft/BiomedVLP-CXR-BERT-general) | PubMed & MIMIC | Pretrained for biomedical literature and clinical domains |

| CXR-BERT-specialized | [microsoft/BiomedVLP-CXR-BERT-specialized](https://huggingface.co/microsoft/BiomedVLP-CXR-BERT-specialized) | PubMed & MIMIC | Static pretraining for the CXR domain |

| BioViL-T | [microsoft/BiomedVLP-BioViL-T](https://huggingface.co/microsoft/BiomedVLP-BioViL-T) | PubMed & MIMIC | Static & temporal pretraining for the CXR domain |

## Image model

The image model is jointly trained with the text model in a multi-modal contrastive learning framework. It is a hybrid image encoder composed of a Vision Transformer and ResNet-50, where the latter is used as the backbone network to extract features from images at each time point. The transformer is included in the design to aggregate and compare image features extracted across the temporal dimension. The corresponding model definition and its loading functions can be accessed through our [HI-ML-Multimodal](https://github.com/microsoft/hi-ml/blob/main/hi-ml-multimodal/src/health_multimodal/image/model/model.py) GitHub repository. The joint image and text model, namely [BioViL-T](https://arxiv.org/abs/2204.09817), can be used in phrase grounding applications as shown in this Python notebook [example](https://mybinder.org/v2/gh/microsoft/hi-ml/HEAD?labpath=hi-ml-multimodal%2Fnotebooks%2Fphrase_grounding.ipynb). Additionally, please check the [MS-CXR benchmark](https://physionet.org/content/ms-cxr/0.1/) for a more systematic evaluation of joint image and text models in phrase grounding tasks.

## Citation

The corresponding manuscript has been accepted for presentation at the [**Conference on Computer Vision and Pattern Recognition (CVPR) 2023**](https://cvpr2023.thecvf.com/).

```bibtex

@misc{https://doi.org/10.48550/arXiv.2301.04558,

doi = {10.48550/ARXIV.2301.04558},

url = {https://arxiv.org/abs/2301.04558},

author = {Bannur, Shruthi and Hyland, Stephanie and Liu, Qianchu and Perez-Garcia, Fernando and Ilse, Maximilian and Castro, Daniel C and Boecking, Benedikt and Sharma, Harshita and Bouzid, Kenza and Thieme, Anja and Schwaighofer, Anton and Wetscherek, Maria and Lungren, Matthew P and Nori, Aditya and Alvarez-Valle, Javier and Oktay, Ozan},

title = {Learning to Exploit Temporal Structure for Biomedical Vision–Language Processing},

publisher = {arXiv},

year = {2023},

}

```

## Model Use

### Intended Use

This model is intended to be used solely for (I) future research on visual-language processing and (II) reproducibility of the experimental results reported in the reference paper.

#### Primary Intended Use

The primary intended use is to support AI researchers building on top of this work. CXR-BERT and its associated models should be helpful for exploring various clinical NLP & VLP research questions, especially in the radiology domain.

#### Out-of-Scope Use

**Any** deployed use case of the model --- commercial or otherwise --- is currently out of scope. Although we evaluated the models using a broad set of publicly-available research benchmarks, the models and evaluations are not intended for deployed use cases. Under unprecedented conditions, the models may make inaccurate predictions and display limitations, which may require additional mitigation strategies. Therefore, we discourage use of the model for automated diagnosis or in a medical device. Please refer to [the associated paper](https://arxiv.org/abs/2301.04558) for more details.

### How to use

Here is how to use this model to extract radiological sentence embeddings and obtain their cosine similarity in the joint space (image and text):

```python

import torch

from transformers import AutoModel, AutoTokenizer

# Load the model and tokenizer

url = "microsoft/BiomedVLP-BioViL-T"

tokenizer = AutoTokenizer.from_pretrained(url, trust_remote_code=True)

model = AutoModel.from_pretrained(url, trust_remote_code=True)

# Input text prompts describing findings.

# The order of prompts is adjusted to capture the spectrum from absence of a finding to its temporal progression.

text_prompts = ["No pleural effusion or pneumothorax is seen.",

"There is no pneumothorax or pleural effusion.",

"The extent of the pleural effusion is reduced.",

"The extent of the pleural effusion remains constant.",

"Interval enlargement of pleural effusion."]

# Tokenize and compute the sentence embeddings

with torch.no_grad():
    tokenizer_output = tokenizer.batch_encode_plus(batch_text_or_text_pairs=text_prompts,
                                                   add_special_tokens=True,
                                                   padding='longest',
                                                   return_tensors='pt')

    embeddings = model.get_projected_text_embeddings(input_ids=tokenizer_output.input_ids,
                                                     attention_mask=tokenizer_output.attention_mask)

# Compute the cosine similarity of sentence embeddings obtained from input text prompts.

sim = torch.mm(embeddings, embeddings.t())

```
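The matrix product in the last line of the snippet equals cosine similarity only when every embedding has unit L2 norm. The following is a minimal, dependency-free sketch of that relationship. The helper names `l2_normalize` and `cosine_similarity_matrix` and the toy vectors are illustrative assumptions, not part of the model's API; whether `get_projected_text_embeddings` already normalizes its output should be checked against the model code.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit L2 norm (hypothetical helper, for illustration only)."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]


def cosine_similarity_matrix(embeddings):
    """Pairwise cosine similarity: normalize each row, then take dot products."""
    unit = [l2_normalize(v) for v in embeddings]
    return [[sum(a * b for a, b in zip(u, v)) for v in unit] for u in unit]


# Toy 2-D "embeddings": the first two are parallel, the third is orthogonal to both.
toy_embeddings = [[3.0, 4.0], [6.0, 8.0], [-4.0, 3.0]]
sim = cosine_similarity_matrix(toy_embeddings)
```

With unit-norm rows, `sim[i][j]` is the plain dot product of rows `i` and `j`, which is what `torch.mm(embeddings, embeddings.t())` computes on a tensor.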

## Data

This model builds upon existing publicly-available datasets:

- [PubMed](https://pubmed.ncbi.nlm.nih.gov/)

- [MIMIC-III](https://physionet.org/content/mimiciii/)

- [MIMIC-CXR](https://physionet.org/content/mimic-cxr/)

These datasets reflect a broad variety of sources ranging from biomedical abstracts to intensive care unit notes to chest X-ray radiology notes. In the MIMIC-CXR dataset, the radiology notes are accompanied by their associated chest X-ray DICOM images.

## Performance

The presented model achieves state-of-the-art results in radiology natural language inference by leveraging semantics and discourse characteristics at training time more efficiently.

The experiments were performed on the RadNLI and MS-CXR-T benchmarks, which measure the quality of text embeddings in terms of static and temporal semantics respectively.

BioViL-T is benchmarked against other commonly used state-of-the-art domain-specific BERT models, including [PubMedBERT](https://aka.ms/pubmedbert) and [CXR-BERT](https://aka.ms/biovil).

The results below show that BioViL-T has increased sensitivity of sentence embeddings to temporal content (MS-CXR-T) whilst better capturing the static content (RadNLI).

| | MS-CXR-T | MS-CXR-T | RadNLI (2 classes) | RadNLI (2 classes) |

| ----------------------------------------------- | :-------------------------------: | :----------------------: | :-------------------------: | :-------------: |

| | Accuracy | ROC-AUC | Accuracy | ROC-AUC |

| [PubMedBERT](https://aka.ms/pubmedbert) | 60.39 | .542 | 81.38 | .727 |

| [CXR-BERT-General](https://huggingface.co/microsoft/BiomedVLP-CXR-BERT-general) | 62.60 | .601 | 87.59 | .902 |

| [CXR-BERT-Specialized](https://huggingface.co/microsoft/BiomedVLP-CXR-BERT-specialized) | 78.12 | .837 | 89.66 | .932 |

| **BioViL-T** | **87.77** | **.933** | **90.52** | **.947** |

The novel pretraining framework also yields better vision-language representations. Below is the zero-shot phrase grounding performance obtained on the [MS-CXR](https://physionet.org/content/ms-cxr/0.1/) benchmark dataset, which evaluates the quality of image-text latent representations.

| Vision–Language Pretraining Method | MS-CXR Phrase Grounding (Avg. CNR Score) | MS-CXR Phrase Grounding (mIoU) |

| ---------------------------------- | :--------------------------------------: | :----------------------------: |

| BioViL | 1.07 +- 0.04 | 0.229 +- 0.005 |

| BioViL-L | 1.21 +- 0.05 | 0.202 +- 0.010 |

| **BioViL-T** | **1.33 +- 0.04** | **0.240 +- 0.005** |

Additional experimental results and discussion can be found in the corresponding paper, ["Learning to Exploit Temporal Structure for Biomedical Vision–Language Processing", CVPR'23](https://arxiv.org/abs/2301.04558).

## Limitations

This model was developed using English corpora, and thus can be considered English-only.

The training dataset contains only medical images and reports acquired from an intensive care unit (ICU), where longitudinal images are often collected within a range of hours or at most a few days. As a result, the models may show reduced performance when analyzing consecutive images acquired over longer periods of time (e.g. years), where significant anatomical variations are observed between the scans.

## Further information

Please refer to the corresponding paper, ["Learning to Exploit Temporal Structure for Biomedical Vision–Language Processing", CVPR'23](https://arxiv.org/abs/2301.04558.pdf) for additional details on the model training and evaluation.

For additional inference pipelines with BioViL-T, please refer to the [HI-ML GitHub](https://aka.ms/biovil-t-code) repository. The associated source files will soon be accessible through this link.

|

migtissera/Tess-XL-v1.0 | migtissera | 2023-11-19T18:35:13Z | 2,141 | 13 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"license:llama2",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2023-11-07T04:42:58Z | ---

license: llama2

---

# Tess

Tess, short for Tessoro/Tessoso, is a general purpose Large Language Model series. Tess-XL is trained with Goliath-120B as the base model.

While there are task specific Tess models (Creative or STEM), this model is general purpose.

# Prompt format:

```

SYSTEM: Elaborate on the topic using a Tree of Thoughts and backtrack when necessary to construct a clear, cohesive Chain of Thought reasoning. Always answer without hesitation.

USER: What is the relationship between Earth's atmosphere, magnetic field and gravity?

ASSISTANT:

```
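The prompt format above can be assembled programmatically. This is a minimal sketch, assuming the three roles are separated by single newlines and that generation continues right after `ASSISTANT:`; the function name `build_tess_prompt` is hypothetical and not part of any library.

```python
def build_tess_prompt(system: str, user: str) -> str:
    """Assemble a single-turn prompt in the SYSTEM/USER/ASSISTANT layout.

    Assumption: roles are separated by one newline each, and the model is
    expected to continue the text after the trailing "ASSISTANT:".
    """
    return f"SYSTEM: {system.strip()}\nUSER: {user.strip()}\nASSISTANT:"


prompt = build_tess_prompt(
    "Always answer without hesitation.",
    "What is the relationship between Earth's atmosphere, magnetic field and gravity?",
)
```

The resulting string can be passed to whichever text-generation backend is used to run the model.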

# Quantized Versions

Quantized versions are available in the following repos:

- GPTQ: https://huggingface.co/TheBloke/Tess-XL-v1.0-GPTQ

- AWQ: https://huggingface.co/TheBloke/Tess-XL-v1.0-AWQ

- GGUF: https://huggingface.co/TheBloke/Tess-XL-v1.0-GGUF

# Sample Conversation

```

You: What is the relationship between an electrical field and a magnetic field?

The relationship between an electric field and a magnetic field can be summarized in two important ways:

1. Cause and effect: A changing electric field can induce a magnetic field, and a changing magnetic field can in turn induce an electric field. This is the principle of electromagnetic induction.

2. Duality: Electric and magnetic fields are often described as components of a single electromagnetic field. This duality is most clearly seen in Maxwell's equations, which describe how electric and magnetic fields are related.

Chain of Thought:

- Start with the question: What is the relationship between an electric field and a magnetic field?

- Think about the basic principles of electromagnetism: cause and effect, as well as the duality of electric and magnetic fields.

- Explain the relationship by referring to electromagnetic induction and Maxwell's equations.

You: In some instances that are associated with UAP, an increase in electrical field causes a decrease in the measured magnetic field. This is contradictory to known physics. Can we theorize what could cause this?

The relationship you describe between an increase in the electric field and a decrease in the measured magnetic field is indeed unusual and does not align with the conventional understanding of electromagnetic fields. However, we can consider some possible explanations:

1. Unknown phenomena: It's possible that there are physical phenomena or interactions that we have not yet discovered or fully understood that could cause this effect. These could include new particles or forces that we have not yet detected, or unusual properties of known particles or fields.

2. Measurement errors: Another possibility is that the observed relationship is due to measurement errors or inaccuracies in the instruments used to measure the electric and magnetic fields. This could include calibration issues, interference from other sources, or limitations in the sensitivity of the equipment.

3. Environmental effects: Changes in the environment or the nature of the object emitting the fields may also affect the relationship between the electric and magnetic fields. For example, if the object is moving or undergoing changes in composition or structure, this could affect the way that the fields are generated and detected.

In summary, while the observed relationship between an increase in the electric field and a decrease in the measured magnetic field is not consistent with our current understanding of electromagnetism, there could be several possible explanations, including unknown phenomena, measurement errors, or environmental effects. Further research and investigation would be needed to determine the cause of this phenomenon.

You: What is the most advanced theory of electro-magnetic fields right now?

The most advanced theory of electromagnetic fields is quantum electrodynamics (QED). QED is a part of the larger framework of quantum field theory (QFT), which aims to describe the interactions of particles and fields in the context of quantum mechanics.

QED specifically describes the interactions between photons (which are the quanta of the electromagnetic field) and charged particles, such as electrons and positrons. It provides a theoretical framework for understanding how photons can be created and absorbed by charged particles, and how they can interact with each other.

The predictions of QED have been experimentally verified to an extremely high degree of accuracy, making it one of the most successful theories in physics. Despite its success, QED is not considered a complete theory, as it does not include gravity and is inconsistent with the principles of general relativity. The search for a unified theory that can reconcile QED with gravity is an area of active research in theoretical physics.

You: Give me a detailed introduction to QED.

Quantum electrodynamics (QED) is a quantum field theory that describes the interactions between electrically charged particles (such as electrons and positrons) and the photons that make up the electromagnetic field. It is a part of the larger framework of quantum field theory (QFT), which aims to describe the interactions of particles and fields in the context of quantum mechanics.

In QED, electric and magnetic fields are described as being made up of individual packets of energy called photons. Photons are the quanta of the electromagnetic field, and they can be created and absorbed by charged particles.

QED provides a theoretical framework for understanding how photons can interact with each other and with charged particles. The theory predicts that photons can be created or annihilated in pairs, and that charged particles can emit and absorb photons. These interactions are mediated by the exchange of virtual particles, which are other photons that exist only fleetingly and cannot be directly observed.

The predictions of QED have been experimentally verified to an extremely high degree of accuracy, making it one of the most successful theories in physics. Despite its success, QED is not considered a complete theory, as it does not include gravity and is inconsistent with the principles of general relativity. The search for a unified theory that can reconcile QED with gravity is an area of active research in theoretical physics.

In summary, QED is a highly successful theory that describes the interactions between charged particles and the electromagnetic field in terms of photons. While it is not a complete theory, it provides a fundamental understanding of the electromagnetic force and has led to many important discoveries in particle physics.

```

|

mradermacher/SquadLlama-GGUF | mradermacher | 2024-06-20T12:58:35Z | 2,141 | 1 | transformers | [

"transformers",

"gguf",

"en",

"base_model:Ahsann321/SquadLlama",

"endpoints_compatible",

"region:us"

]

| null | 2024-06-20T12:45:12Z | ---

base_model: Ahsann321/SquadLlama

language:

- en

library_name: transformers

quantized_by: mradermacher

tags: []

---

## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

static quants of https://huggingface.co/Ahsann321/SquadLlama

<!-- provided-files -->

weighted/imatrix quants seem not to be available (by me) at this time. If they do not show up a week or so after the static ones, I have probably not planned for them. Feel free to request them by opening a Community Discussion.

## Usage

If you are unsure how to use GGUF files, refer to one of [TheBloke's

READMEs](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for

more details, including on how to concatenate multi-part files.

## Provided Quants

(sorted by size, not necessarily quality. IQ-quants are often preferable over similar sized non-IQ quants)

| Link | Type | Size/GB | Notes |

|:-----|:-----|--------:|:------|

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.Q2_K.gguf) | Q2_K | 0.5 | |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.IQ3_XS.gguf) | IQ3_XS | 0.6 | |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.Q3_K_S.gguf) | Q3_K_S | 0.6 | |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.IQ3_S.gguf) | IQ3_S | 0.6 | beats Q3_K* |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.IQ3_M.gguf) | IQ3_M | 0.6 | |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.Q3_K_M.gguf) | Q3_K_M | 0.6 | lower quality |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.Q3_K_L.gguf) | Q3_K_L | 0.7 | |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.IQ4_XS.gguf) | IQ4_XS | 0.7 | |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.Q4_K_S.gguf) | Q4_K_S | 0.7 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.Q4_K_M.gguf) | Q4_K_M | 0.8 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.Q5_K_S.gguf) | Q5_K_S | 0.9 | |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.Q5_K_M.gguf) | Q5_K_M | 0.9 | |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.Q6_K.gguf) | Q6_K | 1.0 | very good quality |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.Q8_0.gguf) | Q8_0 | 1.3 | fast, best quality |

| [GGUF](https://huggingface.co/mradermacher/SquadLlama-GGUF/resolve/main/SquadLlama.f16.gguf) | f16 | 2.3 | 16 bpw, overkill |
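
The links in the table follow one pattern: repository, then `resolve/main`, then the model name and quant type joined by dots. A small sketch of that pattern is shown below; the function name `gguf_url` is hypothetical, and the pattern is inferred only from the rows above, so it may not hold for other repositories or for multi-part files.

```python
def gguf_url(repo_id: str, model_name: str, quant_type: str) -> str:
    """Build a direct-download URL following the pattern used in the table above.

    Assumption: files are named "<model_name>.<quant_type>.gguf" on the main branch.
    """
    return f"https://huggingface.co/{repo_id}/resolve/main/{model_name}.{quant_type}.gguf"


url = gguf_url("mradermacher/SquadLlama-GGUF", "SquadLlama", "Q4_K_S")
```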

Here is a handy graph by ikawrakow comparing some lower-quality quant

types (lower is better):

And here are Artefact2's thoughts on the matter:

https://gist.github.com/Artefact2/b5f810600771265fc1e39442288e8ec9

## FAQ / Model Request

See https://huggingface.co/mradermacher/model_requests for some answers to

questions you might have and/or if you want some other model quantized.

## Thanks

I thank my company, [nethype GmbH](https://www.nethype.de/), for letting

me use its servers and providing upgrades to my workstation to enable

this work in my free time.

<!-- end -->

|

ckiplab/bert-tiny-chinese-ner | ckiplab | 2022-05-10T03:28:12Z | 2,139 | 4 | transformers | [

"transformers",

"pytorch",

"bert",

"token-classification",

"zh",

"license:gpl-3.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| token-classification | 2022-05-10T02:55:04Z | ---

language:

- zh

thumbnail: https://ckip.iis.sinica.edu.tw/files/ckip_logo.png

tags:

- pytorch

- token-classification

- bert

- zh

license: gpl-3.0

---

# CKIP BERT Tiny Chinese

This project provides traditional Chinese transformers models (including ALBERT, BERT, GPT2) and NLP tools (including word segmentation, part-of-speech tagging, named entity recognition).


## Homepage

- https://github.com/ckiplab/ckip-transformers

## Contributors

- [Mu Yang](https://muyang.pro) at [CKIP](https://ckip.iis.sinica.edu.tw) (Author & Maintainer)

## Usage

Please use BertTokenizerFast as tokenizer instead of AutoTokenizer.


```

from transformers import (

BertTokenizerFast,

AutoModel,

)

tokenizer = BertTokenizerFast.from_pretrained('bert-base-chinese')

model = AutoModel.from_pretrained('ckiplab/bert-tiny-chinese-ner')

```

For full usage and more information, please refer to https://github.com/ckiplab/ckip-transformers.


|

alfaneo/bertimbaulaw-base-portuguese-sts | alfaneo | 2023-06-30T11:10:11Z | 2,138 | 0 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"bert",

"feature-extraction",

"sentence-similarity",

"transformers",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

]

| sentence-similarity | 2022-07-04T22:35:36Z | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

---

# juridics/bertimbaulaw-base-portuguese-sts-scale

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('juridics/bertimbaulaw-base-portuguese-sts-scale')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: First, you pass your input through the transformer model, then you have to apply the right pooling-operation on-top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel

import torch

#Mean Pooling - Take attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Sentences we want sentence embeddings for

sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('juridics/bertimbaulaw-base-portuguese-sts-scale')

model = AutoModel.from_pretrained('juridics/bertimbaulaw-base-portuguese-sts-scale')

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")

print(sentence_embeddings)

```
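To make the pooling step concrete, here is a dependency-free sketch of masked mean pooling for a single sentence. It illustrates the same idea as `mean_pooling` above (average only the positions where the attention mask is 1) using plain lists; the function name `masked_mean_pool` and the toy numbers are assumptions for illustration.

```python
def masked_mean_pool(token_embeddings, attention_mask):
    """Average token vectors where the mask is 1; padding positions are ignored."""
    dim = len(token_embeddings[0])
    total = [0.0] * dim
    count = 0
    for vec, keep in zip(token_embeddings, attention_mask):
        if keep:
            count += 1
            for i, x in enumerate(vec):
                total[i] += x
    denom = max(count, 1e-9)  # same guard against division by zero as the clamp above
    return [t / denom for t in total]


# Two real tokens followed by one padding token whose values must not leak in.
tokens = [[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]
mask = [1, 1, 0]
pooled = masked_mean_pool(tokens, mask)
```

Here `pooled` is the average of the first two vectors only; the padding vector is excluded by the mask.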

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=juridics/bertimbaulaw-base-portuguese-sts-scale)

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 2492 with parameters:

```

{'batch_size': 8, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`

Parameters of the fit()-Method:

```

{

"epochs": 3,

"evaluation_steps": 2492,

"evaluator": "sentence_transformers.evaluation.EmbeddingSimilarityEvaluator.EmbeddingSimilarityEvaluator",

"max_grad_norm": 1,

"optimizer_class": "<class 'torch.optim.adamw.AdamW'>",

"optimizer_params": {

"lr": 5e-05

},

"scheduler": "WarmupLinear",

"steps_per_epoch": null,

"warmup_steps": 748,

"weight_decay": 0.01

}

```
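The reported numbers are mutually consistent under a common convention: with 2492 batches per epoch and 3 epochs there are 7476 optimizer steps, and 10% of that, rounded up, is 748 warmup steps. The sketch below reproduces this arithmetic and the general shape of a linear warmup followed by linear decay. The 10% ratio and the exact schedule formula are assumptions, not something the card states, and `warmup_linear_lr` is a hypothetical helper.

```python
import math

steps_per_epoch = 2492  # DataLoader length reported above
epochs = 3
base_lr = 5e-05

total_steps = steps_per_epoch * epochs
warmup_steps = math.ceil(total_steps * 0.1)  # assumed 10% warmup convention


def warmup_linear_lr(step: int) -> float:
    """Learning rate at a given step: linear ramp up, then linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)
```

Under these assumptions the learning rate peaks at `base_lr` exactly when warmup ends and reaches zero at the final step.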

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 384, 'do_lower_case': False}) with Transformer model: BertModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Citing & Authors

If you use our work, please cite:

```

@incollection{Viegas_2023,

doi = {10.1007/978-3-031-36805-9_24},

url = {https://doi.org/10.1007%2F978-3-031-36805-9_24},

year = 2023,

publisher = {Springer Nature Switzerland},

pages = {349--365},

author = {Charles F. O. Viegas and Bruno C. Costa and Renato P. Ishii},

title = {{JurisBERT}: A New Approach that~Converts a~Classification Corpus into~an~{STS} One},

booktitle = {Computational Science and Its Applications {\textendash} {ICCSA} 2023}

}

``` |

KnutJaegersberg/black_goo_recipe_e | KnutJaegersberg | 2023-12-03T15:11:02Z | 2,138 | 0 | transformers | [

"transformers",

"pytorch",

"llama",

"text-generation",

"custom_code",

"license:cc-by-nc-4.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2023-09-04T21:08:02Z | ---

license: cc-by-nc-4.0

---

Prompt Example:

```

### System:

You are an AI assistant. User will give you a task. Your goal is to complete the task as faithfully as you can. While performing the task think step-by-step and justify your steps.

### Instruction:

How do you fine tune a large language model?

### Response:

``` |

mradermacher/Medichat-V2-Llama3-8B-GGUF | mradermacher | 2024-06-04T05:50:49Z | 2,138 | 1 | transformers | [

"transformers",

"gguf",

"mergekit",

"merge",

"medical",

"en",

"dataset:ruslanmv/ai-medical-chatbot",

"dataset:Locutusque/hercules-v5.0",

"base_model:sethuiyer/Medichat-V2-Llama3-8B",

"license:other",

"endpoints_compatible",

"region:us"

]

| null | 2024-06-02T13:42:48Z | ---

base_model: sethuiyer/Medichat-V2-Llama3-8B

datasets:

- ruslanmv/ai-medical-chatbot

- Locutusque/hercules-v5.0

language:

- en

library_name: transformers

license: other

quantized_by: mradermacher

tags:

- mergekit

- merge

- medical

---

## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

static quants of https://huggingface.co/sethuiyer/Medichat-V2-Llama3-8B

<!-- provided-files -->

weighted/imatrix quants seem not to be available (by me) at this time. If they do not show up a week or so after the static ones, I have probably not planned for them. Feel free to request them by opening a Community Discussion.

## Usage

If you are unsure how to use GGUF files, refer to one of [TheBloke's

READMEs](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for

more details, including on how to concatenate multi-part files.

## Provided Quants

(sorted by size, not necessarily quality. IQ-quants are often preferable over similar sized non-IQ quants)

| Link | Type | Size/GB | Notes |

|:-----|:-----|--------:|:------|

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.Q2_K.gguf) | Q2_K | 3.3 | |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.IQ3_XS.gguf) | IQ3_XS | 3.6 | |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.Q3_K_S.gguf) | Q3_K_S | 3.8 | |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.IQ3_S.gguf) | IQ3_S | 3.8 | beats Q3_K* |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.IQ3_M.gguf) | IQ3_M | 3.9 | |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.Q3_K_M.gguf) | Q3_K_M | 4.1 | lower quality |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.Q3_K_L.gguf) | Q3_K_L | 4.4 | |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.IQ4_XS.gguf) | IQ4_XS | 4.6 | |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.Q4_K_S.gguf) | Q4_K_S | 4.8 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.Q4_K_M.gguf) | Q4_K_M | 5.0 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.Q5_K_S.gguf) | Q5_K_S | 5.7 | |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.Q5_K_M.gguf) | Q5_K_M | 5.8 | |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.Q6_K.gguf) | Q6_K | 6.7 | very good quality |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.Q8_0.gguf) | Q8_0 | 8.6 | fast, best quality |

| [GGUF](https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main/Medichat-V2-Llama3-8B.f16.gguf) | f16 | 16.2 | 16 bpw, overkill |
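As a rough aid for choosing from the table above, the sketch below picks the largest file that fits a given size budget and builds its download URL from the link pattern used in the table. The helper name `pick_quant` and the idea of budgeting by file size alone are assumptions for illustration; real memory use also depends on context length and runtime overhead, and size is not the same as quality.

```python
# Sizes (GB) copied from the table above; the helper itself is only illustrative.
QUANTS = [
    ("Q2_K", 3.3), ("IQ3_XS", 3.6), ("Q3_K_S", 3.8), ("IQ3_S", 3.8),
    ("IQ3_M", 3.9), ("Q3_K_M", 4.1), ("Q3_K_L", 4.4), ("IQ4_XS", 4.6),
    ("Q4_K_S", 4.8), ("Q4_K_M", 5.0), ("Q5_K_S", 5.7), ("Q5_K_M", 5.8),
    ("Q6_K", 6.7), ("Q8_0", 8.6), ("f16", 16.2),
]

BASE_URL = "https://huggingface.co/mradermacher/Medichat-V2-Llama3-8B-GGUF/resolve/main"


def pick_quant(budget_gb):
    """Return (type, size_gb, url) of the largest file not exceeding budget_gb, or None."""
    fitting = [(name, size) for name, size in QUANTS if size <= budget_gb]
    if not fitting:
        return None
    # On equal sizes, max() keeps the first entry in table order.
    name, size = max(fitting, key=lambda item: item[1])
    return name, size, f"{BASE_URL}/Medichat-V2-Llama3-8B.{name}.gguf"


print(pick_quant(6.0))
```

Since the table is sorted by size rather than quality, treat the result as a starting point and check the Notes column before downloading.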

Here is a handy graph by ikawrakow comparing some lower-quality quant

types (lower is better):

And here are Artefact2's thoughts on the matter:

https://gist.github.com/Artefact2/b5f810600771265fc1e39442288e8ec9

## FAQ / Model Request

See https://huggingface.co/mradermacher/model_requests for some answers to

questions you might have and/or if you want some other model quantized.

## Thanks

I thank my company, [nethype GmbH](https://www.nethype.de/), for letting

me use its servers and providing upgrades to my workstation to enable

this work in my free time.

<!-- end -->

|

RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf | RichardErkhov | 2024-06-15T00:49:29Z | 2,138 | 0 | null | [

"gguf",

"region:us"

]

| null | 2024-06-14T23:48:39Z | Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

neuronovo-9B-v0.4 - GGUF

- Model creator: https://huggingface.co/Neuronovo/

- Original model: https://huggingface.co/Neuronovo/neuronovo-9B-v0.4/

| Name | Quant method | Size |

| ---- | ---- | ---- |

| [neuronovo-9B-v0.4.Q2_K.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q2_K.gguf) | Q2_K | 3.13GB |

| [neuronovo-9B-v0.4.IQ3_XS.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.IQ3_XS.gguf) | IQ3_XS | 3.48GB |

| [neuronovo-9B-v0.4.IQ3_S.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.IQ3_S.gguf) | IQ3_S | 3.67GB |

| [neuronovo-9B-v0.4.Q3_K_S.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q3_K_S.gguf) | Q3_K_S | 3.65GB |

| [neuronovo-9B-v0.4.IQ3_M.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.IQ3_M.gguf) | IQ3_M | 3.79GB |

| [neuronovo-9B-v0.4.Q3_K.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q3_K.gguf) | Q3_K | 4.05GB |

| [neuronovo-9B-v0.4.Q3_K_M.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q3_K_M.gguf) | Q3_K_M | 4.05GB |

| [neuronovo-9B-v0.4.Q3_K_L.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q3_K_L.gguf) | Q3_K_L | 4.41GB |

| [neuronovo-9B-v0.4.IQ4_XS.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.IQ4_XS.gguf) | IQ4_XS | 4.55GB |

| [neuronovo-9B-v0.4.Q4_0.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q4_0.gguf) | Q4_0 | 4.74GB |

| [neuronovo-9B-v0.4.IQ4_NL.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.IQ4_NL.gguf) | IQ4_NL | 4.79GB |

| [neuronovo-9B-v0.4.Q4_K_S.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q4_K_S.gguf) | Q4_K_S | 4.78GB |

| [neuronovo-9B-v0.4.Q4_K.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q4_K.gguf) | Q4_K | 5.04GB |

| [neuronovo-9B-v0.4.Q4_K_M.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q4_K_M.gguf) | Q4_K_M | 5.04GB |

| [neuronovo-9B-v0.4.Q4_1.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q4_1.gguf) | Q4_1 | 5.26GB |

| [neuronovo-9B-v0.4.Q5_0.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q5_0.gguf) | Q5_0 | 5.77GB |

| [neuronovo-9B-v0.4.Q5_K_S.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q5_K_S.gguf) | Q5_K_S | 5.77GB |

| [neuronovo-9B-v0.4.Q5_K.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q5_K.gguf) | Q5_K | 5.93GB |

| [neuronovo-9B-v0.4.Q5_K_M.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q5_K_M.gguf) | Q5_K_M | 5.93GB |

| [neuronovo-9B-v0.4.Q5_1.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q5_1.gguf) | Q5_1 | 6.29GB |

| [neuronovo-9B-v0.4.Q6_K.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q6_K.gguf) | Q6_K | 6.87GB |

| [neuronovo-9B-v0.4.Q8_0.gguf](https://huggingface.co/RichardErkhov/Neuronovo_-_neuronovo-9B-v0.4-gguf/blob/main/neuronovo-9B-v0.4.Q8_0.gguf) | Q8_0 | 8.89GB |

Original model description:

---

license: apache-2.0

datasets:

- Intel/orca_dpo_pairs

- mlabonne/chatml_dpo_pairs

language:

- en

library_name: transformers

---

More information about the previous [Neuronovo/neuronovo-9B-v0.2](https://huggingface.co/Neuronovo/neuronovo-9B-v0.2) version is available here: 🔗[Don't stop DPOptimizing!](https://www.linkedin.com/pulse/dont-stop-dpoptimizing-jan-koco%2525C5%252584-mq4qf)

Author: Jan Kocoń 🔗[LinkedIn](https://www.linkedin.com/in/jankocon/) 🔗[Google Scholar](https://scholar.google.com/citations?user=pmQHb5IAAAAJ&hl=en&oi=ao) 🔗[ResearchGate](https://www.researchgate.net/profile/Jan-Kocon-2)

Changes concerning [Neuronovo/neuronovo-9B-v0.2](https://huggingface.co/Neuronovo/neuronovo-9B-v0.2):

1. **Training Dataset**: In addition to the [Intel/orca_dpo_pairs](https://huggingface.co/datasets/Intel/orca_dpo_pairs) dataset, this version incorporates the [mlabonne/chatml_dpo_pairs](https://huggingface.co/datasets/mlabonne/chatml_dpo_pairs) dataset. The combined datasets enhance the model's capabilities in dialogues and interactive scenarios, further specializing it in natural language understanding and response generation.

2. **Tokenizer and Formatting**: The tokenizer now originates directly from the [Neuronovo/neuronovo-9B-v0.2](https://huggingface.co/Neuronovo/neuronovo-9B-v0.2) model.

3. **Training Configuration**: The training approach has shifted from using `max_steps=200` to `num_train_epochs=1`. This represents a change in the training strategy, focusing on epoch-based training rather than a fixed number of steps.

4. **Learning Rate**: The learning rate has been reduced to `5e-8`. This smaller learning rate allows for finer adjustments during training, potentially leading to better model performance.
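To make the difference between `max_steps=200` and `num_train_epochs=1` concrete, here is a rough sketch of how many optimizer updates one epoch corresponds to. The dataset size, batch size, and accumulation steps below are hypothetical (the card does not state them), and the exact count a given trainer reports may differ slightly depending on how it handles the last partial batch.

```python
import math


def optimizer_steps(num_examples, per_device_batch, grad_accum, num_devices=1, epochs=1):
    """Approximate number of optimizer updates for epoch-based training."""
    effective_batch = per_device_batch * grad_accum * num_devices
    return math.ceil(num_examples / effective_batch) * epochs


# Hypothetical numbers, not taken from the model card.
steps = optimizer_steps(num_examples=12_000, per_device_batch=4, grad_accum=4)
print(steps)  # 750 updates for one epoch, versus a fixed max_steps=200
```

With epoch-based training the number of updates scales with the dataset, so adding a second dataset lengthens training automatically, whereas a fixed step budget would not.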

|

winglian/mistral-11b-128k | winglian | 2023-11-12T15:49:06Z | 2,137 | 2 | transformers | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"pretrained",

"custom_code",

"en",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2023-11-12T15:19:16Z | ---

license: apache-2.0

pipeline_tag: text-generation

language:

- en

tags:

- pretrained

inference:
  parameters:
    temperature: 0.7

---

# Mistral YARN 128k 11b

This is a mergekit merge of Nous Research's Yarn-Mistral-7b-128k Large Language Model (LLM) to create an 11 billion parameter pretrained generative text model with a context

|

Changgil/k2s3_test_24001 | Changgil | 2024-02-21T06:42:23Z | 2,137 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"conversational",

"ko",

"license:llama2",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-02-14T05:59:10Z | ---

license: llama2

language:

- ko

---

---

## Developed by:

* Changgil Song

## Model Number:

* k2s3_test_24001

## Base Model:

* [meta-llama/Llama-2-13b-chat-hf](https://huggingface.co/meta-llama/Llama-2-13b-chat-hf)

### Training Data

* The model was trained on a diverse dataset of approximately 800 million tokens, including the Standard Korean Dictionary, KULLM training data from Korea University, abstracts of master's and doctoral theses, and Korean language samples from AI Hub.

### Training Method

* This model was fine-tuned from the "meta-llama/Llama-2-13b-chat-hf" base model using PEFT (Parameter-Efficient Fine-Tuning) with LoRA (Low-Rank Adaptation).

### Hardware and Software

* Hardware: Two A100 GPUs (80 GB each) were used for training.

* Training Factors: This model was fine-tuned using PEFT LoRA with the Hugging Face SFTTrainer, with FSDP applied. Key parameters included LoRA r = 8, LoRA alpha = 16, 2 training epochs, a batch size of 1, and 32 gradient accumulation steps.
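The numbers listed above can be turned into a few derived quantities. This is a back-of-the-envelope sketch, not the author's training script: it assumes the batch size of 1 is per GPU, that both GPUs process different samples, and it uses Llama-2-13B's hidden size of 5120 for one square projection matrix. Which modules were actually adapted is not stated in the card.

```python
def lora_scaling(r, alpha):
    """Factor applied to the low-rank update B @ A in standard LoRA."""
    return alpha / r


def lora_extra_params(d_in, d_out, r):
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix."""
    return r * (d_in + d_out)


def effective_batch(per_device_batch, grad_accum, num_gpus):
    """Samples contributing to one optimizer update (assumes data-parallel GPUs)."""
    return per_device_batch * grad_accum * num_gpus


print(lora_scaling(r=8, alpha=16))          # 2.0
print(lora_extra_params(5120, 5120, r=8))   # 81920
print(effective_batch(1, 32, 2))            # 64
```

Keeping alpha / r the same when changing the rank is a common way to keep the update magnitude comparable in later fine-tuning runs.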

### Caution

* When fine-tuning this model further, consider the specific parameters used during training, such as the LoRA r and LoRA alpha values, to ensure compatibility and optimal performance.

### Additional Information

* Training used the FSDP (Fully Sharded Data Parallel) feature through the Hugging Face SFTTrainer for efficient memory usage and faster training. |

Chrisisis/5Cqc1AdayJLwD1dXYJFQSytKRe1jNB4a84qop37d5wM5WGxH_vgg | Chrisisis | 2024-02-24T08:25:51Z | 2,136 | 0 | keras | [

"keras",

"region:us"

]

| null | 2024-02-05T18:34:28Z | Entry not found |

aloobun/CosmicBun-8B | aloobun | 2024-05-02T20:29:05Z | 2,136 | 3 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"mergekit",

"merge",

"math",

"llama3",

"physics",

"chemistry",

"biology",

"dolphin",

"conversational",

"arxiv:2311.03099",

"arxiv:2306.01708",

"base_model:cognitivecomputations/dolphin-2.9-llama3-8b",

"base_model:Weyaxi/Einstein-v6.1-Llama3-8B",

"base_model:Locutusque/llama-3-neural-chat-v1-8b",

"license:mit",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

]

| text-generation | 2024-05-01T16:06:34Z | ---

license: mit

library_name: transformers

tags:

- mergekit

- merge

- math

- llama3

- physics

- chemistry

- biology

- dolphin

base_model:

- cognitivecomputations/dolphin-2.9-llama3-8b

- Weyaxi/Einstein-v6.1-Llama3-8B

- Locutusque/llama-3-neural-chat-v1-8b

model-index:
- name: CosmicBun-8B
  results:
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: AI2 Reasoning Challenge (25-Shot)
      type: ai2_arc
      config: ARC-Challenge
      split: test
      args:
        num_few_shot: 25
    metrics:
    - type: acc_norm
      value: 61.86
      name: normalized accuracy
    source:
      url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=aloobun/CosmicBun-8B
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: HellaSwag (10-Shot)
      type: hellaswag
      split: validation
      args:
        num_few_shot: 10
    metrics:
    - type: acc_norm
      value: 84.29
      name: normalized accuracy
    source:
      url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=aloobun/CosmicBun-8B
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: MMLU (5-Shot)
      type: cais/mmlu
      config: all
      split: test
      args:
        num_few_shot: 5
    metrics:
    - type: acc
      value: 65.53
      name: accuracy
    source:
      url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=aloobun/CosmicBun-8B
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: TruthfulQA (0-shot)
      type: truthful_qa
      config: multiple_choice
      split: validation
      args:
        num_few_shot: 0
    metrics:
    - type: mc2
      value: 54.08
    source:
      url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=aloobun/CosmicBun-8B
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: Winogrande (5-shot)
      type: winogrande
      config: winogrande_xl
      split: validation
      args:
        num_few_shot: 5
    metrics:
    - type: acc
      value: 78.85
      name: accuracy
    source:
      url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=aloobun/CosmicBun-8B
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: GSM8k (5-shot)
      type: gsm8k
      config: main
      split: test
      args:
        num_few_shot: 5
    metrics:
    - type: acc
      value: 68.23
      name: accuracy
    source:
      url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=aloobun/CosmicBun-8B
      name: Open LLM Leaderboard

---

# model

This is a merge of pre-trained language models created using [mergekit](https://github.com/cg123/mergekit).

### Merge Method

This model was merged using the [DARE](https://arxiv.org/abs/2311.03099) [TIES](https://arxiv.org/abs/2306.01708) merge method using [Locutusque/llama-3-neural-chat-v1-8b](https://huggingface.co/Locutusque/llama-3-neural-chat-v1-8b) as a base.
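For readers unfamiliar with DARE, the sketch below illustrates the drop-and-rescale idea on plain Python lists: each fine-tuned model's difference from the base is randomly kept with probability `density`, the surviving values are rescaled by `1 / density`, and the result is added to the base with a per-model `weight`. This is a simplified illustration under those assumptions, not mergekit's implementation, and it omits the TIES sign-election step entirely. The function names are made up for this example.

```python
import random


def dare_delta(base, finetuned, density, rng):
    """Keep each delta with probability `density`; rescale survivors by 1/density."""
    deltas = []
    for b, f in zip(base, finetuned):
        delta = f - b
        keep = rng.random() < density
        deltas.append(delta / density if keep else 0.0)
    return deltas


def merge(base, models, rng=None):
    """models: list of (finetuned_weights, density, weight) tuples."""
    rng = rng or random.Random(0)
    merged = list(base)
    for finetuned, density, weight in models:
        for i, d in enumerate(dare_delta(base, finetuned, density, rng)):
            merged[i] += weight * d
    return merged


# With density 1.0 nothing is dropped, so this is a plain weighted delta merge.
print(merge([1.0, 2.0], [([2.0, 4.0], 1.0, 0.5)]))  # [1.5, 3.0]
```

Rescaling by `1 / density` keeps the expected value of each delta unchanged, which is why fairly aggressive dropping can still preserve a model's behaviour on average.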

### Models Merged

The following models were included in the merge:

* [cognitivecomputations/dolphin-2.9-llama3-8b](https://huggingface.co/cognitivecomputations/dolphin-2.9-llama3-8b)

* [Weyaxi/Einstein-v6.1-Llama3-8B](https://huggingface.co/Weyaxi/Einstein-v6.1-Llama3-8B)

### Configuration

The following YAML configuration was used to produce this model:

```yaml
base_model: Locutusque/llama-3-neural-chat-v1-8b
dtype: bfloat16
merge_method: dare_ties
parameters:
  int8_mask: 1.0
  normalize: 0.0
slices:
- sources:
  - layer_range: [0, 4]
    model: cognitivecomputations/dolphin-2.9-llama3-8b
    parameters:
      density: 1.0
      weight: 0.6
  - layer_range: [0, 4]
    model: Weyaxi/Einstein-v6.1-Llama3-8B
    parameters:
      density: 0.6
      weight: 0.5
  - layer_range: [0, 4]
    model: Locutusque/llama-3-neural-chat-v1-8b
    parameters:
      density: 1.0
      weight: 0.5
- sources:
  - layer_range: [4, 8]
    model: cognitivecomputations/dolphin-2.9-llama3-8b
    parameters:
      density: 0.8
      weight: 0.1
  - layer_range: [4, 8]
    model: Weyaxi/Einstein-v6.1-Llama3-8B
    parameters:
      density: 1.0
      weight: 0.2
  - layer_range: [4, 8]
    model: Locutusque/llama-3-neural-chat-v1-8b
    parameters:
      density: 1.0
      weight: 0.7
- sources:
  - layer_range: [8, 12]
    model: cognitivecomputations/dolphin-2.9-llama3-8b
    parameters:
      density: 0.7
      weight: 0.1
  - layer_range: [8, 12]
    model: Weyaxi/Einstein-v6.1-Llama3-8B
    parameters:
      density: 0.7
      weight: 0.2
  - layer_range: [8, 12]
    model: Locutusque/llama-3-neural-chat-v1-8b
    parameters:
      density: 0.7
      weight: 0.6
- sources:
  - layer_range: [12, 16]
    model: cognitivecomputations/dolphin-2.9-llama3-8b
    parameters:
      density: 0.9
      weight: 0.2
  - layer_range: [12, 16]
    model: Weyaxi/Einstein-v6.1-Llama3-8B
    parameters:
      density: 0.6
      weight: 0.6
  - layer_range: [12, 16]
    model: Locutusque/llama-3-neural-chat-v1-8b
    parameters:
      density: 0.7
      weight: 0.3
- sources:
  - layer_range: [16, 20]
    model: cognitivecomputations/dolphin-2.9-llama3-8b
    parameters:
      density: 1.0
      weight: 0.2
  - layer_range: [16, 20]
    model: Weyaxi/Einstein-v6.1-Llama3-8B
    parameters:
      density: 1.0
      weight: 0.2
  - layer_range: [16, 20]
    model: Locutusque/llama-3-neural-chat-v1-8b
    parameters:
      density: 0.9
      weight: 0.4
- sources:
  - layer_range: [20, 24]
    model: cognitivecomputations/dolphin-2.9-llama3-8b
    parameters:
      density: 0.7
      weight: 0.2
  - layer_range: [20, 24]
    model: Weyaxi/Einstein-v6.1-Llama3-8B
    parameters:
      density: 0.9
      weight: 0.3
  - layer_range: [20, 24]
    model: Locutusque/llama-3-neural-chat-v1-8b
    parameters:
      density: 1.0
      weight: 0.4
- sources:
  - layer_range: [24, 28]
    model: cognitivecomputations/dolphin-2.9-llama3-8b
    parameters:
      density: 1.0
      weight: 0.4
  - layer_range: [24, 28]
    model: Weyaxi/Einstein-v6.1-Llama3-8B
    parameters:
      density: 0.8
      weight: 0.2
  - layer_range: [24, 28]
    model: Locutusque/llama-3-neural-chat-v1-8b
    parameters:
      density: 0.9
      weight: 0.4
- sources:
  - layer_range: [28, 32]
    model: cognitivecomputations/dolphin-2.9-llama3-8b
    parameters:
      density: 1.0
      weight: 0.3
  - layer_range: [28, 32]
    model: Weyaxi/Einstein-v6.1-Llama3-8B
    parameters:
      density: 0.9
      weight: 0.2
  - layer_range: [28, 32]
    model: Locutusque/llama-3-neural-chat-v1-8b
    parameters:
      density: 1.0
      weight: 0.3
```

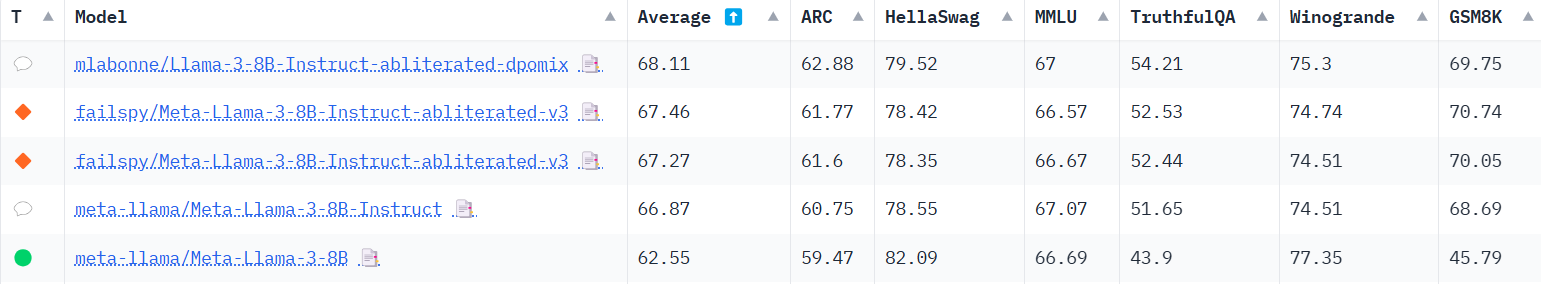

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_aloobun__CosmicBun-8B)

| Metric |Value|

|---------------------------------|----:|

|Avg. |68.81|

|AI2 Reasoning Challenge (25-Shot)|61.86|

|HellaSwag (10-Shot) |84.29|

|MMLU (5-Shot) |65.53|

|TruthfulQA (0-shot) |54.08|

|Winogrande (5-shot) |78.85|

|GSM8k (5-shot) |68.23|
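The reported average is the unweighted mean of the six benchmark scores in the table, which can be checked with a few lines of Python (the dictionary simply restates the table values):

```python
scores = {
    "AI2 Reasoning Challenge (25-Shot)": 61.86,
    "HellaSwag (10-Shot)": 84.29,
    "MMLU (5-Shot)": 65.53,
    "TruthfulQA (0-shot)": 54.08,
    "Winogrande (5-shot)": 78.85,
    "GSM8k (5-shot)": 68.23,
}

# Unweighted mean over the six benchmarks.
average = sum(scores.values()) / len(scores)
print(round(average, 2))  # 68.81
```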

|

RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf | RichardErkhov | 2024-06-15T05:09:22Z | 2,136 | 0 | null | [

"gguf",

"region:us"

]

| null | 2024-06-15T04:11:13Z | Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

mistral_9B_instruct_v0.2 - GGUF

- Model creator: https://huggingface.co/cloudyu/

- Original model: https://huggingface.co/cloudyu/mistral_9B_instruct_v0.2/

| Name | Quant method | Size |

| ---- | ---- | ---- |

| [mistral_9B_instruct_v0.2.Q2_K.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q2_K.gguf) | Q2_K | 3.13GB |

| [mistral_9B_instruct_v0.2.IQ3_XS.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.IQ3_XS.gguf) | IQ3_XS | 3.48GB |

| [mistral_9B_instruct_v0.2.IQ3_S.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.IQ3_S.gguf) | IQ3_S | 3.67GB |

| [mistral_9B_instruct_v0.2.Q3_K_S.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q3_K_S.gguf) | Q3_K_S | 3.65GB |

| [mistral_9B_instruct_v0.2.IQ3_M.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.IQ3_M.gguf) | IQ3_M | 3.79GB |

| [mistral_9B_instruct_v0.2.Q3_K.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q3_K.gguf) | Q3_K | 4.05GB |

| [mistral_9B_instruct_v0.2.Q3_K_M.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q3_K_M.gguf) | Q3_K_M | 4.05GB |

| [mistral_9B_instruct_v0.2.Q3_K_L.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q3_K_L.gguf) | Q3_K_L | 4.41GB |

| [mistral_9B_instruct_v0.2.IQ4_XS.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.IQ4_XS.gguf) | IQ4_XS | 4.55GB |

| [mistral_9B_instruct_v0.2.Q4_0.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q4_0.gguf) | Q4_0 | 4.74GB |

| [mistral_9B_instruct_v0.2.IQ4_NL.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.IQ4_NL.gguf) | IQ4_NL | 4.79GB |

| [mistral_9B_instruct_v0.2.Q4_K_S.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q4_K_S.gguf) | Q4_K_S | 4.78GB |

| [mistral_9B_instruct_v0.2.Q4_K.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q4_K.gguf) | Q4_K | 5.04GB |

| [mistral_9B_instruct_v0.2.Q4_K_M.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q4_K_M.gguf) | Q4_K_M | 5.04GB |

| [mistral_9B_instruct_v0.2.Q4_1.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q4_1.gguf) | Q4_1 | 5.26GB |

| [mistral_9B_instruct_v0.2.Q5_0.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q5_0.gguf) | Q5_0 | 5.77GB |

| [mistral_9B_instruct_v0.2.Q5_K_S.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q5_K_S.gguf) | Q5_K_S | 5.77GB |

| [mistral_9B_instruct_v0.2.Q5_K.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q5_K.gguf) | Q5_K | 5.93GB |

| [mistral_9B_instruct_v0.2.Q5_K_M.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q5_K_M.gguf) | Q5_K_M | 5.93GB |

| [mistral_9B_instruct_v0.2.Q5_1.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q5_1.gguf) | Q5_1 | 6.29GB |

| [mistral_9B_instruct_v0.2.Q6_K.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q6_K.gguf) | Q6_K | 6.87GB |

| [mistral_9B_instruct_v0.2.Q8_0.gguf](https://huggingface.co/RichardErkhov/cloudyu_-_mistral_9B_instruct_v0.2-gguf/blob/main/mistral_9B_instruct_v0.2.Q8_0.gguf) | Q8_0 | 8.89GB |

Original model description:

---

license: cc-by-4.0

---

```

This is a 40-layer model based on the Mistral architecture,

fine-tuned (SFT) on vicgalle/alpaca-gpt4.

Prompt template: Alpaca format.

```
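The card does not spell out the template text. As a hedged sketch, the snippet below builds a prompt using the commonly used Alpaca template for instructions without additional input; the exact wording this model was trained with is an assumption, and `build_prompt` is a name chosen for this example.

```python
# Commonly used Alpaca template (no-input variant); assumed, not confirmed by the card.
ALPACA_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n"
    "### Response:\n"
)


def build_prompt(instruction):
    """Wrap a user instruction in the Alpaca prompt format."""
    return ALPACA_TEMPLATE.format(instruction=instruction.strip())


print(build_prompt("write me a story about yosemite."))
```

The model's completion is then expected to follow the final `### Response:` line.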

example output

```

<s> write me a story about yosemite.

Once upon a time, in the heart of California's Sierra Nevada mountains, there lay a breathtakingly beautiful place known as Yosemite National Park. This natural wonderland was home to some of the most magnificent geological formations and scenic vistas that could be found anywhere on earth. It was a place where nature had been given free reign to create its masterpieces without any interference from mankind.

Yosemite was a land of towering sequoia trees, cascading waterfalls, crystal-clear streams, and majestic cliffs that seemed to stretch upwards into the heavens themselves. The air was always cool and fresh, filled with the sweet scent of pine and the soft sound of birds chirping in the treetops. Visitors were constantly amazed by the sheer beauty and grandeur of this magical place.

One particular day, as the sun began to rise over the horizon, a young couple, Sarah and Jack, made their way through the ancient forest, hand in hand. They had come to Yosemite for their honeymoon, eager to explore the wonders of this natural paradise together. As they walked deeper into the park, they marveled at the sights around them – the towering granite domes, the sparkling rivers, and the vibrant colors of the wildflowers that carpeted the ground beneath their feet.

Sarah and Jack continued their journey, taking in all the beauty that Yosemite had to offer. They climbed up the steep trails to the top of the mountain overlooks, gazing out at the stunning panoramic views before them. They sat quietly by the side of the river, watching as the fish swam gracefully through the clear waters. And they shared stories and memories, laughing and smiling as they looked into each other’s eyes.

As the days passed, Sarah and Jack grew more and more enamored with Yosemite. They felt like they were part of something greater than themselves – a connection not only to each other but also to the incredible natural world that surrounded them. They left no trace behind, leaving only footprints in the sand and memories in their hearts.

When it was finally time for Sarah and Jack to leave Yosemite, they both knew that they would carry these experiences with them forever.

```

|

wbbbbb/wav2vec2-large-chinese-zh-cn | wbbbbb | 2023-09-11T00:07:38Z | 2,135 | 36 | transformers | [

"transformers",

"pytorch",

"tensorboard",

"safetensors",

"wav2vec2",

"pretraining",

"audio",

"automatic-speech-recognition",

"speech",

"xlsr-fine-tuning-week",

"zh",

"dataset:common_voice",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

]

| automatic-speech-recognition | 2022-07-18T06:21:56Z | ---

language: zh

datasets:

- common_voice

metrics:

- wer

- cer

tags:

- audio

- automatic-speech-recognition

- speech

- xlsr-fine-tuning-week

license: apache-2.0

model-index:
- name: XLSR Wav2Vec2 Chinese (zh-CN) by wbbbbb
  results:
  - task:
      name: Speech Recognition
      type: automatic-speech-recognition
    dataset:
      name: Common Voice zh-CN
      type: common_voice
      args: zh-CN
    metrics:
    - name: Test WER
      type: wer
      value: 70.47
    - name: Test CER
      type: cer
      value: 12.30

---

# Fine-tuned XLSR-53 large model for speech recognition in Chinese

Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Chinese using the train and validation splits of [Common Voice 6.1](https://huggingface.co/datasets/common_voice), [CSS10](https://github.com/Kyubyong/css10) and [ST-CMDS](http://www.openslr.org/38/).

When using this model, make sure that your speech input is sampled at 16 kHz.

This model was fine-tuned on an RTX 3090 for 50 hours.

The script used for training can be found here: https://github.com/jonatasgrosman/wav2vec2-sprint
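Since the model expects 16 kHz input, it can be useful to check a file's sample rate before transcribing. The sketch below reads the rate from a PCM WAV header using only the standard library; the helper names are illustrative, and it does not handle MP3 or other formats. Files at a different rate need resampling first, for example by loading them with `librosa.load(path, sr=16_000)` as the evaluation script in this card does.

```python
import wave

EXPECTED_RATE = 16_000  # Hz, as required by this model card


def wav_sample_rate(path):
    """Read the sample rate from a PCM WAV header without decoding the audio."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def needs_resampling(path):
    """True if the file's sample rate differs from the expected 16 kHz."""
    return wav_sample_rate(path) != EXPECTED_RATE
```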

## Usage

The model can be used directly (without a language model) as follows...

Using the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) library:

```python

from huggingsound import SpeechRecognitionModel

model = SpeechRecognitionModel("wbbbbb/wav2vec2-large-chinese-zh-cn")

audio_paths = ["/path/to/file.mp3", "/path/to/another_file.wav"]

transcriptions = model.transcribe(audio_paths)

```

## Evaluation

The model can be evaluated as follows on the Chinese (zh-CN) test data of Common Voice.

```python
import torch
import re
import librosa
from datasets import load_dataset, load_metric
from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor
import warnings
import os

os.environ["KMP_AFFINITY"] = ""

LANG_ID = "zh-CN"
# Path used in the original script, presumably a local checkpoint directory;
# use "wbbbbb/wav2vec2-large-chinese-zh-cn" to load the model from the Hub.
MODEL_ID = "zh-CN-output-aishell"
DEVICE = "cuda"

test_dataset = load_dataset("common_voice", LANG_ID, split="test")

wer = load_metric("wer")
cer = load_metric("cer")

processor = Wav2Vec2Processor.from_pretrained(MODEL_ID)
model = Wav2Vec2ForCTC.from_pretrained(MODEL_ID)
model.to(DEVICE)


# Preprocessing the datasets.
# We need to read the audio files as arrays
def speech_file_to_array_fn(batch):
    with warnings.catch_warnings():
        warnings.simplefilter("ignore")
        # librosa resamples to 16 kHz while loading
        speech_array, sampling_rate = librosa.load(batch["path"], sr=16_000)
    batch["speech"] = speech_array
    # Keep only CJK characters and digits in the reference text
    batch["sentence"] = (
        re.sub("([^\u4e00-\u9fa5\u0030-\u0039])", "", batch["sentence"]).lower() + " "
    )
    return batch


test_dataset = test_dataset.map(
    speech_file_to_array_fn,
    num_proc=15,
    remove_columns=['client_id', 'up_votes', 'down_votes', 'age', 'gender', 'accent', 'locale', 'segment'],
)


# Run the model on each batch and decode the predicted token IDs
def evaluate(batch):
    inputs = processor(
        batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True
    )

    with torch.no_grad():
        logits = model(
            inputs.input_values.to(DEVICE),
            attention_mask=inputs.attention_mask.to(DEVICE),
        ).logits

    pred_ids = torch.argmax(logits, dim=-1)
    batch["pred_strings"] = processor.batch_decode(pred_ids)
    return batch


result = test_dataset.map(evaluate, batched=True, batch_size=8)

predictions = [x.lower() for x in result["pred_strings"]]
references = [x.lower() for x in result["sentence"]]

print(
    f"WER: {wer.compute(predictions=predictions, references=references, chunk_size=1000) * 100}"
)
print(f"CER: {cer.compute(predictions=predictions, references=references) * 100}")
```

**Test Result**:

The table below reports the Word Error Rate (WER) and the Character Error Rate (CER) of the model. I ran the evaluation script described above on the other models as well (on 2022-07-18). Note that the results may differ from those previously reported for those models, probably because of differences in the evaluation scripts used.

| Model | WER | CER |

| ------------- | ------------- | ------------- |

| wbbbbb/wav2vec2-large-chinese-zh-cn | **70.47%** | **12.30%** |

| jonatasgrosman/wav2vec2-large-xlsr-53-chinese-zh-cn | 82.37% | 19.03% |

| ydshieh/wav2vec2-large-xlsr-53-chinese-zh-cn-gpt | 84.01% | 20.95% |
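The large gap between WER and CER in the table is typical for Chinese. The sketch below is a minimal pure-Python illustration of how the two rates are derived from an edit distance; it is not the metric implementation used by the evaluation script, and the example sentence is made up. Because Chinese text has no spaces, a sentence counts as a single "word", so one wrong character makes the whole word wrong.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences."""
    previous = list(range(len(hyp) + 1))
    for i, ref_item in enumerate(ref, start=1):
        current = [i]
        for j, hyp_item in enumerate(hyp, start=1):
            cost = 0 if ref_item == hyp_item else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution or match
        previous = current
    return previous[-1]


def cer(reference, hypothesis):
    """Character error rate: edits divided by reference length in characters."""
    return edit_distance(list(reference), list(hypothesis)) / len(reference)


def wer(reference, hypothesis):
    """Word error rate: edits divided by reference length in whitespace-separated words."""
    ref_words = reference.split()
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


ref = "今天天气很好"
hyp = "今天天汽很好"
print(cer(ref, hyp))  # one wrong character out of six
print(wer(ref, hyp))  # 1.0, since the unsegmented sentence is a single "word"
```

For this reason CER is usually the more informative number when comparing Chinese speech recognition models.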

## Citation

If you want to cite this model you can use this:

```bibtex

@misc{grosman2021xlsr53-large-chinese,

title={Fine-tuned {XLSR}-53 large model for speech recognition in {C}hinese},

author={Grosman, Jonatas},

howpublished={\url{https://huggingface.co/wbbbbb/wav2vec2-large-chinese-zh-cn}},

year={2021}

}

``` |

TheBloke/neural-chat-7B-v3-1-GGUF | TheBloke | 2023-11-17T14:02:26Z | 2,135 | 57 | transformers | [

"transformers",

"gguf",

"mistral",

"base_model:Intel/neural-chat-7b-v3-1",

"license:apache-2.0",

"text-generation-inference",

"region:us"

]

| null | 2023-11-15T18:18:55Z | ---

base_model: Intel/neural-chat-7b-v3-1

inference: false

license: apache-2.0

model_creator: Intel

model_name: Neural Chat 7B v3-1

model_type: mistral

prompt_template: '### System:

{system_message}

### User:

{prompt}

### Assistant:

'

quantized_by: TheBloke

---

<!-- markdownlint-disable MD041 -->

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Neural Chat 7B v3-1 - GGUF

- Model creator: [Intel](https://huggingface.co/Intel)

- Original model: [Neural Chat 7B v3-1](https://huggingface.co/Intel/neural-chat-7b-v3-1)

<!-- description start -->

## Description

This repo contains GGUF format model files for [Intel's Neural Chat 7B v3-1](https://huggingface.co/Intel/neural-chat-7b-v3-1).

These files were quantised using hardware kindly provided by [Massed Compute](https://massedcompute.com/).

<!-- description end -->

<!-- README_GGUF.md-about-gguf start -->

### About GGUF

GGUF is a new format introduced by the llama.cpp team on August 21st 2023. It is a replacement for GGML, which is no longer supported by llama.cpp.

Here is an incomplete list of clients and libraries that are known to support GGUF:

* [llama.cpp](https://github.com/ggerganov/llama.cpp). The source project for GGUF. Offers a CLI and a server option.

* [text-generation-webui](https://github.com/oobabooga/text-generation-webui), the most widely used web UI, with many features and powerful extensions. Supports GPU acceleration.

* [KoboldCpp](https://github.com/LostRuins/koboldcpp), a fully featured web UI, with GPU acceleration across all platforms and GPU architectures. Especially good for storytelling.

* [LM Studio](https://lmstudio.ai/), an easy-to-use and powerful local GUI for Windows and macOS (Silicon), with GPU acceleration.

* [LoLLMS Web UI](https://github.com/ParisNeo/lollms-webui), a great web UI with many interesting and unique features, including a full model library for easy model selection.

* [Faraday.dev](https://faraday.dev/), an attractive and easy-to-use character-based chat GUI for Windows and macOS (both Silicon and Intel), with GPU acceleration.

* [ctransformers](https://github.com/marella/ctransformers), a Python library with GPU acceleration, LangChain support, and an OpenAI-compatible API server.

* [llama-cpp-python](https://github.com/abetlen/llama-cpp-python), a Python library with GPU accel, LangChain support, and OpenAI-compatible API server.

* [candle](https://github.com/huggingface/candle), a Rust ML framework with a focus on performance, including GPU support, and ease of use.

<!-- README_GGUF.md-about-gguf end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF)

* [Intel's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/Intel/neural-chat-7b-v3-1)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: Orca-Hashes

```

### System:

{system_message}

### User:

{prompt}

### Assistant:

```
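As a minimal sketch, the template above can be filled in programmatically before the text is passed to whichever GGUF client you use. The function name `build_prompt` is hypothetical, and the sketch assumes the sections are separated by single newlines and that the text ends right after the `### Assistant:` header; adjust the separators if your client expects something different.

```python
def build_prompt(prompt: str, system_message: str = "") -> str:
    """Fill the Orca-Hashes template shown above.

    Assumption: sections are joined with single newlines, and the text ends
    right after the "### Assistant:" header so the model continues from there.
    """
    return (
        f"### System:\n{system_message}\n"
        f"### User:\n{prompt}\n"
        "### Assistant:\n"
    )


if __name__ == "__main__":
    print(build_prompt("What is GGUF?", "You are a helpful assistant."))
```

Keeping the template in one helper avoids small formatting drift between calls.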

<!-- prompt-template end -->

<!-- compatibility_gguf start -->

## Compatibility

These quantised GGUFv2 files are compatible with llama.cpp from August 27th 2023 onwards, as of commit [d0cee0d](https://github.com/ggerganov/llama.cpp/commit/d0cee0d36d5be95a0d9088b674dbb27354107221).

They are also compatible with many third party UIs and libraries - please see the list at the top of this README.

## Explanation of quantisation methods

<details>

<summary>Click to see details</summary>

The new methods available are:

* GGML_TYPE_Q2_K - "type-1" 2-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Block scales and mins are quantized with 4 bits. This ends up effectively using 2.5625 bits per weight (bpw).

* GGML_TYPE_Q3_K - "type-0" 3-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Scales are quantized with 6 bits. This ends up using 3.4375 bpw.

* GGML_TYPE_Q4_K - "type-1" 4-bit quantization in super-blocks containing 8 blocks, each block having 32 weights. Scales and mins are quantized with 6 bits. This ends up using 4.5 bpw.

* GGML_TYPE_Q5_K - "type-1" 5-bit quantization. Same super-block structure as GGML_TYPE_Q4_K, resulting in 5.5 bpw.

* GGML_TYPE_Q6_K - "type-0" 6-bit quantization. Super-blocks with 16 blocks, each block having 16 weights. Scales are quantized with 8 bits. This ends up using 6.5625 bpw.

Refer to the Provided Files table below to see what files use which methods, and how.
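The bpw figures quoted above can be reproduced with a little arithmetic. The sketch below is illustrative only. It assumes, beyond what the list states, that each super-block also stores 16-bit scale values: one for "type-0" methods and two (scale and min) for "type-1" methods. The helper name `bits_per_weight` and the `fp16_fields` parameter are hypothetical. Q2_K is left out because this assumption does not reproduce the 2.5625 figure quoted for it.

```python
def bits_per_weight(quant_bits, blocks, weights_per_block, scale_bits,
                    has_mins, fp16_fields):
    """Effective bits per weight for one super-block.

    fp16_fields is an ASSUMPTION not stated in the list above: the number of
    16-bit super-block scale values stored alongside the quantised data.
    """
    weights = blocks * weights_per_block
    # "type-1" methods store a scale and a min per block, "type-0" only a scale.
    per_block_meta = scale_bits * (2 if has_mins else 1)
    total_bits = (quant_bits * weights
                  + per_block_meta * blocks
                  + 16 * fp16_fields)
    return total_bits / weights


# (quant_bits, blocks, weights_per_block, scale_bits, has_mins, fp16_fields)
LAYOUTS = {
    "Q3_K": (3, 16, 16, 6, False, 1),
    "Q4_K": (4, 8, 32, 6, True, 2),
    "Q5_K": (5, 8, 32, 6, True, 2),
    "Q6_K": (6, 16, 16, 8, False, 1),
}

if __name__ == "__main__":
    for name, layout in LAYOUTS.items():
        print(name, bits_per_weight(*layout))
```

For Q4_K, for example, a super-block holds 256 weights: 1024 bits of quantised values, 96 bits of block scales and mins, and 32 bits of assumed super-block scales, giving 1152 / 256 = 4.5 bpw.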

</details>

<!-- compatibility_gguf end -->

<!-- README_GGUF.md-provided-files start -->

## Provided files

| Name | Quant method | Bits | Size | Max RAM required | Use case |

| ---- | ---- | ---- | ---- | ---- | ----- |

| [neural-chat-7b-v3-1.Q2_K.gguf](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF/blob/main/neural-chat-7b-v3-1.Q2_K.gguf) | Q2_K | 2 | 3.08 GB| 5.58 GB | smallest, significant quality loss - not recommended for most purposes |

| [neural-chat-7b-v3-1.Q3_K_S.gguf](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF/blob/main/neural-chat-7b-v3-1.Q3_K_S.gguf) | Q3_K_S | 3 | 3.16 GB| 5.66 GB | very small, high quality loss |

| [neural-chat-7b-v3-1.Q3_K_M.gguf](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF/blob/main/neural-chat-7b-v3-1.Q3_K_M.gguf) | Q3_K_M | 3 | 3.52 GB| 6.02 GB | very small, high quality loss |

| [neural-chat-7b-v3-1.Q3_K_L.gguf](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF/blob/main/neural-chat-7b-v3-1.Q3_K_L.gguf) | Q3_K_L | 3 | 3.82 GB| 6.32 GB | small, substantial quality loss |

| [neural-chat-7b-v3-1.Q4_0.gguf](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF/blob/main/neural-chat-7b-v3-1.Q4_0.gguf) | Q4_0 | 4 | 4.11 GB| 6.61 GB | legacy; small, very high quality loss - prefer using Q3_K_M |

| [neural-chat-7b-v3-1.Q4_K_S.gguf](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF/blob/main/neural-chat-7b-v3-1.Q4_K_S.gguf) | Q4_K_S | 4 | 4.14 GB| 6.64 GB | small, greater quality loss |

| [neural-chat-7b-v3-1.Q4_K_M.gguf](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF/blob/main/neural-chat-7b-v3-1.Q4_K_M.gguf) | Q4_K_M | 4 | 4.37 GB| 6.87 GB | medium, balanced quality - recommended |

| [neural-chat-7b-v3-1.Q5_0.gguf](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF/blob/main/neural-chat-7b-v3-1.Q5_0.gguf) | Q5_0 | 5 | 5.00 GB| 7.50 GB | legacy; medium, balanced quality - prefer using Q4_K_M |

| [neural-chat-7b-v3-1.Q5_K_S.gguf](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF/blob/main/neural-chat-7b-v3-1.Q5_K_S.gguf) | Q5_K_S | 5 | 5.00 GB| 7.50 GB | large, low quality loss - recommended |

| [neural-chat-7b-v3-1.Q5_K_M.gguf](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF/blob/main/neural-chat-7b-v3-1.Q5_K_M.gguf) | Q5_K_M | 5 | 5.13 GB| 7.63 GB | large, very low quality loss - recommended |

| [neural-chat-7b-v3-1.Q6_K.gguf](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF/blob/main/neural-chat-7b-v3-1.Q6_K.gguf) | Q6_K | 6 | 5.94 GB| 8.44 GB | very large, extremely low quality loss |

| [neural-chat-7b-v3-1.Q8_0.gguf](https://huggingface.co/TheBloke/neural-chat-7B-v3-1-GGUF/blob/main/neural-chat-7b-v3-1.Q8_0.gguf) | Q8_0 | 8 | 7.70 GB| 10.20 GB | very large, extremely low quality loss - not recommended |

**Note**: the above RAM figures assume no GPU offloading. If layers are offloaded to the GPU, this will reduce RAM usage and use VRAM instead.
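In the table above, every "Max RAM required" figure is the file size plus 2.50 GB. As a rough sketch, the table can be used to pick a file for a given RAM budget. The helper `largest_fitting_quant` is hypothetical, and "largest file that fits" is only a simple heuristic; the "Use case" column remains the better guide (it does not recommend Q8_0, for instance).

```python
# (quant method, file size in GB, max RAM in GB), copied from the table above.
QUANTS = [
    ("Q2_K", 3.08, 5.58),
    ("Q3_K_S", 3.16, 5.66),
    ("Q3_K_M", 3.52, 6.02),
    ("Q3_K_L", 3.82, 6.32),
    ("Q4_0", 4.11, 6.61),
    ("Q4_K_S", 4.14, 6.64),
    ("Q4_K_M", 4.37, 6.87),
    ("Q5_0", 5.00, 7.50),
    ("Q5_K_S", 5.00, 7.50),
    ("Q5_K_M", 5.13, 7.63),
    ("Q6_K", 5.94, 8.44),
    ("Q8_0", 7.70, 10.20),
]


def largest_fitting_quant(ram_budget_gb):
    """Return the quant with the largest file whose max RAM fits, or None."""
    fitting = [q for q in QUANTS if q[2] <= ram_budget_gb]
    if not fitting:
        return None
    return max(fitting, key=lambda q: q[1])[0]


if __name__ == "__main__":
    for budget in (5.0, 7.0, 8.0, 16.0):
        print(budget, largest_fitting_quant(budget))
```

These figures assume no GPU offloading, so a machine that offloads layers to VRAM can likely use a larger file than this sketch suggests.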

<!-- README_GGUF.md-provided-files end -->

<!-- README_GGUF.md-how-to-download start -->

## How to download GGUF files

**Note for manual downloaders:** You almost never want to clone the entire repo! Multiple different quantisation formats are provided, and most users only want to pick and download a single file.

The following clients/libraries will automatically download models for you, providing a list of available models to choose from:

* LM Studio

* LoLLMS Web UI

* Faraday.dev

### In `text-generation-webui`

Under Download Model, you can enter the model repo: `TheBloke/neural-chat-7B-v3-1-GGUF` and below it, a specific filename to download, such as: `neural-chat-7b-v3-1.Q4_K_M.gguf`.

Then click Download.

### On the command line, including multiple files at once

I recommend using the `huggingface-hub` Python library:

```shell

pip3 install huggingface-hub

```

Then you can download any individual model file to the current directory, at high speed, with a command like this:

```shell

huggingface-cli download TheBloke/neural-chat-7B-v3-1-GGUF neural-chat-7b-v3-1.Q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

```

<details>

<summary>More advanced huggingface-cli download usage</summary>

You can also download multiple files at once with a pattern:

```shell

huggingface-cli download TheBloke/neural-chat-7B-v3-1-GGUF --local-dir . --local-dir-use-symlinks False --include='*Q4_K*gguf'

```

For more documentation on downloading with `huggingface-cli`, please see: [HF -> Hub Python Library -> Download files -> Download from the CLI](https://huggingface.co/docs/huggingface_hub/guides/download#download-from-the-cli).

To accelerate downloads on fast connections (1Gbit/s or higher), install `hf_transfer`:

```shell

pip3 install hf_transfer

```

Then set the environment variable `HF_HUB_ENABLE_HF_TRANSFER` to `1`:

```shell

HF_HUB_ENABLE_HF_TRANSFER=1 huggingface-cli download TheBloke/neural-chat-7B-v3-1-GGUF neural-chat-7b-v3-1.Q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

```
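If you prefer to script these downloads, the commands above can be assembled from Python. This is a minimal sketch that only wraps the `huggingface-cli` flags already shown in this section. The function names `build_download_command` and `download` are hypothetical, and the sketch assumes `huggingface-cli` is on your `PATH`.

```python
import os
import subprocess


def build_download_command(repo_id, filename=None, include=None, local_dir="."):
    """Assemble the huggingface-cli argument list used in the commands above."""
    cmd = ["huggingface-cli", "download", repo_id]
    if filename:
        cmd.append(filename)
    cmd += ["--local-dir", local_dir, "--local-dir-use-symlinks", "False"]
    if include:
        # Passed as a separate argument, so no shell quoting is needed.
        cmd += ["--include", include]
    return cmd


def download(repo_id, filename=None, include=None, fast=False):
    """Run the download; fast=True sets HF_HUB_ENABLE_HF_TRANSFER=1.

    fast=True only helps if the hf_transfer package is installed.
    """
    env = dict(os.environ)
    if fast:
        env["HF_HUB_ENABLE_HF_TRANSFER"] = "1"
    subprocess.run(build_download_command(repo_id, filename, include),
                   env=env, check=True)


# Example (performs a real download when called):
# download("TheBloke/neural-chat-7B-v3-1-GGUF", "neural-chat-7b-v3-1.Q4_K_M.gguf")
```

Building the argument list separately from running it makes the command easy to inspect or log before any network traffic happens.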