modelId (string, length 4-112) | lastModified (string, length 24) | tags (list) | pipeline_tag (string, 21 classes) | files (list) | publishedBy (string, length 2-37) | downloads_last_month (int32, 0-9.44M) | library (string, 15 classes) | modelCard (large string, length 0-100k) |

|---|---|---|---|---|---|---|---|---|

facebook/wav2vec2-large-xlsr-53-polish | 2021-03-17T07:54:08.000Z | [

"pytorch",

"wav2vec2",

"nl",

"dataset:common_voice",

"transformers",

"speech",

"audio",

"automatic-speech-recognition",

"license:apache-2.0"

]

| automatic-speech-recognition | [

".gitattributes",

"README.md",

"config.json",

"preprocessor_config.json",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer_config.json",

"vocab.json"

]

| facebook | 7 | transformers | ---

language: nl

datasets:

- common_voice

tags:

- speech

- audio

- automatic-speech-recognition

license: apache-2.0

---

## Evaluation on Common Voice PL Test

```python

import re

import torch
import torchaudio
from datasets import load_dataset, load_metric
from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

model_name = "facebook/wav2vec2-large-xlsr-53-polish"
device = "cuda"
# Punctuation stripped from the reference transcripts before scoring.
chars_to_ignore_regex = r'[\,\?\.\!\-\;\:\"]'

model = Wav2Vec2ForCTC.from_pretrained(model_name).to(device)
processor = Wav2Vec2Processor.from_pretrained(model_name)

ds = load_dataset("common_voice", "pl", split="test", data_dir="./cv-corpus-6.1-2020-12-11")

# Resample the 48 kHz clips to 16 kHz.
resampler = torchaudio.transforms.Resample(orig_freq=48_000, new_freq=16_000)


def map_to_array(batch):
    # Load one clip, resample it, and normalize its transcript.
    speech, _ = torchaudio.load(batch["path"])
    batch["speech"] = resampler(speech.squeeze(0)).numpy()
    batch["sampling_rate"] = resampler.new_freq
    batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower().replace("’", "'")
    return batch


ds = ds.map(map_to_array)


def map_to_pred(batch):
    # Run the model on a padded batch and greedily decode the CTC output.
    features = processor(batch["speech"], sampling_rate=batch["sampling_rate"][0], padding=True, return_tensors="pt")
    input_values = features.input_values.to(device)
    attention_mask = features.attention_mask.to(device)
    with torch.no_grad():
        logits = model(input_values, attention_mask=attention_mask).logits
    pred_ids = torch.argmax(logits, dim=-1)
    batch["predicted"] = processor.batch_decode(pred_ids)
    batch["target"] = batch["sentence"]
    return batch


result = ds.map(map_to_pred, batched=True, batch_size=16, remove_columns=list(ds.features.keys()))

wer = load_metric("wer")
print(wer.compute(predictions=result["predicted"], references=result["target"]))

```
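For readers unfamiliar with the metric, the sketch below illustrates how a corpus-level word error rate can be computed: the word-level edit distance between each reference and prediction, summed and divided by the total number of reference words. It is a standard-library illustration only, not the implementation behind `load_metric("wer")`; the function name `word_error_rate` and the toy sentences are assumptions made for this example.

```python
def word_error_rate(references, predictions):
    """Corpus-level WER: total word edits divided by total reference words."""
    total_edits = 0
    total_words = 0
    for ref, hyp in zip(references, predictions):
        ref_words, hyp_words = ref.split(), hyp.split()
        # Levenshtein distance over words, computed one row at a time.
        prev = list(range(len(hyp_words) + 1))
        for i, ref_word in enumerate(ref_words, start=1):
            curr = [i]
            for j, hyp_word in enumerate(hyp_words, start=1):
                cost = 0 if ref_word == hyp_word else 1
                curr.append(min(
                    prev[j] + 1,         # deletion (reference word missing)
                    curr[j - 1] + 1,     # insertion (extra predicted word)
                    prev[j - 1] + cost,  # substitution or exact match
                ))
            prev = curr
        total_edits += prev[-1]
        total_words += len(ref_words)
    return total_edits / total_words


# Toy data (hypothetical): one deleted word out of five reference words.
refs = ["to jest test", "dzień dobry"]
hyps = ["to test", "dzień dobry"]
print(word_error_rate(refs, hyps))  # 0.2
```

The value is a fraction; the result reported for this model appears to be the same quantity expressed as a percentage.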

**Result**: 24.6 % |

facebook/wav2vec2-large-xlsr-53-portuguese | 2021-03-11T11:44:02.000Z | [

"pytorch",

"wav2vec2",

"pt",

"dataset:common_voice",

"transformers",

"speech",

"audio",

"automatic-speech-recognition",

"license:apache-2.0"

]

| automatic-speech-recognition | [

".gitattributes",

"README.md",

"config.json",

"preprocessor_config.json",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer_config.json",

"vocab.json"

]

| facebook | 2,125 | transformers | ---

language: pt

datasets:

- common_voice

tags:

- speech

- audio

- automatic-speech-recognition

license: apache-2.0

---

## Evaluation on Common Voice PT Test

```python

import re

import torch
import torchaudio
from datasets import load_dataset, load_metric
from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

model_name = "facebook/wav2vec2-large-xlsr-53-portuguese"
device = "cuda"
# Punctuation stripped from the reference transcripts before scoring.
chars_to_ignore_regex = r'[\,\?\.\!\-\;\:\"]'

model = Wav2Vec2ForCTC.from_pretrained(model_name).to(device)
processor = Wav2Vec2Processor.from_pretrained(model_name)

ds = load_dataset("common_voice", "pt", split="test", data_dir="./cv-corpus-6.1-2020-12-11")

# Resample the 48 kHz clips to 16 kHz.
resampler = torchaudio.transforms.Resample(orig_freq=48_000, new_freq=16_000)


def map_to_array(batch):
    # Load one clip, resample it, and normalize its transcript.
    speech, _ = torchaudio.load(batch["path"])
    batch["speech"] = resampler(speech.squeeze(0)).numpy()
    batch["sampling_rate"] = resampler.new_freq
    batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower().replace("’", "'")
    return batch


ds = ds.map(map_to_array)


def map_to_pred(batch):
    # Run the model on a padded batch and greedily decode the CTC output.
    features = processor(batch["speech"], sampling_rate=batch["sampling_rate"][0], padding=True, return_tensors="pt")
    input_values = features.input_values.to(device)
    attention_mask = features.attention_mask.to(device)
    with torch.no_grad():
        logits = model(input_values, attention_mask=attention_mask).logits
    pred_ids = torch.argmax(logits, dim=-1)
    batch["predicted"] = processor.batch_decode(pred_ids)
    batch["target"] = batch["sentence"]
    return batch


result = ds.map(map_to_pred, batched=True, batch_size=16, remove_columns=list(ds.features.keys()))

wer = load_metric("wer")
print(wer.compute(predictions=result["predicted"], references=result["target"]))

```

**Result**: 27.1 % |

facebook/wav2vec2-large-xlsr-53-spanish | 2021-03-11T11:35:42.000Z | [

"pytorch",

"wav2vec2",

"es",

"dataset:common_voice",

"transformers",

"speech",

"audio",

"automatic-speech-recognition",

"license:apache-2.0"

]

| automatic-speech-recognition | [

".gitattributes",

"README.md",

"config.json",

"preprocessor_config.json",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer_config.json",

"vocab.json"

]

| facebook | 8,803 | transformers | ---

language: es

datasets:

- common_voice

tags:

- speech

- audio

- automatic-speech-recognition

license: apache-2.0

---

## Evaluation on Common Voice ES Test

```python

import re

import torch
import torchaudio
from datasets import load_dataset, load_metric
from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

model_name = "facebook/wav2vec2-large-xlsr-53-spanish"
device = "cuda"
# Punctuation stripped from the reference transcripts before scoring.
chars_to_ignore_regex = r'[\,\?\.\!\-\;\:\"]'

model = Wav2Vec2ForCTC.from_pretrained(model_name).to(device)
processor = Wav2Vec2Processor.from_pretrained(model_name)

ds = load_dataset("common_voice", "es", split="test", data_dir="./cv-corpus-6.1-2020-12-11")

# Resample the 48 kHz clips to 16 kHz.
resampler = torchaudio.transforms.Resample(orig_freq=48_000, new_freq=16_000)


def map_to_array(batch):
    # Load one clip, resample it, and normalize its transcript.
    speech, _ = torchaudio.load(batch["path"])
    batch["speech"] = resampler(speech.squeeze(0)).numpy()
    batch["sampling_rate"] = resampler.new_freq
    batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower().replace("’", "'")
    return batch


ds = ds.map(map_to_array)


def map_to_pred(batch):
    # Run the model on a padded batch and greedily decode the CTC output.
    features = processor(batch["speech"], sampling_rate=batch["sampling_rate"][0], padding=True, return_tensors="pt")
    input_values = features.input_values.to(device)
    attention_mask = features.attention_mask.to(device)
    with torch.no_grad():
        logits = model(input_values, attention_mask=attention_mask).logits
    pred_ids = torch.argmax(logits, dim=-1)
    batch["predicted"] = processor.batch_decode(pred_ids)
    batch["target"] = batch["sentence"]
    return batch


result = ds.map(map_to_pred, batched=True, batch_size=16, remove_columns=list(ds.features.keys()))

wer = load_metric("wer")
print(wer.compute(predictions=result["predicted"], references=result["target"]))

```

**Result**: 17.6 % |

facebook/wav2vec2-large-xlsr-53 | 2021-06-09T18:53:03.000Z | [

"pytorch",

"wav2vec2",

"pretraining",

"multilingual",

"dataset:common_voice",

"arxiv:2006.13979",

"transformers",

"speech",

"license:apache-2.0"

]

| [

".gitattributes",

"README.md",

"config.json",

"preprocessor_config.json",

"pytorch_model.bin"

]

| facebook | 14,492 | transformers | ---

language: multilingual

datasets:

- common_voice

tags:

- speech

license: apache-2.0

---

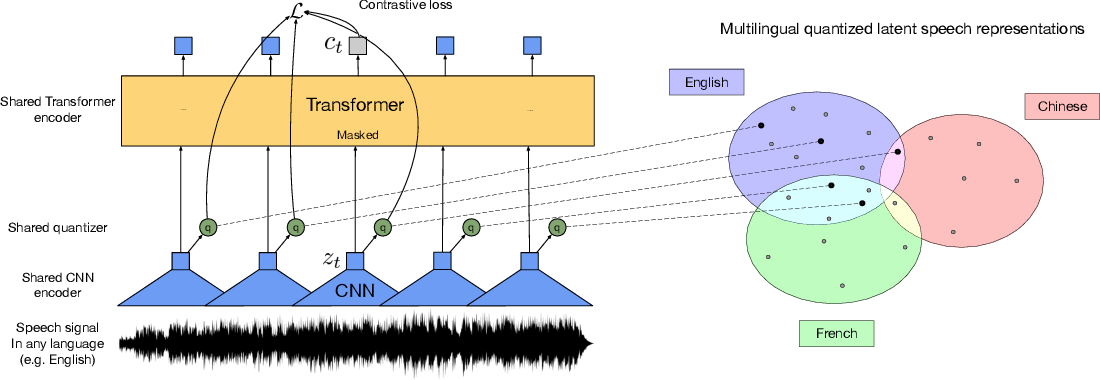

# Wav2Vec2-XLSR-53

[Facebook's XLSR-Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/)

This is the base model, pretrained on speech audio sampled at 16 kHz. When using the model, make sure that your speech input is also sampled at 16 kHz. Note that this model should be fine-tuned on a downstream task, such as automatic speech recognition. Check out [this blog](https://huggingface.co/blog/fine-tune-wav2vec2-english) for more information.
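As a rough illustration of the sampling-rate requirement, the following standard-library sketch checks whether a WAV file is already sampled at 16 kHz before it is passed to the model. The helper names (`sampling_rate`, `needs_resampling`, `write_silence`) and the file name are hypothetical and exist only for this example; the resampling itself would still have to be done with an audio library of your choice.

```python
import os
import tempfile
import wave

EXPECTED_RATE = 16_000  # sampling rate the model card asks for


def sampling_rate(path):
    """Read the sampling rate (in Hz) from a WAV file header."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def needs_resampling(path, expected_rate=EXPECTED_RATE):
    """Return True if the file is not already sampled at `expected_rate`."""
    return sampling_rate(path) != expected_rate


def write_silence(path, rate, n_frames=160):
    """Demo helper (hypothetical): write a short mono 16-bit silent WAV file."""
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)
        wav_file.setframerate(rate)
        wav_file.writeframes(b"\x00\x00" * n_frames)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        clip = os.path.join(tmp, "clip_48k.wav")
        write_silence(clip, 48_000)
        # A 48 kHz clip must be resampled before use.
        print(sampling_rate(clip), needs_resampling(clip))
```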

[Paper](https://arxiv.org/abs/2006.13979)

Authors: Alexis Conneau, Alexei Baevski, Ronan Collobert, Abdelrahman Mohamed, Michael Auli

**Abstract**

This paper presents XLSR which learns cross-lingual speech representations by pretraining a single model from the raw waveform of speech in multiple languages. We build on wav2vec 2.0 which is trained by solving a contrastive task over masked latent speech representations and jointly learns a quantization of the latents shared across languages. The resulting model is fine-tuned on labeled data and experiments show that cross-lingual pretraining significantly outperforms monolingual pretraining. On the CommonVoice benchmark, XLSR shows a relative phoneme error rate reduction of 72% compared to the best known results. On BABEL, our approach improves word error rate by 16% relative compared to a comparable system. Our approach enables a single multilingual speech recognition model which is competitive to strong individual models. Analysis shows that the latent discrete speech representations are shared across languages with increased sharing for related languages. We hope to catalyze research in low-resource speech understanding by releasing XLSR-53, a large model pretrained in 53 languages.

The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec#wav2vec-20.

# Usage

See [this notebook](https://colab.research.google.com/github/patrickvonplaten/notebooks/blob/master/Fine_Tune_XLSR_Wav2Vec2_on_Turkish_ASR_with_%F0%9F%A4%97_Transformers.ipynb) for more information on how to fine-tune the model.

|

|

facebook/wav2vec2-large | 2021-06-09T18:47:33.000Z | [

"pytorch",

"wav2vec2",

"pretraining",

"en",

"dataset:librispeech_asr",

"arxiv:2006.11477",

"transformers",

"speech",

"license:apache-2.0"

]

| [

".gitattributes",

"README.md",

"config.json",

"pytorch_model.bin"

]

| facebook | 671 | transformers | ---

language: en

datasets:

- librispeech_asr

tags:

- speech

license: apache-2.0

---

# Wav2Vec2-Large

[Facebook's Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/)

This is the base model, pretrained on speech audio sampled at 16 kHz. When using the model, make sure that your speech input is also sampled at 16 kHz. Note that this model should be fine-tuned on a downstream task, such as automatic speech recognition. Check out [this blog](https://huggingface.co/blog/fine-tune-wav2vec2-english) for more information.

[Paper](https://arxiv.org/abs/2006.11477)

Authors: Alexei Baevski, Henry Zhou, Abdelrahman Mohamed, Michael Auli

**Abstract**

We show for the first time that learning powerful representations from speech audio alone followed by fine-tuning on transcribed speech can outperform the best semi-supervised methods while being conceptually simpler. wav2vec 2.0 masks the speech input in the latent space and solves a contrastive task defined over a quantization of the latent representations which are jointly learned. Experiments using all labeled data of Librispeech achieve 1.8/3.3 WER on the clean/other test sets. When lowering the amount of labeled data to one hour, wav2vec 2.0 outperforms the previous state of the art on the 100 hour subset while using 100 times less labeled data. Using just ten minutes of labeled data and pre-training on 53k hours of unlabeled data still achieves 4.8/8.2 WER. This demonstrates the feasibility of speech recognition with limited amounts of labeled data.

The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec#wav2vec-20.

# Usage

See [this notebook](https://colab.research.google.com/drive/1FjTsqbYKphl9kL-eILgUc-bl4zVThL8F?usp=sharing) for more information on how to fine-tune the model. |

|

facebook/wmt19-de-en | 2020-12-11T21:39:51.000Z | [

"pytorch",

"fsmt",

"seq2seq",

"de",

"en",

"dataset:wmt19",

"arxiv:1907.06616",

"transformers",

"translation",

"wmt19",

"facebook",

"license:apache-2.0",

"text2text-generation"

]

| translation | [

".gitattributes",

"README.md",

"config.json",

"merges.txt",

"pytorch_model.bin",

"tokenizer_config.json",

"vocab-src.json",

"vocab-tgt.json"

]

| facebook | 4,223 | transformers | ---

language:

- de

- en

tags:

- translation

- wmt19

- facebook

license: apache-2.0

datasets:

- wmt19

metrics:

- bleu

thumbnail: https://huggingface.co/front/thumbnails/facebook.png

---

# FSMT

## Model description

This is a ported version of [fairseq wmt19 transformer](https://github.com/pytorch/fairseq/blob/master/examples/wmt19/README.md) for de-en.

For more details, please see [Facebook FAIR's WMT19 News Translation Task Submission](https://arxiv.org/abs/1907.06616).

The abbreviation FSMT stands for FairSeqMachineTranslation.

All four models are available:

* [wmt19-en-ru](https://huggingface.co/facebook/wmt19-en-ru)

* [wmt19-ru-en](https://huggingface.co/facebook/wmt19-ru-en)

* [wmt19-en-de](https://huggingface.co/facebook/wmt19-en-de)

* [wmt19-de-en](https://huggingface.co/facebook/wmt19-de-en)

## Intended uses & limitations

#### How to use

```python

from transformers import FSMTForConditionalGeneration, FSMTTokenizer

mname = "facebook/wmt19-de-en"

tokenizer = FSMTTokenizer.from_pretrained(mname)

model = FSMTForConditionalGeneration.from_pretrained(mname)

input = "Maschinelles Lernen ist großartig, oder?"

input_ids = tokenizer.encode(input, return_tensors="pt")

outputs = model.generate(input_ids)

decoded = tokenizer.decode(outputs[0], skip_special_tokens=True)

print(decoded) # Machine learning is great, isn't it?

```

#### Limitations and bias

- The original model (and this port) doesn't seem to handle inputs with repeated sub-phrases well; [content gets truncated](https://discuss.huggingface.co/t/issues-with-translating-inputs-containing-repeated-phrases/981).
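One way to spot inputs that may be affected is to look for repeated word n-grams before translating. The sketch below is a hypothetical pre-check written for illustration, not part of `transformers` or fairseq; the function name `repeated_phrases` and the default n-gram size are assumptions, and a match only suggests that the output is worth inspecting.

```python
from collections import Counter


def repeated_phrases(text, n=3):
    """Return the word n-grams that occur more than once in `text`."""
    words = text.lower().split()
    ngrams = [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]
    counts = Counter(ngrams)
    return sorted(gram for gram, count in counts.items() if count > 1)


# Hypothetical inputs: the first repeats a three-word phrase, the second does not.
print(repeated_phrases("ich bin hier und ich bin hier auch"))
print(repeated_phrases("Maschinelles Lernen ist großartig, oder?"))
```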

## Training data

Pretrained weights were left identical to the original model released by fairseq. For more details, please see the [paper](https://arxiv.org/abs/1907.06616).

## Eval results

pair | fairseq | transformers

-------|---------|----------

de-en | [42.3](http://matrix.statmt.org/matrix/output/1902?run_id=6750) | 41.35

The score is slightly below the score reported by `fairseq`, since `transformers` currently doesn't support:

- model ensembles, so the best-performing checkpoint (`model4.pt`) was ported.

- re-ranking

The score was calculated using this code:

```bash

git clone https://github.com/huggingface/transformers

cd transformers

export PAIR=de-en

export DATA_DIR=data/$PAIR

export SAVE_DIR=data/$PAIR

export BS=8

export NUM_BEAMS=15

mkdir -p $DATA_DIR

sacrebleu -t wmt19 -l $PAIR --echo src > $DATA_DIR/val.source

sacrebleu -t wmt19 -l $PAIR --echo ref > $DATA_DIR/val.target

echo $PAIR

PYTHONPATH="src:examples/seq2seq" python examples/seq2seq/run_eval.py facebook/wmt19-$PAIR $DATA_DIR/val.source $SAVE_DIR/test_translations.txt --reference_path $DATA_DIR/val.target --score_path $SAVE_DIR/test_bleu.json --bs $BS --task translation --num_beams $NUM_BEAMS

```

Note: fairseq reports using a beam size of 50, so you should get a slightly higher score if you re-run with `--num_beams 50`.

## Data Sources

- [training, etc.](http://www.statmt.org/wmt19/)

- [test set](http://matrix.statmt.org/test_sets/newstest2019.tgz?1556572561)

### BibTeX entry and citation info

```bibtex

@inproceedings{...,

year={2020},

title={Facebook FAIR's WMT19 News Translation Task Submission},

author={Ng, Nathan and Yee, Kyra and Baevski, Alexei and Ott, Myle and Auli, Michael and Edunov, Sergey},

booktitle={Proc. of WMT},

}

```

## TODO

- port model ensemble (fairseq uses 4 model checkpoints)

|

facebook/wmt19-en-de | 2020-12-11T21:39:55.000Z | [

"pytorch",

"fsmt",

"seq2seq",

"en",

"de",

"dataset:wmt19",

"arxiv:1907.06616",

"transformers",

"translation",

"wmt19",

"facebook",

"license:apache-2.0",

"text2text-generation"

]

| translation | [

".gitattributes",

"README.md",

"config.json",

"merges.txt",

"pytorch_model.bin",

"tokenizer_config.json",

"vocab-src.json",

"vocab-tgt.json"

]

| facebook | 4,230 | transformers | ---

language:

- en

- de

tags:

- translation

- wmt19

- facebook

license: apache-2.0

datasets:

- wmt19

metrics:

- bleu

thumbnail: https://huggingface.co/front/thumbnails/facebook.png

---

# FSMT

## Model description

This is a ported version of [fairseq wmt19 transformer](https://github.com/pytorch/fairseq/blob/master/examples/wmt19/README.md) for en-de.

For more details, please see [Facebook FAIR's WMT19 News Translation Task Submission](https://arxiv.org/abs/1907.06616).

The abbreviation FSMT stands for FairSeqMachineTranslation.

All four models are available:

* [wmt19-en-ru](https://huggingface.co/facebook/wmt19-en-ru)

* [wmt19-ru-en](https://huggingface.co/facebook/wmt19-ru-en)

* [wmt19-en-de](https://huggingface.co/facebook/wmt19-en-de)

* [wmt19-de-en](https://huggingface.co/facebook/wmt19-de-en)

## Intended uses & limitations

#### How to use

```python

from transformers import FSMTForConditionalGeneration, FSMTTokenizer

mname = "facebook/wmt19-en-de"

tokenizer = FSMTTokenizer.from_pretrained(mname)

model = FSMTForConditionalGeneration.from_pretrained(mname)

input = "Machine learning is great, isn't it?"

input_ids = tokenizer.encode(input, return_tensors="pt")

outputs = model.generate(input_ids)

decoded = tokenizer.decode(outputs[0], skip_special_tokens=True)

print(decoded) # Maschinelles Lernen ist großartig, oder?

```

#### Limitations and bias

- The original model (and this port) doesn't seem to handle inputs with repeated sub-phrases well; [content gets truncated](https://discuss.huggingface.co/t/issues-with-translating-inputs-containing-repeated-phrases/981).

## Training data

Pretrained weights were left identical to the original model released by fairseq. For more details, please see the [paper](https://arxiv.org/abs/1907.06616).

## Eval results

pair | fairseq | transformers

-------|---------|----------

en-de | [43.1](http://matrix.statmt.org/matrix/output/1909?run_id=6862) | 42.83

The score is slightly below the score reported by `fairseq`, since `transformers` currently doesn't support:

- model ensembles, so the best-performing checkpoint (`model4.pt`) was ported.

- re-ranking

The score was calculated using this code:

```bash

git clone https://github.com/huggingface/transformers

cd transformers

export PAIR=en-de

export DATA_DIR=data/$PAIR

export SAVE_DIR=data/$PAIR

export BS=8

export NUM_BEAMS=15

mkdir -p $DATA_DIR

sacrebleu -t wmt19 -l $PAIR --echo src > $DATA_DIR/val.source

sacrebleu -t wmt19 -l $PAIR --echo ref > $DATA_DIR/val.target

echo $PAIR

PYTHONPATH="src:examples/seq2seq" python examples/seq2seq/run_eval.py facebook/wmt19-$PAIR $DATA_DIR/val.source $SAVE_DIR/test_translations.txt --reference_path $DATA_DIR/val.target --score_path $SAVE_DIR/test_bleu.json --bs $BS --task translation --num_beams $NUM_BEAMS

```

Note: fairseq reports using a beam size of 50, so you should get a slightly higher score if you re-run with `--num_beams 50`.

## Data Sources

- [training, etc.](http://www.statmt.org/wmt19/)

- [test set](http://matrix.statmt.org/test_sets/newstest2019.tgz?1556572561)

### BibTeX entry and citation info

```bibtex

@inproceedings{...,

year={2020},

title={Facebook FAIR's WMT19 News Translation Task Submission},

author={Ng, Nathan and Yee, Kyra and Baevski, Alexei and Ott, Myle and Auli, Michael and Edunov, Sergey},

booktitle={Proc. of WMT},

}

```

## TODO

- port model ensemble (fairseq uses 4 model checkpoints)

|

facebook/wmt19-en-ru | 2020-12-11T21:39:58.000Z | [

"pytorch",

"fsmt",

"seq2seq",

"en",

"ru",

"dataset:wmt19",

"arxiv:1907.06616",

"transformers",

"translation",

"wmt19",

"facebook",

"license:apache-2.0",

"text2text-generation"

]

| translation | [

".gitattributes",

"README.md",

"config.json",

"merges.txt",

"pytorch_model.bin",

"tokenizer_config.json",

"vocab-src.json",

"vocab-tgt.json"

]

| facebook | 8,755 | transformers | ---

language:

- en

- ru

tags:

- translation

- wmt19

- facebook

license: apache-2.0

datasets:

- wmt19

metrics:

- bleu

thumbnail: https://huggingface.co/front/thumbnails/facebook.png

---

# FSMT

## Model description

This is a ported version of [fairseq wmt19 transformer](https://github.com/pytorch/fairseq/blob/master/examples/wmt19/README.md) for en-ru.

For more details, please see [Facebook FAIR's WMT19 News Translation Task Submission](https://arxiv.org/abs/1907.06616).

The abbreviation FSMT stands for FairSeqMachineTranslation.

All four models are available:

* [wmt19-en-ru](https://huggingface.co/facebook/wmt19-en-ru)

* [wmt19-ru-en](https://huggingface.co/facebook/wmt19-ru-en)

* [wmt19-en-de](https://huggingface.co/facebook/wmt19-en-de)

* [wmt19-de-en](https://huggingface.co/facebook/wmt19-de-en)

## Intended uses & limitations

#### How to use

```python

from transformers import FSMTForConditionalGeneration, FSMTTokenizer

mname = "facebook/wmt19-en-ru"

tokenizer = FSMTTokenizer.from_pretrained(mname)

model = FSMTForConditionalGeneration.from_pretrained(mname)

input = "Machine learning is great, isn't it?"

input_ids = tokenizer.encode(input, return_tensors="pt")

outputs = model.generate(input_ids)

decoded = tokenizer.decode(outputs[0], skip_special_tokens=True)

print(decoded) # Машинное обучение - это здорово, не так ли?

```

#### Limitations and bias

- The original model (and this port) doesn't seem to handle inputs with repeated sub-phrases well; [content gets truncated](https://discuss.huggingface.co/t/issues-with-translating-inputs-containing-repeated-phrases/981).

## Training data

Pretrained weights were left identical to the original model released by fairseq. For more details, please see the [paper](https://arxiv.org/abs/1907.06616).

## Eval results

pair | fairseq | transformers

-------|---------|----------

en-ru | [36.4](http://matrix.statmt.org/matrix/output/1914?run_id=6724) | 33.47

The score is slightly below the score reported by `fairseq`, since `transformers` currently doesn't support:

- model ensembles, so the best-performing checkpoint (`model4.pt`) was ported.

- re-ranking

The score was calculated using this code:

```bash

git clone https://github.com/huggingface/transformers

cd transformers

export PAIR=en-ru

export DATA_DIR=data/$PAIR

export SAVE_DIR=data/$PAIR

export BS=8

export NUM_BEAMS=15

mkdir -p $DATA_DIR

sacrebleu -t wmt19 -l $PAIR --echo src > $DATA_DIR/val.source

sacrebleu -t wmt19 -l $PAIR --echo ref > $DATA_DIR/val.target

echo $PAIR

PYTHONPATH="src:examples/seq2seq" python examples/seq2seq/run_eval.py facebook/wmt19-$PAIR $DATA_DIR/val.source $SAVE_DIR/test_translations.txt --reference_path $DATA_DIR/val.target --score_path $SAVE_DIR/test_bleu.json --bs $BS --task translation --num_beams $NUM_BEAMS

```

Note: fairseq reports using a beam size of 50, so you should get a slightly higher score if you re-run with `--num_beams 50`.

## Data Sources

- [training, etc.](http://www.statmt.org/wmt19/)

- [test set](http://matrix.statmt.org/test_sets/newstest2019.tgz?1556572561)

### BibTeX entry and citation info

```bibtex

@inproceedings{...,

year={2020},

title={Facebook FAIR's WMT19 News Translation Task Submission},

author={Ng, Nathan and Yee, Kyra and Baevski, Alexei and Ott, Myle and Auli, Michael and Edunov, Sergey},

booktitle={Proc. of WMT},

}

```

## TODO

- port model ensemble (fairseq uses 4 model checkpoints)

|

facebook/wmt19-ru-en | 2020-12-11T21:40:01.000Z | [

"pytorch",

"fsmt",

"seq2seq",

"ru",

"en",

"dataset:wmt19",

"arxiv:1907.06616",

"transformers",

"translation",

"wmt19",

"facebook",

"license:apache-2.0",

"text2text-generation"

]

| translation | [

".gitattributes",

"README.md",

"config.json",

"merges.txt",

"pytorch_model.bin",

"tokenizer_config.json",

"vocab-src.json",

"vocab-tgt.json"

]

| facebook | 783 | transformers | ---

language:

- ru

- en

tags:

- translation

- wmt19

- facebook

license: apache-2.0

datasets:

- wmt19

metrics:

- bleu

thumbnail: https://huggingface.co/front/thumbnails/facebook.png

---

# FSMT

## Model description

This is a ported version of [fairseq wmt19 transformer](https://github.com/pytorch/fairseq/blob/master/examples/wmt19/README.md) for ru-en.

For more details, please see [Facebook FAIR's WMT19 News Translation Task Submission](https://arxiv.org/abs/1907.06616).

The abbreviation FSMT stands for FairSeqMachineTranslation.

All four models are available:

* [wmt19-en-ru](https://huggingface.co/facebook/wmt19-en-ru)

* [wmt19-ru-en](https://huggingface.co/facebook/wmt19-ru-en)

* [wmt19-en-de](https://huggingface.co/facebook/wmt19-en-de)

* [wmt19-de-en](https://huggingface.co/facebook/wmt19-de-en)

## Intended uses & limitations

#### How to use

```python

from transformers import FSMTForConditionalGeneration, FSMTTokenizer

mname = "facebook/wmt19-ru-en"

tokenizer = FSMTTokenizer.from_pretrained(mname)

model = FSMTForConditionalGeneration.from_pretrained(mname)

input = "Машинное обучение - это здорово, не так ли?"

input_ids = tokenizer.encode(input, return_tensors="pt")

outputs = model.generate(input_ids)

decoded = tokenizer.decode(outputs[0], skip_special_tokens=True)

print(decoded) # Machine learning is great, isn't it?

```

#### Limitations and bias

- The original model (and this port) doesn't seem to handle inputs with repeated sub-phrases well; [content gets truncated](https://discuss.huggingface.co/t/issues-with-translating-inputs-containing-repeated-phrases/981).

## Training data

Pretrained weights were left identical to the original model released by fairseq. For more details, please see the [paper](https://arxiv.org/abs/1907.06616).

## Eval results

pair | fairseq | transformers

-------|---------|----------

ru-en | [41.3](http://matrix.statmt.org/matrix/output/1907?run_id=6937) | 39.20

The score is slightly below the score reported by `fairseq`, since `transformers` currently doesn't support:

- model ensembles, so the best-performing checkpoint (`model4.pt`) was ported.

- re-ranking

The score was calculated using this code:

```bash

git clone https://github.com/huggingface/transformers

cd transformers

export PAIR=ru-en

export DATA_DIR=data/$PAIR

export SAVE_DIR=data/$PAIR

export BS=8

export NUM_BEAMS=15

mkdir -p $DATA_DIR

sacrebleu -t wmt19 -l $PAIR --echo src > $DATA_DIR/val.source

sacrebleu -t wmt19 -l $PAIR --echo ref > $DATA_DIR/val.target

echo $PAIR

PYTHONPATH="src:examples/seq2seq" python examples/seq2seq/run_eval.py facebook/wmt19-$PAIR $DATA_DIR/val.source $SAVE_DIR/test_translations.txt --reference_path $DATA_DIR/val.target --score_path $SAVE_DIR/test_bleu.json --bs $BS --task translation --num_beams $NUM_BEAMS

```

Note: fairseq reports using a beam size of 50, so you should get a slightly higher score if you re-run with `--num_beams 50`.

## Data Sources

- [training, etc.](http://www.statmt.org/wmt19/)

- [test set](http://matrix.statmt.org/test_sets/newstest2019.tgz?1556572561)

### BibTeX entry and citation info

```bibtex

@inproceedings{...,

year={2020},

title={Facebook FAIR's WMT19 News Translation Task Submission},

author={Ng, Nathan and Yee, Kyra and Baevski, Alexei and Ott, Myle and Auli, Michael and Edunov, Sergey},

booktitle={Proc. of WMT},

}

```

## TODO

- port model ensemble (fairseq uses 4 model checkpoints)

|

faizank2/bhand | 2021-03-04T21:56:30.000Z | []

| [

".gitattributes"

]

| faizank2 | 0 | |||

faizank2/legal-ner | 2021-03-04T20:53:57.000Z | []

| [

".gitattributes"

]

| faizank2 | 0 | |||

faizraza/temp1 | 2021-06-12T11:10:53.000Z | []

| [

".gitattributes"

]

| faizraza | 0 | |||

faroit/open-unmix | 2021-02-20T21:19:23.000Z | []

| [

".gitattributes"

]

| faroit | 0 | |||

faten/test_model | 2021-03-30T12:36:44.000Z | []

| [

".gitattributes"

]

| faten | 0 | |||

fatvvs/model_name | 2021-06-07T12:13:18.000Z | []

| [

".gitattributes"

]

| fatvvs | 0 | |||

faust/broken_t5_squad2 | 2020-07-04T08:42:08.000Z | [

"pytorch",

"t5",

"seq2seq",

"transformers",

"text2text-generation"

]

| text2text-generation | [

".gitattributes",

"added_tokens.json",

"config.json",

"pytorch_model.bin",

"special_tokens_map.json",

"spiece.model",

"tokenizer_config.json"

]

| faust | 13 | transformers | |

fbu63711/dfgdfgdf | 2020-11-18T07:34:06.000Z | []

| [

".gitattributes"

]

| fbu63711 | 0 | |||

feconroses/autonlp-SaaS_Topic_Detection-99369 | 2021-04-11T15:07:14.000Z | [

"pytorch",

"distilbert",

"text-classification",

"en",

"transformers",

"autonlp"

]

| text-classification | [

".gitattributes",

"README.md",

"config.json",

"pytorch_model.bin",

"sample_input.pkl",

"special_tokens_map.json",

"tokenizer_config.json",

"vocab.txt"

]

| feconroses | 80 | transformers | ---

tags: autonlp

language: en

widget:

- text: "I love AutoNLP 🤗"

---

# Model Trained Using AutoNLP

- Problem type: Multi-class Classification

- Model ID: 99369

## Validation Metrics

- Loss: 2.408306121826172

- Accuracy: 0.2708333333333333

- Macro F1: 0.1101851851851852

- Micro F1: 0.2708333333333333

- Weighted F1: 0.22777777777777777

- Macro Precision: 0.10891812865497075

- Micro Precision: 0.2708333333333333

- Weighted Precision: 0.212719298245614

- Macro Recall: 0.12373737373737376

- Micro Recall: 0.2708333333333333

- Weighted Recall: 0.2708333333333333
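The micro-averaged scores above are all equal to the accuracy, which is expected for single-label multi-class classification: every misclassified sample counts as one false positive (for the predicted class) and one false negative (for the true class). The sketch below illustrates this with toy labels using only the standard library; the function name `f1_summary` and the label data are assumptions for this example, and it is not the code AutoNLP uses to produce its metrics.

```python
def f1_summary(y_true, y_pred):
    """Compute macro, micro, and weighted F1 plus accuracy for single-label data."""
    labels = sorted(set(y_true) | set(y_pred))
    tp = {label: 0 for label in labels}
    fp = {label: 0 for label in labels}
    fn = {label: 0 for label in labels}
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted class gains a false positive
            fn[truth] += 1  # true class gains a false negative

    def f1(tp_, fp_, fn_):
        denom = 2 * tp_ + fp_ + fn_
        return 2 * tp_ / denom if denom else 0.0

    per_class = {label: f1(tp[label], fp[label], fn[label]) for label in labels}
    support = {label: y_true.count(label) for label in labels}
    return {
        "macro_f1": sum(per_class.values()) / len(labels),
        "micro_f1": f1(sum(tp.values()), sum(fp.values()), sum(fn.values())),
        "weighted_f1": sum(per_class[l] * support[l] for l in labels) / len(y_true),
        "accuracy": sum(tp.values()) / len(y_true),
    }


# Toy labels (hypothetical): 3 of 5 predictions are correct.
scores = f1_summary(["a", "a", "a", "b", "c"], ["a", "a", "b", "b", "a"])
print(scores)
```

In this toy case the micro F1 and the accuracy are both 0.6, while the macro F1 is lower because the class `c` is never predicted correctly.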

## Usage

You can use cURL to access this model:

```bash

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoNLP"}' https://api-inference.huggingface.co/models/feconroses/autonlp-SaaS_Topic_Detection-99369

```

Or use the Python API:

```python

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("feconroses/autonlp-SaaS_Topic_Detection-99369", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("feconroses/autonlp-SaaS_Topic_Detection-99369", use_auth_token=True)

inputs = tokenizer("I love AutoNLP", return_tensors="pt")

outputs = model(**inputs)

``` |

felflare/bert-restore-punctuation | 2021-05-24T03:04:47.000Z | [

"pytorch",

"bert",

"token-classification",

"en",

"dataset:yelp_polarity",

"transformers",

"punctuation",

"license:mit"

]

| token-classification | [

".gitattributes",

"README.md",

"config.json",

"model_args.json",

"optimizer.pt",

"pytorch_model.bin",

"scheduler.pt",

"special_tokens_map.json",

"tokenizer_config.json",

"training_args.bin",

"vocab.txt"

]

| felflare | 447 | transformers | ---

language:

- en

tags:

- punctuation

license: mit

datasets:

- yelp_polarity

metrics:

- f1

---

# ✨ bert-restore-punctuation


This is a bert-base-uncased model fine-tuned for punctuation restoration on [Yelp Reviews](https://www.tensorflow.org/datasets/catalog/yelp_polarity_reviews).

The model predicts the punctuation and upper-casing of plain, lower-cased text. An example use case is ASR output, or any other case where text has lost its punctuation.

This model is intended for direct use as a punctuation restoration model for general English. Alternatively, you can use it as a starting point for further fine-tuning on domain-specific texts for punctuation restoration tasks.

The model restores the following punctuation marks: **[! ? . , - : ; ' ]**

The model also restores the upper-casing of words.
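To make the task concrete, the sketch below shows one way training pairs for such a model could be derived from punctuated text: each word is lower-cased and paired with a label that records its trailing punctuation mark and whether it was capitalized. The label scheme (a punctuation character or `O`, followed by `U` or `O` for casing) and the function name `to_training_pairs` are assumptions made for illustration and are not confirmed to match the labels this model was trained with.

```python
PUNCTUATION = "!?.,-:;'"  # the marks listed above


def to_training_pairs(text):
    """Turn punctuated text into (lower-cased word, label) pairs."""
    pairs = []
    for token in text.split():
        # Trailing punctuation mark, or "O" when there is none.
        punct = token[-1] if token[-1] in PUNCTUATION else "O"
        word = token[:-1] if punct != "O" else token
        if not word:
            continue  # the token was a bare punctuation mark
        # "U" marks a capitalized word, "O" anything else.
        case = "U" if word[0].isupper() else "O"
        pairs.append((word.lower(), punct + case))
    return pairs


print(to_training_pairs("In 2018, Cornell researchers built a detector."))
```

A token classifier trained on pairs like these sees only the lower-cased words as input and predicts the combined label, from which the punctuation and casing can be restored.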

-----------------------------------------------

## 🚋 Usage

**Below is a quick way to get up and running with the model.**

1. First, install the package.

```bash

pip install rpunct

```

2. Sample Python code.

```python

from rpunct import RestorePuncts

# The default language is 'english'

rpunct = RestorePuncts()

rpunct.punctuate("""in 2018 cornell researchers built a high-powered detector that in combination with an algorithm-driven process called ptychography set a world record

by tripling the resolution of a state-of-the-art electron microscope as successful as it was that approach had a weakness it only worked with ultrathin samples that were

a few atoms thick anything thicker would cause the electrons to scatter in ways that could not be disentangled now a team again led by david muller the samuel b eckert

professor of engineering has bested its own record by a factor of two with an electron microscope pixel array detector empad that incorporates even more sophisticated

3d reconstruction algorithms the resolution is so fine-tuned the only blurring that remains is the thermal jiggling of the atoms themselves""")

# Outputs the following:

# In 2018, Cornell researchers built a high-powered detector that, in combination with an algorithm-driven process called Ptychography, set a world record by tripling the

# resolution of a state-of-the-art electron microscope. As successful as it was, that approach had a weakness. It only worked with ultrathin samples that were a few atoms

# thick. Anything thicker would cause the electrons to scatter in ways that could not be disentangled. Now, a team again led by David Muller, the Samuel B.

# Eckert Professor of Engineering, has bested its own record by a factor of two with an Electron microscope pixel array detector empad that incorporates even more

# sophisticated 3d reconstruction algorithms. The resolution is so fine-tuned the only blurring that remains is the thermal jiggling of the atoms themselves.

```

**This model works on arbitrarily large English text and uses a GPU if available.**

-----------------------------------------------

## 📡 Training data

Here is the number of product reviews we used for finetuning the model:

| Language | Number of text samples|

| -------- | ----------------- |

| English | 560,000 |

We found the best convergence around _**3 epochs**_, which is the checkpoint presented here and available for download.

-----------------------------------------------

## 🎯 Accuracy

The fine-tuned model obtained the following accuracy on 45,990 held-out text samples:

| Accuracy | Overall F1 | Eval Support |

| -------- | ---------------------- | ------------------- |

| 91% | 90% | 45,990

Below is a breakdown of the performance of the model by each label:

| label | precision | recall | f1-score | support|

| --------- | -------------|-------- | ----------|--------|

| **!** | 0.45 | 0.17 | 0.24 | 424

| **!+Upper** | 0.43 | 0.34 | 0.38 | 98

| **'** | 0.60 | 0.27 | 0.37 | 11

| **,** | 0.59 | 0.51 | 0.55 | 1522

| **,+Upper** | 0.52 | 0.50 | 0.51 | 239

| **-** | 0.00 | 0.00 | 0.00 | 18

| **.** | 0.69 | 0.84 | 0.75 | 2488

| **.+Upper** | 0.65 | 0.52 | 0.57 | 274

| **:** | 0.52 | 0.31 | 0.39 | 39

| **:+Upper** | 0.36 | 0.62 | 0.45 | 16

| **;** | 0.00 | 0.00 | 0.00 | 17

| **?** | 0.54 | 0.48 | 0.51 | 46

| **?+Upper** | 0.40 | 0.50 | 0.44 | 4

| **none** | 0.96 | 0.96 | 0.96 |35352

| **Upper** | 0.84 | 0.82 | 0.83 | 5442
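
The label names in the table suggest how a prediction is turned back into text: a punctuation label appends that mark to the word, `Upper` capitalizes it, and a combined label such as `,+Upper` does both. The sketch below illustrates that reading. The helper `apply_labels` is hypothetical and the decoding rule is an assumption based only on the label names, not on the rpunct source.

```python
# Hypothetical helper: the decoding rule is an assumption inferred from the
# label names in the table above, not taken from the rpunct implementation.
def apply_labels(words, labels):
    """Attach punctuation and capitalization to lower-cased words."""
    out = []
    for word, label in zip(words, labels):
        punct, _, case = label.partition("+")
        if punct in ("none", "Upper"):
            # No punctuation mark; a bare "Upper" label only capitalizes.
            case = "Upper" if punct == "Upper" else ""
            punct = ""
        if case == "Upper":
            word = word[:1].upper() + word[1:]
        out.append(word + punct)
    return " ".join(out)


words = ["now", "a", "team", "led", "by", "david", "muller",
         "has", "bested", "its", "record"]
labels = [",+Upper", "none", "none", "none", "none", "Upper", "Upper",
          "none", "none", "none", "."]
print(apply_labels(words, labels))
# Now, a team led by David Muller has bested its record.
```

Under this reading, the rare labels with low support in the table (for example `-` and `;`) are the ones most likely to be missing from restored text.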

-----------------------------------------------

## ☕ Contact

Contact [Daulet Nurmanbetov]([email protected]) for questions, feedback and/or requests for similar models.

----------------------------------------------- |

felixhusen/poem | 2021-05-21T16:01:12.000Z | [

"pytorch",

"jax",

"gpt2",

"lm-head",

"causal-lm",

"transformers",

"text-generation"

]

| text-generation | [

".gitattributes",

"config.json",

"flax_model.msgpack",

"merges.txt",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer_config.json",

"vocab.json"

]

| felixhusen | 134 | transformers | |

felixhusen/scientific | 2021-05-21T16:02:25.000Z | [

"pytorch",

"jax",

"gpt2",

"lm-head",

"causal-lm",

"transformers",

"text-generation"

]

| text-generation | [

".gitattributes",

"config.json",

"flax_model.msgpack",

"merges.txt",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer_config.json",

"vocab.json"

]

| felixhusen | 41 | transformers | |

feng/BERT-wwm | 2021-04-13T07:37:25.000Z | []

| [

".gitattributes"

]

| feng | 0 | |||

fenrhjen/camembert_aux_amandes | 2020-12-20T18:22:33.000Z | [

"pytorch",

"camembert",

"masked-lm",

"transformers",

"fill-mask"

]

| fill-mask | [

".gitattributes",

"README.md",

"config.json",

"eval_results.txt",

"merges.txt",

"pytorch_model.bin",

"sentencepiece.bpe.model",

"special_tokens_map.json",

"tokenizer_config.json",

"training_args.bin",

"vocab.json"

]

| fenrhjen | 34 | transformers | |

fernandopessagno/dsadsa | 2021-05-27T09:44:29.000Z | []

| [

".gitattributes"

]

| fernandopessagno | 0 | |||

fernandopessagno/test | 2021-01-27T09:32:24.000Z | []

| [

".gitattributes"

]

| fernandopessagno | 0 | |||

festival/no | 2021-04-15T21:33:35.000Z | []

| [

".gitattributes",

"README.md"

]

| festival | 0 | |||

ffarzad/xlnet_sentiment | 2021-02-23T18:37:31.000Z | []

| [

".gitattributes",

"xlnet_model.bin",

"xlnet_model__.bin"

]

| ffarzad | 0 | |||

ffrmns/t5-small_XSum-finetuned | 2021-04-20T00:59:58.000Z | [

"pytorch",

"t5",

"seq2seq",

"transformers",

"text2text-generation"

]

| text2text-generation | [

".gitattributes",

"config.json",

"pytorch_model.bin",

"spiece.model",

"tokenizer_config.json"

]

| ffrmns | 6 | transformers | |

fgjmfyhj/dtjndgyjkdrytkfr | 2021-04-03T20:02:25.000Z | []

| [

".gitattributes",

"README.md",

"sfhndsjdtjdrj"

]

| fgjmfyhj | 0 | |||

fgjmfyhj/eruerujr6-fvgjfykj | 2021-03-15T23:56:53.000Z | []

| [

".gitattributes",

"README.mdqwdqef"

]

| fgjmfyhj | 0 | |||

fgjmfyhj/ggfjfcgjfdsdg | 2021-03-13T22:08:33.000Z | []

| [

".gitattributes",

"README.md"

]

| fgjmfyhj | 0 | |||

fgjmfyhj/seryseruyhed6tujr | 2021-04-08T20:39:02.000Z | []

| [

".gitattributes",

"srdgysrfthetjh"

]

| fgjmfyhj | 0 | |||

fgjmfyhj/tjhdjddjftj | 2021-04-19T20:01:52.000Z | []

| [

".gitattributes",

"asegash"

]

| fgjmfyhj | 0 | |||

fgjmfyhj/tjryjkrykrk | 2021-03-30T20:12:05.000Z | []

| [

".gitattributes"

]

| fgjmfyhj | 0 | |||

fgjmfyhj/watch-army-of-the-dead-full-movie-online-free-netflix | 2021-05-25T21:45:25.000Z | []

| [

".gitattributes",

"README.md"

]

| fgjmfyhj | 0 | |||

fgjmfyhj/zdgbsfhbsxfhs | 2021-03-26T20:09:10.000Z | []

| [

".gitattributes",

"zdbsfnsxfnsxnsxfdndf"

]

| fgjmfyhj | 0 | |||

fgjmfyhj/zdsgbsfhbsfnsx | 2021-03-28T00:17:38.000Z | []

| [

".gitattributes",

"zdsgbvsazdrhbsfhs"

]

| fgjmfyhj | 0 | |||

fhswf/bert_de_ner | 2021-05-19T16:49:54.000Z | [

"pytorch",

"tf",

"jax",

"bert",

"token-classification",

"de",

"dataset:germeval_14",

"transformers",

"license:cc-by-sa-4.0",

"German",

"NER"

]

| token-classification | [

".gitattributes",

"README.md",

"config.json",

"flax_model.msgpack",

"pytorch_model.bin",

"special_tokens_map.json",

"tf_model.h5",

"tokenizer_config.json",

"vocab.txt"

]

| fhswf | 1,696 | transformers | ---

language: de

license: cc-by-sa-4.0

datasets:

- germeval_14

tags:

- German

- de

- NER

---

# BERT-DE-NER

## What is it?

This is a German BERT model fine-tuned for named entity recognition.

## Base model & training

This model is based on [bert-base-german-dbmdz-cased](https://huggingface.co/bert-base-german-dbmdz-cased) and has been fine-tuned

for NER on the training data from [GermEval2014](https://sites.google.com/site/germeval2014ner).

## Model results

The results on the test data from GermEval2014 are (entities only):

| Precision | Recall | F1-Score |

|----------:|-------:|---------:|

| 0.817 | 0.842 | 0.829 |
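
As a quick sanity check, the F1 score is the harmonic mean of precision and recall, so the third column can be recomputed from the first two. The helper name `f1_score` below is just for illustration.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values from the table above.
print(round(f1_score(0.817, 0.842), 3))  # 0.829
```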

## How to use

```Python

>>> from transformers import pipeline

>>> classifier = pipeline('ner', model="fhswf/bert_de_ner")

>>> classifier('Von der Organisation „medico international“ hieß es, die EU entziehe sich seit vielen Jahren der Verantwortung für die Menschen an ihren Außengrenzen.')

[{'word': 'med', 'score': 0.9996621608734131, 'entity': 'B-ORG', 'index': 6},

{'word': '##ico', 'score': 0.9995362162590027, 'entity': 'I-ORG', 'index': 7},

{'word': 'international',

'score': 0.9996932744979858,

'entity': 'I-ORG',

'index': 8},

{'word': 'eu', 'score': 0.9997008442878723, 'entity': 'B-ORG', 'index': 14}]

```
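
The pipeline output above is per word piece: `med` and `##ico` are two pieces of one word, and the `B-`/`I-` prefixes mark the beginning and continuation of an entity. A minimal sketch of how such output could be merged into whole entities is shown below. The function `merge_entities` is a hypothetical helper written for this example (it is not part of `transformers`), and the input repeats the output above with scores omitted.

```python
def merge_entities(tokens):
    """Merge word pieces and B-/I- tags into (text, type) entity tuples."""
    entities = []
    for tok in tokens:
        prefix, _, etype = tok["entity"].partition("-")
        word = tok["word"]
        if word.startswith("##") and entities:
            # Continuation of the previous word: glue without a space.
            entities[-1]["text"] += word[2:]
        elif (prefix == "I" and entities
              and entities[-1]["type"] == etype
              and tok["index"] == entities[-1]["last_index"] + 1):
            # Next word of the same entity: join with a space.
            entities[-1]["text"] += " " + word
        else:
            entities.append({"text": word, "type": etype})
        entities[-1]["last_index"] = tok["index"]
    return [(e["text"], e["type"]) for e in entities]


tokens = [
    {"word": "med", "entity": "B-ORG", "index": 6},
    {"word": "##ico", "entity": "I-ORG", "index": 7},
    {"word": "international", "entity": "I-ORG", "index": 8},
    {"word": "eu", "entity": "B-ORG", "index": 14},
]
print(merge_entities(tokens))
# [('medico international', 'ORG'), ('eu', 'ORG')]
```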

|

fhzh123/k_nc_bert | 2021-04-13T14:40:32.000Z | []

| [

".gitattributes"

]

| fhzh123 | 0 | |||

figge/intpolitics | 2021-03-23T14:40:43.000Z | []

| [

".gitattributes"

]

| figge | 0 | |||

finiteautomata/bert-contextualized-hate-category-es | 2021-05-19T16:50:33.000Z | [

"pytorch",

"bert",

"transformers"

]

| [

".gitattributes",

"config.json",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer_config.json",

"training_args.bin",

"vocab.txt"

]

| finiteautomata | 13 | transformers | ||

finiteautomata/bert-contextualized-hate-speech-es | 2021-05-19T16:51:14.000Z | [

"pytorch",

"jax",

"bert",

"text-classification",

"transformers"

]

| text-classification | [

".gitattributes",

"added_tokens.json",

"config.json",

"flax_model.msgpack",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer_config.json",

"training_args.bin",

"vocab.txt"

]

| finiteautomata | 34 | transformers | |

finiteautomata/bert-non-contextualized-hate-category-es | 2021-05-19T16:51:52.000Z | [

"pytorch",

"bert",

"transformers"

]

| [

".gitattributes",

"config.json",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer_config.json",

"training_args.bin",

"vocab.txt"

]

| finiteautomata | 14 | transformers | ||

finiteautomata/bert-non-contextualized-hate-speech-es | 2021-05-19T16:52:40.000Z | [

"pytorch",

"jax",

"bert",

"text-classification",

"transformers"

]

| text-classification | [

".gitattributes",

"added_tokens.json",

"config.json",

"flax_model.msgpack",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer_config.json",

"training_args.bin",

"vocab.txt"

]

| finiteautomata | 19 | transformers | |

finiteautomata/bert-title-body-hate-speech-es | 2021-04-21T02:02:28.000Z | []

| [

".gitattributes"

]

| finiteautomata | 0 | |||

finiteautomata/bertweet-base-emotion-analysis | 2021-05-27T02:47:52.000Z | [

"pytorch",

"roberta",

"text-classification",

"transformers"

]

| text-classification | [

".gitattributes",

"added_tokens.json",

"bpe.codes",

"config.json",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer_config.json",

"vocab.txt"

]

| finiteautomata | 248 | transformers | |

finiteautomata/bertweet-base-sentiment-analysis | 2021-05-26T11:55:15.000Z | [

"pytorch",

"roberta",

"text-classification",

"transformers"

]

| text-classification | [

".gitattributes",

"README.md",

"added_tokens.json",

"bpe.codes",

"config.json",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer_config.json",

"vocab.txt"

]

| finiteautomata | 407 | transformers | # Sentiment Analysis in English

## bertweet-sentiment-analysis

Repository: [https://github.com/finiteautomata/pysentimiento/](https://github.com/finiteautomata/pysentimiento/)

Model trained with the SemEval 2017 corpus (around 40k tweets). The base model is [BERTweet](https://github.com/VinAIResearch/BERTweet), a RoBERTa model trained on English tweets.

Uses `POS`, `NEG`, `NEU` labels.
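
A classifier with these three labels typically emits one logit per label, and the prediction is the label with the highest softmax probability. The sketch below shows that step in plain Python. The label order in `LABELS` and the example logits are assumptions for illustration; the actual order should be read from the `id2label` field of the model's `config.json`.

```python
import math

# Assumed label order, for illustration only; check id2label in config.json.
LABELS = ["NEG", "NEU", "POS"]


def classify(logits, labels=LABELS):
    """Return the most probable label and its softmax probability."""
    top = max(logits)
    exps = [math.exp(x - top) for x in logits]  # shift for numerical stability
    total = sum(exps)
    probs = [e / total for e in exps]
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best]


# Made-up logits for a clearly positive tweet.
label, prob = classify([-1.2, 0.3, 2.5])
print(label, round(prob, 3))
```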

**Coming soon**: a brief paper describing the model and training.

Enjoy! 🤗

|

finiteautomata/beto-emotion-analysis | 2021-05-27T02:48:42.000Z | [

"pytorch",

"bert",

"text-classification",

"transformers"

]

| text-classification | [

".gitattributes",

"README.md",

"added_tokens.json",

"config.json",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer.json",

"tokenizer_config.json",

"vocab.txt"

]

| finiteautomata | 623 | transformers | # Emotion Analysis in Spanish

## beto-emotion-analysis

Repository: [https://github.com/finiteautomata/pysentimiento/](https://github.com/finiteautomata/pysentimiento/)

Model trained with the TASS 2020 Task 2 corpus for emotion detection in Spanish. The base model is [BETO](https://github.com/dccuchile/beto), a BERT model trained on Spanish text.

**Coming soon**: a brief paper describing the model and training.

Enjoy! 🤗

|

finiteautomata/beto-sentiment-analysis | 2021-05-24T18:31:19.000Z | [

"pytorch",

"jax",

"bert",

"text-classification",

"transformers"

]

| text-classification | [

".gitattributes",

"README.md",

"added_tokens.json",

"config.json",

"flax_model.msgpack",

"pytorch_model.bin",

"special_tokens_map.json",

"tokenizer.json",

"tokenizer_config.json",

"vocab.txt"

]

| finiteautomata | 647,994 | transformers | # Sentiment Analysis in Spanish

## beto-sentiment-analysis

Repository: [https://github.com/finiteautomata/pysentimiento/](https://github.com/finiteautomata/pysentimiento/)

Model trained with the TASS 2020 corpus (around 5k tweets) covering several dialects of Spanish. The base model is [BETO](https://github.com/dccuchile/beto), a BERT model trained on Spanish text.

Uses `POS`, `NEG`, `NEU` labels.

**Coming soon**: a brief paper describing the model and training.

Enjoy! 🤗

|

firstmediabuyer/sber | 2020-11-15T12:35:34.000Z | []

| [

".gitattributes"

]

| firstmediabuyer | 0 | |||

flair/chunk-english-fast | 2021-03-02T21:59:23.000Z | [

"pytorch",

"en",

"dataset:conll2000",

"flair",

"token-classification",

"sequence-tagger-model"

]

| token-classification | [

".gitattributes",

"README.md",

"loss.tsv",

"pytorch_model.bin"

]

| flair | 0 | flair | ---

tags:

- flair

- token-classification

- sequence-tagger-model

language: en

datasets:

- conll2000

widget:

- text: "The happy man has been eating at the diner"

---

## English Chunking in Flair (fast model)

This is the fast phrase chunking model for English that ships with [Flair](https://github.com/flairNLP/flair/).

F1-Score: **96.22** (CoNLL-2000)

Predicts 10 tags:

| **tag** | **meaning** |

|---------------------------------|-----------|

| ADJP | adjectival |

| ADVP | adverbial |

| CONJP | conjunction |

| INTJ | interjection |

| LST | list marker |

| NP | noun phrase |

| PP | prepositional |

| PRT | particle |

| SBAR | subordinate clause |

| VP | verb phrase |

Based on [Flair embeddings](https://www.aclweb.org/anthology/C18-1139/) and LSTM-CRF.

---

### Demo: How to use in Flair

Requires: **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python

from flair.data import Sentence

from flair.models import SequenceTagger

# load tagger

tagger = SequenceTagger.load("flair/chunk-english-fast")

# make example sentence

sentence = Sentence("The happy man has been eating at the diner")

# predict chunk tags

tagger.predict(sentence)

# print sentence

print(sentence)

# print predicted chunk spans

print('The following chunk tags are found:')

# iterate over chunks and print

for entity in sentence.get_spans('np'):
    print(entity)

```

This yields the following output:

```

Span [1,2,3]: "The happy man" [− Labels: NP (0.9958)]

Span [4,5,6]: "has been eating" [− Labels: VP (0.8759)]

Span [7]: "at" [− Labels: PP (1.0)]

Span [8,9]: "the diner" [− Labels: NP (0.9991)]

```

So, the spans "*The happy man*" and "*the diner*" are labeled as **noun phrases** (NP) and "*has been eating*" is labeled as a **verb phrase** (VP) in the sentence "*The happy man has been eating at the diner*".

---

### Training: Script to train this model

The following Flair script was used to train this model:

```python

from flair.data import Corpus

from flair.datasets import CONLL_2000

from flair.embeddings import StackedEmbeddings, FlairEmbeddings

# 1. get the corpus

corpus: Corpus = CONLL_2000()

# 2. what tag do we want to predict?

tag_type = 'np'

# 3. make the tag dictionary from the corpus

tag_dictionary = corpus.make_tag_dictionary(tag_type=tag_type)

# 4. initialize each embedding we use

embedding_types = [

# contextual string embeddings, forward

FlairEmbeddings('news-forward-fast'),

# contextual string embeddings, backward

FlairEmbeddings('news-backward-fast'),

]

# embedding stack consists of forward and backward Flair embeddings

embeddings = StackedEmbeddings(embeddings=embedding_types)

# 5. initialize sequence tagger

from flair.models import SequenceTagger

tagger = SequenceTagger(hidden_size=256,

embeddings=embeddings,

tag_dictionary=tag_dictionary,

tag_type=tag_type)

# 6. initialize trainer

from flair.trainers import ModelTrainer

trainer = ModelTrainer(tagger, corpus)

# 7. run training

trainer.train('resources/taggers/chunk-english-fast',

train_with_dev=True,

max_epochs=150)

```

---

### Cite

Please cite the following paper when using this model.

```

@inproceedings{akbik2018coling,

title={Contextual String Embeddings for Sequence Labeling},

author={Akbik, Alan and Blythe, Duncan and Vollgraf, Roland},

booktitle = {{COLING} 2018, 27th International Conference on Computational Linguistics},

pages = {1638--1649},

year = {2018}

}

```

---

### Issues?

The Flair issue tracker is available [here](https://github.com/flairNLP/flair/issues/).

|

flair/chunk-english | 2021-03-02T22:00:37.000Z | [

"pytorch",

"en",

"dataset:conll2000",

"flair",

"token-classification",

"sequence-tagger-model"

]

| token-classification | [

".gitattributes",

"README.md",

"loss.tsv",

"pytorch_model.bin",

"training.log"

]

| flair | 0 | flair | ---

tags:

- flair

- token-classification

- sequence-tagger-model

language: en

datasets:

- conll2000

widget:

- text: "The happy man has been eating at the diner"

---

## English Chunking in Flair (default model)

This is the standard phrase chunking model for English that ships with [Flair](https://github.com/flairNLP/flair/).

F1-Score: **96.48** (CoNLL-2000)

Predicts 10 tags:

| **tag** | **meaning** |

|---------------------------------|-----------|

| ADJP | adjectival |

| ADVP | adverbial |

| CONJP | conjunction |

| INTJ | interjection |

| LST | list marker |

| NP | noun phrase |

| PP | prepositional |

| PRT | particle |

| SBAR | subordinate clause |

| VP | verb phrase |

Based on [Flair embeddings](https://www.aclweb.org/anthology/C18-1139/) and LSTM-CRF.

---

### Demo: How to use in Flair

Requires: **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python

from flair.data import Sentence

from flair.models import SequenceTagger

# load tagger

tagger = SequenceTagger.load("flair/chunk-english")

# make example sentence

sentence = Sentence("The happy man has been eating at the diner")

# predict chunk tags

tagger.predict(sentence)

# print sentence

print(sentence)

# print predicted chunk spans

print('The following chunk tags are found:')

# iterate over chunks and print

for entity in sentence.get_spans('np'):
    print(entity)

```

This yields the following output:

```

Span [1,2,3]: "The happy man" [− Labels: NP (0.9958)]

Span [4,5,6]: "has been eating" [− Labels: VP (0.8759)]

Span [7]: "at" [− Labels: PP (1.0)]

Span [8,9]: "the diner" [− Labels: NP (0.9991)]

```

So, the spans "*The happy man*" and "*the diner*" are labeled as **noun phrases** (NP) and "*has been eating*" is labeled as a **verb phrase** (VP) in the sentence "*The happy man has been eating at the diner*".

---

### Training: Script to train this model

The following Flair script was used to train this model:

```python

from flair.data import Corpus

from flair.datasets import CONLL_2000

from flair.embeddings import StackedEmbeddings, FlairEmbeddings

# 1. get the corpus

corpus: Corpus = CONLL_2000()

# 2. what tag do we want to predict?

tag_type = 'np'

# 3. make the tag dictionary from the corpus

tag_dictionary = corpus.make_tag_dictionary(tag_type=tag_type)

# 4. initialize each embedding we use

embedding_types = [

# contextual string embeddings, forward

FlairEmbeddings('news-forward'),

# contextual string embeddings, backward

FlairEmbeddings('news-backward'),

]

# embedding stack consists of forward and backward Flair embeddings

embeddings = StackedEmbeddings(embeddings=embedding_types)

# 5. initialize sequence tagger

from flair.models import SequenceTagger

tagger = SequenceTagger(hidden_size=256,

embeddings=embeddings,

tag_dictionary=tag_dictionary,

tag_type=tag_type)

# 6. initialize trainer

from flair.trainers import ModelTrainer

trainer = ModelTrainer(tagger, corpus)

# 7. run training

trainer.train('resources/taggers/chunk-english',

train_with_dev=True,

max_epochs=150)

```

---

### Cite

Please cite the following paper when using this model.

```

@inproceedings{akbik2018coling,

title={Contextual String Embeddings for Sequence Labeling},

author={Akbik, Alan and Blythe, Duncan and Vollgraf, Roland},

booktitle = {{COLING} 2018, 27th International Conference on Computational Linguistics},

pages = {1638--1649},

year = {2018}

}

```

---

### Issues?

The Flair issue tracker is available [here](https://github.com/flairNLP/flair/issues/).

|

flair/frame-english-fast | 2021-03-02T22:01:45.000Z | [

"pytorch",

"en",

"dataset:ontonotes",

"flair",

"token-classification",

"sequence-tagger-model"

]

| token-classification | [

".gitattributes",

"README.md",

"loss.tsv",

"pytorch_model.bin",

"training.log"

]

| flair | 0 | flair | ---

tags:

- flair

- token-classification

- sequence-tagger-model

language: en

datasets:

- ontonotes

widget:

- text: "George returned to Berlin to return his hat."

---

## English Verb Disambiguation in Flair (fast model)

This is the fast verb disambiguation model for English that ships with [Flair](https://github.com/flairNLP/flair/).

F1-Score: **88.27** (Ontonotes) - predicts [Proposition Bank verb frames](http://verbs.colorado.edu/propbank/framesets-english-aliases/).

Based on [Flair embeddings](https://www.aclweb.org/anthology/C18-1139/) and LSTM-CRF.

---

### Demo: How to use in Flair

Requires: **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python

from flair.data import Sentence

from flair.models import SequenceTagger

# load tagger

tagger = SequenceTagger.load("flair/frame-english-fast")

# make example sentence

sentence = Sentence("George returned to Berlin to return his hat.")

# predict frame tags

tagger.predict(sentence)

# print sentence

print(sentence)

# print predicted frame spans

print('The following frame tags are found:')

# iterate over frames and print

for entity in sentence.get_spans('frame'):
    print(entity)

```

This yields the following output:

```

Span [2]: "returned" [− Labels: return.01 (0.9867)]

Span [6]: "return" [− Labels: return.02 (0.4741)]

```

So, the word "*returned*" is labeled as **return.01** (as in *go back somewhere*) while "*return*" is labeled as **return.02** (as in *give back something*) in the sentence "*George returned to Berlin to return his hat*".

---

### Training: Script to train this model

The following Flair script was used to train this model:

```python

from flair.data import Corpus

from flair.datasets import ColumnCorpus

from flair.embeddings import BytePairEmbeddings, StackedEmbeddings, FlairEmbeddings

# 1. load the corpus (Ontonotes does not ship with Flair, you need to download and reformat into a column format yourself)

corpus = ColumnCorpus(

"resources/tasks/srl", column_format={1: "text", 11: "frame"}

)

# 2. what tag do we want to predict?

tag_type = 'frame'

# 3. make the tag dictionary from the corpus

tag_dictionary = corpus.make_tag_dictionary(tag_type=tag_type)

# 4. initialize each embedding we use

embedding_types = [

BytePairEmbeddings("en"),

FlairEmbeddings("news-forward-fast"),

FlairEmbeddings("news-backward-fast"),

]

# embedding stack consists of byte-pair and Flair embeddings

embeddings = StackedEmbeddings(embeddings=embedding_types)

# 5. initialize sequence tagger

from flair.models import SequenceTagger

tagger = SequenceTagger(hidden_size=256,

embeddings=embeddings,

tag_dictionary=tag_dictionary,

tag_type=tag_type)

# 6. initialize trainer

from flair.trainers import ModelTrainer

trainer = ModelTrainer(tagger, corpus)

# 7. run training

trainer.train('resources/taggers/frame-english-fast',

train_with_dev=True,

max_epochs=150)

```
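
The `column_format={1: "text", 11: "frame"}` argument in step 1 tells the corpus reader which column of each row holds the token and which holds the frame label. The plain-Python sketch below mimics that mapping, assuming zero-based column indices and whitespace-separated columns. The helper `read_row` and the example row are made up for illustration; a real Ontonotes export will have different values in the other columns.

```python
# Same mapping as in the training script: column index -> annotation name.
COLUMN_FORMAT = {1: "text", 11: "frame"}


def read_row(line, column_format=COLUMN_FORMAT):
    """Split one whitespace-separated row and keep only the mapped columns."""
    fields = line.split()
    return {name: fields[index] for index, name in column_format.items()}


# Made-up row with 12 columns; only columns 1 and 11 are used.
row = "doc_0 returned c2 c3 c4 c5 c6 c7 c8 c9 c10 return.01"
print(read_row(row))
# {'text': 'returned', 'frame': 'return.01'}
```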

---

### Cite

Please cite the following paper when using this model.

```

@inproceedings{akbik2019flair,

title={FLAIR: An easy-to-use framework for state-of-the-art NLP},

author={Akbik, Alan and Bergmann, Tanja and Blythe, Duncan and Rasul, Kashif and Schweter, Stefan and Vollgraf, Roland},

booktitle={{NAACL} 2019, 2019 Conference of the North American Chapter of the Association for Computational Linguistics (Demonstrations)},

pages={54--59},

year={2019}

}

```

---

### Issues?

The Flair issue tracker is available [here](https://github.com/flairNLP/flair/issues/).

|

flair/frame-english | 2021-03-02T22:02:55.000Z | [

"pytorch",

"en",

"dataset:ontonotes",

"flair",

"token-classification",

"sequence-tagger-model"

]

| token-classification | [

".gitattributes",

"README.md",

"loss.tsv",

"pytorch_model.bin",

"training.log"

]

| flair | 0 | flair | ---

tags:

- flair

- token-classification

- sequence-tagger-model

language: en

datasets:

- ontonotes

widget:

- text: "George returned to Berlin to return his hat."

---

## English Verb Disambiguation in Flair (default model)

This is the standard verb disambiguation model for English that ships with [Flair](https://github.com/flairNLP/flair/).

F1-Score: **89.34** (Ontonotes) - predicts [Proposition Bank verb frames](http://verbs.colorado.edu/propbank/framesets-english-aliases/).

Based on [Flair embeddings](https://www.aclweb.org/anthology/C18-1139/) and LSTM-CRF.

---

### Demo: How to use in Flair

Requires: **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python

from flair.data import Sentence

from flair.models import SequenceTagger

# load tagger

tagger = SequenceTagger.load("flair/frame-english")

# make example sentence

sentence = Sentence("George returned to Berlin to return his hat.")

# predict frame tags

tagger.predict(sentence)

# print sentence

print(sentence)

# print predicted frame spans

print('The following frame tags are found:')

# iterate over frames and print

for entity in sentence.get_spans('frame'):
    print(entity)

```

This yields the following output:

```

Span [2]: "returned" [− Labels: return.01 (0.9951)]

Span [6]: "return" [− Labels: return.02 (0.6361)]

```

So, the word "*returned*" is labeled as **return.01** (as in *go back somewhere*) while "*return*" is labeled as **return.02** (as in *give back something*) in the sentence "*George returned to Berlin to return his hat*".

---

### Training: Script to train this model

The following Flair script was used to train this model:

```python

from flair.data import Corpus

from flair.datasets import ColumnCorpus

from flair.embeddings import BytePairEmbeddings, StackedEmbeddings, FlairEmbeddings

# 1. load the corpus (Ontonotes does not ship with Flair, you need to download and reformat into a column format yourself)

corpus = ColumnCorpus(

"resources/tasks/srl", column_format={1: "text", 11: "frame"}

)

# 2. what tag do we want to predict?

tag_type = 'frame'

# 3. make the tag dictionary from the corpus

tag_dictionary = corpus.make_tag_dictionary(tag_type=tag_type)

# 4. initialize each embedding we use

embedding_types = [

BytePairEmbeddings("en"),

FlairEmbeddings("news-forward"),

FlairEmbeddings("news-backward"),

]

# embedding stack consists of byte-pair and Flair embeddings

embeddings = StackedEmbeddings(embeddings=embedding_types)

# 5. initialize sequence tagger

from flair.models import SequenceTagger

tagger = SequenceTagger(hidden_size=256,

embeddings=embeddings,

tag_dictionary=tag_dictionary,

tag_type=tag_type)

# 6. initialize trainer

from flair.trainers import ModelTrainer

trainer = ModelTrainer(tagger, corpus)

# 7. run training

trainer.train('resources/taggers/frame-english',

train_with_dev=True,

max_epochs=150)

```

---

### Cite

Please cite the following paper when using this model.

```

@inproceedings{akbik2019flair,

title={FLAIR: An easy-to-use framework for state-of-the-art NLP},

author={Akbik, Alan and Bergmann, Tanja and Blythe, Duncan and Rasul, Kashif and Schweter, Stefan and Vollgraf, Roland},

booktitle={{NAACL} 2019, 2019 Conference of the North American Chapter of the Association for Computational Linguistics (Demonstrations)},

pages={54--59},

year={2019}

}

```

---

### Issues?

The Flair issue tracker is available [here](https://github.com/flairNLP/flair/issues/).

|

flair/ner-danish | 2021-02-26T15:33:02.000Z | [

"pytorch",

"da",

"dataset:DaNE",

"flair",

"token-classification",

"sequence-tagger-model"

]

| token-classification | [

".gitattributes",

"README.md",

"pytorch_model.bin"

]

| flair | 0 | flair | ---

tags:

- flair

- token-classification

- sequence-tagger-model

language: da

datasets:

- DaNE

widget:

- text: "Jens Peter Hansen kommer fra Danmark"

---

# Danish NER in Flair (default model)

This is the standard 4-class NER model for Danish that ships with [Flair](https://github.com/flairNLP/flair/).

F1-Score: **81.78** (DaNE)

Predicts 4 tags:

| **tag** | **meaning** |

|---------------------------------|-----------|

| PER | person name |

| LOC | location name |

| ORG | organization name |

| MISC | other name |

Based on Transformer embeddings and LSTM-CRF.

---

# Demo: How to use in Flair

Requires: **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python

from flair.data import Sentence

from flair.models import SequenceTagger

# load tagger

tagger = SequenceTagger.load("flair/ner-danish")

# make example sentence

sentence = Sentence("Jens Peter Hansen kommer fra Danmark")

# predict NER tags

tagger.predict(sentence)

# print sentence

print(sentence)

# print predicted NER spans

print('The following NER tags are found:')

# iterate over entities and print

for entity in sentence.get_spans('ner'):
    print(entity)

```

This yields the following output:

```

Span [1,2,3]: "Jens Peter Hansen" [− Labels: PER (0.9961)]

Span [6]: "Danmark" [− Labels: LOC (0.9816)]

```

So, the entities "*Jens Peter Hansen*" (labeled as a **person**) and "*Danmark*" (labeled as a **location**) are found in the sentence "*Jens Peter Hansen kommer fra Danmark*".

---

### Training: Script to train this model

The model was trained by the [DaNLP project](https://github.com/alexandrainst/danlp) using the [DaNE corpus](https://github.com/alexandrainst/danlp/blob/master/docs/docs/datasets.md#danish-dependency-treebank-dane-dane). Check their repo for more information.

The following Flair script may be used to train such a model:

```python

from flair.data import Corpus

from flair.datasets import DANE

from flair.embeddings import WordEmbeddings, StackedEmbeddings, FlairEmbeddings

# 1. get the corpus

corpus: Corpus = DANE()

# 2. what tag do we want to predict?

tag_type = 'ner'

# 3. make the tag dictionary from the corpus

tag_dictionary = corpus.make_tag_dictionary(tag_type=tag_type)

# 4. initialize each embedding we use

embedding_types = [

# Danish word embeddings

WordEmbeddings('da'),

# contextual string embeddings, forward

FlairEmbeddings('da-forward'),

# contextual string embeddings, backward

FlairEmbeddings('da-backward'),

]

# embedding stack consists of Flair and word embeddings

embeddings = StackedEmbeddings(embeddings=embedding_types)

# 5. initialize sequence tagger

from flair.models import SequenceTagger

tagger = SequenceTagger(hidden_size=256,

embeddings=embeddings,

tag_dictionary=tag_dictionary,

tag_type=tag_type)

# 6. initialize trainer

from flair.trainers import ModelTrainer

trainer = ModelTrainer(tagger, corpus)

# 7. run training

trainer.train('resources/taggers/ner-danish',

train_with_dev=True,

max_epochs=150)

```

---

### Cite

Please cite the following papers when using this model.

```

@inproceedings{akbik-etal-2019-flair,

title = "{FLAIR}: An Easy-to-Use Framework for State-of-the-Art {NLP}",

author = "Akbik, Alan and

Bergmann, Tanja and

Blythe, Duncan and

Rasul, Kashif and

Schweter, Stefan and

Vollgraf, Roland",

booktitle = "Proceedings of the 2019 Conference of the North {A}merican Chapter of the Association for Computational Linguistics (Demonstrations)",

year = "2019",

url = "https://www.aclweb.org/anthology/N19-4010",

pages = "54--59",

}

```

See the [DaNLP project](https://github.com/alexandrainst/danlp) for more information.

---

### Issues?

The Flair issue tracker is available [here](https://github.com/flairNLP/flair/issues/).

|

flair/ner-dutch-large | 2021-05-08T15:36:03.000Z | [

"pytorch",

"nl",

"dataset:conll2003",

"arxiv:2011.06993",

"flair",

"token-classification",

"sequence-tagger-model"

]

| token-classification | [

".gitattributes",

"README.md",

"loss.tsv",

"pytorch_model.bin",

"training.log"

]

| flair | 0 | flair | ---

tags:

- flair

- token-classification

- sequence-tagger-model

language: nl

datasets:

- conll2003

widget:

- text: "George Washington ging naar Washington"

---

## Dutch NER in Flair (large model)

This is the large 4-class NER model for Dutch that ships with [Flair](https://github.com/flairNLP/flair/).

F1-Score: **95.25** (CoNLL-03 Dutch)

Predicts 4 tags:

| **tag** | **meaning** |

|---------------------------------|-----------|

| PER | person name |

| LOC | location name |

| ORG | organization name |

| MISC | other name |

Based on document-level XLM-R embeddings and [FLERT](https://arxiv.org/pdf/2011.06993v1.pdf/).
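
To give an intuition for "document-level" features, the sketch below surrounds a target sentence with tokens from its neighbouring sentences before it would be passed to a transformer. This is only a simplified illustration: the function name, the example document, and the window size of 2 are assumptions, and Flair's own implementation differs in detail.

```python
from typing import List, Tuple


def add_document_context(
    sentences: List[List[str]], index: int, window: int
) -> Tuple[List[str], int, int]:
    """Return context-extended tokens and the [start, end) offsets of the target sentence."""
    preceding = [tok for sent in sentences[:index] for tok in sent]
    following = [tok for sent in sentences[index + 1:] for tok in sent]
    # Guard against window == 0, because preceding[-0:] would return everything.
    left = preceding[-window:] if window > 0 else []
    right = following[:window]
    target = sentences[index]
    start = len(left)
    return left + target + right, start, start + len(target)


# Hypothetical three-sentence document.
document = [
    ["Hij", "was", "president", "."],
    ["George", "Washington", "ging", "naar", "Washington"],
    ["Daar", "bleef", "hij", "."],
]

tokens, start, end = add_document_context(document, index=1, window=2)
print(tokens)
print(tokens[start:end])  # the original target sentence
```

Only the tokens between `start` and `end` would receive predictions; the context tokens merely inform their representations.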

---

### Demo: How to use in Flair

Requires: **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python

from flair.data import Sentence

from flair.models import SequenceTagger

# load tagger

tagger = SequenceTagger.load("flair/ner-dutch-large")

# make example sentence

sentence = Sentence("George Washington ging naar Washington")

# predict NER tags

tagger.predict(sentence)

# print sentence

print(sentence)

# print predicted NER spans

print('The following NER tags are found:')

# iterate over entities and print

for entity in sentence.get_spans('ner'):

    print(entity)

```

This yields the following output:

```

Span [1,2]: "George Washington" [− Labels: PER (1.0)]

Span [5]: "Washington" [− Labels: LOC (1.0)]

```

So, the entities "*George Washington*" (labeled as a **person**) and "*Washington*" (labeled as a **location**) are found in the sentence "*George Washington ging naar Washington*".
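
The span indices in the output above are 1-based token positions. As a minimal sketch of how per-token tags can be grouped into such spans, the following assumes a plain BIO tagging scheme and hand-written tags; the `bio_to_spans` helper is hypothetical and is not part of Flair.

```python
from typing import List, Optional, Tuple

Span = Tuple[List[int], str, str]  # (1-based token positions, text, label)


def bio_to_spans(tokens: List[str], tags: List[str]) -> List[Span]:
    """Group BIO-tagged tokens into entity spans."""
    spans: List[Span] = []
    current_ids: List[int] = []
    current_label: Optional[str] = None

    def flush() -> None:
        if current_ids:
            text = " ".join(tokens[i - 1] for i in current_ids)
            spans.append((list(current_ids), text, str(current_label)))

    for position, tag in enumerate(tags, start=1):
        label = tag[2:] if tag != "O" else None
        # A span ends at "O", at "B-", or when the label changes.
        if tag == "O" or tag.startswith("B-") or label != current_label:
            flush()
            current_ids, current_label = [], None
        if label is not None:
            current_label = label
            current_ids.append(position)
    flush()
    return spans


tokens = ["George", "Washington", "ging", "naar", "Washington"]
tags = ["B-PER", "I-PER", "O", "O", "B-LOC"]  # assumed tags for illustration

for ids, text, label in bio_to_spans(tokens, tags):
    print(ids, text, label)
```

With these assumed tags, the first two tokens form one PER span and the fifth token forms a LOC span, matching the positions shown in the output above.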

---

### Training: Script to train this model

The following Flair script was used to train this model:

```python

import torch

# 1. get the corpus

from flair.datasets import CONLL_03_DUTCH

corpus = CONLL_03_DUTCH()

# 2. what tag do we want to predict?

tag_type = 'ner'

# 3. make the tag dictionary from the corpus

tag_dictionary = corpus.make_tag_dictionary(tag_type=tag_type)

# 4. initialize fine-tuneable transformer embeddings WITH document context

from flair.embeddings import TransformerWordEmbeddings

embeddings = TransformerWordEmbeddings(

model='xlm-roberta-large',

layers="-1",

subtoken_pooling="first",

fine_tune=True,

use_context=True,

)

# 5. initialize bare-bones sequence tagger (no CRF, no RNN, no reprojection)

from flair.models import SequenceTagger

tagger = SequenceTagger(

hidden_size=256,

embeddings=embeddings,

tag_dictionary=tag_dictionary,

tag_type='ner',

use_crf=False,

use_rnn=False,

reproject_embeddings=False,

)

# 6. initialize trainer with AdamW optimizer

from flair.trainers import ModelTrainer

trainer = ModelTrainer(tagger, corpus, optimizer=torch.optim.AdamW)

# 7. run training with XLM parameters (20 epochs, small LR)

from torch.optim.lr_scheduler import OneCycleLR

trainer.train('resources/taggers/ner-dutch-large',

learning_rate=5.0e-6,

mini_batch_size=4,

mini_batch_chunk_size=1,

max_epochs=20,

scheduler=OneCycleLR,

embeddings_storage_mode='none',

weight_decay=0.,

)

```
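
The script trains with `mini_batch_size=4` and `mini_batch_chunk_size=1`. The sketch below illustrates the general idea of such chunking: a mini-batch is processed in smaller chunks to limit memory use, and the gradients are accumulated before a single update. The helper names and the toy gradient function are assumptions for illustration; Flair's trainer is considerably more involved.

```python
from typing import Callable, List, Sequence


def split_into_chunks(mini_batch: Sequence[str], chunk_size: int) -> List[Sequence[str]]:
    """Split one mini-batch into consecutive chunks of at most chunk_size items."""
    return [mini_batch[i:i + chunk_size] for i in range(0, len(mini_batch), chunk_size)]


def accumulate_gradient(
    mini_batch: Sequence[str],
    chunk_size: int,
    compute_gradient: Callable[[Sequence[str]], float],
) -> float:
    """Sum per-chunk gradients; one optimizer step would follow the loop."""
    total = 0.0
    for chunk in split_into_chunks(mini_batch, chunk_size):
        total += compute_gradient(chunk)
    return total


# Hypothetical mini-batch of four sentences and a toy "gradient" (the chunk length).
mini_batch = ["s1", "s2", "s3", "s4"]
chunks = split_into_chunks(mini_batch, chunk_size=1)
print(len(chunks))  # number of forward/backward passes per update
print(accumulate_gradient(mini_batch, 1, lambda chunk: float(len(chunk))))
```

Smaller chunks trade speed for lower peak memory, which matters when fine-tuning a model as large as `xlm-roberta-large`.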

---

### Cite

Please cite the following paper when using this model.

```

@misc{schweter2020flert,

title={FLERT: Document-Level Features for Named Entity Recognition},

author={Stefan Schweter and Alan Akbik},

year={2020},

eprint={2011.06993},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

---

### Issues?

The Flair issue tracker is available [here](https://github.com/flairNLP/flair/issues/).

|

flair/ner-dutch | 2021-03-02T22:03:57.000Z | [

"pytorch",

"nl",

"dataset:conll2003",

"flair",

"token-classification",

"sequence-tagger-model"

]

| token-classification | [

".gitattributes",

"README.md",

"loss.tsv",

"pytorch_model.bin",

"test.tsv",

"training.log"

]

| flair | 0 | flair | ---

tags:

- flair

- token-classification

- sequence-tagger-model

language: nl

datasets:

- conll2003

widget:

- text: "George Washington ging naar Washington."

---